
How Python drives AI innovation: a guide for startup teams

AI & Automation

Apr 6, 2026

14 min read

Learn why Python dominates AI development in 2026, which libraries power production AI, and how to hire senior Python engineers who can ship at scale.

Python is not the fastest language. It is not the most memory-efficient, and it will not win a raw benchmark against C++ or Rust. Yet Python dominates AI development because of its extensive library ecosystem, a compounding talent pool, and network effects that make switching costs enormous. If you are a founder or product lead building an AI product in 2026, the real question is not whether Python is perfect. It is whether your team understands why Python keeps winning, and whether you have the senior engineers who can use it at production scale. This article breaks down the ecosystem, the tradeoffs, the lifecycle advantages, and what all of it means for your hiring decisions.

Key Takeaways

| Point | Details |
|---|---|
| Python ecosystem advantage | Python’s AI leadership comes from its vast libraries and community, not raw speed. |
| End-to-end AI support | Python enables rapid prototyping through to production deployment and MLOps for AI products. |
| Mitigating performance limits | Teams rely on hybrid approaches, using C++ or Rust where needed, but keep Python as the core. |
| Value of senior engineers | Hiring experienced Python engineers is essential for building robust, scalable AI systems. |

Why Python is foundational to modern AI

Python did not become the backbone of AI by accident. It started in research labs and university environments where scientists needed a language that was easy to read, fast to iterate with, and flexible enough to connect disparate tools. That culture of rapid experimentation spread quickly. When deep learning took off in the early 2010s, the frameworks that defined the field, including TensorFlow and PyTorch, were built with Python-first APIs. The community snowballed from there.

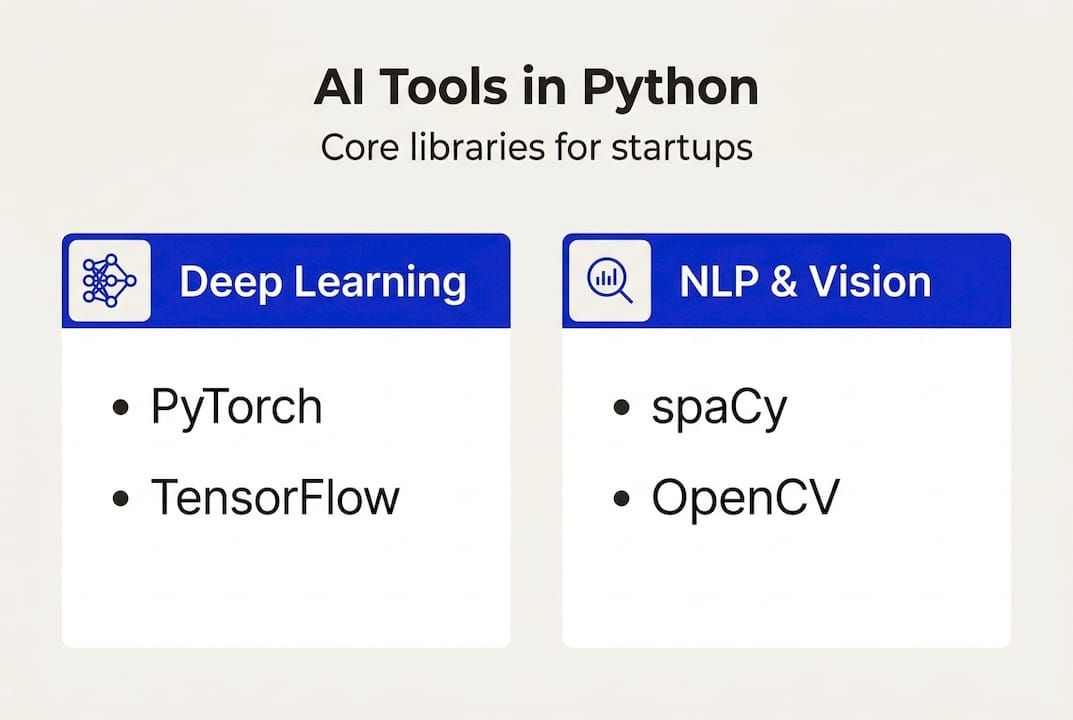

Today, Python’s AI ecosystem includes TensorFlow, PyTorch, Scikit-learn, Hugging Face, spaCy, and OpenCV, covering deep learning, classical machine learning, natural language processing, and computer vision. No other language comes close to this breadth. When you add LangChain, LlamaIndex, and the growing LLMOps tooling layer, you get a full-stack AI environment that a single Python engineer can navigate end to end.

One statistic captures just how deep this runs. LLMs generate Python code in 80 to 97 percent of AI-related coding tasks. That is not a coincidence. It reflects decades of Python-first documentation, tutorials, and open-source contributions that have trained both humans and AI models to default to Python when solving AI problems.

Why startups choose Python as their AI foundation:

- Fastest path from idea to working prototype

- Largest pool of engineers with production AI experience

- Seamless integration with cloud platforms (AWS, GCP, Azure)

- Rich tooling for data pipelines, model training, and deployment

- LLM-generated code is overwhelmingly Python, reducing AI-assisted dev friction

For a deeper look at how Python fits into modern product development, the Python development guide covers the full picture for startup teams. And if you want to compare frameworks before committing to a stack, this AI framework guide is a solid starting point.

| Domain | Primary Python tool | Maturity |
|---|---|---|
| Deep learning | PyTorch, TensorFlow | Production-ready |
| Classical ML | Scikit-learn | Production-ready |
| NLP and LLMs | Hugging Face, spaCy | Rapidly evolving |
| Computer vision | OpenCV, torchvision | Production-ready |
| LLM orchestration | LangChain, LlamaIndex | Emerging standard |

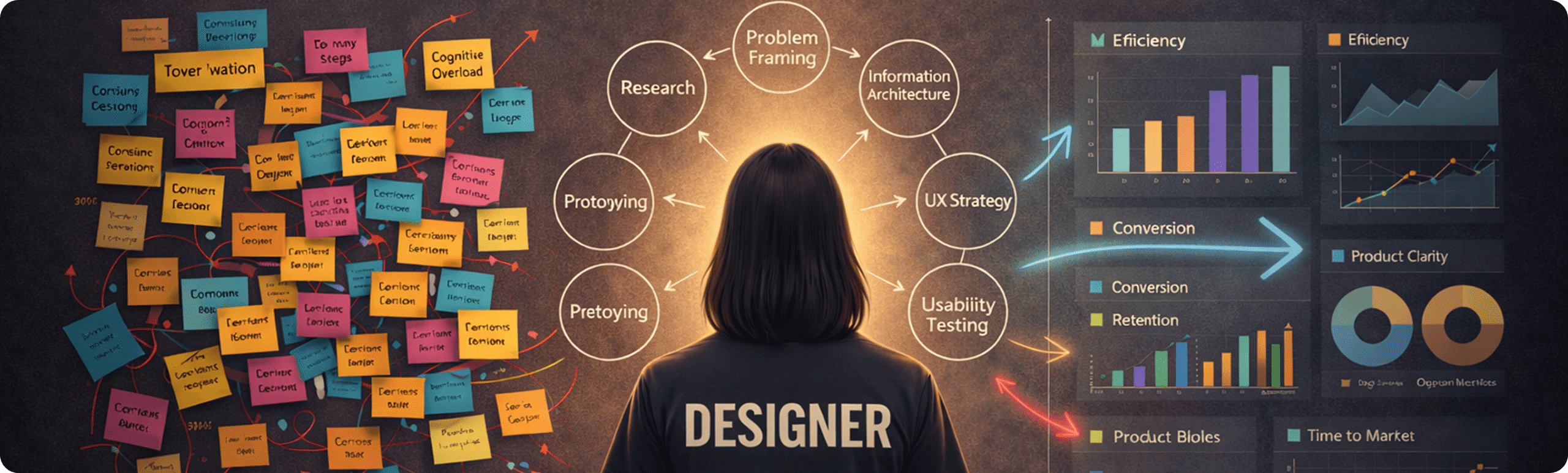

Core libraries and frameworks powering AI with Python

Knowing that Python dominates is one thing. Knowing which tools to reach for, and when, is what separates a productive AI team from one that rebuilds the same wheel three times before shipping.

TensorFlow suits production deep learning, PyTorch fits research and rapid prototyping, Scikit-learn handles classical machine learning, and Hugging Face Transformers is now the default for anything touching large language models. spaCy handles production-grade NLP pipelines, and OpenCV remains the go-to for computer vision tasks. Each has a distinct role, and a senior engineer knows not to mix them carelessly.

Choosing the right tool by context:

- Prototyping a new model architecture: PyTorch, because its dynamic computation graph lets you debug and iterate fast

- Deploying a model to serve millions of requests: TensorFlow with TensorFlow Serving, or export the model to ONNX and serve it on a managed platform such as Vertex AI for cloud-native scale

- Building a RAG pipeline or LLM-powered feature: LangChain or LlamaIndex on top of Hugging Face models

- Processing text in a structured NLP pipeline: spaCy, which is faster and more production-stable than NLTK for most tasks

- Real-time image or video processing: OpenCV, often paired with a PyTorch model for inference
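To make the RAG item above concrete, here is a minimal, stdlib-only sketch of the retrieval step: find the most relevant document for a question, then place it in the prompt. It is a toy under stated assumptions, not how LangChain or LlamaIndex work internally. The `DOCUMENTS` list, the function names, and the bag-of-words cosine similarity are all illustrative stand-ins; a real pipeline would typically use an embedding model and a vector store instead of word counts.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base, for illustration only.
DOCUMENTS = [
    "Refunds are processed within five business days.",
    "Our API rate limit is 100 requests per minute.",
    "Passwords must be rotated every 90 days.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine_similarity(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query, documents, top_k=1):
    """Return the top_k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    scored = [
        (cosine_similarity(query_vec, Counter(tokenize(doc))), doc)
        for doc in documents
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]

def build_prompt(query, documents, top_k=1):
    """Assemble the retrieved context and the question into one prompt string."""
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Calling `build_prompt("What is the API rate limit?", DOCUMENTS)` yields a prompt whose context is the rate-limit sentence. That string is what would be sent to the language model. The orchestration libraries named above exist largely to manage this retrieve-then-prompt loop at scale.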

| Library | Best for | Production-ready | Learning curve |
|---|---|---|---|
| PyTorch | Research, prototyping | Yes (with effort) | Medium |
| TensorFlow | Large-scale deployment | Yes | Medium-High |
| Scikit-learn | Classical ML, tabular data | Yes | Low |
| Hugging Face | LLMs, fine-tuning, RAG | Yes | Medium |
| spaCy | NLP pipelines | Yes | Low-Medium |
| OpenCV | Computer vision | Yes | Medium |

For teams building AI solutions into SaaS products, the library selection decision is not just technical. It affects hiring, onboarding time, and long-term maintainability. PyTorch has the largest research community, which means more tutorials and faster debugging when things break. TensorFlow has stronger enterprise tooling and deployment infrastructure. Knowing when to use which is a skill that comes with experience, not just documentation reading.

Pro Tip: Start with PyTorch for rapid prototyping, then evaluate whether you need to migrate to TensorFlow or export via ONNX when you approach production scale. Avoid rebuilding core ML logic from scratch when a well-maintained library already solves the problem.

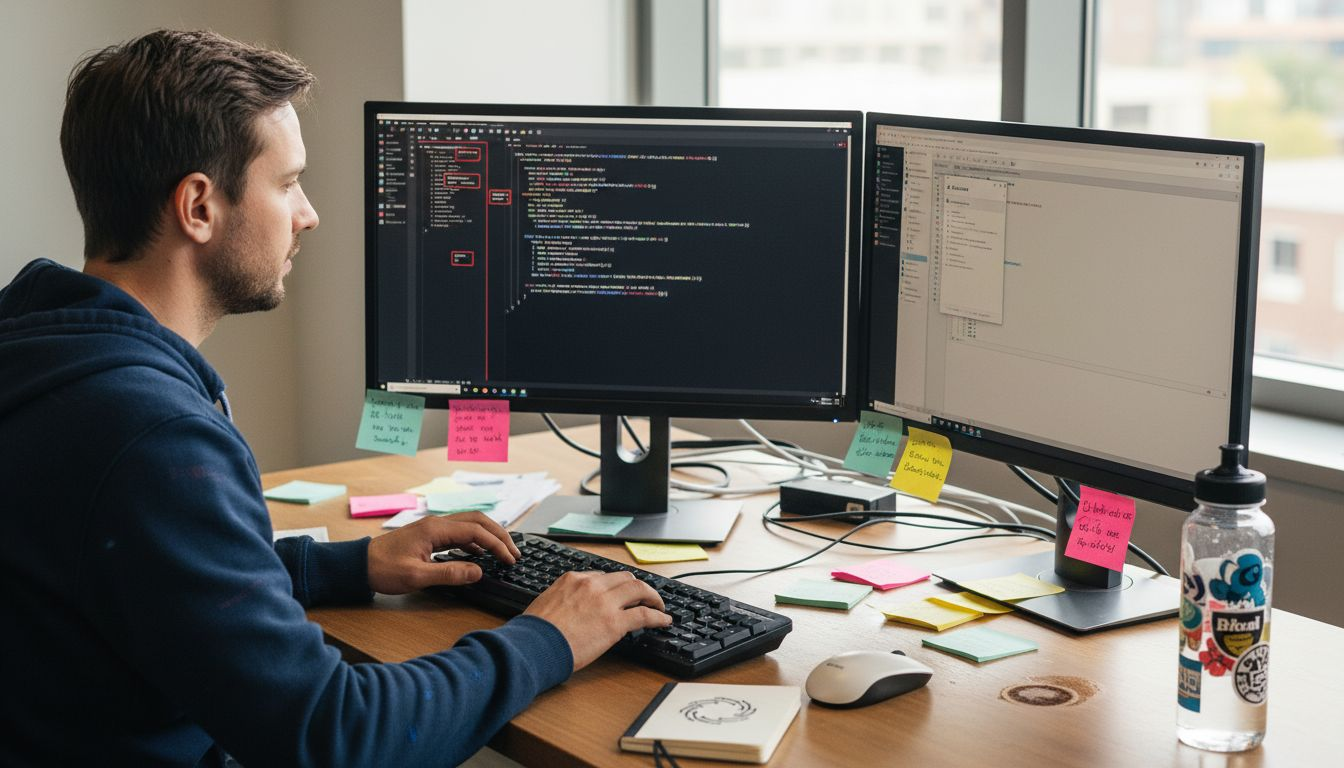

How Python supports the full AI development lifecycle

Python’s real strength is not any single library. It is the fact that you can use it at every stage of the AI development lifecycle without switching languages or rebuilding your toolchain.

Python supports rapid prototyping, model training, RAG pipelines, agentic workflows, and production deployment across multiple platforms. That continuity matters more than most founders realize. When your data scientist, ML engineer, and backend developer all work in the same language, integration friction drops significantly.

The AI development lifecycle in Python:

- Data ingestion and preprocessing: Pandas, Polars, and Apache Spark (via PySpark) handle everything from CSV files to streaming event data

- Prototyping and experimentation: Jupyter notebooks with PyTorch or TensorFlow let teams validate ideas in hours, not days

- Model training: Distributed training with PyTorch Lightning or Hugging Face Accelerate scales across GPUs without rewriting core logic

- Pipeline orchestration: Tools like Apache Airflow, Prefect, and Dagster manage complex data and model pipelines in pure Python

- Deployment: FastAPI or Flask wrap models as REST APIs; Docker and Kubernetes handle containerized deployment to any cloud

- Monitoring and MLOps: MLflow, Weights & Biases, and Evidently AI track experiments, model performance, and data drift in production
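As a rough illustration of the deployment step, the sketch below shows the kind of request handler a FastAPI or Flask route would wrap. It is written framework-agnostically with the standard library so it runs as-is. Everything specific here is an assumption for illustration: the `FEATURE_RANGES` schema, the `churn_score` field, and the `predict` function, which is a made-up formula standing in for a trained model.

```python
import json

# Hypothetical feature schema: name -> (min, max) allowed range.
FEATURE_RANGES = {"age": (0, 120), "monthly_spend": (0, 1_000_000)}

def validate_features(payload):
    """Return (features, errors); features is None when validation fails."""
    errors = []
    features = {}
    for name, (low, high) in FEATURE_RANGES.items():
        value = payload.get(name)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            errors.append(f"{name}: expected a number")
        elif not (low <= value <= high):  # also rejects NaN
            errors.append(f"{name}: must be between {low} and {high}")
        else:
            features[name] = float(value)
    return (features if not errors else None), errors

def predict(features):
    """Stand-in for a trained model; returns a made-up score in [0, 1]."""
    score = 0.5 + 0.002 * (40 - features["age"]) - 0.0001 * features["monthly_spend"]
    return max(0.0, min(1.0, score))

def handle_request(body):
    """JSON string in, (status_code, JSON string) out."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return 400, json.dumps({"errors": ["body is not valid JSON"]})
    if not isinstance(payload, dict):
        return 400, json.dumps({"errors": ["body must be a JSON object"]})
    features, errors = validate_features(payload)
    if errors:
        return 422, json.dumps({"errors": errors})
    return 200, json.dumps({"churn_score": predict(features)})
```

The design choice worth noting is that validation runs before the model is ever called, so malformed or out-of-range input produces a clear 4xx response instead of a silent bad prediction.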

For teams scaling AI with Python, the jump from prototype to production is where most projects stall. The model works in a notebook. It does not work under real traffic, with real data quality issues, and real latency requirements. Senior engineers anticipate this. They build monitoring in from the start, containerize early, and treat MLOps as a first-class concern rather than an afterthought.

Common pitfalls include ignoring data drift (when the real-world data distribution shifts away from training data), skipping input validation, and deploying models without rollback strategies. These are not junior mistakes. They are systemic gaps that show up when teams move fast without experienced oversight. The engineering checklist for scaling covers several of these failure points in detail.
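Data drift is easier to reason about with a number attached. One common way to quantify it is the Population Stability Index (PSI), which compares how a feature is distributed across bins in training data versus live data. The sketch below is a minimal stdlib version. The bin edges, the function names, and the `DRIFT_THRESHOLD` of 0.25 (a frequently cited rule of thumb, not a universal standard) are assumptions for illustration; tools such as Evidently AI provide more complete drift reports.

```python
import math

# Assumed alerting threshold; tune it for your own data.
DRIFT_THRESHOLD = 0.25

def bin_fractions(values, edges, epsilon=1e-6):
    """Fraction of values in each bin defined by the sorted edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        # The bin index is the number of edges the value has reached or passed.
        index = sum(1 for edge in edges if v >= edge)
        counts[index] += 1
    total = len(values)
    # epsilon keeps empty bins from causing log(0) or division by zero.
    return [max(c / total, epsilon) for c in counts]

def population_stability_index(expected, actual, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_fractions(expected, edges)
    a = bin_fractions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def has_drifted(expected, actual, edges, threshold=DRIFT_THRESHOLD):
    """True when the PSI between the two samples exceeds the threshold."""
    return population_stability_index(expected, actual, edges) > threshold
```

In use, `expected` would be a feature column from the training set and `actual` the same feature from recent production traffic, with the check run on a schedule so that a shift triggers an alert before model quality visibly degrades.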

Pro Tip: Invest in MLOps infrastructure and containerized deployment early, even when your team is small. The cost of retrofitting monitoring and deployment pipelines after launch is far higher than building them in from the start.

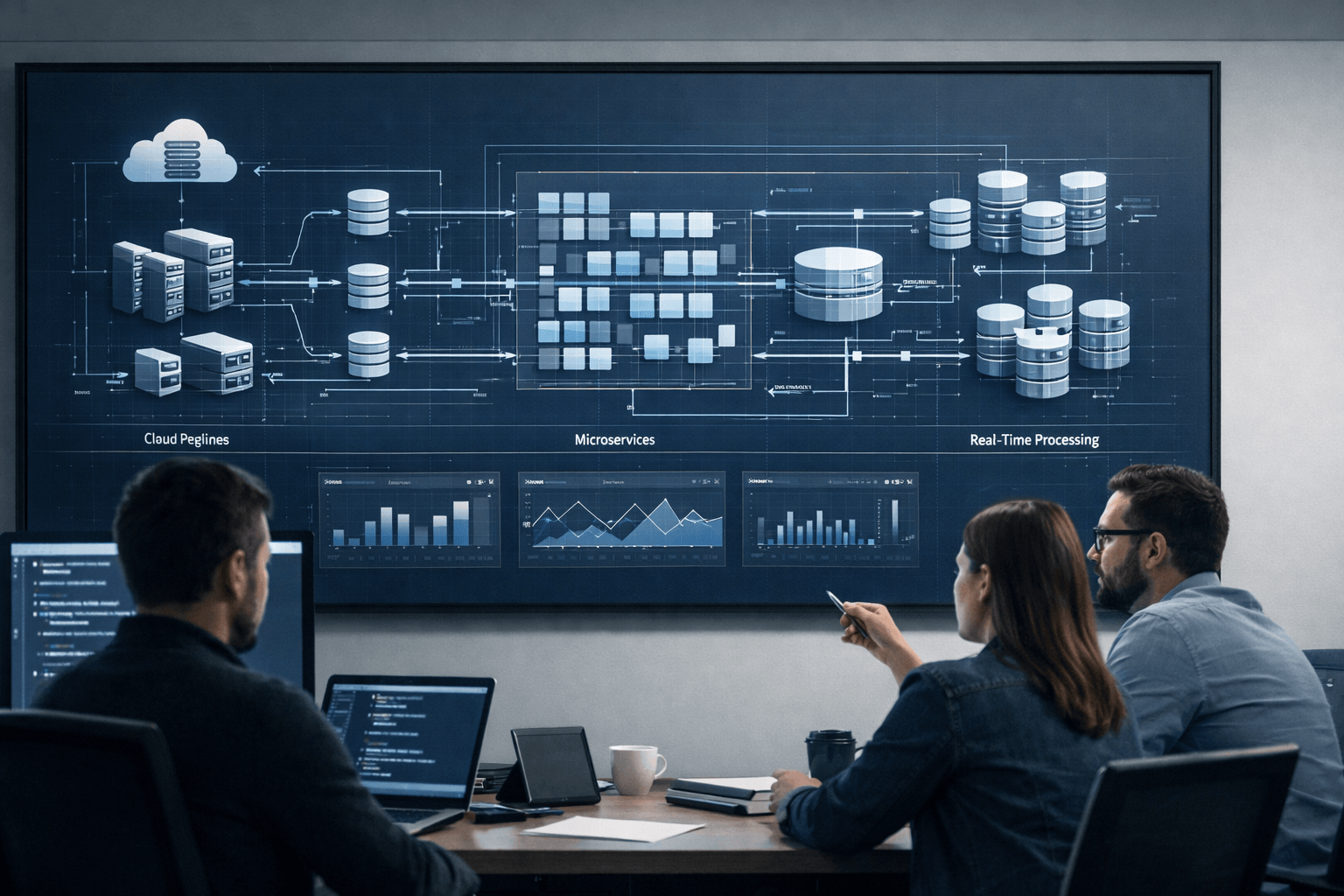

When performance matters: Python’s tradeoffs and rising challengers

Let’s be direct about Python’s weaknesses. Python excels in productivity but lags in speed and memory efficiency compared to C++, Mojo, and Rust. In standard CPython, the Global Interpreter Lock (GIL) prevents threads from executing Python bytecode in parallel, which limits CPU-bound multithreaded code. Memory overhead is real. For latency-critical inference at the edge, or for high-frequency trading systems that use ML, Python alone is not the answer.

But here is what most performance discussions miss. The actual model computation in PyTorch or TensorFlow does not run in Python. It runs in optimized C++ and CUDA kernels. Python is the orchestration layer, not the execution layer. That distinction matters enormously for how you architect your system.
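A small stdlib analogy shows the same split between orchestration and execution. In the sketch below, both functions compute the same sum, but the first runs every addition through the Python interpreter while the second hands the whole loop to `sum()`, which CPython implements in C. The function names are illustrative, and this is an analogy for how PyTorch and TensorFlow delegate work to compiled kernels, not a benchmark of those libraries.

```python
import time

def python_loop_sum(n):
    """Every iteration and addition is executed by the Python interpreter."""
    total = 0
    for i in range(n):
        total += i
    return total

def builtin_sum(n):
    """Python only issues one call; the loop itself runs inside C code."""
    return sum(range(n))

def timed(func, n):
    """Return (result, elapsed_seconds) for a single call of func(n)."""
    start = time.perf_counter()
    result = func(n)
    return result, time.perf_counter() - start
```

Running `timed(python_loop_sum, 5_000_000)` and `timed(builtin_sum, 5_000_000)` on CPython typically shows the built-in version finishing noticeably faster, although the exact ratio depends on the machine and interpreter version. The results are identical; only where the loop executes changes.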

“Python remains the lingua franca for AI. Senior engineers mitigate infrastructure challenges through hybrid architecture, not by abandoning the ecosystem.”

When to stick with Python:

- Your bottleneck is development speed, not runtime speed

- Your team needs to iterate on model architecture frequently

- You are integrating with cloud ML platforms (all of which have Python SDKs)

- You want to hire Python developers from the largest available talent pool

When to consider hybrid approaches:

- Inference latency must be under 10ms at scale

- You are deploying to resource-constrained edge devices

- A specific pipeline component is a proven CPU or memory bottleneck
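The word "proven" in the last item matters: measure before rewriting anything in another language. Below is a minimal sketch of per-stage timing using only the standard library. The `StageTimer` class, the stage names, and the `run_pipeline` function are hypothetical, and the `time.sleep` call is a stand-in for a slow model call. For deeper analysis, a profiler such as the stdlib `cProfile` module is the usual next step.

```python
import time
from contextlib import contextmanager

class StageTimer:
    """Accumulates wall-clock time per named pipeline stage."""

    def __init__(self):
        self.durations = {}

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed = time.perf_counter() - start
            self.durations[name] = self.durations.get(name, 0.0) + elapsed

    def slowest(self):
        """Name of the stage with the largest accumulated time."""
        return max(self.durations, key=self.durations.get)

def run_pipeline(timer, rows):
    """Hypothetical three-stage pipeline used only to demonstrate the timer."""
    with timer.stage("preprocess"):
        cleaned = [r.strip().lower() for r in rows]
    with timer.stage("inference"):
        time.sleep(0.05)  # stand-in for a slow model call
        scores = [len(r) / 10 for r in cleaned]
    with timer.stage("postprocess"):
        return [round(s, 2) for s in scores]
```

After a run, `timer.slowest()` names the stage to investigate first. Only if that stage is dominated by Python-level work, rather than I/O or an already compiled library call, is it a candidate for a C++ or Rust rewrite.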

Languages like Mojo and Rust are gaining traction in specific niches, but LLM code generation research shows that even AI tools are heavily biased toward Python, a sign of how thin the tooling and community support for alternatives remains. Rust is excellent for systems programming and can complement Python at bottleneck points. Mojo promises Python-compatible syntax with C-level speed, but it is not yet production-mature for most teams.

AI performance trends are moving toward hybrid architectures, not wholesale language replacement. Senior Python engineers understand this and architect accordingly. You can read more about the Python development advantages that make it the pragmatic choice for most startup AI stacks.

Expert strategies: Building an AI team with senior Python engineers

This is where the conversation gets practical. You understand the tools. You understand the tradeoffs. Now the question is: who builds this for you?

Startups that prioritize senior engineers for production-ready AI integration over junior developers with AI tools consistently ship faster and with fewer costly architectural mistakes. LLM-generated code is useful, but it does not replace the judgment that comes from shipping AI systems at scale. Someone still needs to review that code, integrate it into a secure and observable system, and make the architectural calls that determine whether your product survives its first real traffic spike.

What sets senior Python AI engineers apart:

- Systems thinking: they design for failure, not just for the happy path

- DevOps fluency: they own deployment, monitoring, and incident response

- Security awareness: they understand prompt injection, model poisoning, and data exposure risks

- MLOps experience: they build pipelines that are reproducible and auditable

- The ability to navigate the two-language problem: knowing when to drop to C++ or Rust for a specific bottleneck

Action steps for product leads hiring for AI projects:

- Define whether you need a generalist ML engineer or a specialist (LLMOps, computer vision, NLP)

- Assess systems architecture experience, not just model-building skills, in technical interviews

- Ask candidates to walk through how they would monitor a deployed model for drift and degradation

- Prioritize engineers who have shipped AI features to production, not just built demos or Kaggle notebooks

- Consider staff augmentation to hire Python engineers quickly without the 3-to-6-month recruiting cycle

A common mistake is assuming that strong junior engineers plus AI coding tools equals a senior engineer. It does not. The gaps show up in production, under load, when something breaks at 2am. For a detailed breakdown of what to look for, the guide on senior Python developer skills is worth reading before your next technical interview.

If you want to hire a Python AI developer or scale your team with Python development services, the Python vs. challengers benchmarks can also help you justify the stack decision to technical stakeholders. And if you are exploring how to hire Python engineers through augmentation, the model works well for AI teams that need to move fast.

Pro Tip: When interviewing senior Python AI engineers, insist on system architecture and infrastructure experience. A candidate who has only trained models in notebooks is not ready to own a production AI feature.

What most AI product teams miss about Python’s role

Here is the honest take, after working with dozens of AI product teams at various stages of growth. Most founders focus on the wrong thing when evaluating Python. They debate syntax, benchmark speed, or ask whether they should switch to Mojo. That is the wrong conversation.

Python’s real power is compounding. Every year, more libraries are built on top of it. More engineers learn it. More LLM training data includes it. The ecosystem grows, and the cost of leaving it grows with it. Python remains the lingua franca for AI not because it is perfect, but because the network effects are now so strong that no challenger offers a comparable return on investment for most teams.

The risk is not Python itself. The risk is underestimating what it takes to use Python well at production scale. We see founders hire junior engineers or rely entirely on LLM-generated code, then wonder why their AI feature is brittle, slow, or insecure six months later. The language was never the bottleneck. The experience level was.

Future-proofing your AI stack does not mean chasing faster languages. It means building on Python’s dominant ecosystem while investing in the senior talent that can architect hybrid solutions when performance genuinely demands it. Faster languages can mean slower teams if they come without Python’s richness, tooling, and talent depth. The teams that win are the ones who treat Python’s scaling benefits as a strategic asset, not an afterthought.

Enhance your AI product team with senior Python expertise

If this article clarified one thing, it is that the gap between a working prototype and a production-ready AI product is filled by experienced engineers, not better tools alone. The Python ecosystem gives your team an enormous head start. Senior engineers make sure you actually cross the finish line.

At Meduzzen, we help startup founders and product leads hire Python developers who have shipped real AI products, not just built demos. Our pre-vetted engineers bring hands-on experience with PyTorch, LangChain, LLMOps, RAG pipelines, and production deployment across cloud platforms. Whether you need to augment your existing team or build a dedicated AI engineering function, our Python development specialists integrate fast and deliver with transparency. Explore our AI services for startups or reach out to discuss your team’s specific needs. We are ready to help you build something that lasts.

Frequently asked questions

Why is Python preferred for AI over faster languages like C++ or Rust?

Python leads in AI because of its vast library ecosystem, ease of prototyping, and a massive talent pool, even though C++ and Rust often outperform it in raw speed. The productivity and ecosystem advantages outweigh the performance gaps for the vast majority of AI product teams.

Is Python fast enough for production AI workloads?

Python is typically fast enough for prototyping and deployment because the heavy computation runs in optimized C++ and CUDA kernels underneath the Python layer. High-performance bottlenecks can be addressed with C++ backends or hybrid architecture using Mojo or Rust for specific components.

What distinguishes a senior Python AI engineer from a junior?

Seniors bring systems thinking, DevOps skills, and production-ready AI experience that junior engineers and AI coding tools cannot replicate. The difference shows up in deployment reliability, security, and the ability to architect for scale from day one.

Do LLMs and AI tools favor Python code generation?

Yes. LLMs generate Python in 80 to 97 percent of AI-related coding tasks, reflecting decades of Python-first documentation and training data that make it the default output for AI-assisted development tools like Claude and OpenAI’s models.
