Software scalability solutions most startups build wrong

Apr 20, 2026

Discover practical software scalability solutions for startups and CTOs. Learn frameworks, patterns, and implementation strategies to scale your tech stack without costly rebuilds.

TL;DR:

- Startups often outgrow their software architecture before achieving product-market fit, leading to costly rebuilds.

- Effective scalability combines technical patterns, such as microservices, with the organizational readiness to handle growth.

- Focus on practical metrics and incremental improvements rather than over-engineering for future uncertain loads.

Most startups outgrow their software before they ever find product-market fit. That’s not a cautionary tale. It’s a pattern we’ve seen repeat itself across FinTech, EdTech, Logistics, and Healthcare teams alike. The architecture that carries you through your first thousand users often collapses under ten thousand. And by the time the cracks show, the cost of rebuilding feels almost punishing. This guide is written for founders and CTOs who want to get ahead of that moment. We’ll walk you through what software scalability really means, where most teams go wrong, and how to put real solutions in place before growth forces your hand.

Key Takeaways

| Point | Details |
|---|---|
| Plan for growth | Early and realistic scalability planning prevents expensive system rebuilds down the road. |
| Prioritize practical frameworks | Use proven design patterns that match your startup’s stage rather than overengineering. |
| Balance speed and maintainability | Aim for solutions that support rapid adaptation without accumulating technical debt. |
| Continuously optimize | Regular evaluation and refactoring keep your stack scalable as usage patterns evolve. |

Understanding software scalability: Why it matters for startups

Software scalability is simply your system’s ability to handle more: more users, more data, more transactions, without falling apart or requiring a complete redesign. Think of it like building a road. A single-lane path works fine for a village. But when the city grows around it, you either planned for expansion or you’re tearing everything up to start over.

For startups, scalability is about more than just performance. It shapes your cost structure, your team’s velocity, and your ability to respond when opportunity arrives fast. When your infrastructure can’t keep up with demand, you’re not just dealing with slow load times. You’re losing users, burning engineering hours on firefighting, and quietly eroding the trust you’ve worked hard to build.

Here are the most common scenarios where startups hit a scalability wall:

- A sudden spike in signups after a product launch overwhelms the database

- A feature built for 500 concurrent users breaks at 5,000

- An API that worked fine in staging becomes a bottleneck in production

- Data pipelines slow to a crawl as usage scales up month over month

- A monolithic codebase becomes too tangled to ship new features safely

These aren’t edge cases. They’re the norm for fast-growing teams that deferred infrastructure decisions in favor of shipping speed. And the irony is real: moving fast early can force you to slow down painfully later.

One area where scalability complexity becomes especially visible is machine learning. ML systems combine large datasets, distributed training loops, and inference pipelines that behave very differently under load. As researchers note, these challenges compound as models grow in size and deployment scope.

Beyond ML, the pitfalls apply broadly. The three most damaging mistakes we see are:

Underestimating future load. Teams design for where they are, not where they’re going. Six months of traction can render a carefully built system obsolete.

Overengineering too early. The opposite trap. Spending weeks on distributed infrastructure before you have meaningful traffic is a form of procrastination dressed up as planning.

Ignoring maintainability. A system that scales in theory but is impossible to debug, extend, or hand off to new engineers is a liability, not an asset. A practical software engineering checklist for scaling helps here, because it grounds decisions in where your product actually is.

“The goal isn’t to build for infinite scale. It’s to build so you can scale when it actually matters, without rewriting everything from scratch.”

Getting that balance right is the foundation of every decision that follows. Now that you know what’s at stake, let’s look at the main challenges you’ll encounter as you scale.

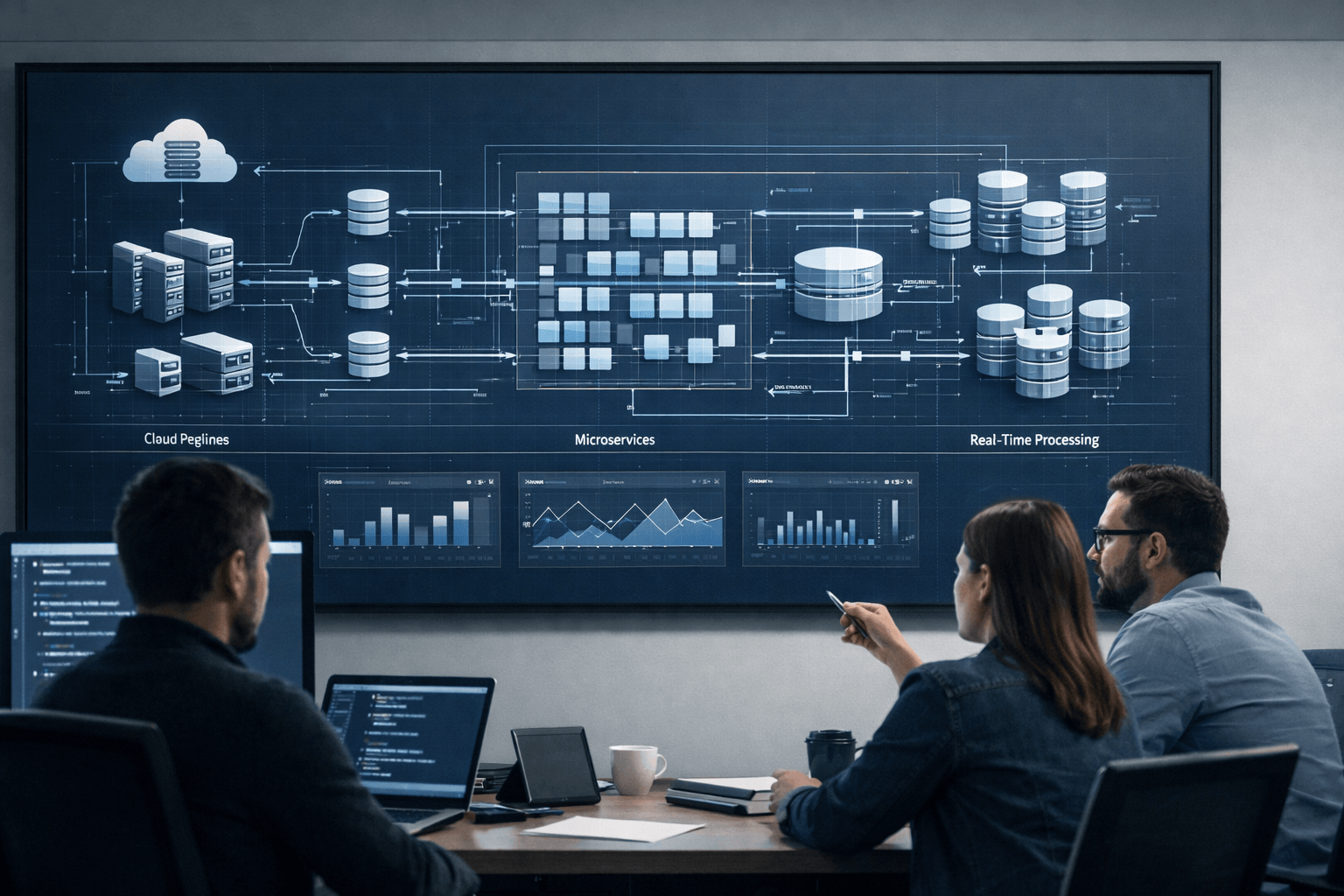

Common scalability challenges in contemporary tech stacks

Scalability covers not just handling high loads, but also maintainability and distributed system complexity, and that’s a useful frame for understanding what actually breaks under pressure. Most teams think of scalability as a purely technical problem. In practice, it’s also an organizational one.

Let’s walk through the core issues:

- Database growth. Relational databases are excellent until they’re not. As your data volume grows, query times degrade, indexes bloat, and what once took milliseconds starts taking seconds. Without a sharding or caching strategy, the database becomes the bottleneck for everything.

- Concurrency. More users hitting your system at the same time creates race conditions, locking issues, and resource contention. If your application wasn’t designed with concurrent access in mind, you’ll see failures that are difficult to reproduce and even harder to fix under pressure.

- Latency under load. Network hops, synchronous calls, and unoptimized queries compound when load increases. Users notice. And in competitive markets, they leave.

- Distributed system complexity. Once you move beyond a single server, you’re managing network partitions, eventual consistency, and failure modes that require deliberate design. This is where many teams underestimate the expertise required.

- Talent and maintainability. A system only one engineer understands is a risk. Scalable systems need to be maintainable by teams, not individuals. This is often the invisible bottleneck that slows scaling more than any technical constraint.
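The concurrency problem is the easiest of these to underestimate, because it rarely shows up in local testing. Below is a minimal Python sketch of the classic check-then-act race and the lock that prevents it. The `Inventory` class is hypothetical and assumes a single process with threads; across multiple servers the same guarantee usually has to come from the database, for example a row lock or an atomic conditional update such as `UPDATE ... SET stock = stock - 1 WHERE stock >= 1`.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class Inventory:
    """Hypothetical stock counter for one item; `reserve` is safe to call from many threads."""

    def __init__(self, stock: int) -> None:
        self._stock = stock
        self._lock = threading.Lock()

    def reserve(self, qty: int = 1) -> bool:
        # The check and the decrement must happen as one atomic step. Without the
        # lock, two threads can both see stock == 1 and both succeed (overselling).
        with self._lock:
            if self._stock >= qty:
                self._stock -= qty
                return True
            return False

    @property
    def stock(self) -> int:
        with self._lock:
            return self._stock


if __name__ == "__main__":
    inventory = Inventory(stock=50)
    # Simulate 200 concurrent purchase attempts against 50 units of stock.
    with ThreadPoolExecutor(max_workers=16) as pool:
        results = list(pool.map(lambda _: inventory.reserve(), range(200)))
    print(sum(results), inventory.stock)  # prints "50 0"
```

Exactly 50 reservations succeed no matter how the threads interleave. Remove the `with self._lock:` line and the result can occasionally exceed the stock, which is exactly the hard-to-reproduce failure described above.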

| Scaling approach | Strengths | Limitations |
|---|---|---|
| Vertical scaling | Simple to implement, no architecture changes | Hard ceiling on capacity, expensive at scale |
| Horizontal scaling | Near-unlimited growth potential, fault-tolerant | Requires distributed system design, more complexity |
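Horizontal scaling applies to data as well as to application servers. The sketch below shows hash-based sharding, one of the database strategies mentioned under database growth above: each key is routed to one of N database shards. The shard URLs and the `shard_for` and `database_for` helpers are hypothetical, and simple modulo routing is only one option.

```python
import hashlib


def shard_for(key: str, num_shards: int) -> int:
    """Map a key (e.g. a customer ID) to a shard index in [0, num_shards).

    Uses a stable hash (SHA-256) rather than Python's built-in hash(), which is
    randomized per process for strings and would route the same key differently
    on different servers.
    """
    if num_shards <= 0:
        raise ValueError("num_shards must be positive")
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


# Hypothetical connection strings; in practice these come from configuration.
SHARDS = [
    "postgres://db-0.internal/app",
    "postgres://db-1.internal/app",
    "postgres://db-2.internal/app",
]


def database_for(customer_id: str) -> str:
    # Every request for the same customer lands on the same shard.
    return SHARDS[shard_for(customer_id, len(SHARDS))]


if __name__ == "__main__":
    print(database_for("customer-42"))
```

One tradeoff worth knowing: with modulo routing, changing the number of shards remaps most keys, so adding capacity means a large data migration. Consistent hashing is the usual alternative when shard counts are expected to change.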

Recognizing scaling pain points early matters. Use your monitoring to watch for patterns: rising p95 latency, increasing error rates during peak hours, and database CPU spikes are the early signals most teams ignore until they become incidents. Your software scaling checklist should include these metrics as standard.
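Here is a minimal sketch of turning those signals into automated checks, assuming you already collect latency and status code for each request in a time window. `Request`, `check_window`, and the thresholds are hypothetical; most teams compute these in a metrics backend such as Prometheus rather than in application code, but the arithmetic is the same.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    latency_ms: float
    status: int  # HTTP status code


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def check_window(requests: list[Request], p95_budget_ms: float, max_error_rate: float) -> list[str]:
    """Return alert messages for one monitoring window (e.g. the last 5 minutes)."""
    alerts = []
    p95 = percentile([r.latency_ms for r in requests], 95)
    if p95 > p95_budget_ms:
        alerts.append(f"p95 latency {p95:.0f} ms exceeds budget {p95_budget_ms:.0f} ms")
    # Count server-side failures only; 4xx responses are usually client errors.
    errors = sum(1 for r in requests if r.status >= 500)
    error_rate = errors / len(requests)
    if error_rate > max_error_rate:
        alerts.append(f"5xx error rate {error_rate:.1%} exceeds {max_error_rate:.1%}")
    return alerts
```

Watching p95 rather than the average matters because averages hide the slow tail that your most active users hit first.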

Another under-discussed challenge is organizational readiness. Growing teams that don’t have clear ownership over system components introduce confusion that slows incident response and makes coordinated scaling changes risky. Scalable SaaS success depends as much on team structure as it does on architecture.

Pro Tip: Instrument your system for observability before you think you need it. Logs, traces, and metrics are not a luxury. They’re how you diagnose problems in distributed systems without spending days guessing. If you can’t see it, you can’t fix it.
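A standard-library-only sketch of what that instrumentation can look like: a hypothetical `timed` decorator that records each call’s duration and outcome and emits a structured log line. It stands in for what a tracing library such as OpenTelemetry would do; the in-memory `DURATIONS_MS` store and `load_dashboard` function are assumptions for illustration only.

```python
import functools
import logging
import time
from collections import defaultdict
from typing import Callable, TypeVar

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("app.metrics")

# Hypothetical in-process store of durations per operation; a real system would
# export these to a metrics backend instead of keeping them in memory.
DURATIONS_MS: dict[str, list[float]] = defaultdict(list)

F = TypeVar("F", bound=Callable)


def timed(name: str) -> Callable[[F], F]:
    """Record how long each call to the wrapped function takes and whether it failed."""

    def decorator(func: F) -> F:
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            outcome = "ok"
            try:
                return func(*args, **kwargs)
            except Exception:
                outcome = "error"
                raise
            finally:
                elapsed_ms = (time.perf_counter() - start) * 1000
                DURATIONS_MS[name].append(elapsed_ms)
                # Structured key=value line: easy to parse, filter, and aggregate.
                logger.info("op=%s outcome=%s duration_ms=%.2f", name, outcome, elapsed_ms)

        return wrapper  # type: ignore[return-value]

    return decorator


@timed("load_dashboard")
def load_dashboard(user_id: int) -> dict:
    time.sleep(0.01)  # stand-in for a database query
    return {"user_id": user_id}
```

Because the decorator re-raises exceptions after recording them, failures show up in your metrics without changing how the application behaves.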

Conversations about software scalability for CTOs almost always start with technology and end with people. Now that we’ve covered what commonly goes wrong, let’s look at proven solution frameworks.

Frameworks and patterns for scalable software design

Good software architecture is not about complexity. It’s about choosing the right level of structure for where your product is today, while keeping the door open for what comes next. Research on scalable distributed design finds that distributed training trades scalability against system maintainability, and the same tradeoff applies well beyond ML teams.

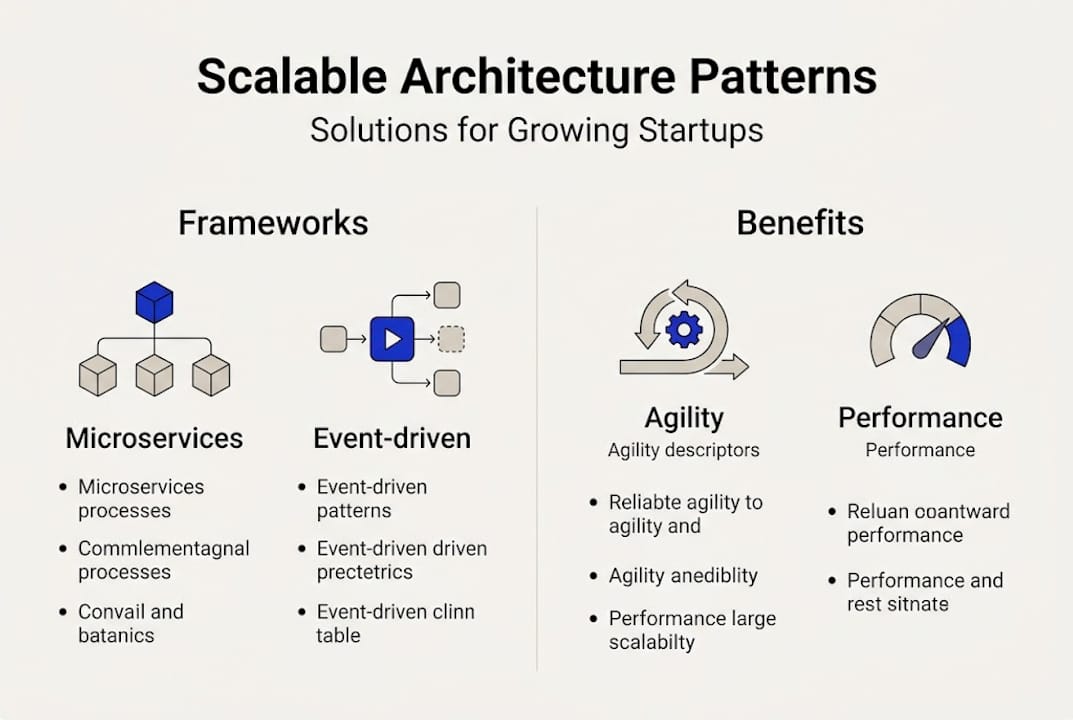

The three design patterns that matter most for scaling startups:

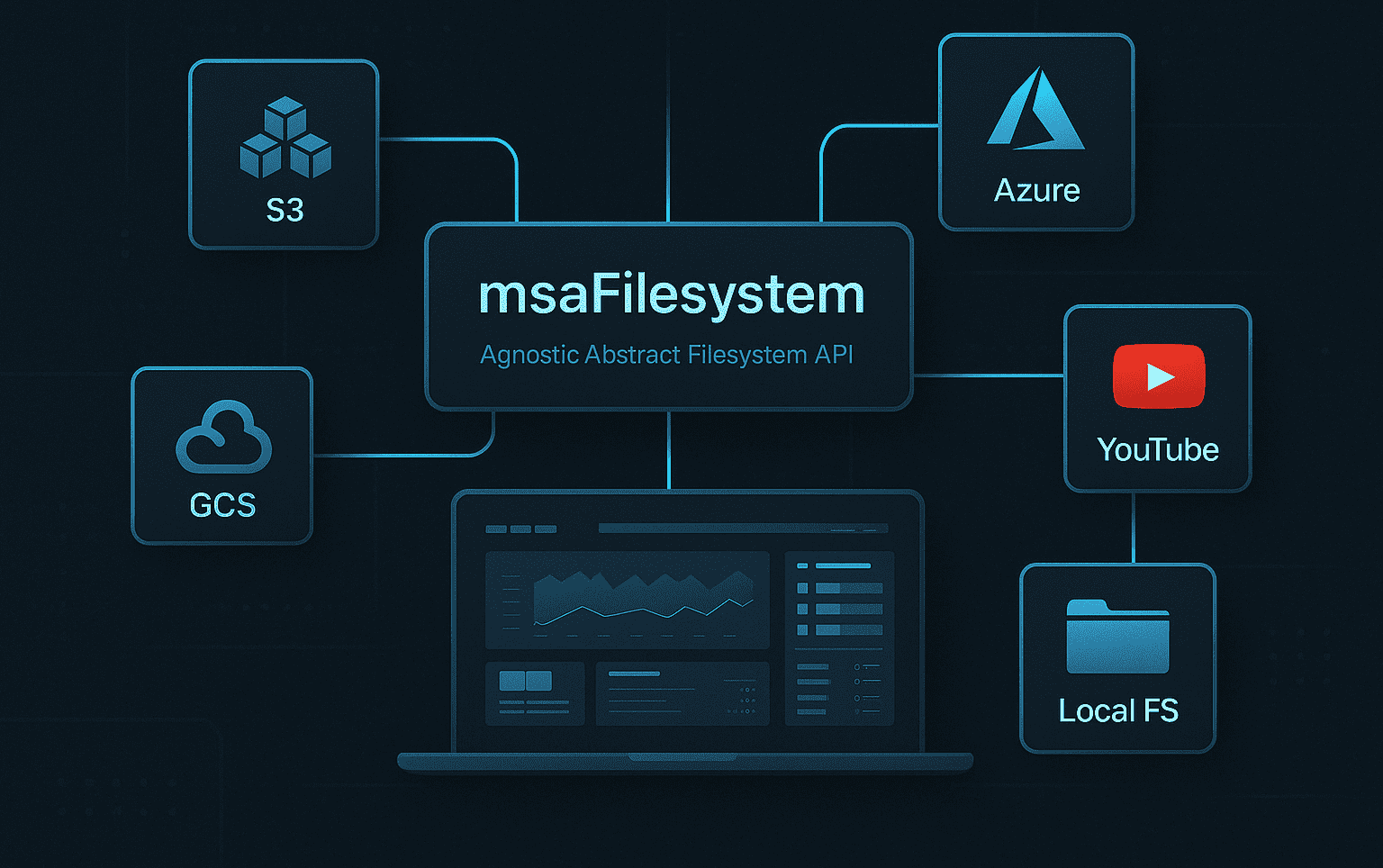

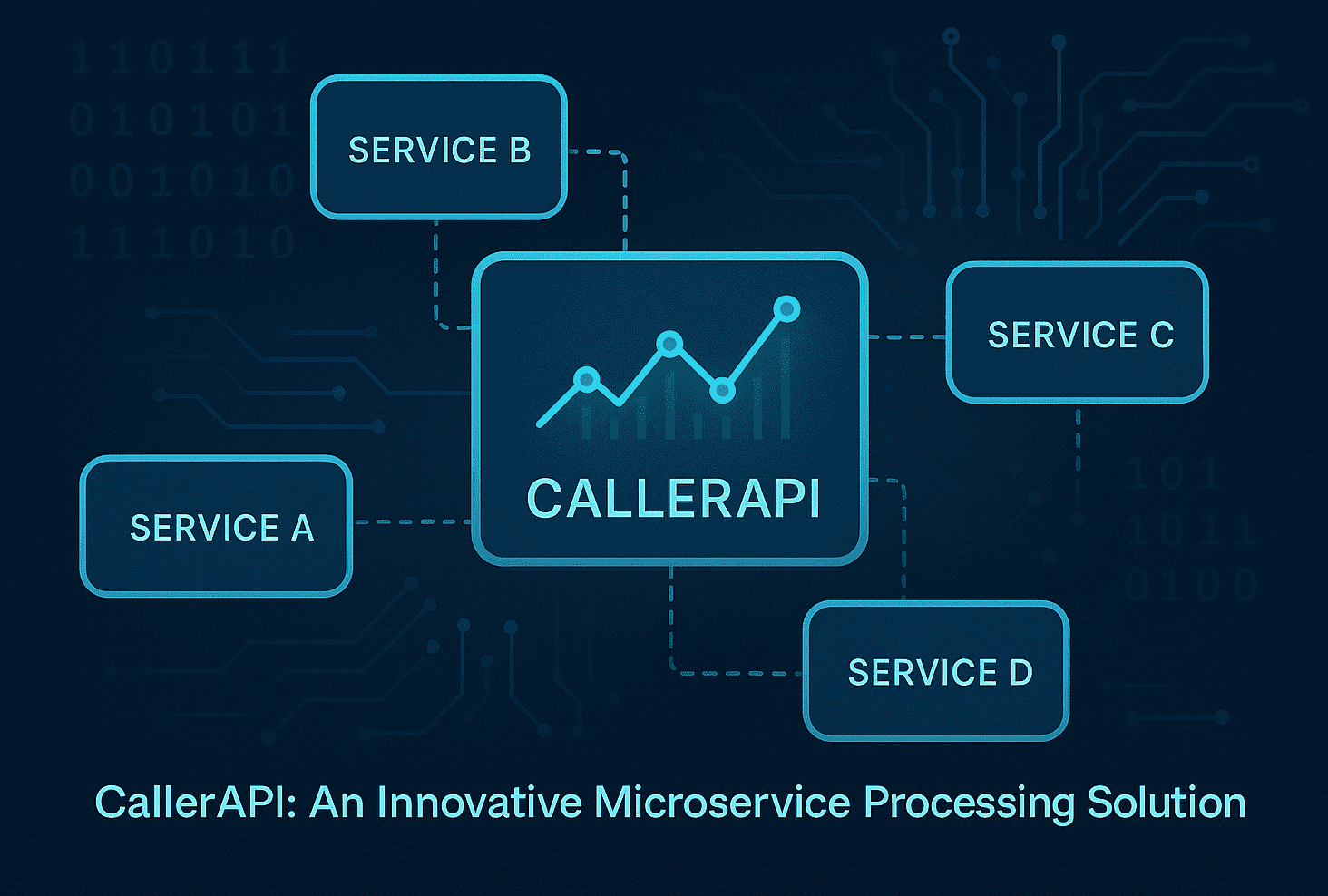

Microservices. Breaking a monolith into independent services lets teams deploy, scale, and maintain components separately. The benefits are real. So is the overhead. Microservices make sense when you have clear domain boundaries and the engineering maturity to manage them.

Event-driven architecture. Rather than services calling each other directly, they communicate through events. This decouples components, improves resilience, and makes it easier to scale individual parts of the system without cascading effects.
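To show the decoupling itself, here is a minimal in-process sketch in Python. The `EventBus`, `OrderPlaced` event, and handler names are hypothetical, and delivery here is synchronous. In production, events usually go through a message broker such as Kafka or RabbitMQ so that consumers run, fail, and scale independently of the publisher.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Any, Callable

Handler = Callable[[Any], None]


@dataclass(frozen=True)
class OrderPlaced:
    """An event: a fact about something that already happened."""
    order_id: str
    customer_email: str
    total_cents: int


class EventBus:
    """Minimal in-process publish/subscribe; publishers never reference subscribers."""

    def __init__(self) -> None:
        self._subscribers: dict[type, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event: Any) -> int:
        """Deliver the event synchronously to each handler for its type; return the count."""
        handlers = self._subscribers.get(type(event), [])
        for handler in handlers:
            handler(event)
        return len(handlers)


bus = EventBus()
sent_emails: list[str] = []
revenue = {"cents": 0}


def send_confirmation(event: OrderPlaced) -> None:
    sent_emails.append(event.customer_email)


def record_revenue(event: OrderPlaced) -> None:
    revenue["cents"] += event.total_cents


# Each consumer subscribes on its own; the checkout code knows about neither.
bus.subscribe(OrderPlaced, send_confirmation)
bus.subscribe(OrderPlaced, record_revenue)


def place_order(order_id: str, email: str, total_cents: int) -> int:
    # ... persist the order here (omitted) ...
    return bus.publish(OrderPlaced(order_id, email, total_cents))
```

Adding a third consumer, say a fraud check, means one new `subscribe` call; `place_order` does not change.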

CQRS (Command Query Responsibility Segregation). Separating read and write operations lets you optimize each path independently. Read-heavy systems, which describe most SaaS products, benefit significantly from this pattern.
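A minimal sketch of the idea, with hypothetical names throughout: commands validate and write to a normalized store, then project the change into a denormalized read model that queries hit directly. Both stores are in-memory dictionaries here. In a real system they might be separate databases, and the projection step is where the data synchronization tradeoff in the table below comes from.

```python
from dataclasses import dataclass, field


@dataclass
class WriteStore:
    """Write side: normalized records, optimized for validation and consistency."""
    orders: dict[str, dict] = field(default_factory=dict)


@dataclass
class ReadStore:
    """Read side: a denormalized view shaped for one screen (per-customer summary)."""
    totals_by_customer: dict[str, int] = field(default_factory=dict)
    order_count_by_customer: dict[str, int] = field(default_factory=dict)


class OrderCommands:
    def __init__(self, writes: WriteStore, reads: ReadStore) -> None:
        self._writes = writes
        self._reads = reads

    def place_order(self, order_id: str, customer_id: str, total_cents: int) -> None:
        if total_cents <= 0:
            raise ValueError("order total must be positive")
        if order_id in self._writes.orders:
            raise ValueError(f"duplicate order {order_id}")
        self._writes.orders[order_id] = {"customer_id": customer_id, "total_cents": total_cents}
        # Projection runs synchronously here. Many CQRS systems run it asynchronously
        # from events, so reads can briefly lag behind writes (eventual consistency).
        self._project(customer_id, total_cents)

    def _project(self, customer_id: str, total_cents: int) -> None:
        r = self._reads
        r.totals_by_customer[customer_id] = r.totals_by_customer.get(customer_id, 0) + total_cents
        r.order_count_by_customer[customer_id] = r.order_count_by_customer.get(customer_id, 0) + 1


class OrderQueries:
    """Query side: cheap lookups against the precomputed view, no joins or aggregation."""

    def __init__(self, reads: ReadStore) -> None:
        self._reads = reads

    def customer_summary(self, customer_id: str) -> dict:
        return {
            "orders": self._reads.order_count_by_customer.get(customer_id, 0),
            "total_cents": self._reads.totals_by_customer.get(customer_id, 0),
        }
```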

| Design pattern | Best for | Key tradeoff |
|---|---|---|
| Monolith | Early-stage, small teams | Hard to scale individual components |
| Microservices | Growth stage, clear service boundaries | Operational complexity increases |
| Event-driven | High-throughput, async workflows | Harder to debug and trace |
| CQRS | Read-heavy SaaS, data-intensive apps | Requires careful data synchronization |

Here’s how to think about patterns by stage:

- Early stage: Keep it simple. A well-structured monolith beats a premature microservices architecture. Focus on clean interfaces and separation of concerns.

- Growth stage: Identify the services with the most load or the most change, and extract them first. Don’t break everything apart at once.

- Scale stage: Introduce event-driven patterns for async workloads. Invest in DevOps for scalability to automate deployments, configuration management, and observability.
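The early-stage and growth-stage advice connect through one habit: put a clean interface in front of each component, so that extracting it later changes the wiring but not the callers. Here is a minimal sketch using a hypothetical payments component. `PaymentGateway`, both implementations, and the injected `http_post` function are assumptions for illustration, not a real client library.

```python
from typing import Callable, Protocol


class PaymentGateway(Protocol):
    """The seam: callers depend on this interface, not on an implementation."""

    def charge(self, customer_id: str, amount_cents: int) -> str:
        """Charge the customer and return a payment reference."""
        ...


class InProcessPayments:
    """Early stage: payments live inside the monolith as an ordinary module."""

    def __init__(self) -> None:
        self._next_id = 1

    def charge(self, customer_id: str, amount_cents: int) -> str:
        # Stand-in: a real module would record the charge in the shared database.
        ref = f"pay-{self._next_id}"
        self._next_id += 1
        return ref


class RemotePayments:
    """Growth stage: the same interface, backed by an extracted service.

    `http_post` is a hypothetical injected client function so this sketch stays
    self-contained; a real version would add timeouts, retries, and auth.
    """

    def __init__(self, http_post: Callable[[str, dict], dict], base_url: str) -> None:
        self._post = http_post
        self._base_url = base_url

    def charge(self, customer_id: str, amount_cents: int) -> str:
        payload = {"customer_id": customer_id, "amount_cents": amount_cents}
        response = self._post(f"{self._base_url}/charges", payload)
        return response["reference"]


def checkout(payments: PaymentGateway, customer_id: str, amount_cents: int) -> str:
    # Business logic is identical whichever implementation is wired in.
    return payments.charge(customer_id, amount_cents)
```

Swapping `InProcessPayments()` for `RemotePayments(...)` at startup is the whole extraction from the caller’s point of view, which is what makes “extract one service at a time” practical.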

Structured frameworks reduce technical debt by forcing teams to make deliberate decisions about system boundaries. When you’ve defined what each component owns, you avoid the slow accumulation of tangled dependencies that make systems fragile over time. Teams building scalable enterprise solutions often find that the discipline of the framework matters as much as the choice of framework itself.

Pro Tip: Start with extensibility, not scale. Before you add horizontal scaling, ask whether your codebase can accept a new service without touching five existing files. That flexibility is the foundation all other scaling decisions rest on.
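One common way to get that property is a registry: new implementations register themselves, and the dispatching code never changes. The sketch below uses a hypothetical notification system; `register_sender`, `notify`, and the channel names are illustrative. Adding a channel means writing one decorated function (in its own module, as long as that module is imported at startup) instead of editing a growing `if/elif` chain.

```python
from typing import Callable

Sender = Callable[[str, str], str]

# Registry mapping a notification channel name to its sender function.
_SENDERS: dict[str, Sender] = {}


def register_sender(channel: str) -> Callable[[Sender], Sender]:
    """Decorator that adds a sender to the registry under `channel`."""

    def decorator(func: Sender) -> Sender:
        if channel in _SENDERS:
            raise ValueError(f"channel {channel!r} already registered")
        _SENDERS[channel] = func
        return func

    return decorator


@register_sender("email")
def send_email(recipient: str, message: str) -> str:
    return f"email to {recipient}: {message}"


@register_sender("sms")
def send_sms(recipient: str, message: str) -> str:
    return f"sms to {recipient}: {message}"


def notify(channel: str, recipient: str, message: str) -> str:
    # Dispatch never changes when channels are added.
    try:
        sender = _SENDERS[channel]
    except KeyError:
        raise ValueError(f"unknown channel {channel!r}") from None
    return sender(recipient, message)
```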

With the main frameworks in mind, let’s apply these lessons to real-life implementation strategies.

Implementing and optimizing your software scalability solutions

Knowing the right patterns is one thing. Executing them under real startup conditions, with limited time, a limited budget, and a team stretched across three priorities at once, is another challenge entirely. Every implementation trades scalability against maintainability, and the right balance looks different for every team.

Here’s a practical sequence for evaluating when and how to scale:

- Measure before you move. Identify where your actual bottleneck is. Not where you think it is. Where your monitoring data says it is. Many teams optimize the wrong layer entirely.

- Define your load targets. What does your system need to handle in the next 6 and 12 months? Work backward from realistic projections, not best-case fantasies.

- Choose the smallest effective change. Can a caching layer fix the problem without a full architectural overhaul? Start there. Reserve bigger changes for when smaller ones run out of room.

- Test under simulated load. Load test before you go live. Failures in staging are learning opportunities. Failures in production are user-facing crises.

- Monitor continuously after deployment. Scaling changes often introduce new bottlenecks. Keep watching the metrics that tell you whether your intervention actually worked.

- Refactor proactively. Don’t wait for the next crisis. Build refactoring into your sprint cycle so technical debt doesn’t accumulate silently.
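Step two, defining load targets, is mostly arithmetic, and writing it down keeps projections honest. Here is a minimal sketch under stated assumptions: traffic grows at a constant compound monthly rate, per-instance throughput comes from a load test, and you want 30% spare capacity. The function names and all numbers are hypothetical placeholders for your own data.

```python
import math


def projected_peak_rps(current_peak_rps: float, monthly_growth: float, months: int) -> float:
    """Compound the current peak by an assumed monthly growth rate (0.10 == 10%)."""
    return current_peak_rps * (1 + monthly_growth) ** months


def instances_needed(peak_rps: float, rps_per_instance: float, headroom: float = 0.3) -> int:
    """Instances required to serve peak_rps while keeping `headroom` spare capacity.

    rps_per_instance should come from a load test, not a guess.
    """
    if rps_per_instance <= 0 or not 0 <= headroom < 1:
        raise ValueError("invalid capacity inputs")
    usable_rps = rps_per_instance * (1 - headroom)
    return math.ceil(peak_rps / usable_rps)


if __name__ == "__main__":
    # Illustrative inputs only: 200 req/s peak today, 10% monthly growth,
    # 150 req/s per instance measured under load.
    for months in (6, 12):
        peak = projected_peak_rps(200, 0.10, months)
        print(months, round(peak), instances_needed(peak, 150))
```

With these inputs, the 6-month target works out to roughly 354 req/s and four instances, and the 12-month target to roughly 628 req/s and six. If a single database cannot absorb that second number, you have found your next bottleneck before your users do.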

Key point: most scaling failures occur not during steady growth but in the aftermath of sudden traffic surges, such as product launches, viral moments, or partner integrations that drive unexpected volume.

Cloud-native platforms accelerate this entire process. They let you provision capacity on demand, apply auto-scaling rules, and reduce the infrastructure overhead that would otherwise require a dedicated ops team. For teams looking at the best SaaS platforms for scaling, cloud-native options offer the fastest path from bottleneck to resolution.
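Most auto-scaling rules are target-tracking: you choose a target for a metric, such as average CPU utilization, and the platform adjusts the replica count to hold it. The sketch below models that decision on the formula documented for the Kubernetes Horizontal Pod Autoscaler, `desired = ceil(current_replicas * current_metric / target_metric)`. The function name, default bounds, and tolerance value are illustrative; real platforms layer stabilization windows and cooldowns on top.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 20,
    tolerance: float = 0.1,
) -> int:
    """Target-tracking scale decision modeled on the Kubernetes HPA formula.

    If the metric ratio is within `tolerance` of 1.0, keep the current count
    to avoid flapping between sizes on small fluctuations.
    """
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("invalid inputs")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to configured bounds so a metric spike cannot scale without limit.
    return max(min_replicas, min(max_replicas, desired))
```

For example, four replicas averaging 90% CPU against a 60% target scale to six, and the `max_replicas` cap is what keeps a runaway metric from turning into a runaway bill.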

Some quick wins worth pursuing early:

- Add a CDN (Content Delivery Network) to reduce latency for static assets

- Implement read replicas for your database to offload query load

- Cache frequently requested data at the application layer

- Use async job queues to move heavy processing out of the request cycle
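Application-layer caching is often the cheapest of these wins. Below is a minimal cache-aside sketch with a time-to-live. `TTLCache` is a hypothetical helper, not a library class. It is per-process and not thread-safe, and it has no size limit. With several app servers, teams typically use a shared cache such as Redis so that every instance sees the same entries.

```python
import time
from typing import Callable, Generic, Hashable, TypeVar

V = TypeVar("V")


class TTLCache(Generic[V]):
    """Cache-aside helper: return a cached value if still fresh, otherwise load and store it."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: dict[Hashable, tuple[float, V]] = {}

    def get_or_load(self, key: Hashable, loader: Callable[[], V]) -> V:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit, still fresh
        value = loader()  # miss or expired: go to the source of truth
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key: Hashable) -> None:
        # Call after writes so readers don't see stale data for a full TTL.
        self._entries.pop(key, None)
```

In a request handler this would look like `cache.get_or_load(f"user:{user_id}", lambda: fetch_profile(user_id))`, where `fetch_profile` stands in for whatever database call you are trying to offload.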

The pitfalls are equally worth naming. Moving too fast without proper testing introduces new failure modes. Scaling infrastructure before fixing inefficient code wastes money. And neglecting documentation means the engineer who built the solution is the only one who can maintain it.

For teams serious about doing this well, cloud scalability services and enterprise scalability best practices offer a proven path forward that balances speed with durability. Now, let’s cut through the noise with a perspective on what actually matters, and what doesn’t, when scaling.

Why most advice on software scalability misses the mark

Here’s the uncomfortable truth we’ve arrived at after working with startups across a dozen industries: most scalability advice is written for a hypothetical company, not yours. It assumes you have a dedicated platform team, months of runway to refactor, and a clear roadmap. Most startups have none of that.

The “future-proof everything” philosophy sounds responsible. In practice, it leads teams to build distributed systems before they have distributed load, and to architect for a scale they may never reach. That’s not engineering discipline. That’s anxiety dressed up as planning.

What we’ve found actually works is more pragmatic. You scale what’s hurting. You instrument what you can’t see. You build extensibility into the seams of your system, not into every component. The real-world scalability insights that matter most often come from watching what breaks under load, not from predicting what might.

There’s also an organizational dimension that almost nobody talks about. The teams that scale well aren’t always the ones with the best architecture. They’re the ones where engineers have clear ownership, communication is fast, and decisions get made without weeks of committee review. Cultural readiness is infrastructure. Ignore it and even the best technical design will struggle to hold.

Focus your scaling energy where growth actively threatens performance. Everywhere else, keep it simple, keep it readable, and keep the lights on.

Empower your growth with expert scalability solutions

Scaling a product while building a team while serving customers is one of the hardest balancing acts in tech. You don’t always have the bandwidth to figure out the architecture and ship the features at the same time.

That’s where Meduzzen steps in. We work with startup founders and CTOs to build systems that grow with you, not against you. Our engineers bring deep experience in scalable web development, and our AI specialists help teams integrate AI-driven scalability patterns that handle high loads with resilience. For teams running Python-based infrastructure, our Python engineering team integrates directly into your workflow, bringing both technical depth and startup pace. Whether you need a dedicated team, targeted augmentation, or end-to-end product development, we’re built for the long game alongside you.

Frequently asked questions

What is the difference between vertical and horizontal scalability?

Vertical scaling means upgrading existing hardware to handle more load, while horizontal scaling adds more nodes or servers to distribute that load across multiple machines. Different scaling approaches offer unique benefits and tradeoffs depending on your system’s architecture and growth stage.

How soon should startups invest in scalability solutions?

Plan for scalability early by building extensibility into your architecture, but avoid heavy infrastructure investment until you’re close to product-market fit. Balancing timing and tradeoffs in scalability is critical to avoiding wasted resources on infrastructure you don’t yet need.

What is the biggest pitfall startups face with software scalability?

The most damaging pitfall is underestimating future complexity and load, which forces expensive and time-consuming rewrites after growth accelerates. Most scaling failures are due to overlooked maintainability and underestimated complexity in system design.

Are cloud solutions necessary for scalable software?

Cloud platforms make scaling faster and more cost-effective, but they’re not the only path; a well-designed architecture can scale on-premises too. Cloud technologies can significantly accelerate scalable solution deployment for teams with limited infrastructure bandwidth.

What is a real-world example of a scalability failure?

A SaaS startup that grew rapidly after a product launch found its monolithic system unable to handle concurrent users, forcing a complete rewrite that delayed new features for nearly a year. Startups often face rebuilds when scalability is deprioritized during the early stages of product development.