
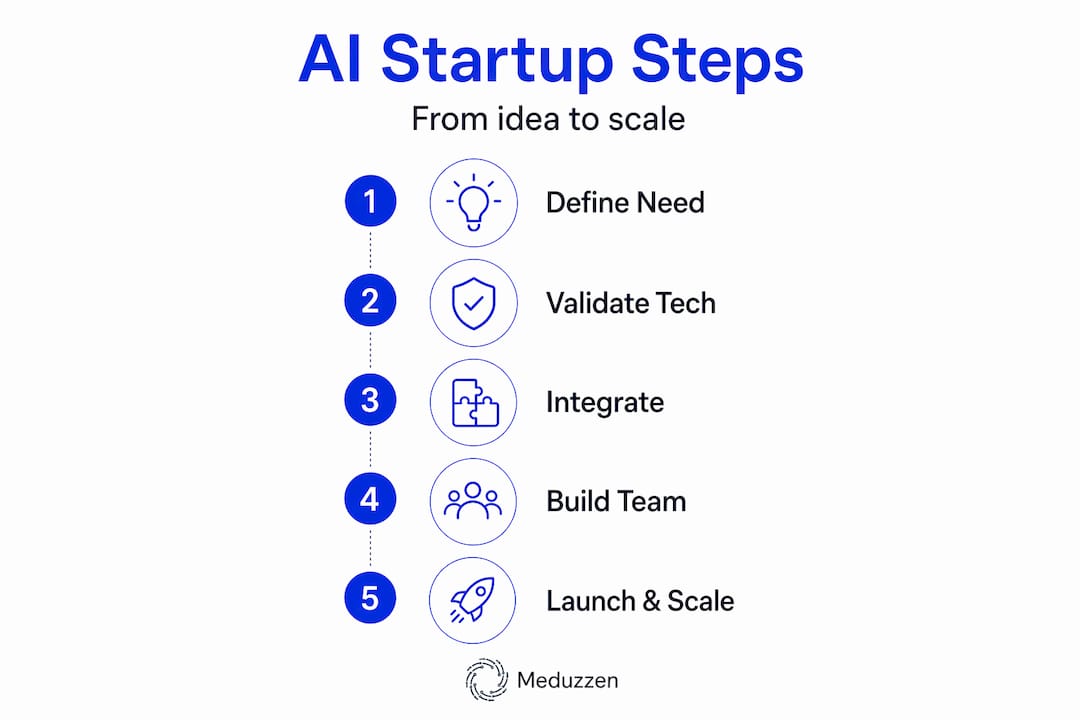

Build your AI startup: proven steps for success

AI & Automation

May 15, 2026


Ready to build your AI startup? Discover proven steps to scale successfully, from architecture to team dynamics. Start your journey today!

TL;DR:

- Scaling an AI-driven product demands deeper organizational redesign and technical discipline than scaling SaaS. Before building, founders must establish readiness in data, model evaluation, and team skills.

- Deep integration into customer workflows creates high switching costs, but it takes meticulous architecture, workflow redesign, and rigorous testing to make that integration durable.

- Success hinges on operational discipline, continuous evaluation, and a team that can adapt, so the product keeps delivering value beyond its initial models.

Scaling an AI-driven product is not the same as scaling a SaaS company. The playbooks diverge faster than most founders expect, and the gap between “we have a working model” and “we have a durable business” is wider than it looks from the outside. Most early teams underestimate the engineering depth required, the organizational redesign demanded, and the speed at which technical debt accumulates when AI is bolted onto legacy processes. This article walks through the honest steps: from readiness and architecture to team scaling, safe rollouts, and the operational discipline that separates AI startups that survive from those that stall.

Key Takeaways

| Point | Details |
|---|---|
| Deep integration required | AI startups must build deeper product integrations than generic SaaS for real enterprise impact. |
| Human talent still essential | AI coding agents need developer oversight and smart guardrails to succeed on complex tasks. |
| Safe deployment matters | Structured rollout strategies and prompt modularity reduce unpredictable regressions in AI products. |
| Business model innovation boost | Intense AI use correlates with higher innovation, especially for startups prioritizing rapid growth. |

Getting started: What founders need before building an AI startup

Every founder we talk to wants to move fast. That instinct is right. But moving fast without the right foundation is how you build something that collapses under its own weight six months later. Before you write a single line of production code, you need clarity on three things: your technical readiness, your business model, and your hiring posture.

Technical readiness means more than having access to a capable model. It means understanding your data pipeline, your inference costs, your latency constraints, and your evaluation strategy. Too many founders skip evaluation because it feels academic. It is not. Without a disciplined way to measure model quality, you will have no idea when your product degrades, and it will degrade.
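To make "a disciplined way to measure model quality" concrete, here is a minimal sketch of an evaluation harness. Everything in it is an assumption for illustration: `EvalCase`, `run_eval`, the `stub_model` stand-in, and the 0.9 threshold are hypothetical names and values, and exact-match scoring is only one of many possible metrics.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected: str


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not scored as a failure."""
    return " ".join(text.lower().split())


def run_eval(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the fraction of cases whose output matches the expected answer."""
    if not cases:
        raise ValueError("evaluation set is empty")
    passed = sum(
        normalize(model(case.prompt)) == normalize(case.expected) for case in cases
    )
    return passed / len(cases)


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    answers = {"capital of france?": "Paris", "2 + 2?": "4"}
    return answers.get(prompt.lower(), "unknown")


# A tiny fixed test set; a real one would be drawn from actual customer inputs.
CASES = [
    EvalCase("Capital of France?", "paris"),
    EvalCase("2 + 2?", "4"),
    EvalCase("Largest planet?", "Jupiter"),
]

MIN_ACCURACY = 0.9  # assumed release threshold, not a recommendation

accuracy = run_eval(stub_model, CASES)
print(f"accuracy={accuracy:.2f} release_ok={accuracy >= MIN_ACCURACY}")
```

With the stub above, two of three cases pass, so the check fails the assumed threshold. The point is less the metric than the habit: a fixed test set, run on every change, with a number you agreed on in advance.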

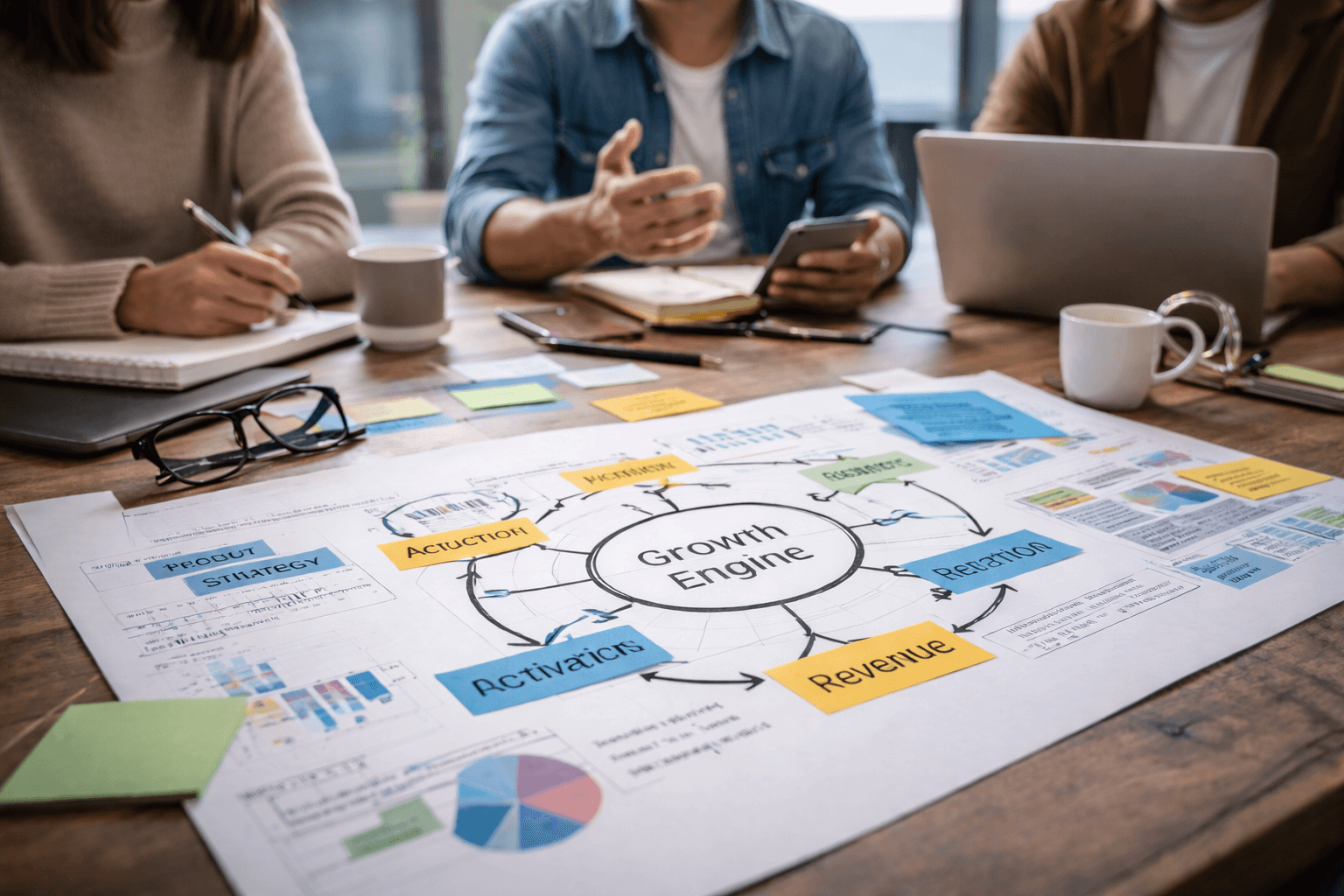

Business model readiness is where AI startups genuinely differ from their SaaS predecessors. Research on business model innovation in AI startups shows that startups with intense AI use exhibit significantly higher business model innovation than those with little or no AI exposure, with the strongest effects seen in companies that simultaneously pursue rapid growth and profitability. This is not an accident. AI forces you to rethink value delivery at a structural level, not just automate an existing workflow.

Hiring readiness is the third pillar. AI products require engineers who understand not just software development, but also data, model behavior, and probabilistic outputs. Understanding the key components of AI-powered software for startups helps teams align on what skills actually matter versus what sounds impressive in a job description.

Here is what your readiness checklist should cover before you build:

- A defined problem with measurable success criteria

- At least one dedicated person who understands ML infrastructure and data quality

- A clear sense of your inference cost structure and how it scales with usage

- An early customer segment willing to give honest, iterative feedback

- A documented hypothesis about how AI changes your unit economics compared to a traditional software approach

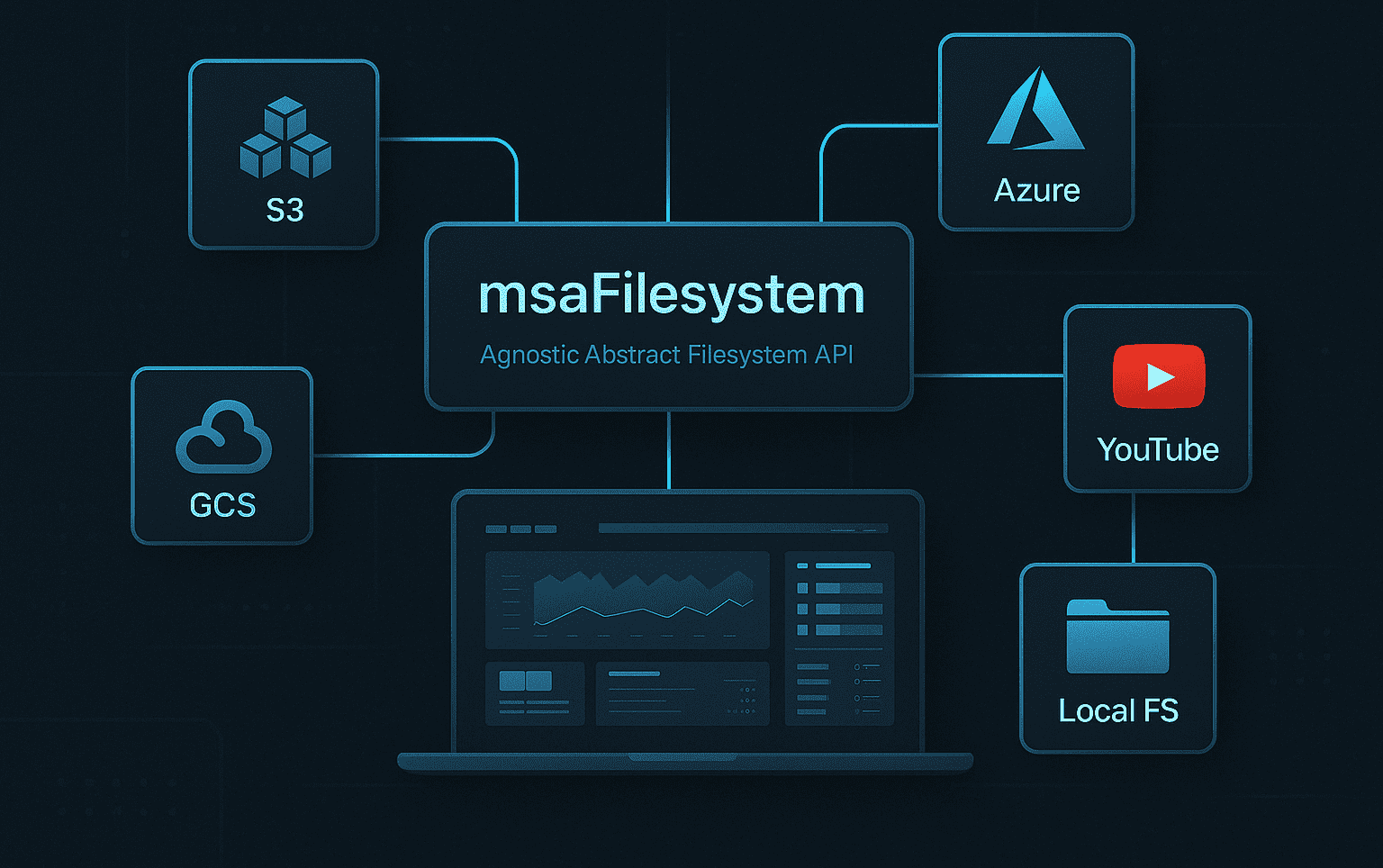

The role of Python in AI development deserves a specific mention here. Python remains the lingua franca of AI engineering because of its ecosystem depth. FastAPI, LangChain, PyTorch, and Hugging Face integrations all live natively in that world. Your stack choices at this stage will constrain or enable you for years. Choose them with your eyes open.

| Readiness area | Common gap | What to do |
|---|---|---|
| Technical | No evaluation framework | Build a test set before MVP |
| Business model | Copy-paste SaaS pricing | Model value delivered per AI action |
| Hiring | Generalist devs only | Hire at least one ML-aware engineer early |
| Data | Untested data pipeline | Run end-to-end data audits before launch |

Pro Tip: Validate your dual goals early. If you want both rapid growth and strong profitability, you need a business model where AI genuinely reduces marginal cost per customer. Do not assume that will happen automatically. Map the economics before you scale.
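Mapping the economics can start as a back-of-the-envelope calculation. The sketch below assumes token-based pricing and a flat monthly subscription. The class names, the usage figures, and the per-token prices are all made up for illustration and do not reflect any real provider's rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageProfile:
    requests_per_month: int
    input_tokens_per_request: int
    output_tokens_per_request: int


@dataclass(frozen=True)
class Pricing:
    usd_per_1k_input_tokens: float
    usd_per_1k_output_tokens: float


def monthly_inference_cost(usage: UsageProfile, pricing: Pricing) -> float:
    """Inference cost for one customer over one month, in USD."""
    input_cost = usage.input_tokens_per_request / 1000 * pricing.usd_per_1k_input_tokens
    output_cost = usage.output_tokens_per_request / 1000 * pricing.usd_per_1k_output_tokens
    return usage.requests_per_month * (input_cost + output_cost)


def gross_margin(price_per_month: float, cost_per_month: float) -> float:
    """Share of revenue left after inference cost (ignores all other costs)."""
    if price_per_month <= 0:
        raise ValueError("price must be positive")
    return (price_per_month - cost_per_month) / price_per_month


# Hypothetical customer and hypothetical prices.
typical = UsageProfile(requests_per_month=2000,
                       input_tokens_per_request=1500,
                       output_tokens_per_request=500)
prices = Pricing(usd_per_1k_input_tokens=0.002, usd_per_1k_output_tokens=0.006)

cost = monthly_inference_cost(typical, prices)
print(f"cost per customer: ${cost:.2f}, margin at $49/mo: {gross_margin(49.0, cost):.1%}")
```

Rerunning the same calculation for a heavy-usage customer shows quickly whether marginal cost actually falls as you grow or scales linearly with usage.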

For a more grounded look at how to structure this journey from the start, the startup software development success guide is a useful reference that covers organizational and engineering alignment in parallel.

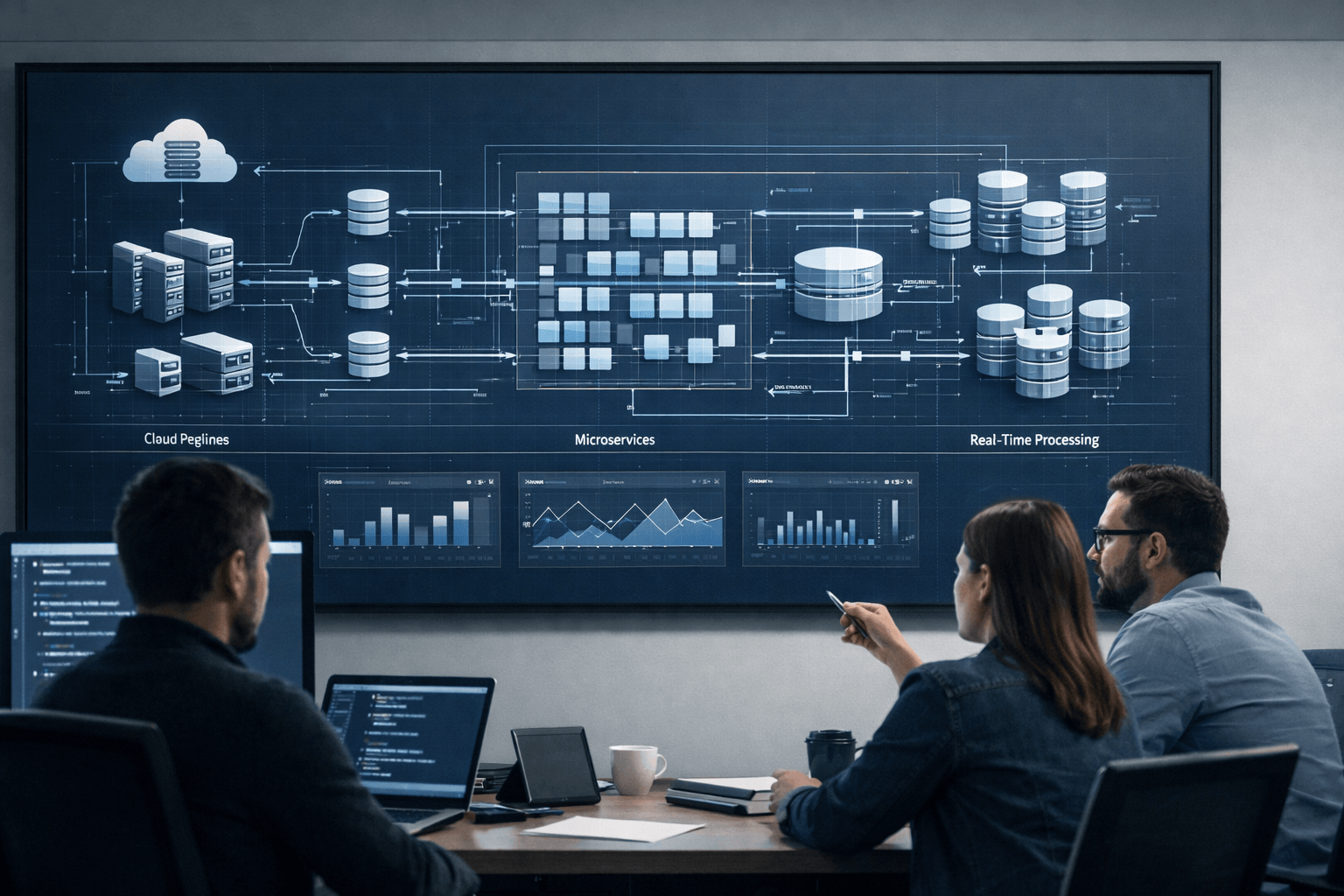

Architecting your AI product: Integration, workflow, and operational rigor

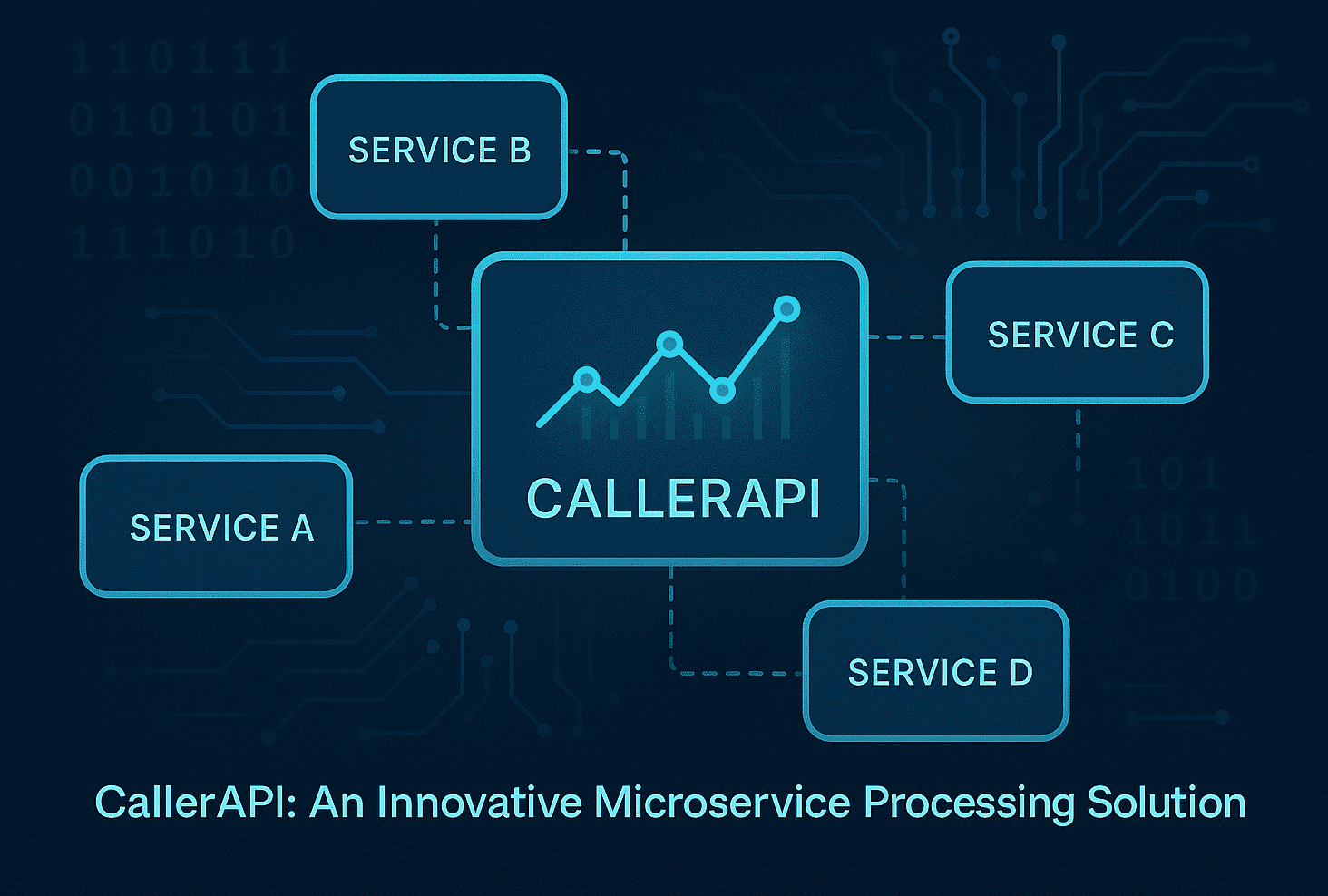

Here is something most SaaS founders learn the hard way when they move into AI: integration is not a feature. It is the foundation. A SaaS product can afford to be modular and surface-level in how it connects to a customer’s systems. An AI product usually cannot.

“Enterprise AI startups require substantial engineering work to integrate deeply with each customer’s policies, culture, and systems. Deep vertical integration drives product durability.” — Insights for enterprise AI builders

This distinction matters enormously when you are making architectural decisions. A generic SaaS tool can offer a clean API and let customers configure their own workflows. An AI product that genuinely changes how a business operates needs to be woven into that business’s fabric. That means understanding their data formats, their approval chains, their compliance requirements, and sometimes even their cultural norms around automation.

Workflow redesign is not a nice-to-have. It is your actual competitive edge. When you redesign a customer’s workflow around AI capabilities rather than simply inserting an AI tool into an existing process, you create switching costs that are genuinely hard to replicate. A good enterprise AI integration case study illustrates how deeply embedded data access and live web context can transform what an AI product actually delivers versus what a surface-level tool can offer.

Compare the two approaches:

| Dimension | Generic SaaS approach | Deep AI vertical approach |
|---|---|---|
| Integration depth | API endpoints, webhooks | Direct system, policy, and data integration |
| Workflow impact | Adds a step to existing flow | Redesigns the flow itself |
| Switching cost | Low: data export and move | High: entire workflow dependent on AI layer |
| Competitive durability | Moderate | Strong |
| Time to full value | Fast | Slower, but compounding |
| Engineering investment | Standard | Substantial, ongoing |

Building this kind of integration requires a step-by-step approach that keeps quality high without slowing momentum:

- Map the customer’s existing workflow in detail before writing any integration code. Understand every handoff, every decision point, and every data source.

- Identify the highest-leverage AI intervention points rather than trying to automate everything at once. Constraint sharpens creativity here.

- Design your data contracts explicitly. Define the exact schema of data flowing in and out of your AI layer, and version it from day one.

- Build your evaluation layer in parallel with your product layer. You need to know when the AI is wrong before your customer does.

- Plan for model updates from the start. Models will change. Your integration layer needs to absorb those changes without breaking the customer’s workflow.
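As one illustration of an explicit, versioned data contract, here is a minimal sketch using only the standard library. The `InvoiceRequest` type, its fields, and the version numbers are hypothetical. A real contract would mirror your customer's actual data, and many teams would reach for a schema library instead of hand-written checks.

```python
from dataclasses import dataclass

# Versions this integration layer knows how to read (assumed for the example).
SUPPORTED_VERSIONS = {"1.0", "1.1"}


@dataclass(frozen=True)
class InvoiceRequest:
    """Hypothetical input contract for an AI layer that processes invoices."""
    schema_version: str
    invoice_id: str
    amount_cents: int
    currency: str = "USD"


def parse_invoice_request(payload: dict) -> InvoiceRequest:
    """Validate an incoming payload against the contract, failing loudly on drift."""
    version = payload.get("schema_version")
    if version not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported schema_version: {version!r}")
    try:
        invoice_id = payload["invoice_id"]
        amount_cents = payload["amount_cents"]
    except KeyError as exc:
        raise ValueError(f"missing required field: {exc.args[0]}") from exc
    if not isinstance(invoice_id, str) or not invoice_id:
        raise ValueError("invoice_id must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(amount_cents, bool) or not isinstance(amount_cents, int) or amount_cents < 0:
        raise ValueError("amount_cents must be a non-negative integer")
    # In this example, `currency` was introduced in 1.1; 1.0 payloads use the default.
    currency = payload.get("currency", "USD") if version == "1.1" else "USD"
    return InvoiceRequest(version, invoice_id, amount_cents, currency)


request = parse_invoice_request(
    {"schema_version": "1.1", "invoice_id": "inv-42", "amount_cents": 1999, "currency": "EUR"}
)
print(request)
```

Rejecting unknown versions at the boundary keeps upstream format changes from reaching the model silently.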

For teams actively working on this challenge, the guide on building scalable AI SaaS offers a practical framework for keeping engineering coherent as complexity grows.

Building the engineering team: Scaling with dedicated developers and AI agents

Talent is where AI startups feel the most pressure. Demand for experienced AI engineers far outpaces supply, and the temptation to fill that gap with AI coding agents is real. The honest answer is: agents help, but they do not replace human judgment, and right now the limitations are measurable.

OpenAI’s guidance on building AI-native engineering teams emphasizes that coding agents can implement features end-to-end, but they require guardrails and models specifically tuned for high-signal bug detection. The guidance recommends an iterative expansion of agent responsibility, not a wholesale handover. That framing is important. Agents are a productivity multiplier for skilled engineers, not a substitute for them.

The empirical picture is sobering. SWE-Bench Pro benchmark data shows that on long-horizon enterprise software engineering tasks, Pass@1 rates remain below 25%, with GPT-5 achieving the highest score at just 23.3%. For production-grade enterprise work, developer oversight is not optional. It is essential.
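For readers unfamiliar with the metric, Pass@1 is commonly understood as the share of tasks solved on the first attempt. The sketch below shows that calculation on made-up task results. It does not reproduce SWE-Bench Pro's harness, and the task names are hypothetical.

```python
def pass_at_1(first_attempt_results: dict[str, bool]) -> float:
    """Fraction of tasks whose first attempt passed all of that task's checks."""
    if not first_attempt_results:
        raise ValueError("no task results provided")
    return sum(first_attempt_results.values()) / len(first_attempt_results)


# Hypothetical outcomes: True means the agent's first patch passed the task's tests.
results = {
    "fix-auth-timeout": True,
    "migrate-billing-schema": False,
    "refactor-report-export": False,
    "add-audit-logging": False,
}

print(f"Pass@1 = {pass_at_1(results):.0%}")
```

Here one of four tasks passes on the first try, giving 25%. Tracking the same number on tasks drawn from your own codebase gives a like-for-like view of how an agent performs on your work.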

What does a practical, well-structured AI engineering team actually look like? Here are the core elements:

- At least one senior engineer who understands both systems and ML and can make sound decisions at the interface between them

- Dedicated attention to prompt engineering and evaluation rather than treating these as afterthoughts

- Clear guardrails for agent usage, including defined P0 and P1 bug detection thresholds before any agent-generated code goes to production

- A code review process that accounts for AI-generated code patterns, which tend to look plausible but fail at edge cases

- Explicit protocols for model updates, so no model change gets deployed without a structured regression check

Tools like AI engineering platforms and autonomous agent platforms can accelerate development workflows meaningfully when layered on top of a team with strong engineering fundamentals. The mistake is using them as a shortcut around those fundamentals.

Knowing how to vet AI engineers is a skill in itself. The standard software engineering interview process does not surface the judgment, probabilistic thinking, and evaluation discipline that AI work demands. You need to assess how candidates reason about model failures, not just how they write code. Additionally, keeping up with AI bug detection trends in 2026 gives teams a concrete picture of where automated detection is reliable and where human review remains non-negotiable.

Pro Tip: When evaluating AI coding agents for your team, run them against a sample of your actual codebase and production edge cases, not just the curated benchmarks in marketing materials. Independent benchmark data such as SWE-Bench Pro is a more honest signal of what agents can and cannot handle, but your own tasks are the final test. Require developer review on all AI-generated code touching critical paths until you have clear evidence it is safe to expand autonomy.

The right hiring posture depends on your stage. Early-stage startups often benefit most from staff augmentation, where experienced engineers can integrate quickly and deliver without the overhead of a full hiring cycle. As you scale, the mix shifts toward a more permanent core team surrounded by flexible capacity.

Launching and scaling your AI product: Safe rollouts and failure prevention

Launching an AI product is not like launching a traditional software feature. The failure modes are different. A buggy SaaS feature produces a clear error. A degraded AI output often looks perfectly reasonable until someone looks carefully. That asymmetry demands a different approach to rollout and monitoring.

Microsoft’s Marketplace guidance on AI app and agent best practices recommends a set of practices that, taken together, form a genuinely robust launch strategy. These include modular prompt architecture with versioning and rollback capabilities, caching strategies to manage performance and cost, and staged rollouts using feature flags to reduce regression risk. Each of these deserves real attention.
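As a rough illustration of the caching idea, here is a small in-memory response cache with a time-to-live. It is a sketch under stated assumptions, not the approach the Microsoft guidance prescribes: the `ResponseCache` class is hypothetical, the cache key includes a prompt version so a prompt change never serves stale answers, and lowercasing the input is only appropriate for queries where case does not matter.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-memory cache keyed by prompt version and normalized user input."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(prompt_version: str, user_input: str) -> str:
        normalized = " ".join(user_input.lower().split())
        return hashlib.sha256(f"{prompt_version}\n{normalized}".encode("utf-8")).hexdigest()

    def get_or_compute(self, prompt_version: str, user_input: str,
                       compute: Callable[[str], str]) -> str:
        key = self._key(prompt_version, user_input)
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # fresh entry: skip the model call
        value = compute(user_input)
        self._entries[key] = (now, value)
        return value


calls = []


def fake_model(text: str) -> str:
    """Hypothetical stand-in for an expensive inference call."""
    calls.append(text)
    return f"answer to: {text}"


cache = ResponseCache(ttl_seconds=300)
cache.get_or_compute("v1", "What is your refund policy?", fake_model)
cache.get_or_compute("v1", "what is your  refund policy?", fake_model)  # served from cache
cache.get_or_compute("v2", "What is your refund policy?", fake_model)   # new prompt version
print(f"model calls: {len(calls)}")
```

In production this would typically sit in a shared store rather than process memory, but the keying decision carries over.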

Here is a step-by-step launch framework built around these principles:

- Version your prompts like code. Every prompt change should be tracked, reviewed, and reversible. Treat prompt degradation as a production incident.

- Implement response caching for high-frequency, low-variability queries. This dramatically reduces inference costs and latency without sacrificing quality.

- Use feature flags to gate AI features by user cohort. Start with internal users, then trusted beta customers, then broader rollout. Each stage should have defined success metrics and rollback triggers.

- Build a shadow mode for new model versions. Run the new model in parallel with the production model, compare outputs, and only switch when quality metrics are clearly positive.

- Define your rollback criteria before you ship. Knowing in advance what a failure looks like prevents the kind of slow drift that happens when teams are reluctant to admit a launch is not working.
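The first step in the list, versioning prompts like code, can be sketched as a small registry that keeps every published template and can step back to the previous one. `PromptRegistry` and its methods are hypothetical names for illustration. In practice the history would live in version control or a database rather than in memory.

```python
class PromptRegistry:
    """Keeps every published prompt template and tracks which version is live."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}
        self._active: dict[str, int] = {}  # index into the version list

    def publish(self, name: str, template: str) -> int:
        """Store a new template, make it live, and return its 1-based version."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        self._active[name] = len(versions) - 1
        return len(versions)

    def rollback(self, name: str) -> int:
        """Make the previous version live again and return its 1-based version."""
        index = self._active[name]
        if index == 0:
            raise ValueError(f"{name!r} has no earlier version to roll back to")
        self._active[name] = index - 1
        return index  # (index - 1) as a 1-based version number

    def active_version(self, name: str) -> int:
        return self._active[name] + 1

    def render(self, name: str, **variables: str) -> str:
        """Fill the live template with the given variables."""
        return self._versions[name][self._active[name]].format(**variables)


registry = PromptRegistry()
registry.publish("summarize", "Summarize the following text: {text}")
registry.publish("summarize", "Summarize in one sentence: {text}")
print(registry.render("summarize", text="Q3 report"))

registry.rollback("summarize")  # e.g. after the new prompt fails evaluation
print(registry.render("summarize", text="Q3 report"))
```

Because old templates are never overwritten, a rollback is a pointer change rather than an emergency edit.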

Here is a useful reference table for the key safeguards at each launch stage:

| Launch stage | Key safeguard | Success metric |
|---|---|---|
| Internal testing | Prompt versioning, evaluation suite | Zero critical regressions |
| Beta rollout | Feature flags, shadow mode | Quality parity with baseline |
| Broader release | Staged cohorts, caching | Performance and cost within targets |
| Full production | Monitoring, rollback plan | User satisfaction, no silent failures |
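One way to implement staged cohorts with predefined rollback triggers is deterministic percentage bucketing. The sketch below is a minimal illustration, not a replacement for a feature-flag service. The function names, the flag name, and the thresholds are all assumptions.

```python
import hashlib


def bucket(user_id: str, flag: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(user_id: str, flag: str, rollout_percent: int) -> bool:
    """True if the user falls inside the current rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return bucket(user_id, flag) < rollout_percent


def should_roll_back(error_rate: float, quality_score: float, *,
                     max_error_rate: float, min_quality_score: float) -> bool:
    """Apply rollback criteria that were agreed on before the launch."""
    return error_rate > max_error_rate or quality_score < min_quality_score


# Hypothetical stage: 10% of users see the AI feature.
users = [f"user-{i}" for i in range(1000)]
exposed = [u for u in users if is_enabled(u, "ai_summary", 10)]
print(f"{len(exposed)} of {len(users)} users in the 10% cohort")

# Hypothetical metrics from that cohort, checked against assumed thresholds.
print(should_roll_back(0.03, 0.91, max_error_rate=0.02, min_quality_score=0.85))
```

Because the bucket depends only on the flag and the user ID, a user who saw the feature at 10% still sees it at 50%, which keeps the experience stable as the rollout widens.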

For teams working through this in real time, the AI model evaluation methods guide provides structured approaches for comparing model behavior across versions. And the master AI development process guide for 2026 covers how to build these practices into your engineering culture rather than treating them as one-off checklists.

It bears repeating: even the best available AI models struggle with complex, long-horizon tasks, with Pass@1 below 25% on enterprise-level benchmarks. Building a launch strategy that assumes perfect AI performance is not a strategy. It is a risk you have not priced yet.

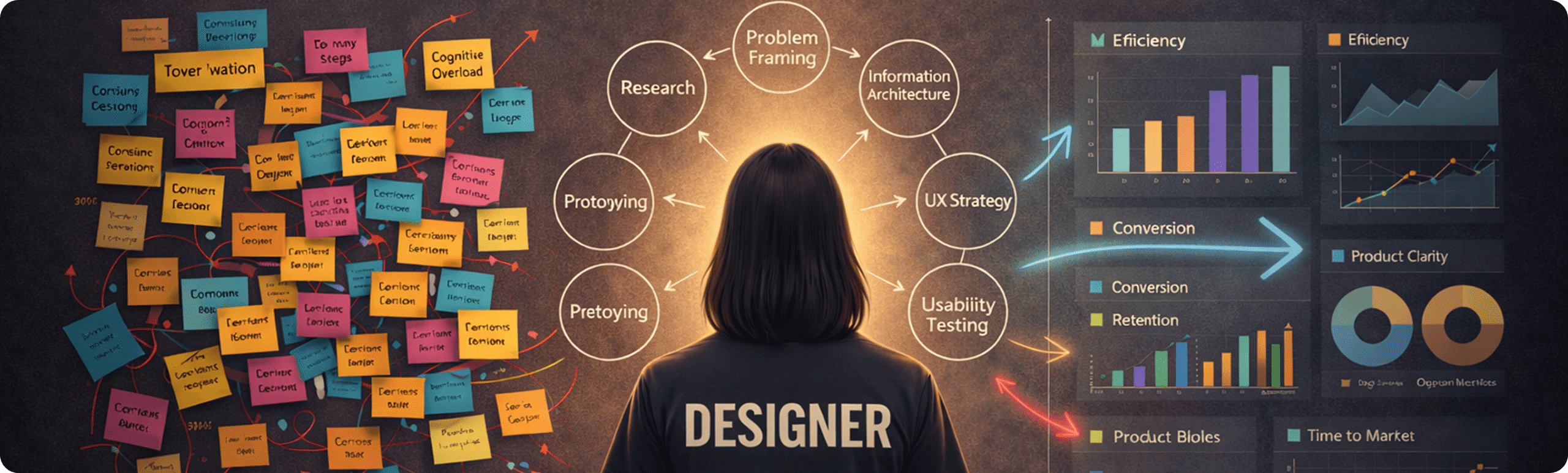

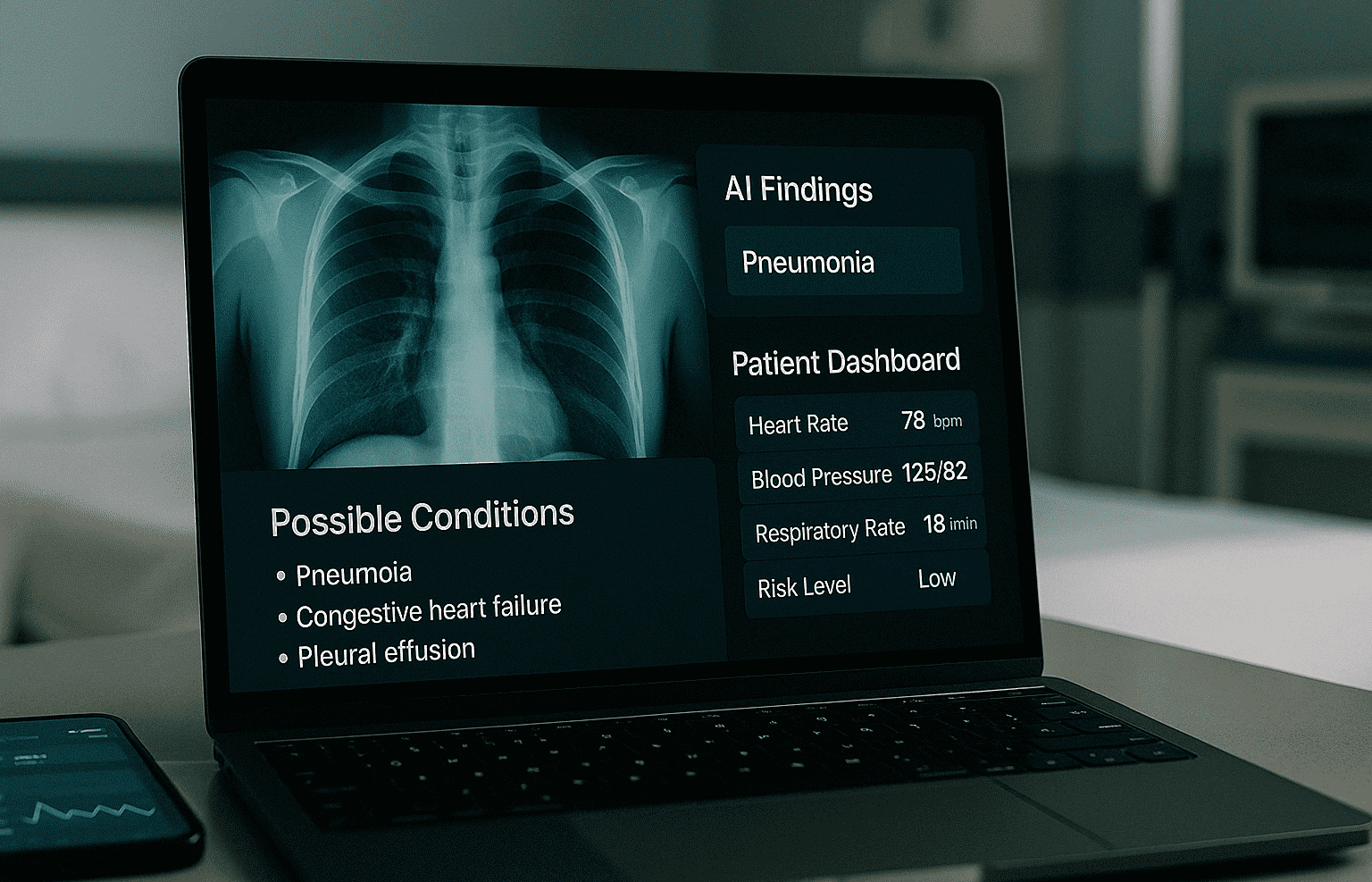

Our hard-won lessons: Why building an AI startup means redesigning everything

After working with dozens of engineering teams building AI-driven products across FinTech, Healthcare, Logistics, and EdTech, the single biggest lesson is this: the founders who succeed are not the ones with the most sophisticated models. They are the ones who redesign their operations, their workflows, and their team structures around what AI actually is, rather than what they wish it were.

There is a tempting shortcut that trips up many early-stage teams. They find a capable foundation model, wrap it in a thin product layer, and call that an AI product. For consumer use cases with low stakes, that sometimes works. For enterprise customers with complex systems, real accountability requirements, and established workflows, it almost never does. Multiple sources consistently warn against treating AI as a drop-in replacement for existing processes or simply bolting it onto legacy systems. The warning is worth taking seriously because the failure mode is slow and expensive. Teams spend months building on a foundation that was never sound, and by the time the cracks show, the cost of rebuilding is high.

The founders we have seen navigate this well share a few things in common. They invest in evaluation before they invest in features. They treat their data pipeline with the same seriousness they treat their model. They build rollback into their deployment process from day one rather than adding it after the first incident. And they accept that AI product development has a different rhythm than traditional software development, one where iteration means evaluating outputs as much as it means writing code.

There is something honest about this kind of operational discipline. It is not glamorous. It does not make for the most exciting investor demo. But it is what keeps the lights on when things go wrong, and in AI development, things go wrong in ways that are sometimes invisible until they are not.

Staying close to AI development trends shaping 2026 matters here too. The field moves fast, and the difference between a team that adapts and one that does not is often less about technical skill and more about organizational habits around learning and evaluation.

The contrarian insight we would offer to any founder: your competitive moat in AI is not your model. Models are increasingly commoditized. Your moat is your workflow integration, your evaluation discipline, and your ability to redesign processes around what AI does well rather than forcing AI to do what your old processes required. That is where durable businesses get built.

Pro Tip: Before you scale, invest one sprint in operational discipline. Map your failure modes, define your rollback criteria, and build a lightweight evaluation suite. The cost of that sprint is trivial compared to the cost of discovering these gaps at scale.

Connect with experts to accelerate your AI startup journey

Knowing what to build is one thing. Having the right team to build it is another. At Meduzzen, we have spent over a decade helping startups and growing companies close that gap, not with generic advice, but with pre-vetted engineers who integrate into your team and deliver with the kind of technical discipline that AI products demand.

Whether you need AI services that drive business growth, a stronger foundation through our web development services, or experienced Python developers for your startup, we are built for exactly the kind of work this article describes: deep integration, rigorous evaluation, and engineering that compounds over time. Our 150+ engineers across Python, AI, DevOps, and Cloud have helped teams in FinTech, Healthcare, EdTech, and beyond build products that scale without breaking. If you are ready to move from planning to building, we would be glad to be part of that journey.

Frequently asked questions

What are the most critical team roles when starting an AI startup?

AI startups need strong business leadership, skilled AI engineers, and developers experienced with system integration and workflow management. Unlike standard SaaS teams, enterprise AI products require substantial engineering depth to connect deeply with customer policies, culture, and existing systems, which means generalist developers alone are rarely sufficient.

How much can AI agents currently automate in software development?

AI agents can handle a wide range of coding tasks, but they struggle significantly with complex, long-horizon problems. Empirical benchmarks show current models achieve Pass@1 rates below 25% on enterprise-level software engineering tasks, which makes developer oversight essential rather than optional for production-grade work.

How should founders manage unforeseen regressions in AI products?

Use versioning, feature flags, staged rollouts, and modular prompt architecture to catch and revert regressions before they reach all users. Microsoft’s Marketplace guidance specifically recommends these practices alongside caching strategies for managing cost and performance safely.

Can AI startups be more innovative than traditional tech startups?

Yes, and the research is clear on this. Startups with intense AI use exhibit significantly higher business model innovation than those with minimal AI exposure, particularly when they pursue rapid growth and profitability simultaneously, suggesting that AI genuinely unlocks new structural possibilities rather than just automating existing ones.