Startup product development: proven frameworks for fast, scalable success

May 14, 2026

Unlock fast, scalable success with a proven startup product development strategy. Discover effective frameworks to build and measure your MVP!

TL;DR:

- Speed without a disciplined process often results in ineffective product development and high failure rates.

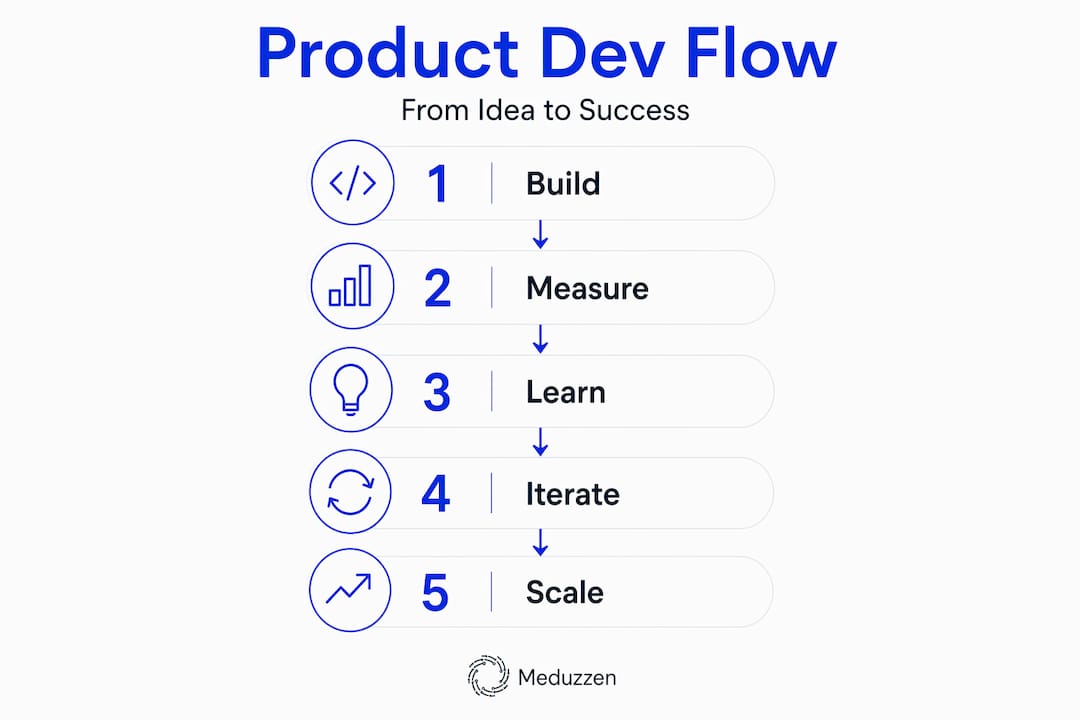

- Implementing the Build-Measure-Learn cycle with clear hypotheses, success metrics, and team involvement enables rapid, evidence-driven learning.

- European startups are now matching US velocity by leveraging AI in MVP building, emphasizing structured iteration and continuous strategy updates.

Most founders believe shipping fast means shipping rough. But speed without structure is just noise. 85% of European startups now use AI to build their MVPs, matching the velocity once reserved for Silicon Valley rocketships. Yet failure rates for first product versions remain stubbornly high. The gap isn’t effort or ambition. It’s the absence of a disciplined, evidence-driven process that connects what you build to what you actually need to learn. This guide gives you that process, from the first feedback loop to scaling with confidence.

Key Takeaways

| Point | Details |
|---|---|
| Test riskiest assumptions | Structure MVPs to explicitly validate the assumption most critical to your product’s success. |
| Tie strategy to execution | A good product strategy operates at the intersection of customer needs, value, and measurable outcomes. |
| Integrate discovery and delivery | High-velocity teams connect idea generation and engineering to iterate and ship faster. |
| Invest early in reliability | Start QA, observability, and deployment metrics from day one to avoid expensive mistakes. |
| Use metrics-driven roadmaps | Pre-commit to the key metric a feature will move to guide sharper decisions at scale. |

Understanding iterative product development: The Build-Measure-Learn loop

Every successful product starts with a question, not an answer. The Build-Measure-Learn loop, the backbone of Eric Ries’s Lean Startup methodology, is built on that idea. You build the smallest thing that can test your riskiest assumption, measure what happens, and let the data tell you what to build next. That’s the cycle. Simple in theory. Hard in practice.

Here’s where most teams go wrong: they treat the MVP as a miniature version of their dream product. They cut features, yes, but they keep the same mindset. An MVP isn’t a smaller product. It’s a learning experiment designed to validate or invalidate a specific belief about your customer. The moment you forget that, you’re building on sand.

The “Measure” step is the one teams most often shortchange. They ship, they watch the numbers, and then they interpret the results through the lens of what they hoped would happen. That’s confirmation bias, and it’s expensive. The fix is straightforward: define what success looks like before you write a single line of code. What number moves? By how much? In what timeframe?

“Simply calling something an ‘MVP’ is insufficient. The MVP should test the riskiest assumption and have a defined ‘measure’ step before building.”

Why most MVPs fail often comes down to this single discipline gap. Teams that define their learning objective upfront consistently make better pivot-or-persevere decisions than those who measure reactively.

Core elements of a healthy Build-Measure-Learn cycle:

- Riskiest assumption first. Identify the one belief that, if wrong, kills the business. Build to test that.

- Pre-defined success criteria. Agree on the metric and threshold before building begins.

- Time-boxed iterations. Set a deadline for the experiment. Open-ended sprints drift.

- Honest retrospectives. Review what the data actually says, not what you wanted it to say.

| Loop stage | Common mistake | Better approach |
|---|---|---|
| Build | Building too many features | Build only what tests the core assumption |
| Measure | Measuring vanity metrics | Define one actionable metric in advance |
| Learn | Ignoring disconfirming data | Document what the data says, then decide |

Pro Tip: Write your hypothesis as a falsifiable statement before each sprint. “We believe X customer will do Y because Z.” If you can’t falsify it, you can’t learn from it.
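To make the habit concrete, here is a minimal Python sketch of a hypothesis written down with its metric, threshold, and deadline before the sprint starts. The `Hypothesis` class, its field names, and the example customer and numbers are all illustrative assumptions, not part of the Lean Startup methodology itself.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Hypothesis:
    """A falsifiable bet, recorded before any code is written (hypothetical structure)."""
    customer: str     # X: who we expect to act
    behavior: str     # Y: what we expect them to do
    reason: str       # Z: why we expect it
    metric: str       # the single number that should move
    threshold: float  # minimum value that counts as validation
    deadline: date    # end of the time-boxed experiment

    def statement(self) -> str:
        return f"We believe {self.customer} will {self.behavior} because {self.reason}."

    def evaluate(self, observed: float, today: date) -> str:
        """Return a verdict using only the criteria committed to in advance."""
        if observed >= self.threshold:
            return "validated"
        if today >= self.deadline:
            return "invalidated"
        return "inconclusive"


# Example values are invented for illustration.
h = Hypothesis(
    customer="freelance designers",
    behavior="send a second invoice within 14 days",
    reason="manual invoicing costs them billable hours",
    metric="repeat_invoice_rate",
    threshold=0.30,
    deadline=date(2026, 6, 30),
)
print(h.statement())
print(h.evaluate(observed=0.22, today=date(2026, 7, 1)))  # invalidated
```

Freezing the dataclass is a deliberate choice in this sketch: once the sprint starts, nobody can quietly lower the threshold to fit the results.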

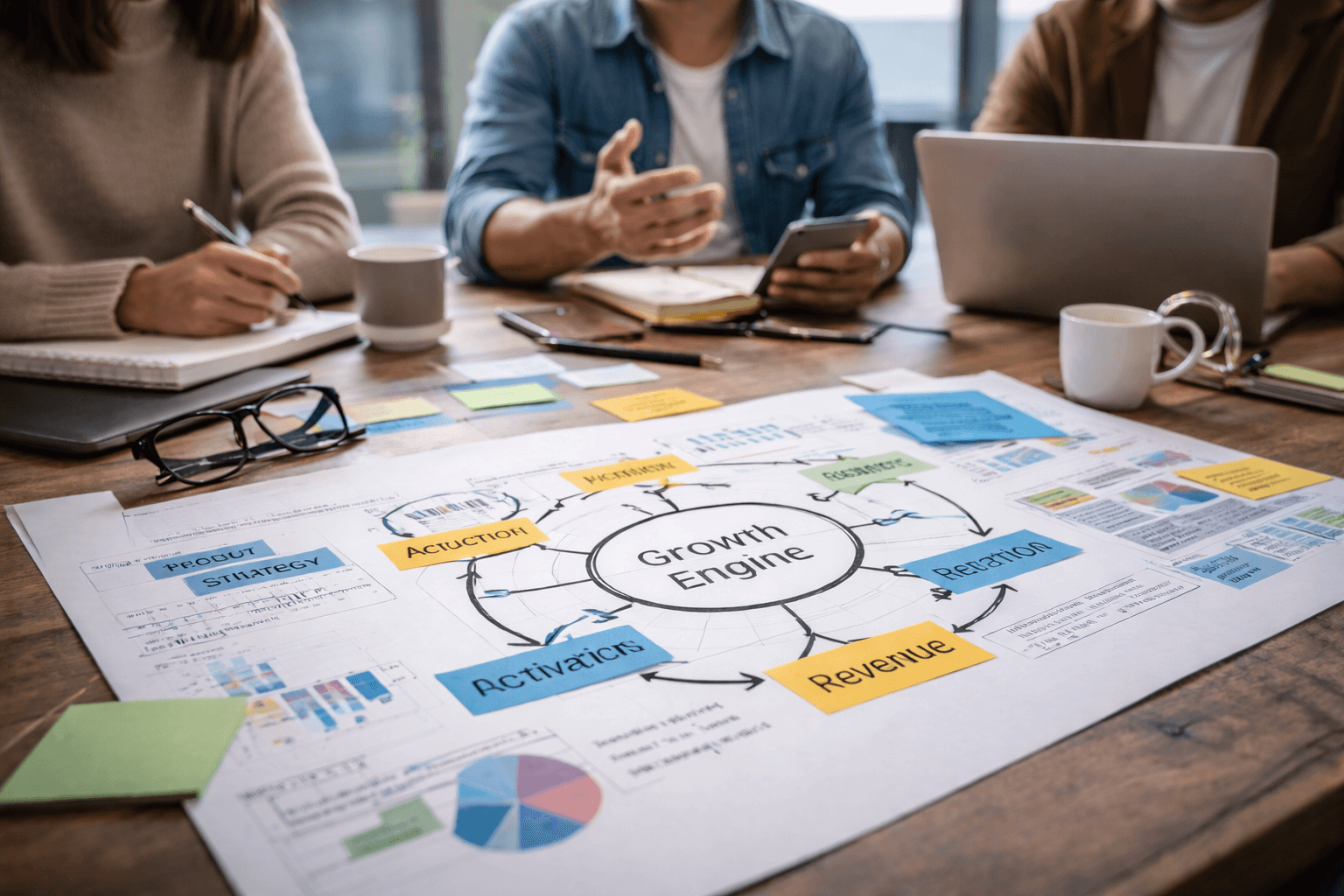

Crafting an evidence-driven product strategy: The 5-part framework

Iteration without direction is just spinning. Once you understand the Build-Measure-Learn loop, the next step is anchoring every cycle to a coherent product strategy. Strategy isn’t a slide deck you revisit once a quarter. It’s the filter through which every build decision gets made.

A solid product strategy template connects five elements: your ideal customer profile (ICP), your value proposition, your key product capabilities, your competitive positioning, and your success metrics. Miss any one of these and your roadmap becomes a wish list.

The 5-part framework in practice:

- ICP (Ideal Customer Profile). Who specifically are you building for? Not “SMBs” or “enterprise.” Name the job title, the industry, the pain point, the budget. The more specific, the better your build decisions.

- Value proposition. What does your product do that no one else does, or does better? This should be one clear sentence your team can repeat without looking at the docs.

- Key capabilities. Which product features directly deliver on your value proposition? These are non-negotiable. Everything else is a nice-to-have.

- Competitive positioning. Where do you sit relative to alternatives? This shapes pricing, messaging, and which capabilities to prioritize.

- Success metrics. What does winning look like in 90 days? In 12 months? Metrics should be specific, owned, and tied to the ICP’s behavior, not internal activity.

| Framework element | Weak version | Strong version |
|---|---|---|
| ICP | “SMB owners” | “Series A FinTech founders with 10-50 engineers” |
| Value proposition | “We make development faster” | “We cut time-to-first-deploy by 40% for lean teams” |
| Success metric | “Grow revenue” | “Reach $50K MRR within 6 months of launch” |

The power of this framework isn’t in the individual elements. It’s in how they connect. When your ICP, value proposition, and metrics align, every sprint has a clear purpose. When they don’t, teams argue about priorities because there’s no shared truth to appeal to.

Pro Tip: Treat your product strategy as a living document. The moment a Build-Measure-Learn cycle produces evidence that contradicts one of your five elements, update it. Strategies that don’t evolve become obstacles. Use your scaling frameworks to guide when and how to update each element.

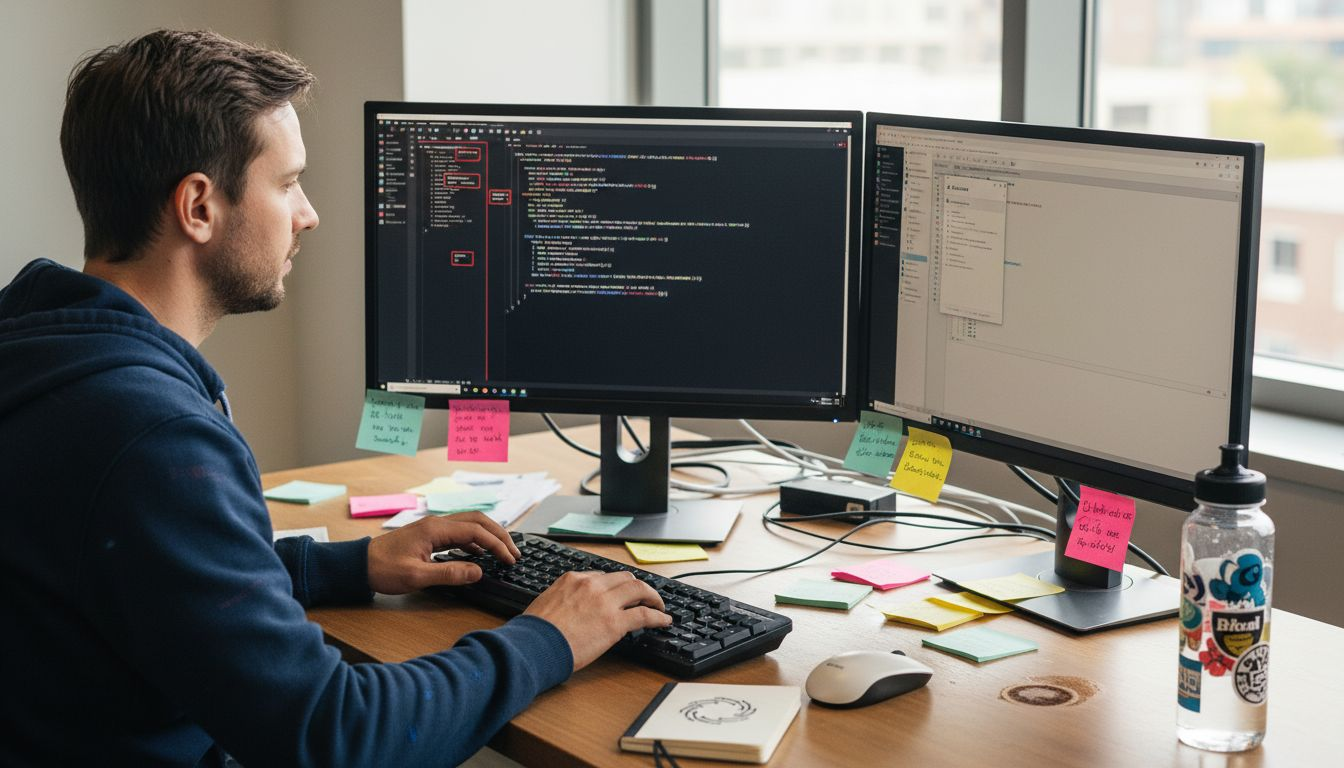

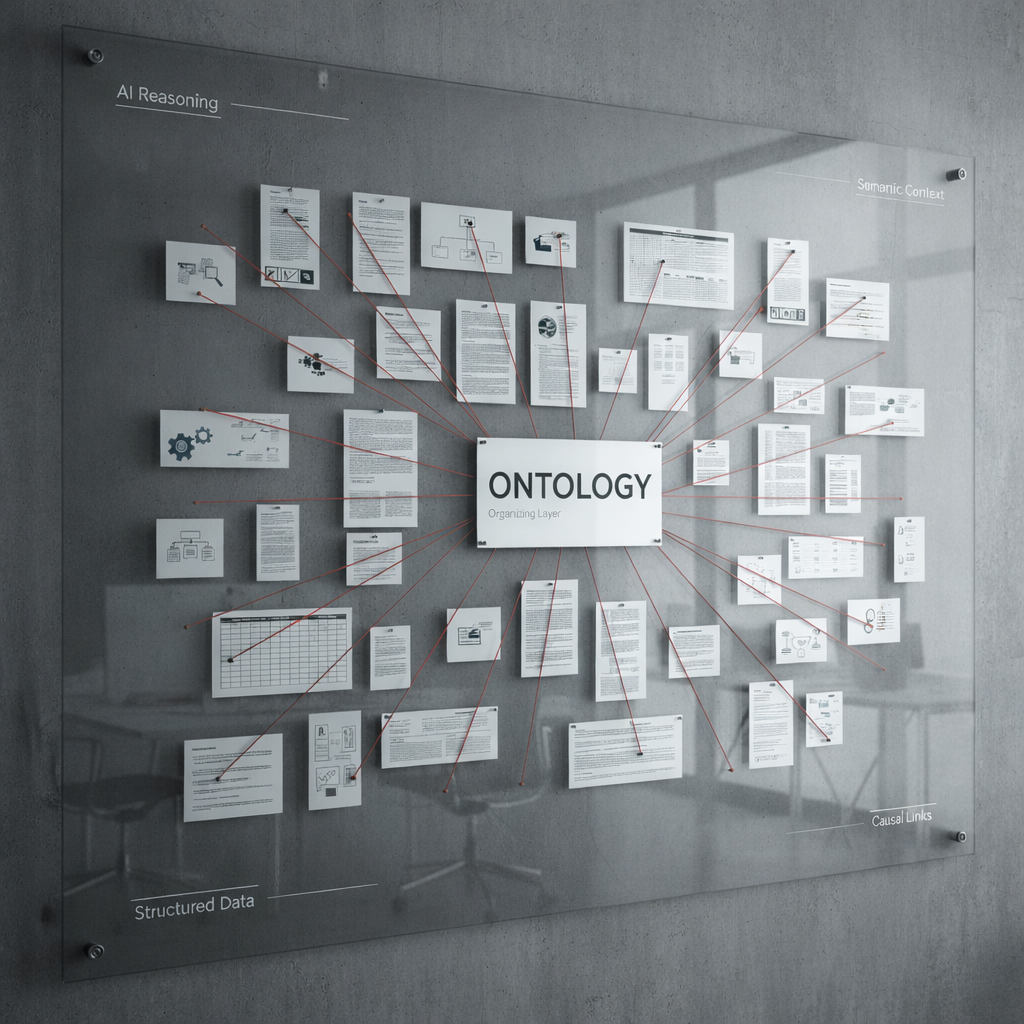

Integrating product discovery and delivery for startup teams

Most early-stage teams operate with a hidden wall between discovery (figuring out what to build and why) and delivery (actually building it). Product managers talk to customers. Engineers ship tickets. Designers prototype in isolation. The result is a product that technically works but doesn’t quite fit.

Dynamic product discovery breaks that wall down. It’s an approach where discovery and delivery run in parallel, with engineers and designers involved in customer conversations and product managers present in sprint planning. The goal is a team that collectively understands the problem, not just the solution.

Here’s what that looks like operationally:

- Weekly idea boards. A shared space (digital or physical) where anyone on the team can log customer insights, competitor observations, or feature ideas. No gatekeeping.

- Prioritization rituals. A recurring meeting, weekly or bi-weekly, where the team scores ideas against ICP fit, strategic value, and effort. Use a simple scoring matrix, not gut feel.

- Unified roadmap tools. Tools that connect discovery artifacts (customer quotes, experiment results) directly to delivery tickets. When an engineer can see why a feature was prioritized, they build it better.

- Cross-functional involvement. Engineers and designers in customer interviews. Product managers in code reviews. Not to micromanage, but to build shared context.
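The scoring matrix from the prioritization ritual can be as small as a few lines of Python. This is a sketch under stated assumptions: the 1-to-5 scale, the weights, and the example ideas are invented for illustration, and your team should pick its own.

```python
from dataclasses import dataclass

# Assumed weights. Effort counts against an idea, so its weight is negative.
WEIGHTS = {"icp_fit": 0.4, "strategic_value": 0.4, "effort": -0.2}


@dataclass
class Idea:
    title: str
    icp_fit: int          # 1 (poor fit) to 5 (excellent fit)
    strategic_value: int  # 1 (low) to 5 (high)
    effort: int           # 1 (trivial) to 5 (very costly)

    def score(self) -> float:
        """Weighted sum of the three criteria, rounded for readability."""
        return round(sum(w * getattr(self, name) for name, w in WEIGHTS.items()), 2)


def rank(ideas):
    """Return ideas sorted from highest to lowest score."""
    return sorted(ideas, key=lambda idea: idea.score(), reverse=True)


# Hypothetical entries from an idea board.
ideas = [
    Idea("Dark mode", icp_fit=2, strategic_value=1, effort=1),
    Idea("SSO integration", icp_fit=4, strategic_value=5, effort=5),
    Idea("Bulk CSV import", icp_fit=5, strategic_value=4, effort=2),
]

for idea in rank(ideas):
    print(f"{idea.score():>4}  {idea.title}")
```

The exact weights matter less than the fact that they are agreed before the meeting, so the debate is about the inputs rather than the outcome.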

“Discovery and delivery should be integrated using approaches like dynamic product discovery, requiring team-wide participation and data-driven prioritization.”

The payoff is faster, more confident decisions. When the whole team understands the customer’s problem, there’s less back-and-forth, fewer misbuilt features, and a stronger collective instinct for what matters. Leveraging AI-powered product insights can further accelerate this process by surfacing patterns in user behavior that manual review would miss.

Pro Tip: Involve engineers in at least two customer discovery sessions per quarter. Their questions are often more precise than product managers’ questions, and the context they gain translates directly into better technical decisions.

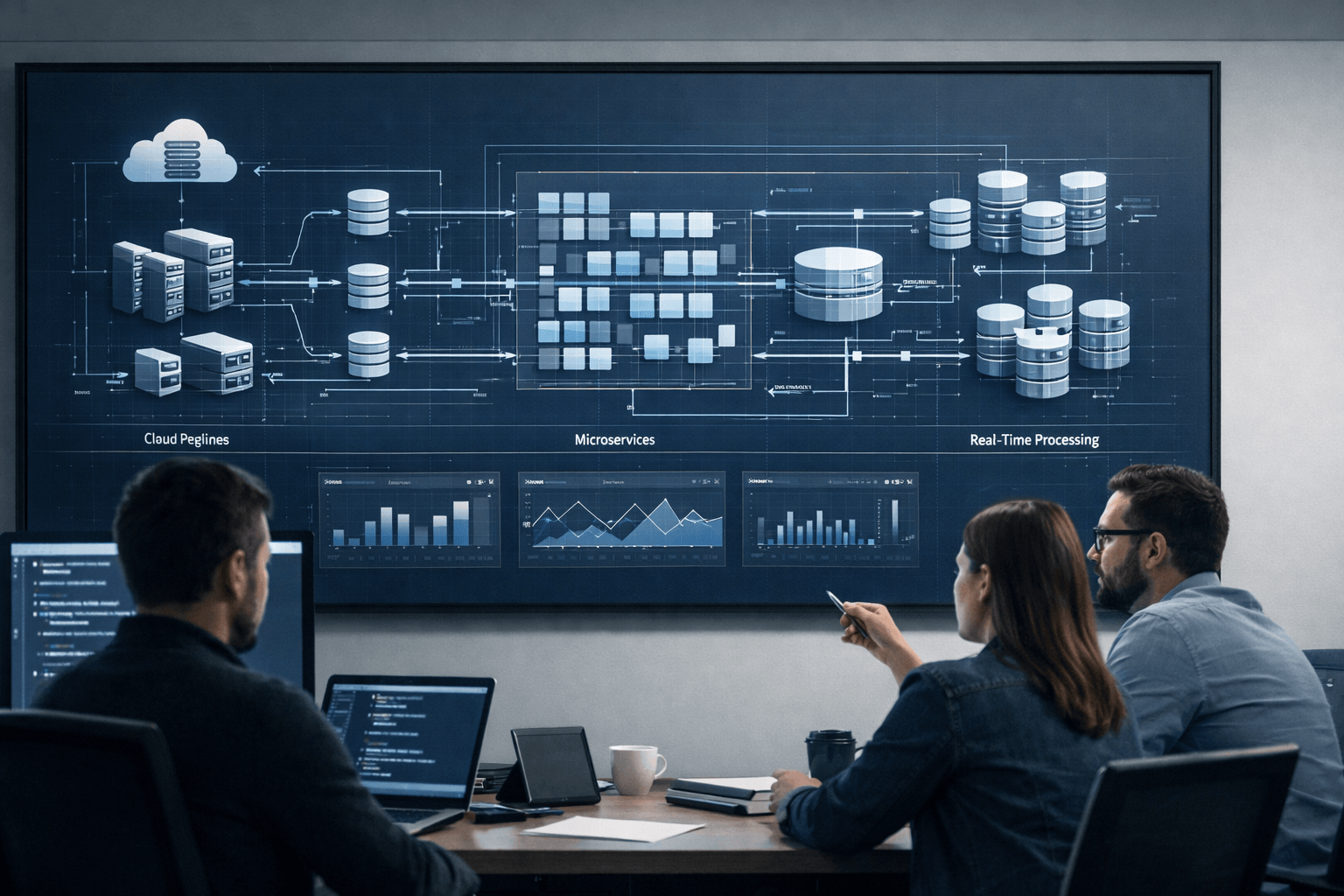

Building, shipping, and measuring: Operational best practices for fast learning

Strategy and process alignment get you to the starting line. What happens next, in the actual engineering workflow, determines whether you learn fast or learn slowly and expensively.

The most common mistake early-stage teams make is treating quality assurance (QA) and observability as things to add later, once the product is more stable. That logic is backwards. Skipping QA and observability early leads to costly mistakes that compound over time, making future iterations slower, not faster.

Operational best practices for fast, reliable shipping:

- Sprint-based QA from day one. Integrate testing into every sprint, not as a separate phase. Aim for fewer than 1.4 escaped defects (bugs first found in production) per release, a level typical of high-performing teams.

- Test coverage thresholds. Set a minimum coverage percentage (70-80% is a reasonable starting point) and enforce it in your CI/CD pipeline. Non-negotiable.

- Feature instrumentation before launch. Every new feature should have logging and analytics in place before it ships. If you can’t measure it, you can’t learn from it.

- Automated deployment pipelines. Manual deployments introduce variability. Automate early, even if the setup takes a sprint. The time investment pays back within weeks.

- Incident response protocols. Define how you handle production issues before one happens. A clear runbook reduces panic and speeds recovery.
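Feature instrumentation does not need an analytics vendor on day one. As a rough sketch, the decorator below emits one structured event per call using only Python's standard library. The `instrumented` decorator, the event fields, and the `create_invoice` feature are hypothetical names chosen for this example.

```python
import json
import logging
import time
from functools import wraps

logger = logging.getLogger("product_events")


def instrumented(event_name):
    """Emit one JSON event per call, recording outcome and duration."""
    def decorator(func):
        @wraps(func)
        def wrapper(user_id, *args, **kwargs):
            start = time.perf_counter()
            outcome = "error"  # overwritten only if the call succeeds
            try:
                result = func(user_id, *args, **kwargs)
                outcome = "success"
                return result
            finally:
                logger.info(json.dumps({
                    "event": event_name,
                    "user_id": user_id,
                    "outcome": outcome,
                    "duration_ms": round((time.perf_counter() - start) * 1000, 2),
                }))
        return wrapper
    return decorator


@instrumented("invoice_created")
def create_invoice(user_id, amount):
    """Hypothetical feature: the event is logged whether it succeeds or fails."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    return {"user_id": user_id, "amount": amount}


logging.basicConfig(level=logging.INFO, format="%(message)s")
create_invoice("user-42", 120)
```

Because the event is written in a `finally` block, failed calls are recorded too, which is often where the most useful learning sits.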

| Practice | Skip it early | Do it early |
|---|---|---|
| QA integration | Bugs compound, velocity drops | Stable releases, faster iteration |
| Feature instrumentation | Blind to user behavior | Data-driven decisions from launch |
| Automated deployment | Manual errors, slow releases | Consistent, repeatable shipping |

Understanding full-cycle software development means treating every phase, from design to deployment to monitoring, as part of one continuous loop. Teams that fragment these phases lose context and slow down. Teams that unify them build faster and with more confidence.

Staying current on AI bug detection trends is also worth your attention. AI-assisted QA tools are reducing manual testing time significantly, and early adoption gives lean teams a real advantage.

Pro Tip: Instrument your three most critical user flows on day one: activation, core action, and retention. These are the signals that tell you whether your product is working before you have enough users for statistical significance.

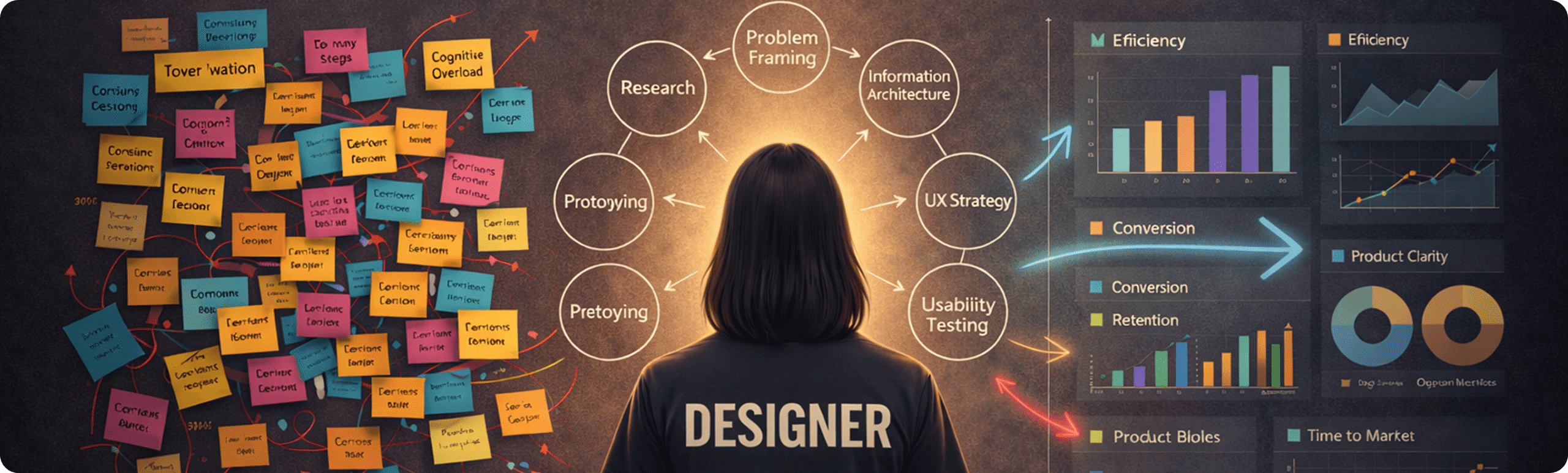

Data-driven scaling: How to use metrics to drive product and team velocity

Reaching product-market fit isn’t a moment. It’s a discipline. The teams that get there fastest are the ones who pre-commit to metrics before building, use roadmaps as active alignment tools, and leverage AI to compress iteration cycles.

The principle is simple but counterintuitive: start with the metric, then build the feature. Most teams do the opposite. They build a feature, then decide how to measure it. That sequence produces features that are hard to evaluate and even harder to iterate on. When you define the metric first, every build decision has a clear purpose.

The AI advantage here is real and growing. 85% of European startups used AI to build their MVPs in 2025, up from 30% five years earlier. AI tools are compressing the time from idea to testable product, which means more learning cycles per quarter. More cycles means faster convergence on what works.

Scaling practices that drive velocity:

- Metric ownership. Assign one person as the owner of each roadmap metric. Shared ownership means no ownership.

- Living roadmaps. Review and update your roadmap every two weeks. A roadmap that doesn’t change isn’t reflecting reality.

- AI-assisted iteration. Use AI tools for code generation, user research synthesis, and A/B test analysis. The goal isn’t to replace judgment. It’s to make judgment faster.

- Leading vs. lagging indicators. Track leading indicators (activation rate, feature adoption) alongside lagging ones (revenue, churn). Leading indicators tell you where you’re headed before the lagging ones confirm it.
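A leading indicator such as activation rate is easy to compute once events are logged. The sketch below assumes one possible definition: the share of signed-up users who perform the core action at least once within seven days of signing up. Both that definition and the sample data are assumptions for illustration; your own definition should follow from your ICP’s behavior.

```python
from datetime import datetime, timedelta


def day7_activation_rate(signups, core_actions, window_days=7):
    """Share of signed-up users with a core action inside the window.

    signups: dict mapping user_id to signup datetime
    core_actions: list of (user_id, datetime) tuples
    """
    if not signups:
        return 0.0
    window = timedelta(days=window_days)
    activated = {
        user_id
        for user_id, ts in core_actions
        if user_id in signups and timedelta(0) <= ts - signups[user_id] <= window
    }
    return len(activated) / len(signups)


# Invented sample data: four signups, two of them active within seven days.
signups = {
    "a": datetime(2026, 5, 1),
    "b": datetime(2026, 5, 1),
    "c": datetime(2026, 5, 2),
    "d": datetime(2026, 5, 3),
}
core_actions = [
    ("a", datetime(2026, 5, 2)),   # day 1: counts
    ("a", datetime(2026, 5, 3)),   # repeat action: user counted once
    ("b", datetime(2026, 5, 11)),  # day 10: outside the window
    ("c", datetime(2026, 5, 8)),   # day 6: counts
]

print(len(signups))                                 # 4 total signups (vanity metric)
print(day7_activation_rate(signups, core_actions))  # 0.5
```

The contrast in the last two lines is the point: total signups says four people showed up, while the activation rate says only half of them found the product useful in their first week.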

| Metric type | Example | When it’s useful |
|---|---|---|
| Leading indicator | Day-7 activation rate | Early signal of retention potential |
| Lagging indicator | Monthly churn rate | Confirms long-term product-market fit |
| Vanity metric | Total signups | Feels good, rarely actionable |

Understanding scaling SaaS products means knowing when to push for growth and when to pause and consolidate learning. The development success guide offers a practical framework for making that call at each stage of growth.

Pro Tip: Assign one owner per roadmap metric and review ownership at the start of every planning cycle. When accountability is clear, progress is faster and debates are shorter.

The uncomfortable truth about startup product development

Here’s what the frameworks won’t tell you. Most product failures aren’t caused by bad ideas or weak engineering. They’re caused by teams that treat uncertainty as a problem to be eliminated rather than a condition to be navigated.

We’ve seen this pattern repeatedly. A founder builds what they think is an MVP. But when you look closely, it’s actually a miniature version of their full vision, complete with polished UI, multiple user roles, and a feature set that took four months to build. They call it an MVP because it’s not finished yet. But it was never designed to test anything specific. It was designed to impress.

That’s the trap. The desire for certainty, for a product that feels “ready,” leads teams to over-build before they’ve validated the core assumption. And when the market responds with indifference, there’s so much sunk cost that pivoting feels impossible.

True velocity comes from a different mindset. It means being willing to throw out work that doesn’t produce learning. It means shipping something that makes you slightly uncomfortable because it’s not perfect. It means updating your strategy document the week after a sprint, not the quarter after.

The teams that reach product-market fit fastest are rarely the ones with the best initial plan. They’re the ones with the most disciplined process for updating their assumptions. Constraint sharpens creativity. Imperfect releases produce real data. And real data, honestly interpreted, is the only thing that actually moves a product forward.

The uncomfortable truth is that success in startup product development is less about what you build and more about how quickly and honestly you learn from it.

Accelerate your product strategy with proven development partners

Executing these frameworks is hard enough with a full team. For many startups, the real constraint isn’t strategy. It’s engineering capacity and the technical depth needed to build, instrument, and scale quickly.

At Meduzzen, we work with startup founders and product leaders who are ready to move from frameworks to execution. Our pre-vetted engineers integrate directly into your team, bringing deep expertise in Python, AI, DevOps, cloud infrastructure, and modern web technologies. Whether you need a dedicated development team, staff augmentation to extend your existing engineers, or end-to-end product development from MVP to scale, we’ve helped teams in FinTech, Healthcare, EdTech, and Logistics do exactly that. With 150+ engineers and over 10 years of experience, we’re built for the kind of long-term, trust-based partnerships that produce real results. Explore our services and see how we can help you ship faster and learn smarter.

Frequently asked questions

What is the most important metric when developing an MVP?

The most important metric is the one that measures your riskiest assumption. As the Build-Measure-Learn loop makes clear, your MVP exists to test what must be true for your business to succeed, so measure that first.

How can startups avoid confirmation bias in product development?

Design the ‘measure’ step before building and commit to objective success criteria in advance. Confirmation bias is most likely to take hold when the measurement plan is created after the results are already in.

Why integrate discovery and delivery rather than keep them separate?

Integrated discovery and delivery reduce miscommunication and improve how ideas are prioritized and shipped. Tightly connected teams make faster, better-informed decisions because everyone shares the same customer context.

Are European startups really matching US speed and innovation now?

Yes. European startups are now matching US velocity, with 85% using AI to build their MVPs, a sharp rise from 30% five years earlier.