
Many AI projects fail because startups skip foundational steps like setting clear goals and preparing quality data. Building AI into your SaaS product isn’t plug-and-play. It demands systematic preparation, the right team, and a proven development process. This guide walks you through each phase from prerequisites to launch so you can build AI solutions that scale, deliver measurable results, and avoid costly mistakes.

Key takeaways

| Point | Details |
|---|---|
| Prerequisites set the foundation | Clear goals, quality data, cross-functional teams, and compliance readiness are non-negotiable before starting AI development. |
| Follow a stepwise development process | Define use case, prepare data, select models, integrate, test, and iterate to build scalable AI SaaS features. |
| Avoid common mistakes | Data bias, poor labeling, weak integration, and non-compliance derail projects; plan mitigation strategies early. |
| Measure success with KPIs | Track engagement uplift, error reduction, cost savings, and system uptime to validate AI impact and guide improvements. |

Prerequisites: what you need before starting

Before writing a single line of code, you need to lay the groundwork. Skipping this phase is why many AI projects stall or fail outright.

Start by setting clear AI project goals that align with your business vision. Define what problem you’re solving and how AI will improve your product. Vague objectives like “add AI” won’t cut it. You need measurable targets such as reducing support ticket volume by 20% or personalizing recommendations to boost engagement by 15%.
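A target like "reduce support ticket volume by 20%" is only testable if the baseline and the direction of change are written down. The sketch below shows one minimal way to encode that. The `MetricGoal` class and its fields are hypothetical, not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricGoal:
    """A measurable AI goal: move a metric by a target percentage from its baseline.

    Hypothetical helper for illustration only.
    """

    name: str
    baseline: float
    target_change_pct: float  # e.g. -20.0 for "reduce by 20%", +15.0 for "increase by 15%"

    def change_pct(self, current: float) -> float:
        # Relative change of the current value versus the baseline, in percent.
        return (current - self.baseline) / self.baseline * 100.0

    def is_met(self, current: float) -> bool:
        change = self.change_pct(current)
        # Reduction goals are met at or below the (negative) target;
        # increase goals are met at or above the (positive) target.
        if self.target_change_pct < 0:
            return change <= self.target_change_pct
        return change >= self.target_change_pct


tickets = MetricGoal("weekly support tickets", baseline=1000, target_change_pct=-20.0)
print(tickets.change_pct(780), tickets.is_met(780))
```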

Next, establish a robust data strategy. Data preparation, including collection, cleaning, and labeling, typically consumes the majority of AI development time; estimates of around 80% are commonly cited. Without high-quality data, your AI model will underperform. Plan how you’ll source data, ensure accuracy, and maintain labeling consistency. Consider privacy regulations from day one.

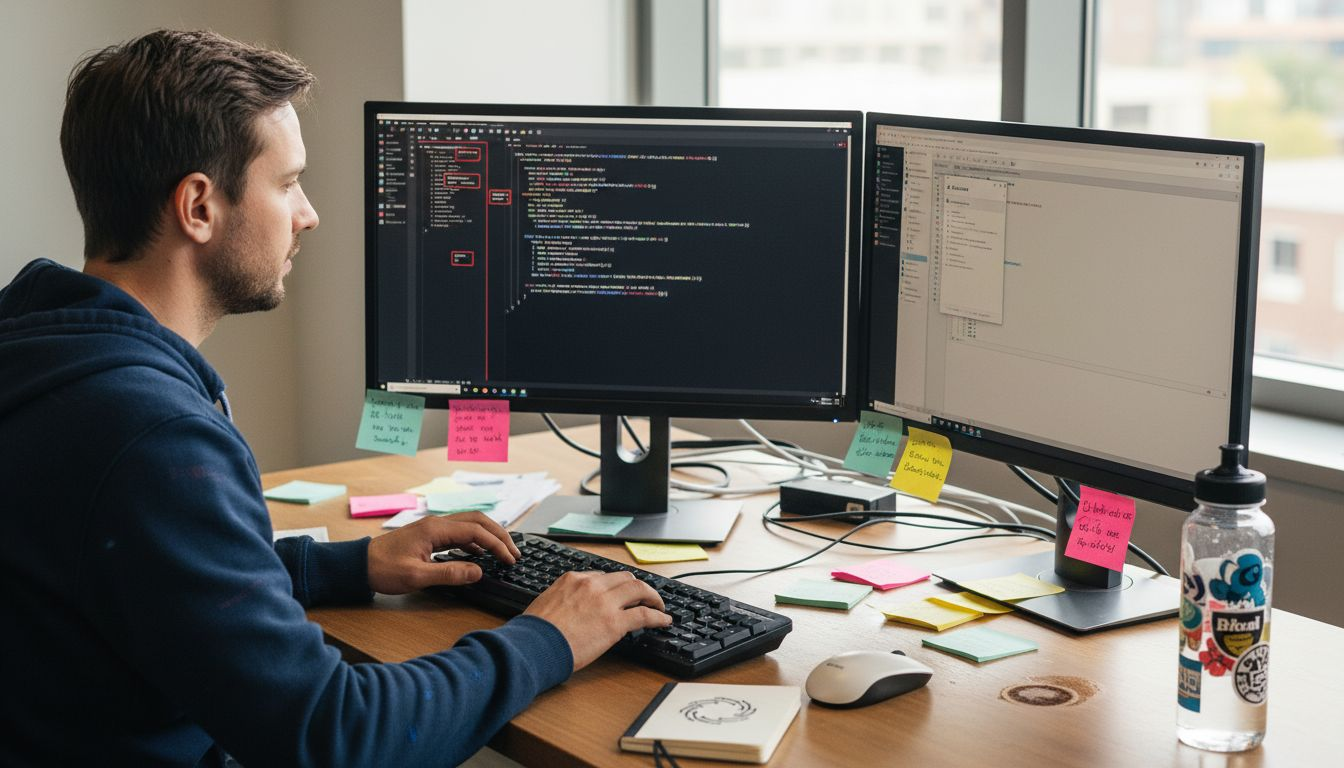

Build a cross-functional team that includes AI engineers, data scientists, product managers, and compliance experts. AI projects demand diverse skills. Engineers build models, data scientists refine algorithms, product managers translate business needs, and compliance experts navigate GDPR and privacy laws. No single role can handle it all.

Prepare for regulatory compliance early. GDPR, CCPA, and industry-specific regulations shape how you collect, store, and use data. Embedding compliance into your design prevents costly rework and legal risks later.

“The importance of data preparation for AI cannot be overstated. Clean, labeled data is the foundation of model accuracy and project success.”

Key preparation steps:

- Define measurable AI goals tied to business outcomes

- Build a data pipeline for collection, cleaning, and labeling

- Assemble a team covering AI, data, product, and compliance

- Map regulatory requirements and design for compliance

Step 1: define the AI use case and feasibility

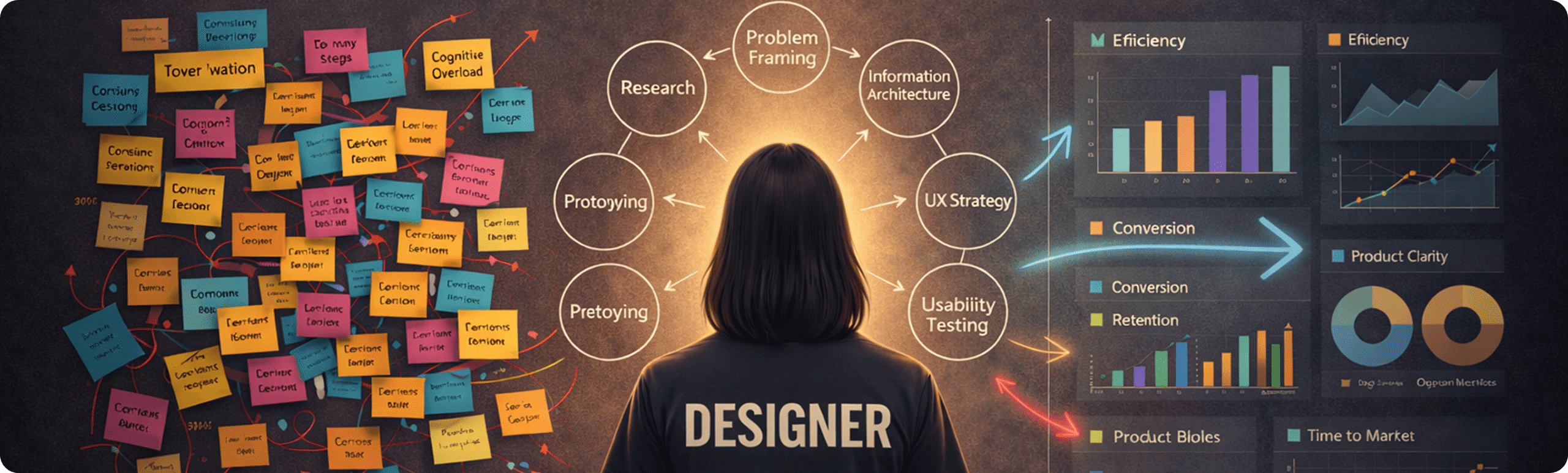

With your foundation in place, identify a practical AI use case that delivers real business value. Start by framing the problem. Focus on user pain points and business impact. For example, if customer churn is high, an AI-driven retention tool could predict at-risk users and trigger interventions.

Assess feasibility by evaluating data availability and quality. Do you have enough labeled data to train a model? If not, can you acquire or generate it through augmentation? Also consider technical resources. Does your team have the skills to build and maintain a custom model, or should you use third-party APIs?
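A quick way to answer "do you have enough labeled data?" is to count labeled examples per class before committing to a model. The sketch below assumes labels are stored as a flat list with `None` for unlabeled rows. The function name and the `min_per_class` threshold are hypothetical; the right threshold depends on your task.

```python
from collections import Counter


def label_readiness(labels, min_per_class, expected_classes=()):
    """Summarize whether a labeled dataset looks large enough to train on.

    labels: one entry per example, with None marking rows that are still unlabeled.
    min_per_class: a project-specific threshold you choose; there is no universal value.
    expected_classes: classes you need, so a class with zero examples is still reported.
    """
    labeled = [label for label in labels if label is not None]
    counts = Counter(labeled)
    for cls in expected_classes:
        counts.setdefault(cls, 0)  # make missing classes visible as 0
    under = {cls: n for cls, n in counts.items() if n < min_per_class}
    coverage = len(labeled) / len(labels) if labels else 0.0
    return {"coverage": coverage, "counts": dict(counts), "under_threshold": under}
```

Classes that show up in `under_threshold` are candidates for more labeling, augmentation, or a simpler problem framing.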

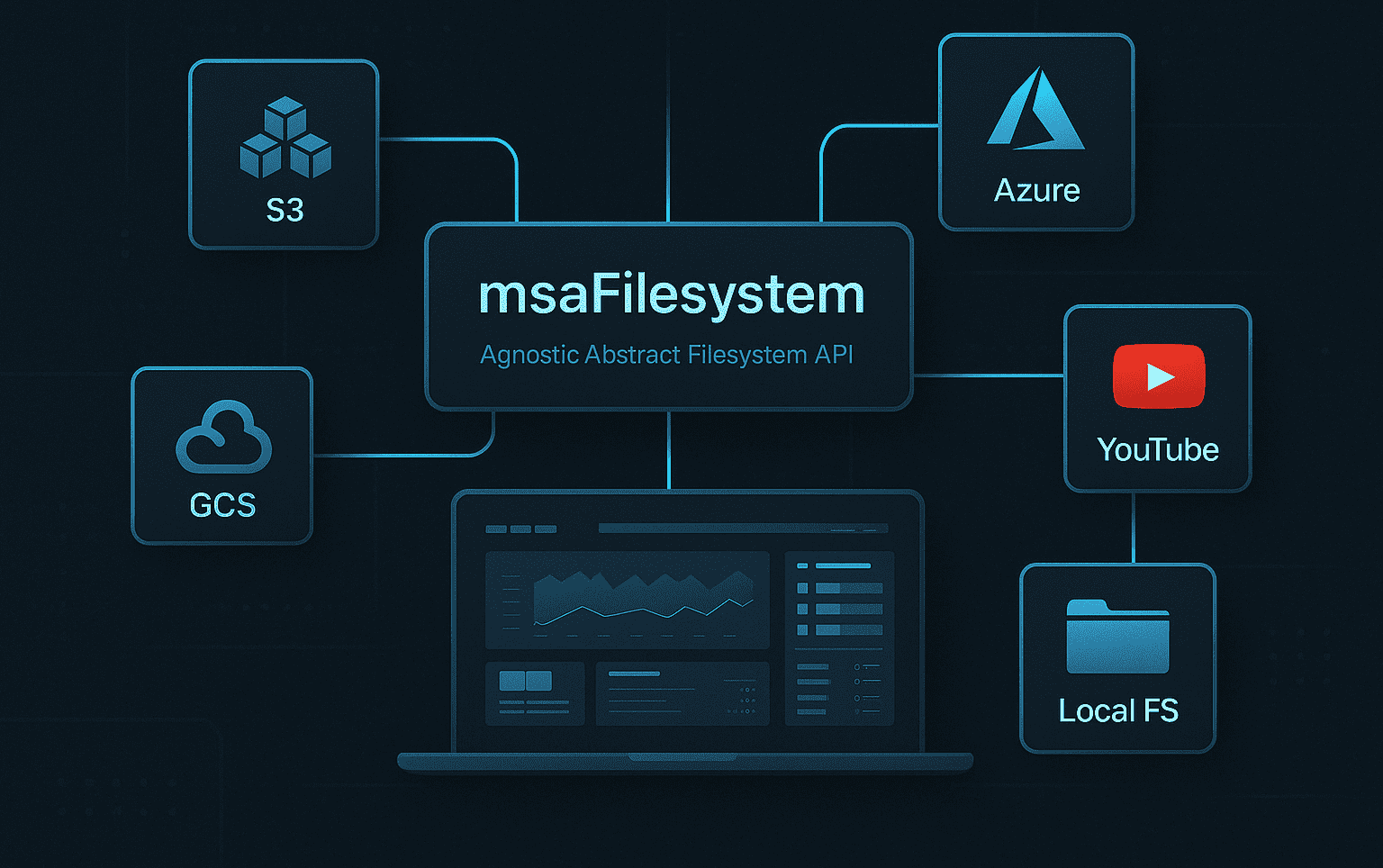

Decide between custom AI models and third-party APIs. Custom models offer control, tailored performance, and competitive differentiation. But they require significant engineering effort, ongoing maintenance, and infrastructure. APIs from providers like OpenAI or Google Cloud deliver speed and lower upfront cost. However, you sacrifice customization and may face vendor lock-in.

Consider your startup context. If rapid time-to-market is critical, APIs accelerate deployment. If you’re building a unique competitive advantage or need full control over data, custom models make sense. Many startups begin with APIs to validate the use case, then invest in custom solutions as they scale.
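Starting with an API and moving to a custom model later is easier if product code depends on a narrow interface rather than on a vendor SDK. The sketch below illustrates the idea with the churn example from this step. All class names, the `call_api` function, and the scoring weights are hypothetical placeholders, not a real provider's API or a real model.

```python
from typing import Callable, Protocol


class ChurnScorer(Protocol):
    """Anything that returns a churn-risk score in [0, 1] for a user's features."""

    def score(self, features: dict[str, float]) -> float: ...


class ApiChurnScorer:
    """Wraps a third-party API; `call_api` is a hypothetical client function you supply."""

    def __init__(self, call_api: Callable[[dict[str, float]], float]) -> None:
        self._call_api = call_api

    def score(self, features: dict[str, float]) -> float:
        return self._call_api(features)


class RuleBasedChurnScorer:
    """Stand-in for an in-house model: hand-picked weights, purely illustrative."""

    def score(self, features: dict[str, float]) -> float:
        inactivity = min(features.get("days_since_login", 0.0) / 30.0, 1.0)
        low_usage = 1.0 - min(features.get("weekly_sessions", 0.0) / 5.0, 1.0)
        return 0.6 * inactivity + 0.4 * low_usage


def flag_at_risk(scorer: ChurnScorer, users: dict[str, dict[str, float]],
                 threshold: float = 0.7) -> list[str]:
    # Product code only sees the ChurnScorer interface, so replacing the API
    # with a custom model later does not require changes here.
    return sorted(uid for uid, feats in users.items() if scorer.score(feats) >= threshold)
```

The design choice is the seam, not the scoring logic: both implementations can run side by side during a migration.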

AI services for startups can help you navigate these tradeoffs and choose the right path for your product roadmap. Similarly, robust SaaS development services ensure AI features integrate seamlessly into your platform.

Defining your use case checklist:

- Identify a specific user problem with measurable business impact

- Verify data quality, quantity, and labeling readiness

- Evaluate team skills and infrastructure for custom vs. API approaches

- Align use case with startup priorities: speed, differentiation, or scalability

A well-chosen use case targets a problem where AI delivers clear, quantifiable value, rather than adding AI for its own sake.

Step 2: data preparation and model selection

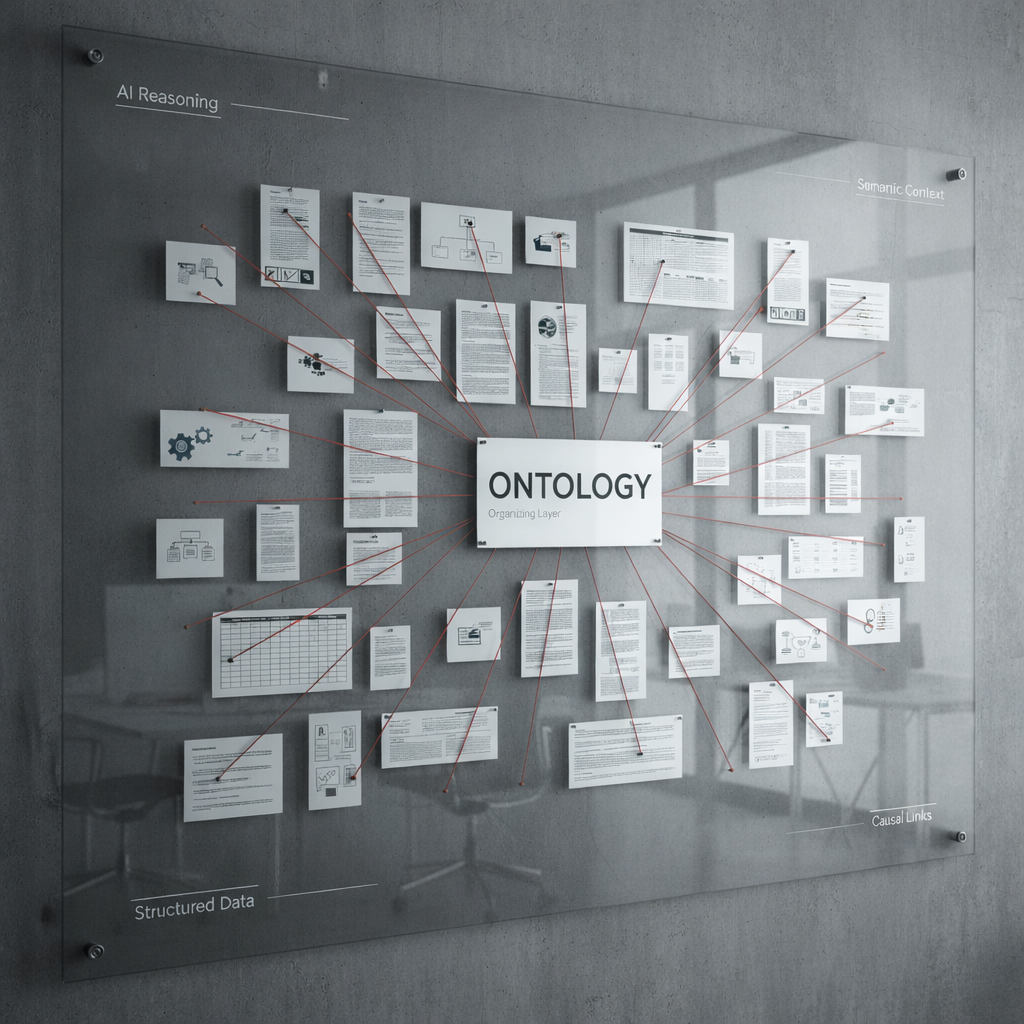

Once your use case is defined, shift focus to preparing high-quality data and selecting the right AI model. Data preparation is the most time-consuming phase, yet it determines model accuracy.

Start by collecting relevant data from your SaaS application, user interactions, third-party sources, or internal databases. Clean the data by removing duplicates, correcting errors, and handling missing values. Label data consistently, especially for supervised learning tasks. If your dataset is small, expand it with data augmentation or synthetic data generation, or use transfer learning so that a pretrained model needs fewer labeled examples.
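The cleaning steps above (fixing simple errors, handling missing values, removing duplicates) can be sketched in plain Python for flat event records. The field names `user_id`, `event`, and `plan`, and the default values, are hypothetical; a real pipeline would usually do this in a dataframe or SQL layer.

```python
def clean_records(records, required=("user_id", "event"), defaults=None):
    """Normalize, validate, fill, and deduplicate raw event records.

    Field names and defaults are hypothetical; adapt them to your own schema.
    Assumes flat records with hashable values (strings, numbers, None).
    """
    defaults = defaults or {}
    seen = set()
    cleaned = []
    for record in records:
        # Correct a common entry error: stray whitespace around string values.
        row = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
        # Drop rows missing a required field (absent, None, or empty after stripping).
        if any(row.get(field) in (None, "") for field in required):
            continue
        # Fill missing optional fields with explicit defaults.
        for field, value in defaults.items():
            if row.get(field) is None:
                row[field] = value
        # Exact-duplicate check on the cleaned row (keys are unique, so sorting is safe).
        fingerprint = tuple(sorted(row.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        cleaned.append(row)
    return cleaned
```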

Pro Tip: Speed up labeling with annotation tools like Label Studio or Amazon SageMaker Ground Truth, and script repetitive cleaning steps, to reduce manual effort and improve consistency.

Because data preparation consumes the bulk of the effort, investing here pays dividends in model performance and reduces debugging later.

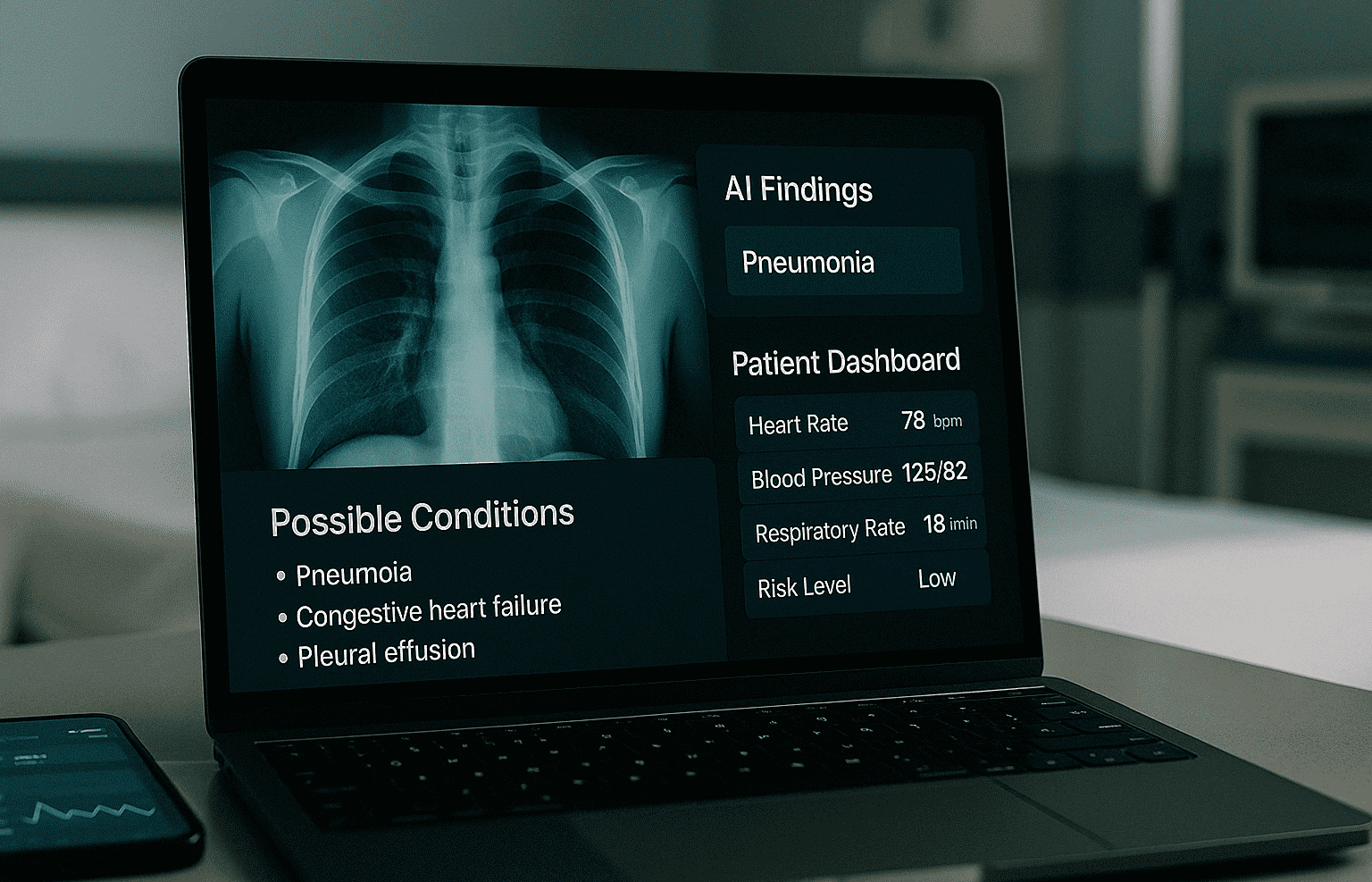

Next, select an AI model or API that fits your needs. For custom models, choose architectures based on your task: convolutional neural networks or vision transformers for image recognition, transformer models for natural language processing, or tree-based models such as gradient-boosted decision trees for structured, tabular data. Consider model size, training time, and inference speed. Lightweight models run faster but may sacrifice accuracy.

If using APIs, evaluate providers on cost, latency, data privacy, and feature completeness. Test multiple options with real data before committing.
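Latency is the easiest of these criteria to measure yourself. The harness below is a minimal sketch: it sends the same real samples to each provider and reports median and worst-case latency. The provider names and wrapper functions are hypothetical placeholders; in practice each wrapper would call that vendor's SDK. Cost, privacy, and feature coverage still need a separate review.

```python
import statistics
import time
from typing import Callable, Sequence


def benchmark(providers: dict[str, Callable[[str], object]],
              samples: Sequence[str], runs: int = 3) -> dict[str, dict[str, float]]:
    """Time each provider on identical inputs; returns median/max latency in milliseconds."""
    report = {}
    for name, call in providers.items():
        timings = []
        for _ in range(runs):
            for sample in samples:
                start = time.perf_counter()
                call(sample)  # the response could also be stored for quality review
                timings.append((time.perf_counter() - start) * 1000.0)
        report[name] = {
            "median_ms": statistics.median(timings),
            "max_ms": max(timings),
            "calls": len(timings),
        }
    return report
```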

Balance scalability, cost, and maintenance. Custom models scale with your infrastructure but require ongoing tuning and retraining. APIs scale automatically but costs rise with usage. Plan for both scenarios.

Data preparation workflow:

- Collect data from relevant sources and validate completeness

- Clean data by removing errors, duplicates, and outliers

- Label data consistently using annotation tools or crowdsourcing

- Augment limited datasets with synthetic data, or use transfer learning to reduce how much labeled data you need

- Split data into training, validation, and test sets for model evaluation
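The final step of the workflow, splitting into training, validation, and test sets, can be sketched as a seeded shuffle followed by slicing. The 70/15/15 ratios are a common starting point, not a rule, and the function name is hypothetical.

```python
import random


def split_dataset(rows, train=0.7, val=0.15, seed=42):
    """Shuffle once with a fixed seed, then slice into train/validation/test sets."""
    if not 0 < train < 1 or not 0 <= val < 1 or train + val >= 1:
        raise ValueError("need 0 < train < 1, 0 <= val < 1, and train + val < 1")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split reproducible
    n_train = int(len(shuffled) * train)
    n_val = int(len(shuffled) * val)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],  # the remainder becomes the test set
    )
```

For time-ordered data such as churn events, splitting by date instead of at random avoids training on information from the future.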

Data analytics consulting can streamline this process, ensuring you build on a solid data foundation. Understanding critical data preparation processes helps you avoid common pitfalls that derail AI projects.

Step 3: develop, integrate, and test AI solution

With data and model selection complete, build and integrate your AI solution into your SaaS platform. Use iterative prototyping to accelerate development and improve fit; by some estimates it can shorten AI project timelines by up to 30%. Start with a minimum viable model, test it with real users, gather feedback, and refine.

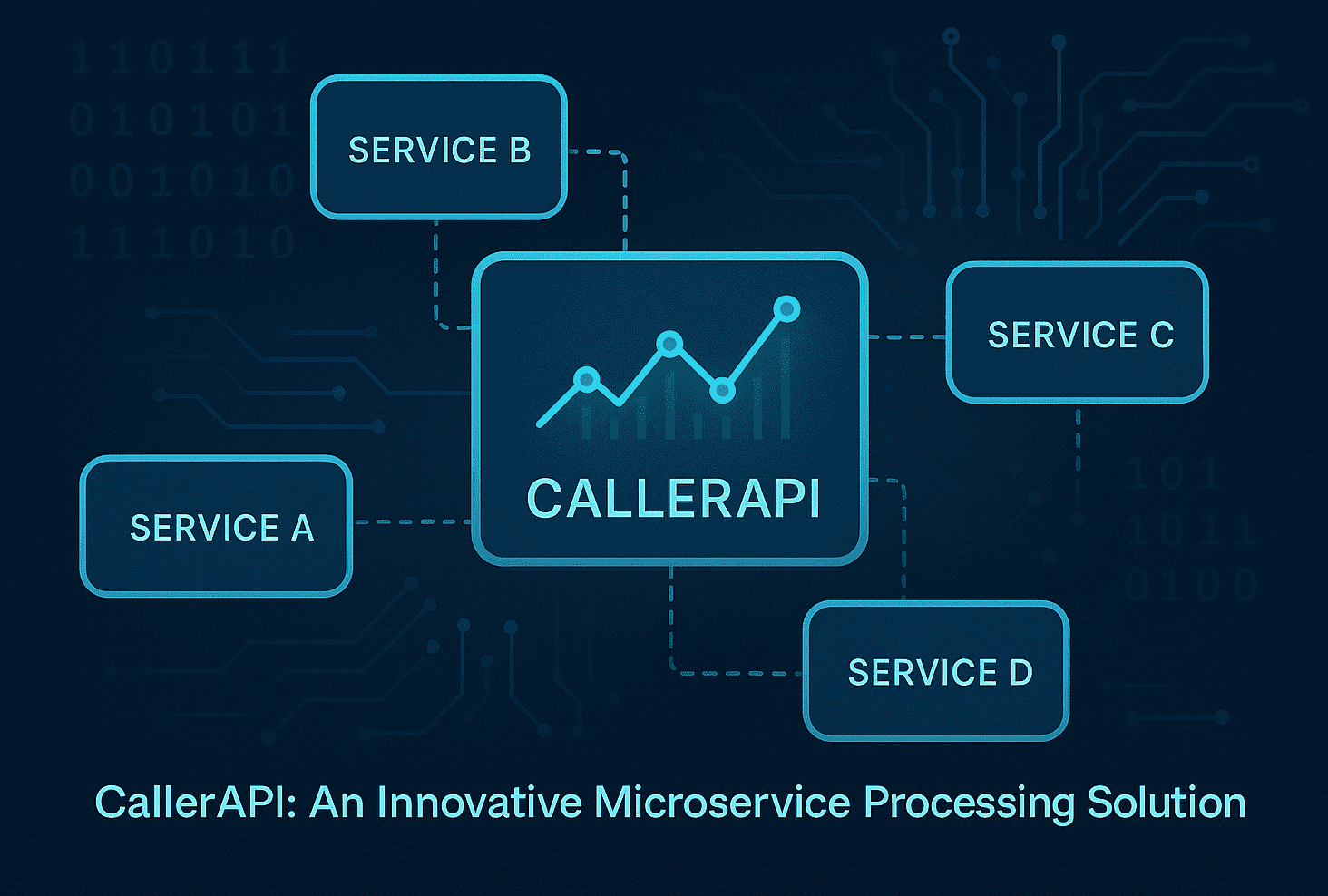

Integrate AI via robust APIs to maintain system stability. Design APIs that handle failures gracefully, include retry logic, and return meaningful error messages. Ensure your SaaS infrastructure can scale to support AI inference loads without degrading performance.
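Retry logic with a graceful fallback can be sketched as follows. Which exceptions count as transient is an assumption here (`TimeoutError` and `ConnectionError`); adjust `retry_on` to whatever your HTTP client actually raises. The function name and defaults are hypothetical.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    call: Callable[[], T],
    fallback: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.2,
    retry_on: tuple[type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry transient failures with exponential backoff and jitter, then fall back."""
    for attempt in range(attempts):
        try:
            return call()
        except retry_on:
            if attempt == attempts - 1:
                break
            # Exponential backoff (base, 2*base, 4*base, ...) plus random jitter
            # so many clients do not retry in lockstep.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    # Every attempt failed: return a safe default instead of an error page.
    return fallback()
```

Non-transient errors (for example a `ValueError` from bad input) are not caught and still propagate, which keeps real bugs visible.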

Monitor model performance continuously. Track metrics like accuracy, precision, recall, and latency. Retrain models regularly as new data arrives to counter model drift, and set up automated pipelines for retraining and deployment.
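For a binary classifier, accuracy, precision, and recall can be computed from a labeled sample of production predictions and compared against agreed floors to trigger retraining. This is a minimal sketch; the function names and the floor values in any call are hypothetical.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, and recall for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs) if pairs else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,  # of flagged cases, how many were right
        "recall": tp / (tp + fn) if tp + fn else 0.0,  # of real cases, how many were flagged
    }


def needs_retraining(metrics, floors):
    """Return the names of metrics that fell below their floor."""
    return sorted(name for name, floor in floors.items() if metrics[name] < floor)
```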

Mitigate bias through detection tools and diverse training data. Bias creeps in when datasets don’t represent your user base or when labeling reflects human prejudice. Use fairness evaluation libraries like AI Fairness 360 to detect and correct bias before launch.
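Fairness libraries such as AI Fairness 360 compute group metrics like statistical parity difference. The plain-Python sketch below computes a closely related quantity, the largest gap in positive-prediction rate between any two groups; it does not use the AIF360 API, and the function names are hypothetical.

```python
from collections import defaultdict


def positive_rates(groups, predictions, positive=1):
    """Share of positive predictions per group (e.g. per region or plan tier)."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += 1 if pred == positive else 0
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(groups, predictions, positive=1):
    """Largest difference in positive-prediction rate between any two groups (0 = parity)."""
    rates = positive_rates(groups, predictions, positive)
    return max(rates.values()) - min(rates.values())
```

A large gap is a signal to investigate, not proof of bias on its own: demographic parity is only one of several fairness definitions, and the right one depends on the use case.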

Pro Tip: Build a shadow deployment where your AI model runs in parallel with existing systems without affecting users. Compare outputs to validate accuracy and catch issues before full rollout.
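A shadow deployment can be sketched as a wrapper that always returns the production result while silently running the candidate model and recording agreement. For simplicity this sketch runs the shadow call inline; a real deployment would usually run it asynchronously so it adds no user-facing latency. The `ShadowRunner` class is hypothetical.

```python
import logging
from typing import Callable

logger = logging.getLogger("shadow")


class ShadowRunner:
    """Serve the production result; run the candidate silently and track agreement."""

    def __init__(self, production: Callable[[dict], str], candidate: Callable[[dict], str]) -> None:
        self.production = production
        self.candidate = candidate
        self.compared = 0
        self.agreements = 0
        self.candidate_errors = 0

    def handle(self, request: dict) -> str:
        result = self.production(request)
        try:
            shadow = self.candidate(request)
        except Exception:  # a failing shadow model must never affect the user
            self.candidate_errors += 1
            logger.exception("shadow model failed")
        else:
            self.compared += 1
            if shadow == result:
                self.agreements += 1
            else:
                logger.info("disagreement: prod=%r shadow=%r", result, shadow)
        return result

    @property
    def agreement_rate(self) -> float:
        return self.agreements / self.compared if self.compared else 0.0
```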

Key integration steps:

- Prototype iteratively, starting with a simple model and adding complexity as needed

- Design APIs with error handling, rate limiting, and monitoring

- Automate model retraining pipelines to maintain accuracy over time

- Test bias and fairness using diverse datasets and evaluation tools
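Rate limiting from the list above is often implemented as a token bucket, which allows short bursts while capping the sustained request rate to an expensive AI endpoint. The sketch below is a minimal single-threaded version; the class name is hypothetical, and the injectable `clock` exists only to make it testable.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity`, refilled continuously at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill for the time elapsed since the last check, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

In a multi-tenant SaaS product you would typically keep one bucket per tenant or API key so one heavy user cannot exhaust shared inference capacity.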

| Approach | Integration Complexity | Control & Customization | Maintenance Effort | Time to Market |
|---|---|---|---|---|
| Custom AI Model | High | Full control, tailored features | Ongoing retraining and tuning | 3-6 months |
| Third-Party API | Low | Limited customization | Minimal, handled by vendor | 1-2 months |

Cloud software integration ensures your AI features run reliably at scale. Follow SaaS technical best practices to optimize performance and user experience. Adopting iterative AI prototyping accelerates delivery and reduces risk.

Step 4: launch, measure, and iterate

After integration and testing, deploy your AI solution with defined KPIs to measure impact. Successful launches go beyond just pushing code. You need a measurement framework to validate performance and guide future improvements.

Deploy AI features gradually using phased rollouts or A/B testing. Start with a small user segment, monitor behavior and system performance, then expand. This minimizes risk and lets you catch issues early.
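Phased rollouts are commonly implemented by hashing a stable user ID into a fixed number of buckets, so each user keeps the same assignment across sessions and raising the percentage only adds users. The sketch below uses SHA-256 for its even spread, not for security; the function name and the 10,000-bucket granularity are assumptions.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: float) -> bool:
    """Return True if this user falls in the first `percent`% of 10,000 buckets."""
    # Including the feature name keeps assignments independent across features.
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < percent * 100
```

Because the bucket never changes, everyone in a 10% rollout is still included at 25%, which keeps the early cohort's experience consistent.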

Track user engagement uplift and error reduction as primary success metrics. For example, measure whether AI-powered recommendations increase click-through rates by 15% or whether automated support resolves 30% more tickets without escalation. Monitor error rates and response times to ensure AI features don’t degrade the user experience.
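Before crediting a click-through uplift to the AI feature, it is worth checking that the difference between A/B arms is unlikely to be noise. The sketch below computes relative uplift and a standard two-sided two-proportion z-test; the function name is hypothetical and the inputs in any example are illustrative, not benchmark data.

```python
import math


def ctr_uplift(control_clicks, control_views, treat_clicks, treat_views):
    """Relative CTR uplift of the AI variant, z statistic, and two-sided p-value."""
    p_c = control_clicks / control_views
    p_t = treat_clicks / treat_views
    uplift = (p_t - p_c) / p_c
    # Pooled proportion under the null hypothesis that both arms share one CTR.
    pooled = (control_clicks + treat_clicks) / (control_views + treat_views)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_views + 1 / treat_views))
    z = (p_t - p_c) / se
    # Two-sided p-value from the standard normal distribution: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return uplift, z, p_value
```

For example, 500 versus 575 clicks on 10,000 views per arm is a 15% relative uplift. Fix the sample size before the test starts rather than stopping at the first significant result.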

Use user feedback to guide continuous improvements. Collect qualitative feedback through surveys, support tickets, and user interviews. Combine this with quantitative data to identify what’s working and what needs refinement.

Plan iterations to maintain AI relevance and accuracy. AI models degrade over time as user behavior and data distributions shift. Schedule regular retraining cycles, review model performance dashboards, and update features based on evolving user needs.
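One common way to detect that data distributions have shifted is the population stability index (PSI), which compares a feature's training-time distribution with its recent production distribution. The sketch below is a minimal version with equal-width bins taken from the training data; the function name and bin count are assumptions. A commonly used rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating or retraining on.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a training-time sample (`expected`) and a production sample (`actual`)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range production values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```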

Launch and measurement checklist:

- Deploy AI features using phased rollouts or A/B testing

- Define KPIs tied to business goals: engagement, conversion, support efficiency

- Monitor performance metrics daily during early rollout phases

- Establish feedback loops combining qualitative user input and quantitative data

- Schedule regular model retraining and feature updates

A well-executed SaaS growth strategy uses AI to drive user retention and revenue growth. For inspiration on tracking AI performance, review an example of AI success metrics that shows how analytics guide product decisions.

Common mistakes and troubleshooting

Even experienced teams encounter pitfalls. Recognizing these errors early saves time and resources.

Avoid data bias by diversifying training sets and using bias detection tools. Biased models produce unfair or inaccurate results, damaging user trust. Regularly audit training data for representation gaps and apply fairness constraints during model training.

Ensure thorough labeling and sufficient data quantity. Poor labeling creates noisy training signals that confuse models. Insufficient data leads to overfitting where models memorize training examples but fail on new inputs. Invest in quality labeling and gather enough data to generalize well.

Improve explainability to build stakeholder trust. Black-box AI models alienate users and regulators. Use interpretable models or explainability frameworks like SHAP or LIME to show how decisions are made. Transparency builds confidence and simplifies debugging.

Design robust integration to minimize downtime. Weak API connections, missing error handling, and inadequate load testing cause outages. Implement circuit breakers, retries, and fallback mechanisms so your SaaS platform remains stable even if AI services hiccup.
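A circuit breaker complements retries: after repeated failures it stops calling the AI service for a cool-down period and serves a fallback immediately, then lets a trial call through to test recovery. The sketch below is a minimal single-threaded version; the class name and default thresholds are hypothetical, and the injectable `clock` exists for testing.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """States: closed (normal), open (skip calls), half-open (trial call after cool-down)."""

    def __init__(self, failure_threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_after:
            return "half-open"
        return "open"

    def call(self, func: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.state == "open":
            return fallback()  # fail fast without touching the struggling service
        try:
            result = func()
        except Exception:
            self.failures += 1
            # A failed trial call, or too many failures while closed, (re)opens the circuit.
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```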

Embed compliance from the start to avoid legal risks. Retrofitting privacy controls and consent management after launch is expensive and risky. Design data pipelines and AI workflows to comply with GDPR, CCPA, and industry regulations from day one.

Pro Tip: Conduct regular AI audits reviewing data quality, model performance, bias metrics, and compliance posture. Scheduled reviews catch issues before they escalate into major problems.

Common pitfalls and fixes:

- Data bias: Diversify training data and apply fairness evaluation tools

- Poor labeling: Use annotation platforms and enforce labeling guidelines

- Lack of explainability: Integrate interpretability frameworks and document decision logic

- Weak integration: Build resilient APIs with error handling and monitoring

- Non-compliance: Design for privacy and regulatory requirements from project start

Expected results and outcomes

Setting realistic expectations helps you plan resources and evaluate success accurately. AI projects vary in complexity, but typical timelines and outcomes provide useful benchmarks.

AI MVP delivery ranges from 3 to 6 months depending on use case complexity, data readiness, and team experience. Simple recommendation engines or chatbots land closer to 3 months. Advanced predictive models or computer vision applications take 6 months or more.

Expect 10% to 25% user engagement uplift when AI features are well-targeted. Personalized recommendations, intelligent search, and predictive content delivery keep users active and satisfied. The exact uplift depends on baseline engagement and how well AI addresses user needs.

Reduce errors by 15% to 30%, enhancing user experience. AI-powered validation, anomaly detection, and automated workflows catch mistakes humans miss. Lower error rates translate to fewer support tickets and higher customer satisfaction.

Achieve operational cost savings of 10% or more by automating repetitive tasks. AI handles data entry, ticket routing, and routine decision-making, freeing your team for strategic work. Cost savings compound as you scale.

Improve system uptime by 25% with solid AI integration. Predictive maintenance, intelligent load balancing, and proactive issue detection prevent outages. Reliable systems boost user trust and reduce churn.

| Metric | Typical Range | Impact on Business |
|---|---|---|
| AI MVP Delivery Time | 3-6 months | Faster time-to-market with iterative prototyping |
| User Engagement Uplift | 10-25% | Higher retention and product stickiness |
| Error Reduction | 15-30% | Improved user experience and lower support costs |
| Operational Cost Savings | 10%+ | Automation reduces manual effort and scales efficiently |
| System Uptime Improvement | 25% | Greater reliability and user trust |

These benchmarks guide planning and help you set achievable goals. Your results will vary based on execution quality, use case fit, and market dynamics.

How Meduzzen can help build your AI SaaS solution

Building AI solutions that scale requires expertise across engineering, data science, and product development. Meduzzen offers tailored AI services and SaaS application development to accelerate your AI journey from concept to launch.

Our multidisciplinary teams bring deep experience in Python, machine learning frameworks, cloud infrastructure, and compliance. We integrate seamlessly into your workflow, ensuring fast onboarding and transparent communication. Whether you need a dedicated AI team or end-to-end custom cloud software development, we deliver scalable solutions that drive measurable business results. Partner with experts to avoid common pitfalls and achieve faster, more predictable AI deployment.

FAQ

What skills are essential for building AI solutions in SaaS?

AI development requires AI engineering, data science, product management, and compliance knowledge. Engineers build and deploy models, data scientists refine algorithms and analyze performance, product managers translate business needs into technical requirements, and compliance experts navigate privacy regulations. Cross-functional collaboration is key because AI projects touch every part of your SaaS platform from data pipelines to user interfaces. No single role can handle it all, so building a balanced team accelerates success.

How long does it typically take to build an AI MVP for a SaaS product?

AI MVPs generally take between 3 and 6 months depending on complexity, data readiness, and team experience. Simple use cases like chatbots or basic recommendation engines land closer to 3 months. Advanced predictive models or computer vision features take 6 months or more. Iterative prototyping can shorten timelines by validating core functionality early and refining based on feedback.

What are common pitfalls to avoid in AI SaaS development?

Avoid data bias, poor data labeling, lack of explainability, weak integration, and non-compliance. Biased training data produces unfair results, poor labeling creates noisy signals, black-box models alienate users, fragile integrations cause downtime, and ignoring regulations invites legal trouble. Plan for these early by diversifying data, enforcing labeling standards, using interpretability tools, designing resilient APIs, and embedding compliance from day one to ensure smoother development.

How do I measure success after launching AI features?

Track engagement uplift, error reduction, operational cost savings, and system uptime improvements as primary KPIs. Monitor whether AI features increase user activity, reduce support tickets, automate manual tasks, and improve reliability. Use feedback loops that combine qualitative surveys with quantitative analytics to guide iterative enhancements. Regular performance reviews ensure your AI remains relevant and continues delivering value as user needs evolve.