The essential software engineering checklist for scaling in 2026

Apr 2, 2026

Discover the essential software engineering checklist for scaling in 2026, covering AI adoption, platform engineering, code review, and delivery benchmarks for fast-growing teams.

Keeping pace with 2026 engineering standards feels like building stability out of chaos. AI is reshaping delivery pipelines faster than most teams can adapt, platform engineering has moved from buzzword to baseline expectation, and the gap between high-performing and struggling teams is widening fast. AI is cutting lead time in half for low performers while elite teams already ship multiple times per day. If your team is scaling and you want to stay competitive, you need more than good intentions. You need a concrete checklist that reflects where the industry actually is right now.

Key Takeaways

| Point | Details |
|---|---|
| Benchmark for success | Elite teams ship faster, focus more on the roadmap, and embrace platform engineering and AI automation. |
| Prioritize automation safely | Integrate AI and automation with quality gates to boost delivery but keep bugs in check. |
| Platform engineering unlocks scale | Standardized platforms are essential for translating AI and DevOps investments into team-wide gains. |
| Culture beats checklist | Checklists alone fail; adapt principles to your team’s unique context for real improvement. |

Set clear benchmarks: Speed, delivery, and AI adoption

Before any checklist item can stick, you need a north star. Without clear numbers, “improving delivery” stays vague and unmeasurable. The good news is that 2026 has given us sharper benchmarks than ever before.

Here is what the data says top teams are hitting right now:

| Metric | Elite teams | Bottom quartile |
|---|---|---|
| Deployment frequency | Multiple times per day | Less than once per month |
| Lead time for changes | Under 1 day | Over 30 days |
| Focus time on roadmap | Over 41% | Under 20% |
| Planned work completed | Over 66% | Under 40% |

Top teams ship in under 22.5 days (a figure that evidently tracks a longer delivery horizon than the per-change lead time in the table), spend over 41% of their time on roadmap work, and complete more than 66% of planned items. Those numbers are not aspirational. They are the current baseline for teams that want to compete.

AI has shifted expectations significantly. 90% AI adoption among elite teams is now the norm, and the DORA 2025 report confirms that elite teams deploy multiple times per day. This means AI is no longer a differentiator. It is table stakes.

Here is what you should track weekly as a minimum:

- Deployment frequency: Are you shipping at least daily?

- Lead time for changes: From commit to production, is it under 24 hours?

- Change failure rate: Are fewer than 5% of deployments causing incidents?

- Time on roadmap: Is your team spending more than 40% of capacity on planned, strategic work?
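Three of these weekly numbers can be computed directly from deployment records. The sketch below is a minimal illustration, assuming you can export one record per production deployment. The `Deployment` shape, its field names, and the function names are hypothetical, not taken from any particular CI/CD tool. Time on roadmap is left out because it depends on planning or time-tracking data rather than deployment data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape; adapt it to whatever your pipeline exports.
    commit_at: datetime      # when the change was committed
    deployed_at: datetime    # when the change reached production
    caused_incident: bool    # whether the deployment triggered an incident


def weekly_metrics(deployments: list[Deployment], days: int = 7) -> dict:
    """Summarize one reporting window of production deployments."""
    if not deployments:
        return {
            "deploys_per_day": 0.0,
            "median_lead_time_hours": None,
            "change_failure_rate": None,
        }
    lead_times = [
        (d.deployed_at - d.commit_at).total_seconds() / 3600 for d in deployments
    ]
    failures = sum(d.caused_incident for d in deployments)
    return {
        "deploys_per_day": len(deployments) / days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": failures / len(deployments),
    }


def meets_targets(metrics: dict) -> dict:
    """Compare a window against the thresholds in the list above."""
    lead_time: Optional[float] = metrics["median_lead_time_hours"]
    failure_rate: Optional[float] = metrics["change_failure_rate"]
    return {
        "deployment_frequency": metrics["deploys_per_day"] >= 1,    # at least daily
        "lead_time": lead_time is not None and lead_time < 24,      # under 24 hours
        "change_failure_rate": failure_rate is not None and failure_rate < 0.05,
    }
```

Using the median rather than the mean for lead time is a design choice in this sketch: one long-running change will not mask an otherwise healthy week.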

These benchmarks apply whether you are building with modern web development tools or growing through scalable workflow strategies. The numbers give your checklist its teeth.

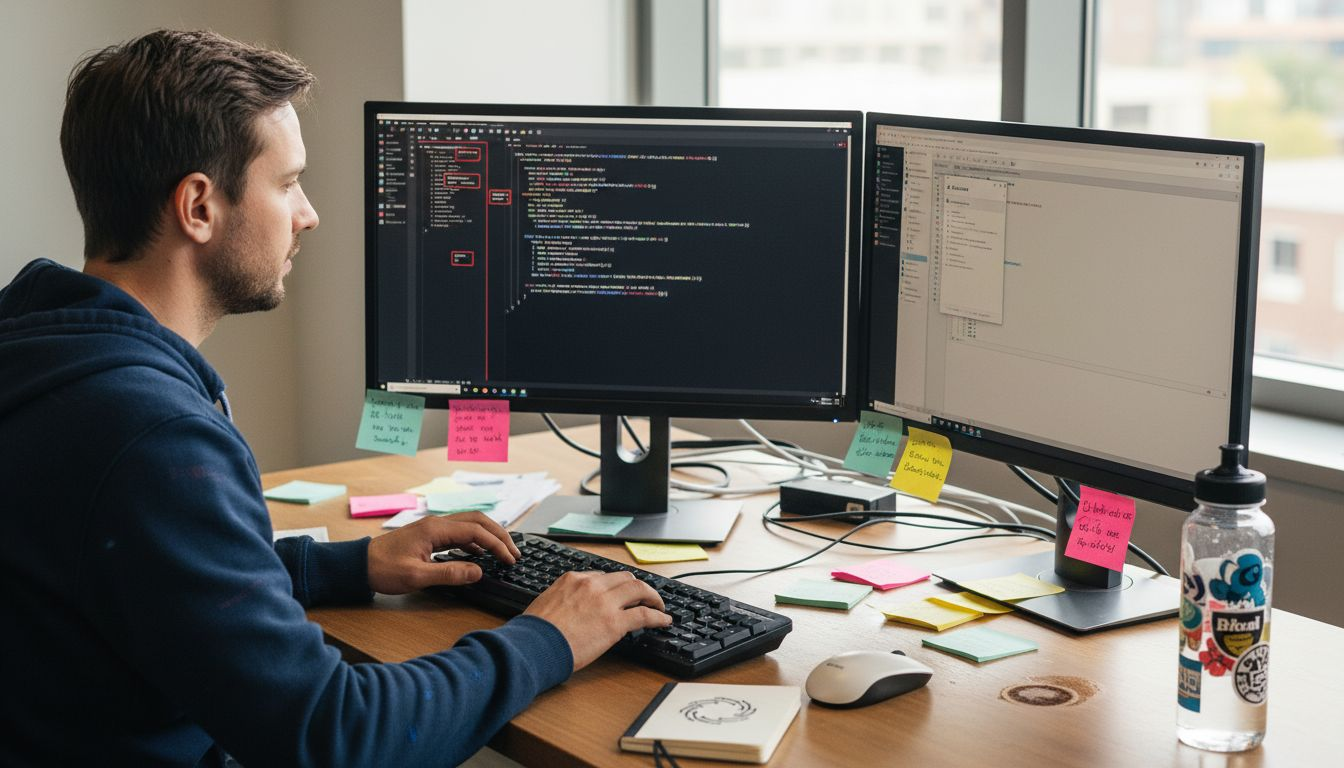

Checklist item 1: Adopt AI and automate your pipeline (without losing control)

With benchmarks as your target, the first and highest-leverage checklist item is automation and AI adoption. But this is where many teams stumble. They add AI tools without governance, and the pipeline gets faster but less stable.

Start by auditing your current pipeline for automation gaps. Common opportunities include:

- CI/CD automation: Are builds, tests, and deployments fully automated, or does someone still click a button?

- Automated testing coverage: Unit, integration, and end-to-end tests should run on every pull request.

- AI-assisted code generation: Tools like GitHub Copilot or Cursor can accelerate output, but they need guardrails.

- Automated security scanning: Static analysis and dependency checks should be part of every merge.

- Observability pipelines: Logs, traces, and metrics should flow automatically into your dashboards.

The risk is real. AI cuts lead time by 50% for low performers, but without proper management, it creates code review bottlenecks that slow teams down in unexpected ways. And the quality trade-off is measurable. AI-generated PRs have 1.7x more issues and 40% more critical bugs than human-written code.

This does not mean avoid AI. It means build the controls before you scale the usage. Your checklist for safe AI adoption should include:

- Governance policy: who approves AI tool usage and under what conditions?

- Human-in-the-loop quality gates: no AI-generated code merges without a senior review.

- Observability: can you trace a production issue back to an AI-generated change?
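The three controls above can be expressed as a single merge gate. What follows is a rough sketch, not a prescribed implementation. The `PullRequest` fields, the `can_merge` function, and the idea of labelling each PR with the AI tool that produced it are all assumptions made for illustration. In practice the data would come from your Git host's labels and review records.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical fields; populate them from your Git host's PR metadata.
    number: int
    ai_generated: bool                                    # set via a PR label or commit trailer
    ai_tool: Optional[str] = None                         # which AI tool produced the change
    approvals: list[str] = field(default_factory=list)    # usernames that approved


def can_merge(
    pr: PullRequest,
    senior_reviewers: set[str],
    approved_tools: set[str],
) -> tuple[bool, str]:
    """Apply the governance and human-in-the-loop rules to one PR."""
    if not pr.ai_generated:
        return True, "not AI-generated; standard review rules apply"
    # Governance policy: only tools that were explicitly approved may be used.
    if pr.ai_tool not in approved_tools:
        return False, "AI tool is not on the approved list"
    # Human-in-the-loop gate: at least one senior reviewer must have approved.
    if not senior_reviewers.intersection(pr.approvals):
        return False, "needs approval from a senior reviewer"
    return True, "AI-generated change approved by a senior reviewer"
```

Recording `ai_generated` and `ai_tool` on every PR is also what makes the observability question answerable later: a production incident can be traced to a PR number, and the PR states whether and how AI was involved.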

“The teams that win with AI are not the ones who adopt it fastest. They are the ones who build the feedback loops to catch what AI gets wrong.”

For deeper context on how AI is reshaping quality controls, the AI bug detection insights from our research team are worth reviewing. If you are building scalable AI SaaS, these controls become even more critical at scale.

Pro Tip: Treat AI adoption like a new team member. Onboard it carefully, review its work closely at first, and expand its responsibilities only as trust is earned.

Checklist item 2: Implement robust code review and quality gates

Adopting AI and automation means little without strong controls. Code review is where quality either gets protected or quietly abandoned under delivery pressure.

The data here is stark. Bottom-quartile teams spend 35+ hours waiting on code review, while elite teams keep PRs under 105 lines with cycle times under 48 hours. That is not a minor difference. It is the difference between a team that ships confidently and one that is always catching up.

Here is your code review checklist:

- PR size limits: Enforce a maximum of 200 lines per PR. Smaller PRs get reviewed faster and catch more bugs.

- Review SLAs: Set a 24-hour response expectation for all open PRs. Track it.

- Reviewer rotation: Avoid the same two people reviewing everything. Rotate to spread knowledge and reduce bottlenecks.

- Automated checks before human review: Linting, formatting, test coverage, and security scans should all pass before a human looks at the code.

- Bug fix monitoring: Track how often merged code introduces regressions. This tells you if your gates are actually working.
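The size limit and the review SLA are the two items here that reduce to simple checks. Here is a minimal sketch, assuming "lines per PR" means added plus deleted lines (teams count this differently) and that you can read when a PR was opened and when it got its first review. The constant and function names are illustrative.

```python
from datetime import datetime, timedelta
from typing import Optional

MAX_PR_LINES = 200                   # size limit from the checklist above
REVIEW_SLA = timedelta(hours=24)     # response expectation from the checklist above


def pr_size_ok(lines_added: int, lines_deleted: int) -> bool:
    """True if the PR is within the size limit (added + deleted lines)."""
    return lines_added + lines_deleted <= MAX_PR_LINES


def sla_breached(
    opened_at: datetime,
    first_review_at: Optional[datetime],
    now: datetime,
) -> bool:
    """True if the first review took, or is already taking, longer than the SLA."""
    # For PRs still waiting on review, measure the wait up to the current time.
    responded_at = first_review_at if first_review_at is not None else now
    return responded_at - opened_at > REVIEW_SLA
```

Running `pr_size_ok` as an automated check before human review, and reporting `sla_breached` counts weekly, keeps both rules visible without anyone policing them by hand.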

AI changes the review dynamic in ways many teams have not fully reckoned with. When developers generate code faster, the volume of PRs increases. But if review capacity stays the same, the bottleneck shifts from writing code to reviewing it. Elite teams solve this by investing in AI code review tooling that pre-screens AI-generated changes before they reach a human reviewer.

Pro Tip: If your average PR cycle time exceeds 48 hours, the problem is almost never reviewer laziness. It is usually PR size, unclear ownership, or missing automation. Fix the system, not the people.

Quality gates are not optional at scale. They are the mechanism that keeps your pipeline honest as team size and velocity increase.

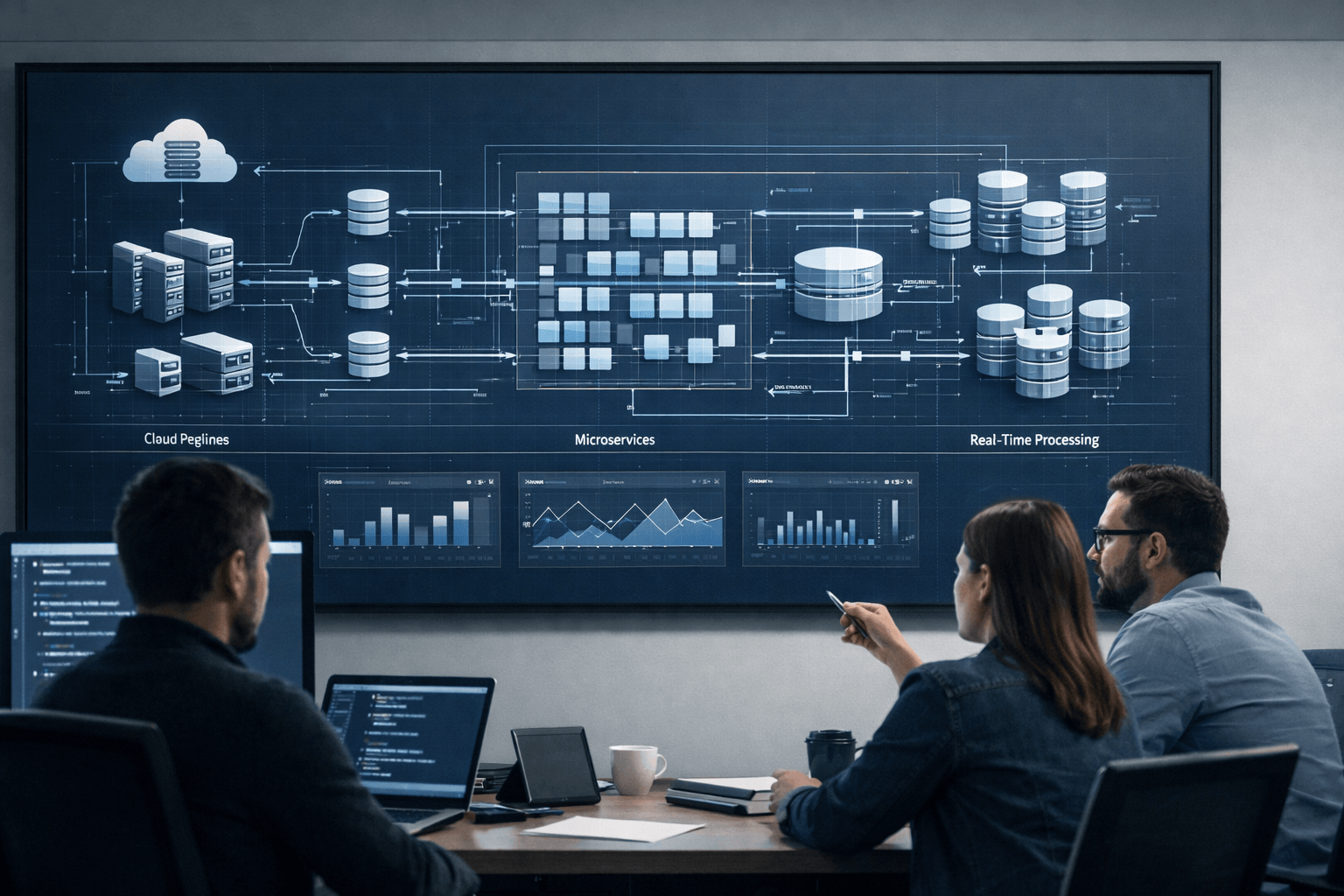

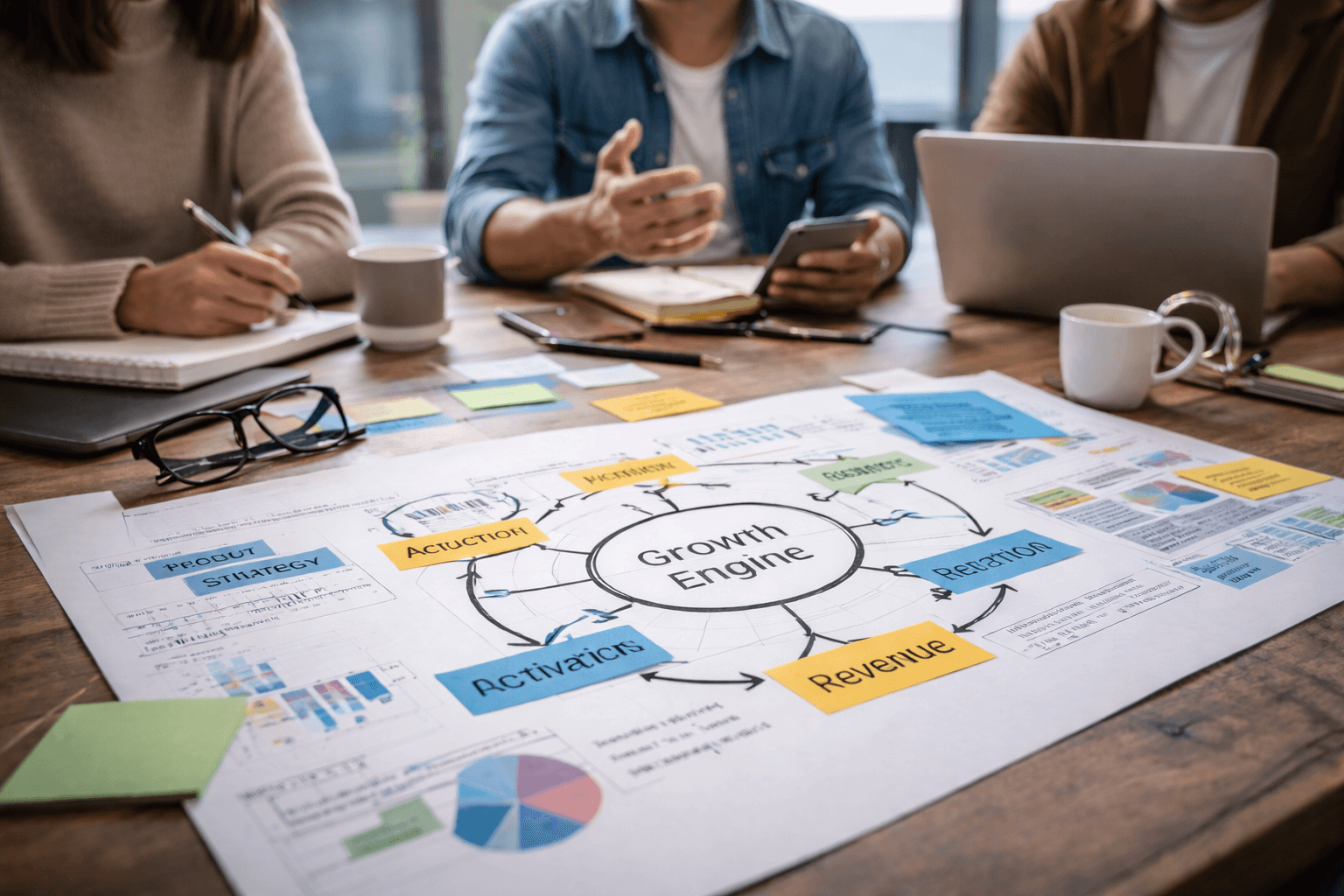

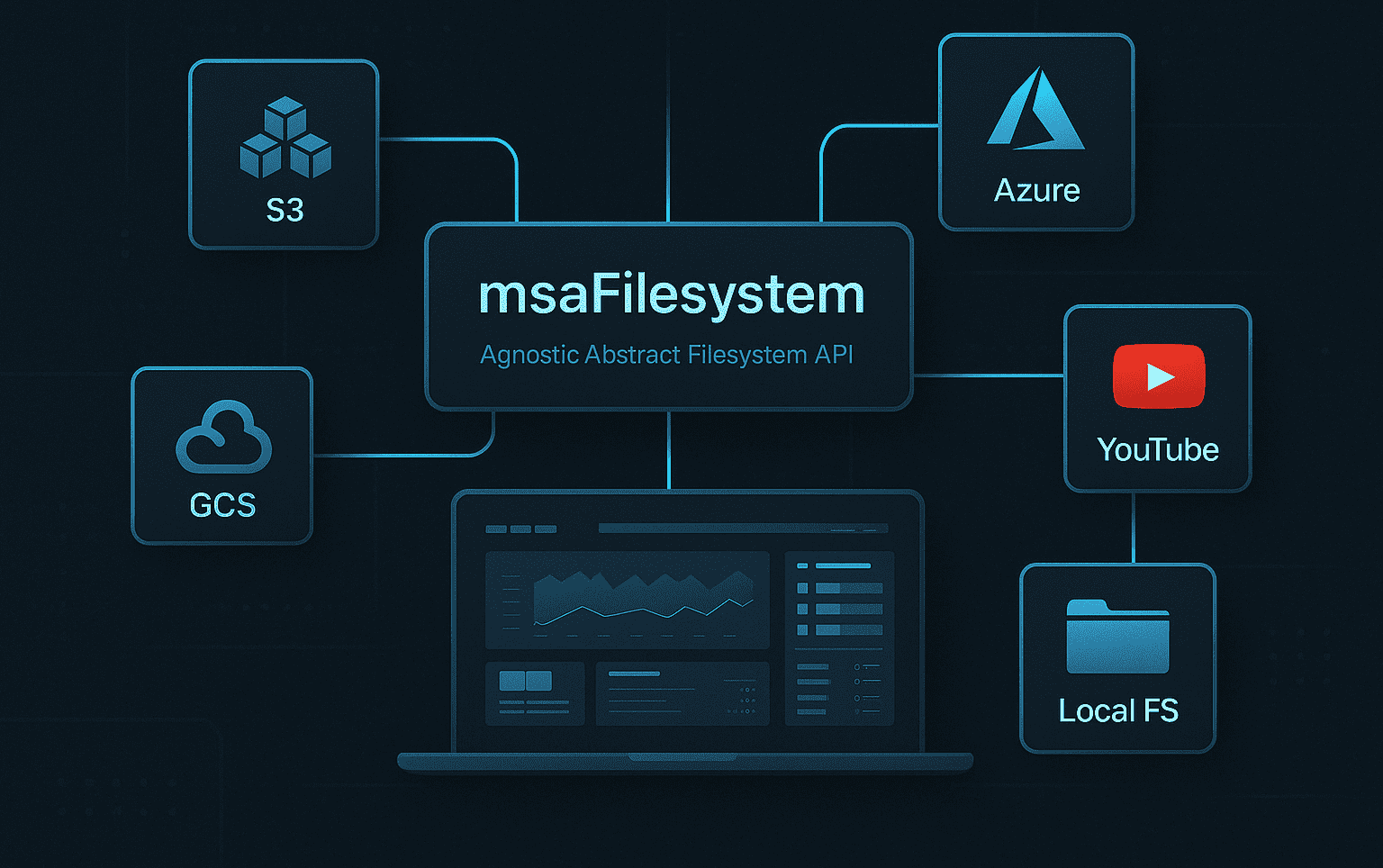

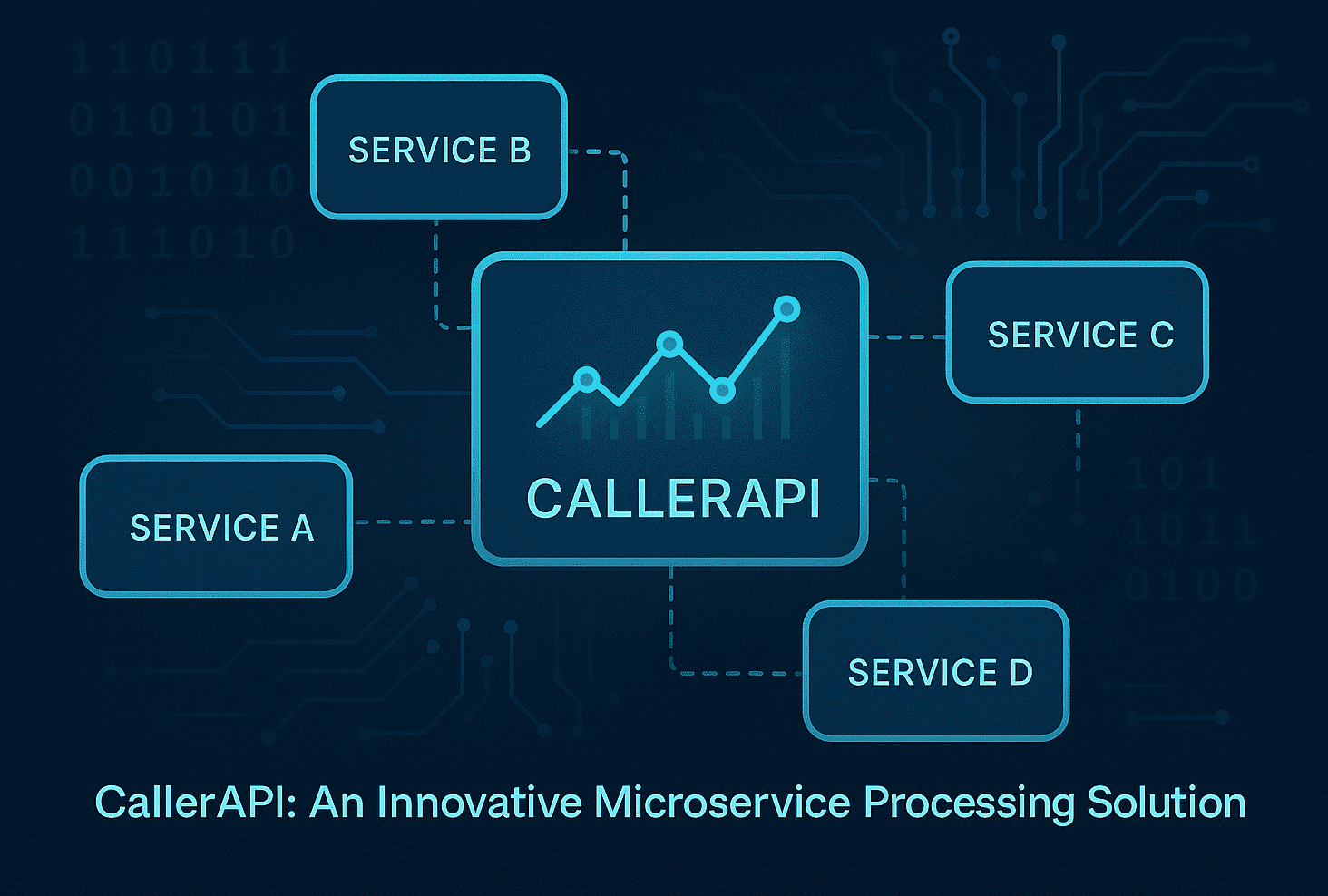

Checklist item 3: Use platform engineering and value stream management for scale

Once reviews and automation are in place, sustainable scaling comes through platform engineering. This is the infrastructure investment that pays compounding returns.

Platform engineering has 90% adoption among elite teams, and the DORA 2025 report draws a direct line between platform maturity and the ability to unlock AI value. Teams without internal platforms spend too much cognitive energy on infrastructure decisions that should be solved once and standardized.

Here is how to approach it:

- Audit your current developer experience: Where do engineers lose time to environment setup, deployment confusion, or inconsistent tooling?

- Build a golden path: Define the standard way to create, deploy, and monitor a service. Make it the path of least resistance.

- Invest in self-service infrastructure: Engineers should be able to spin up environments, run tests, and deploy to staging without filing a ticket.

- Measure cognitive load: Regularly ask your team how much time they spend on non-product work. That number should trend down.
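A golden path is easier to keep when drift from it is visible. As a rough illustration, the check below reports which standard pieces a service definition is missing. The four required keys are an assumption made for this sketch, not a standard. Substitute whatever your own golden path defines.

```python
# Hypothetical golden-path requirements; replace with your platform's own list.
REQUIRED_GOLDEN_PATH_KEYS = ("owner", "ci_pipeline", "deploy_target", "dashboard")


def golden_path_gaps(service: dict) -> list[str]:
    """Return the golden-path items a service definition is missing or leaves empty."""
    return [key for key in REQUIRED_GOLDEN_PATH_KEYS if not service.get(key)]
```

Run across every service in a catalog, a check like this shows where engineers are still making one-off infrastructure decisions.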

| Approach | Speed | Reliability | Cognitive load | AI readiness |
|---|---|---|---|---|
| Platform engineering | High | High | Low | High |
| Ad hoc infrastructure | Variable | Low | High | Low |
| Outsourced ops | Medium | Medium | Medium | Medium |

The contrast is clear. Ad hoc approaches might feel faster in the early days, but they create compounding friction as teams grow. Platform engineering, done well, is what lets you build scalable AI SaaS without rebuilding your foundation every six months. And avoiding the AI development failures that come from weak infrastructure is just as important as shipping fast.

Checklist item 4: Monitor, measure, and iterate across your stack

The habits that keep elite teams on top are not dramatic. They are consistent. Monitoring, learning, and adaptation are the unglamorous work that separates teams who sustain performance from those who peak and plateau.

Top teams spend over 41% of their time on roadmap work and complete more than 66% of planned items. That level of focus does not happen by accident. It comes from disciplined measurement and honest retrospectives.

Your monitoring and iteration checklist:

- Weekly: Review deployment frequency, lead time, and change failure rate. Flag any metric that moved in the wrong direction.

- Weekly: Check PR cycle times and review bottlenecks. Are any engineers blocked?

- Monthly: Run a roadmap retrospective. What was planned, what shipped, and what got derailed?

- Monthly: Review post-mortems from incidents. Are the same root causes recurring?

- Quarterly: Benchmark against industry data. Are your numbers improving relative to the market?
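The weekly "flag any metric that moved in the wrong direction" step is simple to automate. The sketch below assumes you already store last week's and this week's values in dictionaries. The metric names and the direction table are illustrative and should match whatever your dashboards actually record.

```python
# Whether a higher value is an improvement for each metric (illustrative names).
HIGHER_IS_BETTER = {
    "deploys_per_day": True,
    "lead_time_hours": False,
    "change_failure_rate": False,
}


def regressions(previous: dict, current: dict) -> list[str]:
    """Return the metrics that moved in the wrong direction since the last review."""
    flagged = []
    for name, higher_is_better in HIGHER_IS_BETTER.items():
        if name not in previous or name not in current:
            continue  # skip metrics missing from either week
        delta = current[name] - previous[name]
        if (higher_is_better and delta < 0) or (not higher_is_better and delta > 0):
            flagged.append(name)
    return flagged
```

A flagged metric is only a prompt for the weekly conversation. Each flag still needs an owner who decides what action follows.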

The traps here are common. Vanity metrics like lines of code written or number of commits tell you nothing meaningful. Measuring too late, after a quarter of poor delivery, means the feedback loop is too slow to course-correct. And the most painful trap: collecting data but never acting on it.

The AI-powered insights available today make it easier than ever to surface the right signals. Use them. But make sure someone on your team owns the action that follows each insight. Data without accountability is just noise.

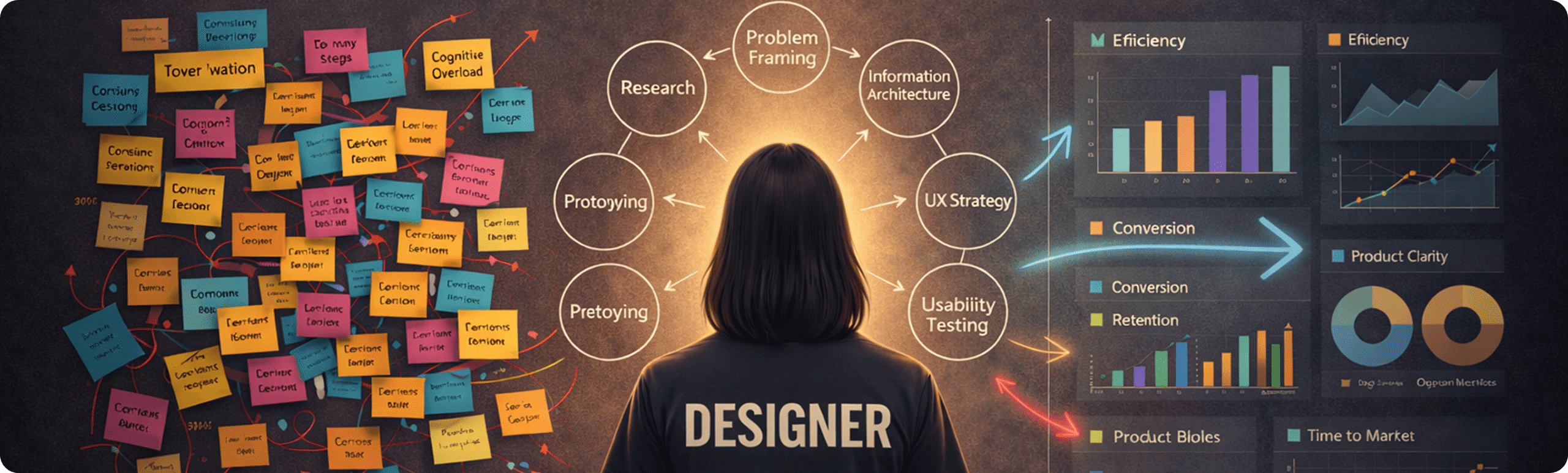

Why most engineering checklists fail in 2026 (and what actually works)

Here is the uncomfortable truth: most teams that follow a checklist still underperform. Not because the checklist is wrong, but because they treat it as compliance rather than a catalyst.

Box-checking creates mediocrity. A team that implements code review SLAs without understanding why they matter will game the metric, not improve the outcome. A team that adopts platform engineering because it is on the list, without adapting it to their stack and talent, will build something nobody uses.

The biggest gains we have seen come from teams that use the checklist as a conversation starter. They ask, “Which of these items creates the most friction for us specifically?” and start there. They adapt the benchmarks to their stage. A 10-person team and a 100-person team should not be running identical processes.

Elite teams treat the checklist as a living document, not a finish line. They revisit it when the context changes, when building scalable workflows demands new approaches, and when the data tells them something is not working. That mindset is what separates teams that grow through scaling from teams that get crushed by it.

If you want expert help scaling your engineering team

Scaling an engineering team in 2026 means navigating AI adoption, platform engineering, and delivery speed all at once. That is a lot to hold together, especially when your core focus needs to stay on the product.

At Meduzzen, we work with startup founders and CTOs who are building serious engineering practices and need experienced hands to move faster. Whether you need custom DevOps services to automate your pipeline, web development services to accelerate product delivery, or AI services for startups to adopt AI without the quality trade-offs, our pre-vetted engineers integrate directly into your team. We have helped teams across FinTech, Healthcare, and SaaS scale without losing control of quality or delivery. Let us help you build the engineering foundation your growth deserves.

Frequently asked questions

What is the most critical metric for software engineering teams in 2026?

Deployment frequency and lead time remain the key metrics. Elite teams deploy multiple times per day with lead times under one day, making these the clearest indicators of engineering health.

How does AI adoption impact software quality in 2026?

AI accelerates delivery but introduces quality risks if review processes are not updated. AI PRs carry 1.7x more issues and 40% more critical bugs, making stronger quality gates essential alongside any AI tooling.

Why is platform engineering crucial for scaling teams?

Platform engineering standardizes infrastructure and reduces cognitive load, enabling teams to scale without rebuilding foundations. Adoption stands at 90% among elite teams, and platform maturity is directly linked to the ability to unlock AI value across the organization.

What is the best way to keep the checklist updated?

Review checklist items quarterly, comparing your internal metrics against current industry benchmark reports to ensure each item still reflects your team’s stage and the market’s current expectations.