Master the AI development process: 85% of projects fail in 2026

Mar 15, 2026

Learn the AI development process that helps 15% of projects succeed. Avoid the data quality issues and model drift that cause 85% of AI initiatives to fail in 2026.

Tech startups pour resources into AI projects only to watch them stall at the pilot stage or fail to deliver promised value. The statistics are sobering: nearly 85% of AI projects fail to move beyond initial testing, and up to 95% fail to deliver their promised value. For product managers and founders navigating AI development trends in 2026, mastering the AI development process is no longer optional. This guide walks you through the structured approach needed to avoid common pitfalls and build AI systems that actually work.

Key takeaways

| Point | Details |
|---|---|
| High failure rates stem from poor data | Up to 87% of AI projects never reach production due to data quality issues and misaligned business goals |
| Data preparation dominates timelines | Collecting, cleaning, and organizing data consumes 60-80% of initial development resources |
| User needs drive success | Understanding real workflows and integrating AI contextually prevents the disconnect that kills most pilots |
| Model drift requires ongoing attention | 91% of machine learning models degrade over time without continuous monitoring and retraining |

Understanding why AI projects fail: common pitfalls and challenges

The gap between AI hype and reality creates a graveyard of abandoned projects. Nearly 85% of AI projects fail to escape the pilot stage, while 95% fail to deliver their promised business value. These aren’t just technical failures. They represent misaligned expectations, poor planning, and fundamental misunderstandings about what AI development actually requires.

Poor data quality tops the list of project killers. You can’t build reliable AI systems on messy, incomplete, or biased datasets. Model drift affects 91% of machine learning models, gradually degrading performance as real-world data patterns shift away from training assumptions. Most teams discover these issues too late, after investing months in model development.

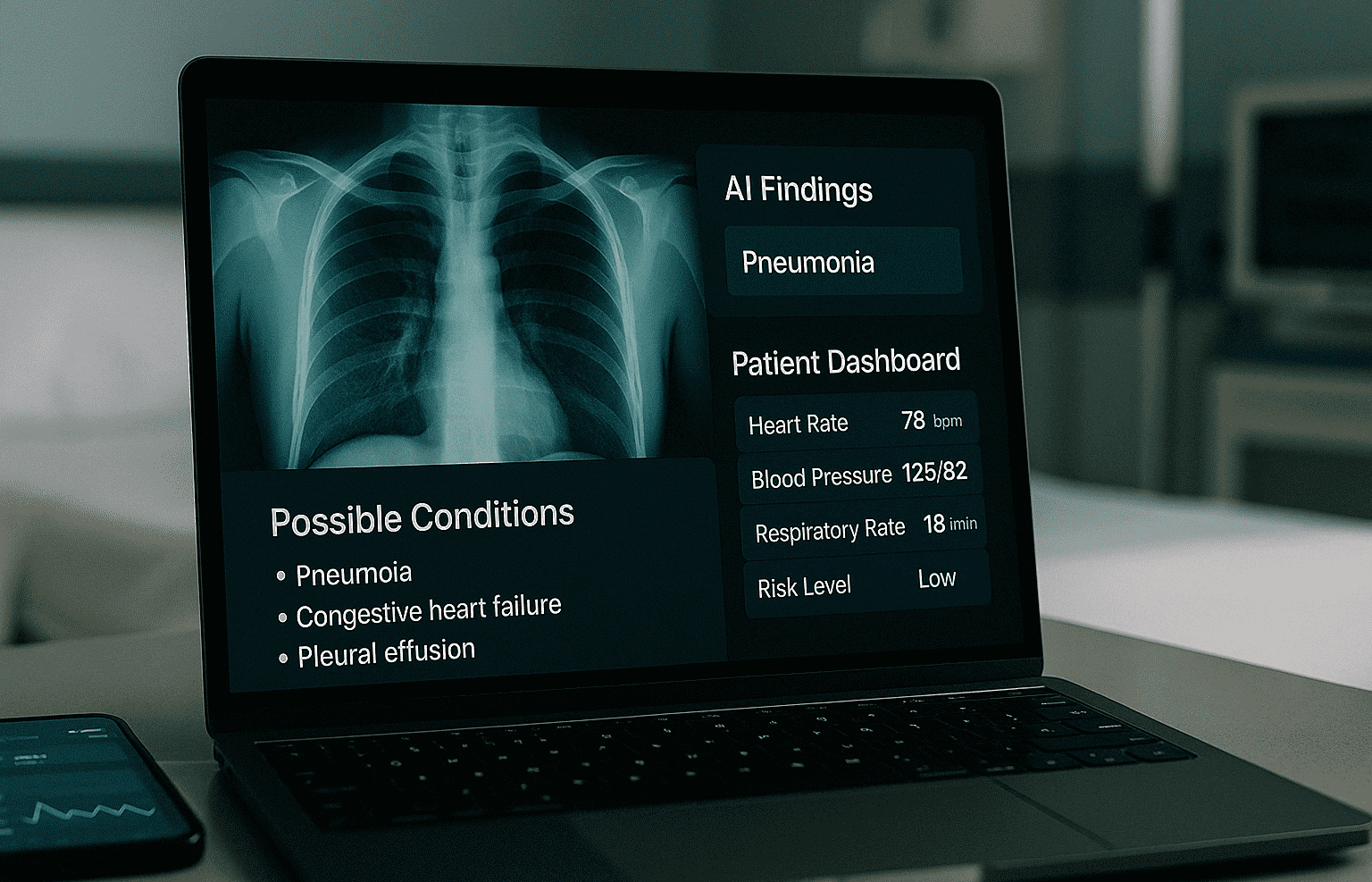

Misunderstanding user needs creates another common failure mode. Teams often focus on technical capabilities rather than actual workflow integration. They build sophisticated models that solve the wrong problems or require users to change established processes. This disconnect between AI outputs and real business needs guarantees rejection, no matter how impressive the underlying technology.

The most damaging pitfall involves treating AI like traditional software development. Startups apply waterfall or even agile methodologies without accounting for AI’s unique challenges:

- Data dependencies that shift project timelines unpredictably

- Model performance that varies based on data quality and distribution

- Evaluation requirements that demand continuous validation

- Deployment complexity involving monitoring and retraining infrastructure

> The model is an implementation detail; the problem defines the architecture.

This quote captures why so many AI projects fail. Teams jump to solutions before fully understanding the problem space. They chase the latest models or techniques instead of asking whether AI even suits their specific challenge. The result is technically impressive systems that deliver zero business value.

Preparing for success: essential prerequisites in the AI development process

Successful AI development starts long before writing code. You need clear problem definition, quality data infrastructure, and the right team structure. These prerequisites consume the majority of project resources but determine whether your AI system ever reaches production.

Define the actual problem you’re solving. This sounds obvious but requires discipline. What specific business outcome do you need? What decisions will the AI system support? How will users interact with its outputs? Rushing past these questions to start building models guarantees misalignment. The problem definition shapes your entire architecture, from data requirements to model selection to deployment strategy.

Data preparation consumes 60-80% of initial development timelines. This isn’t inefficiency. It reflects the reality that AI quality depends entirely on data quality. You must identify relevant data sources, establish collection processes, clean inconsistencies, handle missing values, and validate accuracy. Skipping these steps is costly: up to 87% of AI projects never reach production, with data quality issues among the leading causes.

Pro Tip: Start with a data audit before committing to any AI approach. Document what data you have, what’s missing, and what quality issues exist. This reality check often reveals whether your project is viable.
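A data audit can start very small. The sketch below is one possible shape for it, not a prescribed tool: it counts missing values per field and exact duplicate rows in a list of records. The function name `audit_records`, the field names, and the sample rows are all hypothetical and exist only for illustration.

```python
from collections import Counter


def audit_records(records, required_fields):
    """Summarize missing values and exact duplicates in a list of dict records."""
    total = len(records)
    missing = Counter()
    for row in records:
        for field in required_fields:
            value = row.get(field)
            # Treat None and blank strings as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1

    # Count rows that repeat an earlier row on all required fields.
    seen = set()
    duplicates = 0
    for row in records:
        key = tuple(row.get(field) for field in required_fields)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)

    return {
        "rows": total,
        "missing_rate": {
            f: (missing[f] / total if total else 0.0) for f in required_fields
        },
        "duplicate_rows": duplicates,
    }


# Hypothetical sample data, for illustration only.
sample = [
    {"customer_id": "c1", "plan": "pro", "monthly_spend": 49.0},
    {"customer_id": "c2", "plan": "", "monthly_spend": 19.0},
    {"customer_id": "c3", "plan": "basic", "monthly_spend": None},
    {"customer_id": "c1", "plan": "pro", "monthly_spend": 49.0},
]
report = audit_records(sample, ["customer_id", "plan", "monthly_spend"])
print(report)
```

A report like this gives the team concrete numbers to discuss before any model work begins. A real audit would likely also cover value ranges, label balance, and data freshness.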

Building the right team matters as much as technical preparation. AI projects need cross-functional collaboration between business stakeholders, data engineers, ML specialists, and domain experts. Each group brings critical perspective:

| Role | Primary Responsibility |
|---|---|
| Business stakeholders | Define success metrics and ensure alignment with company goals |
| Data engineers | Build infrastructure for collection, storage, and processing at scale |
| ML specialists | Develop, train, and optimize models based on problem requirements |
| Domain experts | Validate outputs and ensure results make sense in context |

Many startups try to shortcut team requirements by hiring one or two generalists. This creates bottlenecks and knowledge gaps. You need dedicated focus on data analytics and governance from day one. Establishing data quality standards, access controls, and documentation practices early prevents the chaos that derails projects later.

The preparation phase also requires honest assessment of your technical infrastructure. Can your current systems handle the data volumes AI requires? Do you have monitoring and logging capabilities? What about model versioning and experiment tracking? These operational concerns seem secondary but become critical when building scalable AI solutions that need to run reliably in production.

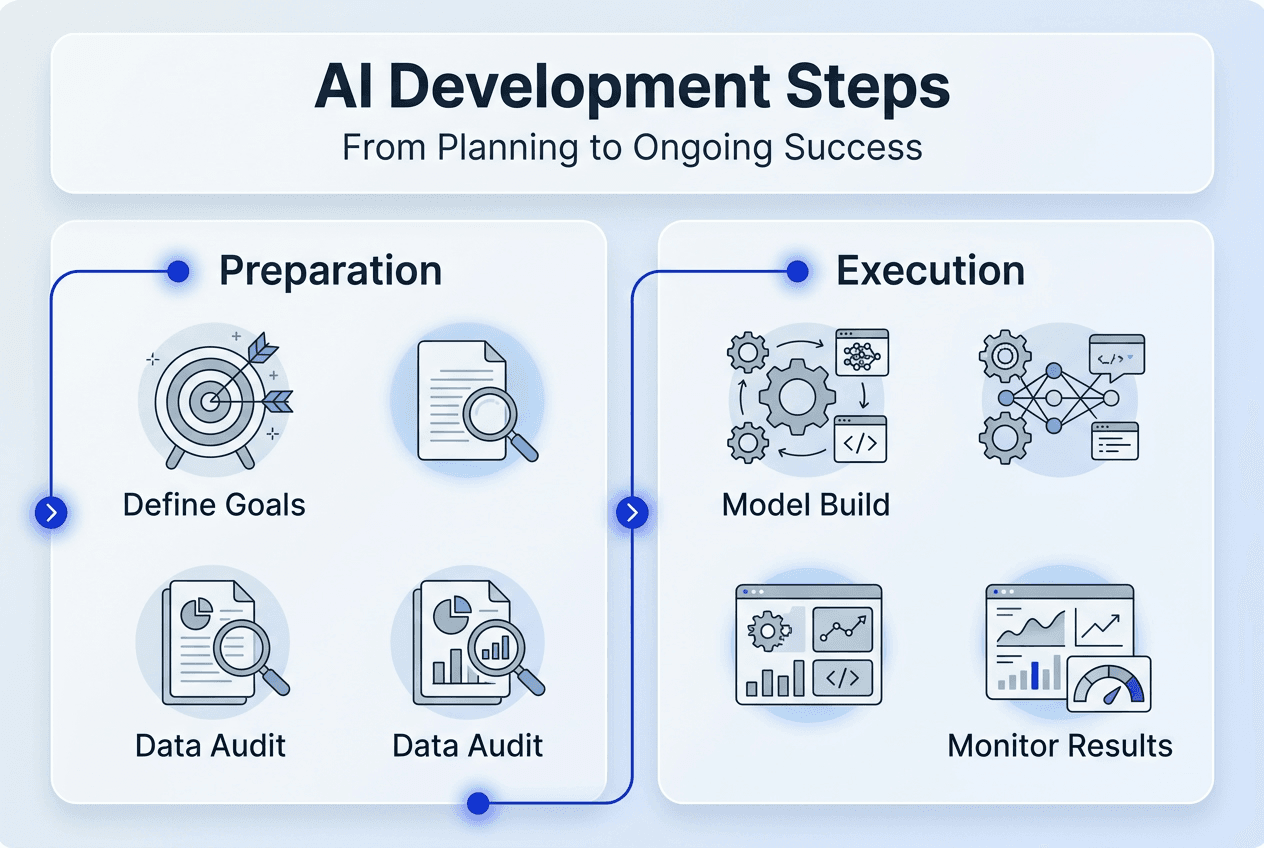

Executing the AI development process: step-by-step methodology

With preparation complete, you can execute the actual development process. This follows a structured workflow that maximizes your chances of creating valuable AI systems. Each step builds on previous work and validates assumptions before moving forward.

Start by clarifying your problem statement into specific technical requirements. The problem defines the architecture, not the other way around. What inputs will your system process? What outputs do users need? What accuracy or performance thresholds matter for business value? These questions shape everything from model selection to evaluation criteria.

The core development process follows these steps:

- Collect and organize relevant data from identified sources

- Clean data by handling missing values, outliers, and inconsistencies

- Engineer features that capture patterns relevant to your problem

- Split data into training, validation, and test sets

- Select and train initial models based on problem characteristics

- Evaluate model performance against business metrics

- Iterate on features, models, and hyperparameters to improve results

- Deploy the best-performing model with monitoring infrastructure

This sequence isn’t strictly linear. You’ll cycle between steps as you discover data issues or performance gaps. The key is maintaining clear evaluation criteria throughout. Establish your evaluation suite before first deployment so you can objectively measure progress and catch problems early.
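Two of the steps above, splitting the data and evaluating against agreed thresholds, can be sketched in a few lines. This is a minimal illustration using only the Python standard library. The 70/15/15 split, the fixed seed, and the helper names `split_dataset`, `accuracy`, and `passes_gate` are assumptions chosen for the example, not a recommended configuration.

```python
import random


def split_dataset(rows, train=0.7, validation=0.15, seed=42):
    """Shuffle rows and split them into train, validation, and test sets.

    The test set receives whatever remains after train and validation.
    """
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducible splits
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * validation)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


def accuracy(predictions, labels):
    """Fraction of predictions that match their labels."""
    if not labels:
        raise ValueError("labels must not be empty")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def passes_gate(metrics, thresholds):
    """Return True only if every metric meets its minimum threshold."""
    return all(
        metrics.get(name, 0.0) >= minimum for name, minimum in thresholds.items()
    )


# Hypothetical data: 100 row identifiers standing in for real records.
rows = list(range(100))
train_set, val_set, test_set = split_dataset(rows)

# Hypothetical thresholds agreed with business stakeholders.
thresholds = {"accuracy": 0.8}
metrics = {"accuracy": accuracy([1, 0, 1, 1], [1, 1, 1, 1])}
print(len(train_set), len(val_set), len(test_set), passes_gate(metrics, thresholds))
```

Writing the thresholds down before the first deployment keeps the bar for shipping from shifting with each new experiment.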

Pro Tip: Prompt engineering is a starting point, not an architecture. If you’re building with large language models, don’t stop at prompt optimization. Consider fine-tuning, retrieval augmentation, or custom model development for production systems.

Different development approaches suit different AI projects:

| Approach | Best For | Key Characteristics |
|---|---|---|
| Waterfall | Well-defined problems with stable requirements | Sequential phases, extensive upfront planning, limited flexibility |
| Agile | Evolving requirements or exploratory projects | Iterative sprints, frequent stakeholder feedback, adaptive planning |
| Hybrid | Complex AI systems with both stable and uncertain elements | Waterfall for infrastructure, agile for model development |

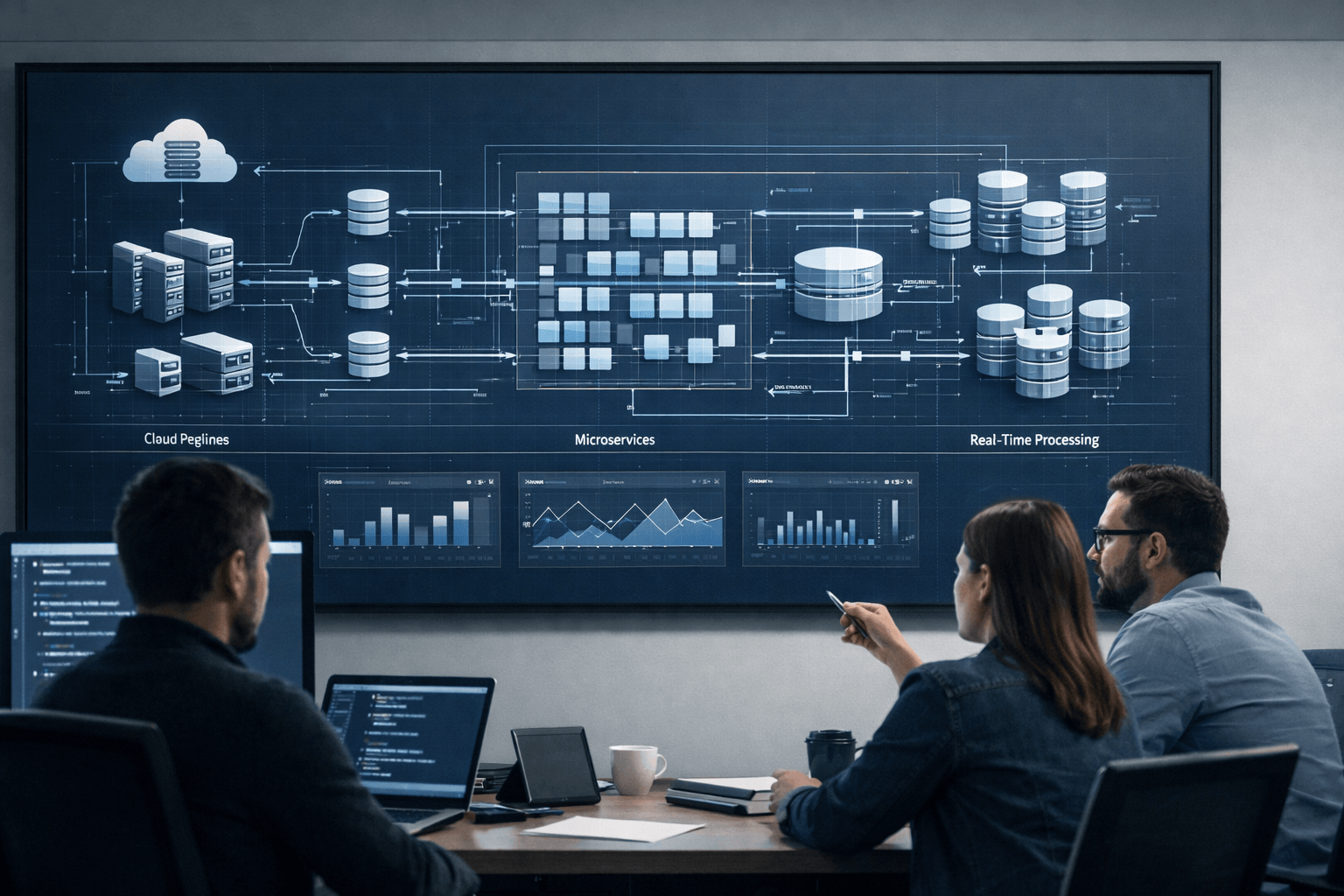

Most successful AI projects use hybrid approaches. Infrastructure decisions like data pipelines and deployment architecture benefit from upfront planning. Model development and feature engineering need iterative experimentation. Trying to force pure waterfall or pure agile onto AI development ignores the unique characteristics of machine learning.

The end-to-end development process for AI systems must account for continuous learning. Unlike traditional software where code behavior is deterministic, AI models produce probabilistic outputs that shift with data changes. Your development process needs built-in mechanisms for monitoring performance, detecting drift, and triggering retraining when necessary.

Integration with existing systems deserves special attention during execution. Your AI model doesn’t operate in isolation. It needs to receive inputs from upstream systems, process them efficiently, and deliver outputs in formats downstream systems can consume. Planning these integration points early prevents painful rework when you discover incompatibilities during deployment. Leveraging artificial intelligence services with integration experience accelerates this critical phase.
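One way to make integration points explicit is to write the input and output contracts down as types and validate payloads at the boundary. The sketch below assumes a hypothetical scoring service. `ScoreRequest`, `ScoreResponse`, `parse_request`, and the three-feature input are invented for illustration and would be replaced by whatever your upstream and downstream systems actually exchange.

```python
from dataclasses import dataclass

EXPECTED_FEATURES = 3  # hypothetical: the model in this sketch takes three inputs


@dataclass(frozen=True)
class ScoreRequest:
    customer_id: str
    features: tuple


@dataclass(frozen=True)
class ScoreResponse:
    customer_id: str
    score: float
    model_version: str


def parse_request(payload):
    """Validate a raw dict from an upstream system before it reaches the model."""
    customer_id = payload.get("customer_id")
    features = payload.get("features")
    if not isinstance(customer_id, str) or not customer_id:
        raise ValueError("customer_id must be a non-empty string")
    if not isinstance(features, (list, tuple)) or len(features) != EXPECTED_FEATURES:
        raise ValueError(f"features must contain {EXPECTED_FEATURES} values")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not all(
        isinstance(x, (int, float)) and not isinstance(x, bool) for x in features
    ):
        raise ValueError("features must be numeric")
    return ScoreRequest(customer_id, tuple(float(x) for x in features))


request = parse_request({"customer_id": "c1", "features": [1, 2, 3]})
print(request)
```

Rejecting malformed input at the boundary means an incompatibility surfaces as a clear error during integration testing rather than as a silent bad prediction in production.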

Verifying and maintaining AI systems: avoiding model drift and ensuring ongoing value

Deployment isn’t the finish line for AI projects. It’s the start of an ongoing maintenance cycle that determines whether your system delivers sustained value. Model drift affects 91% of machine learning models, gradually degrading predictions as real-world conditions change. Without active monitoring and maintenance, even well-built AI systems become unreliable.

Model drift happens when the statistical properties of your input data change over time. Customer behavior shifts, market conditions evolve, or new product features alter usage patterns. Your model’s training data no longer represents current reality, so prediction accuracy drops. This degradation can be subtle and gradual, making it hard to detect without systematic monitoring.
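A shift in input distributions can be quantified. One commonly used measure is the Population Stability Index (PSI), which compares how a feature's values fall into buckets in a baseline sample versus a recent sample. The sketch below is a simplified standard-library version. The equal-width bucketing, the `epsilon` floor for empty buckets, and the synthetic data are assumptions made for the example, and both samples are assumed to be non-empty.

```python
import math


def psi(expected, actual, bins=10, epsilon=1e-6):
    """Population Stability Index between a baseline and a recent sample.

    Buckets are equal-width over the baseline's range. Values outside that
    range are clamped into the first or last bucket.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # Floor each share at epsilon so the logarithm is always defined.
        return [max(c / len(values), epsilon) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data for illustration: the recent sample is the baseline shifted by 3.
baseline = [i / 100 for i in range(1000)]
recent = [x + 3 for x in baseline]
print(psi(baseline, baseline), psi(baseline, recent))
```

Identical samples give a PSI of zero, and larger values indicate a larger shift. A widely quoted rule of thumb treats values above roughly 0.25 as a significant change, but that cutoff is a convention, not a guarantee, and should be calibrated against your own data.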

Effective AI maintenance requires four core practices:

- Continuous performance monitoring tracking key metrics in production

- Automated alerting when accuracy or other metrics fall below thresholds

- Regular retraining cycles using fresh data to restore performance

- Data governance ensuring training data quality and relevance over time

These practices demand infrastructure investment beyond initial model development. You need logging systems that capture predictions and outcomes, dashboards that visualize performance trends, and pipelines that automate retraining workflows. Building this operational foundation early prevents the scramble that happens when drift causes visible problems.

Pro Tip: Establish baseline performance metrics during initial deployment and track them weekly. Small degradation trends spotted early are easier to address than sudden accuracy drops that damage user trust.
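Tracking a baseline can be automated with a very small check. The sketch below compares the average of the most recent weekly scores against the baseline recorded at deployment and flags a drop beyond a tolerance. The function name `check_degradation`, the 0.02 tolerance, the three-week window, and the sample scores are hypothetical values chosen for illustration.

```python
from statistics import mean


def check_degradation(baseline, weekly_scores, tolerance=0.02, window=3):
    """Compare the mean of the last `window` weekly scores to the baseline."""
    recent = weekly_scores[-window:]
    if not recent:
        return {"status": "no_data", "recent_mean": None, "drop": None}
    recent_mean = mean(recent)
    drop = baseline - recent_mean
    # Alert only when the drop exceeds the tolerance, to avoid reacting to noise.
    status = "alert" if drop > tolerance else "ok"
    return {"status": status, "recent_mean": recent_mean, "drop": drop}


# Hypothetical weekly accuracy values measured in production.
result = check_degradation(0.90, [0.90, 0.89, 0.88, 0.86, 0.85])
print(result["status"])
```

Averaging over a short window rather than reacting to a single week makes the check less sensitive to one-off noise while still catching a gradual downward trend.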

AI deployment demands organizational change, not just technical expertise. Your team needs processes for reviewing model outputs, handling edge cases, and incorporating user feedback. Stakeholders must understand AI limitations and know when to override automated decisions. This change management often determines success more than technical sophistication.

Aligning AI systems with evolving business objectives requires regular review cycles. What made sense six months ago might not match current priorities. Customer needs shift, competitive landscapes change, and new opportunities emerge. Your AI roadmap should include quarterly assessments asking whether current systems still solve the right problems. Being willing to sunset underperforming projects or pivot to new use cases keeps AI investments productive.

The verification process extends beyond technical metrics to business impact measurement. Are users actually adopting the AI features? Do predictions lead to better decisions? What’s the ROI compared to initial projections? These questions connect technical performance to business value, ensuring your AI development process serves real organizational goals. Planning for scalability from the start makes this ongoing optimization manageable as your systems grow.

Explore Meduzzen’s AI and software development services

Mastering the AI development process requires both technical expertise and practical experience navigating the pitfalls that derail most projects. Meduzzen brings over 10 years of software development experience and a team of 150+ engineers who specialize in turning AI concepts into production systems that deliver measurable business value.

Our artificial intelligence services cover the full development lifecycle, from initial problem definition and data preparation through deployment and ongoing maintenance. We’ve helped startups and growing businesses across FinTech, Healthcare, EdTech, and other industries build scalable AI solutions that integrate seamlessly with existing workflows. Whether you need dedicated AI engineers to augment your team or end-to-end development for a new AI product, our expertise in web services and custom cloud development helps your project succeed. Explore how Meduzzen can accelerate your AI initiatives with proven processes and pre-vetted technical talent.

Frequently asked questions

What makes AI development different from traditional software development?

AI development centers on data quality, model training, and continuous evaluation rather than fixed code logic. Traditional software follows deterministic rules you write explicitly, while AI systems learn patterns from data and produce probabilistic outputs. This fundamental difference means AI projects require ongoing monitoring to detect model drift and regular retraining to maintain accuracy as conditions change.

How can startups reduce the risk of AI project failure?

Focus on real user needs and clear business objectives before selecting technical solutions. Invest heavily in data preparation and quality assurance, as poor data causes most project failures. Establish evaluation suites and monitoring infrastructure early in the development process rather than treating them as afterthoughts. This foundation helps you catch problems before they derail production deployment.

What is model drift and why is it important to monitor?

Model drift occurs when AI prediction accuracy degrades over time because real-world data patterns change. Your model’s training data no longer represents current conditions, so outputs become less reliable. Regular monitoring detects drift early through performance metrics, allowing you to retrain models with fresh data before accuracy drops enough to impact business value.

When should an AI project move from pilot to production?

Move to production only after evaluation metrics consistently demonstrate business value and model stability across diverse scenarios. Your data infrastructure, governance processes, and integration with existing workflows must be fully established. Rushing to production without this foundation leads to the operational chaos and quality issues that cause most AI deployments to fail.