
Choosing the right AI strategy in 2026 means looking past model hype to focus on what truly drives results: robust architecture, operational resilience, and real-world performance. With AI agents becoming digital coworkers and evaluation shifting from benchmarks to scenario testing, tech leaders need clear criteria to separate innovation from noise. This guide provides a practical framework and highlights the top AI trends shaping strategic decisions for startups and growing businesses in 2026.

Key takeaways

| Point | Details |
|---|---|
| Architecture over models | AI system design patterns determine success more than model selection, emphasizing modularity and reliability. |
| AI agents as coworkers | AI agents will collaborate as digital team members, enabling small teams to execute large-scale operations efficiently. |
| Beyond benchmarks | Evaluation now includes portfolio testing across capability, cost, latency, and safety rather than relying on leaderboard scores. |
| Risk management priority | AI can amplify operational and compliance risks, requiring disciplined governance frameworks and security controls. |
| Healthcare transformation | Generative AI expands from diagnostics to symptom triage and treatment planning, transforming patient care workflows. |

How to evaluate AI development trends in 2026: key criteria for tech leaders

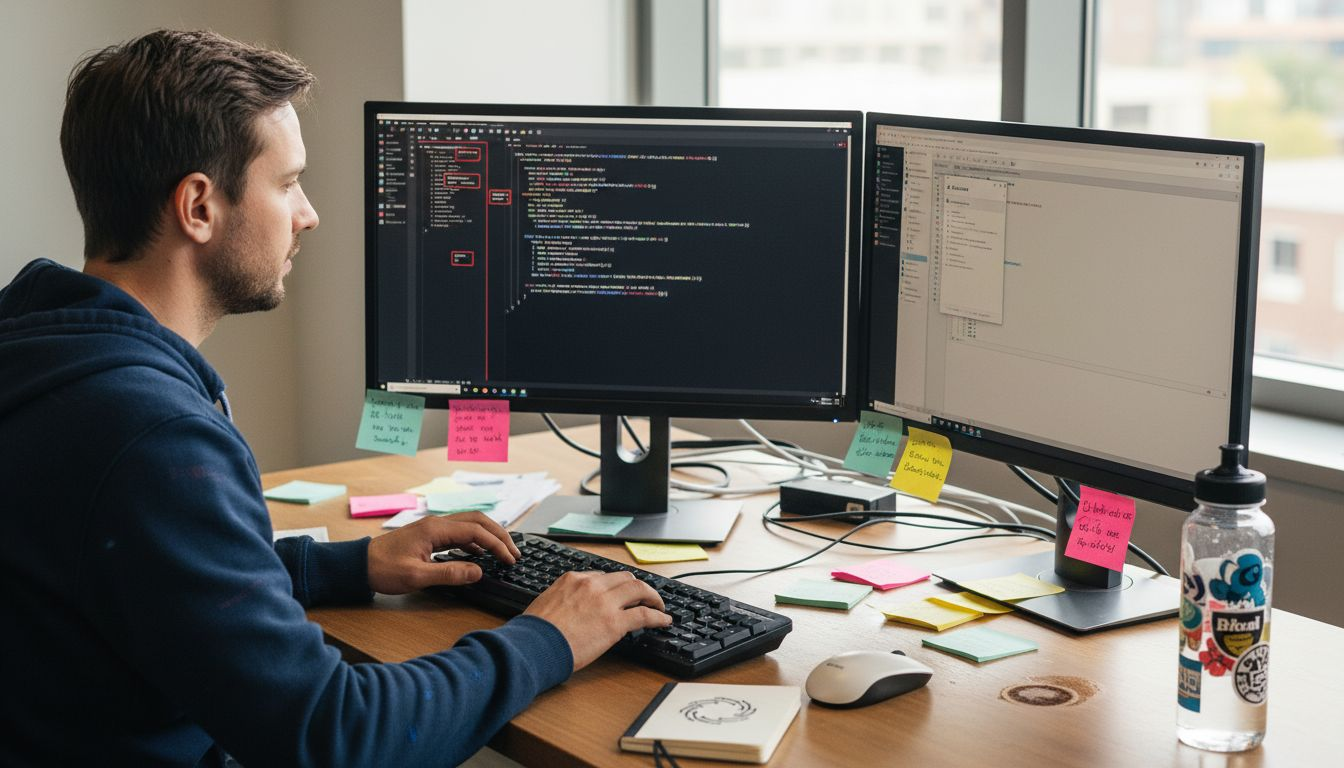

Effective AI adoption in 2026 requires moving beyond choosing the latest model and focusing on the underlying system architecture. Architecture tends to determine AI success more than model selection does, so evaluate solutions on how they’re built, not just on which models they use.

When assessing AI technologies, prioritize these architectural criteria:

- Modularity: Can the system adapt to new models or integrate with existing workflows without complete rebuilds?

- Scalability: Will it handle growth in users, data volume, and computational demands efficiently?

- Reliability: Does it maintain consistent performance under realistic operational constraints, not just in controlled test environments?

- Cost efficiency: What are the total ownership costs, including inference, maintenance, and infrastructure?
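One way to read the modularity criterion is as a thin interface between your application code and any particular model. The sketch below is a minimal illustration under that assumption: `TextModel`, `EchoModel`, `UppercaseModel`, and `summarize_ticket` are hypothetical names invented for this example, not part of any vendor SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical interface that every model backend must satisfy."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real one would call a hosted or local model."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """Second stand-in backend, used to show that swapping is a one-line change."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize_ticket(model: TextModel, ticket: str) -> str:
    """Application code depends only on the interface, not on a specific vendor."""
    return model.complete(f"Summarize: {ticket}")
```

Calling `summarize_ticket(EchoModel(), ticket)` and `summarize_ticket(UppercaseModel(), ticket)` exercises the same workflow against two backends. If a system cannot be restructured this way without a rebuild, that is a signal worth weighing during evaluation.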

Security and risk management form another critical evaluation layer. AI solutions must include identity and access controls for agents, data quality validation, and compliance safeguards. Without these, even technically impressive systems create vulnerabilities.

Usability and integration capabilities matter equally. Your team needs AI tools that work within existing tech stacks and workflows. Solutions requiring extensive retraining or custom development slow adoption and reduce ROI.

Pro Tip: Favor AI platforms designed for collaboration with human teams rather than full automation. Systems built for human-AI partnership deliver better outcomes because they preserve human judgment where it matters most while automating repetitive tasks.

Finally, evaluate vendors based on their approach to building AI solutions for scalable SaaS. Look for partners who understand that AI success comes from thoughtful system design, rigorous testing, and continuous operational improvement rather than chasing the newest model release.

Top AI development trends to watch in 2026

Several transformative AI trends are reshaping how startups and growing businesses approach technology strategy in 2026. Understanding these trends helps you allocate resources effectively and identify opportunities for competitive advantage.

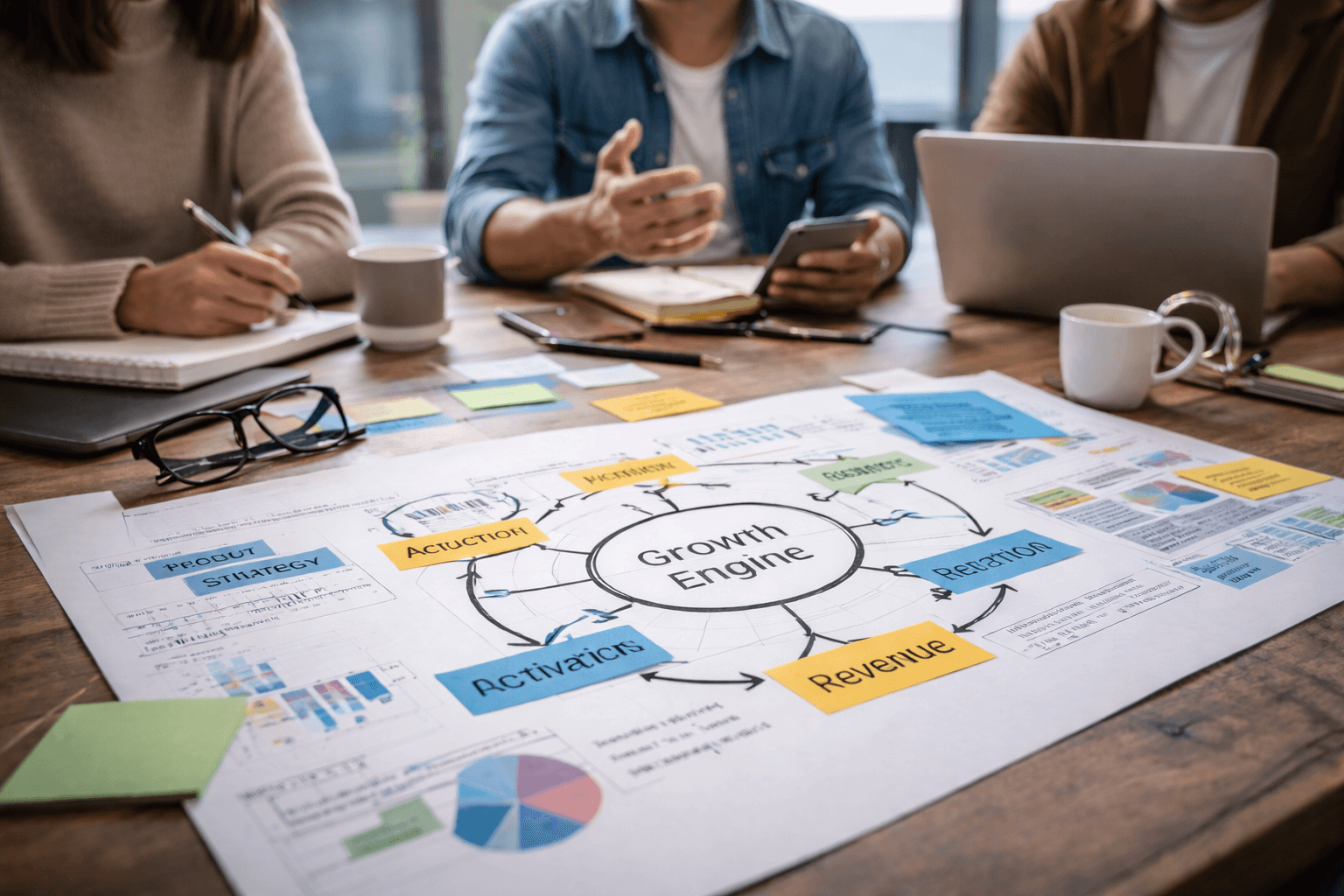

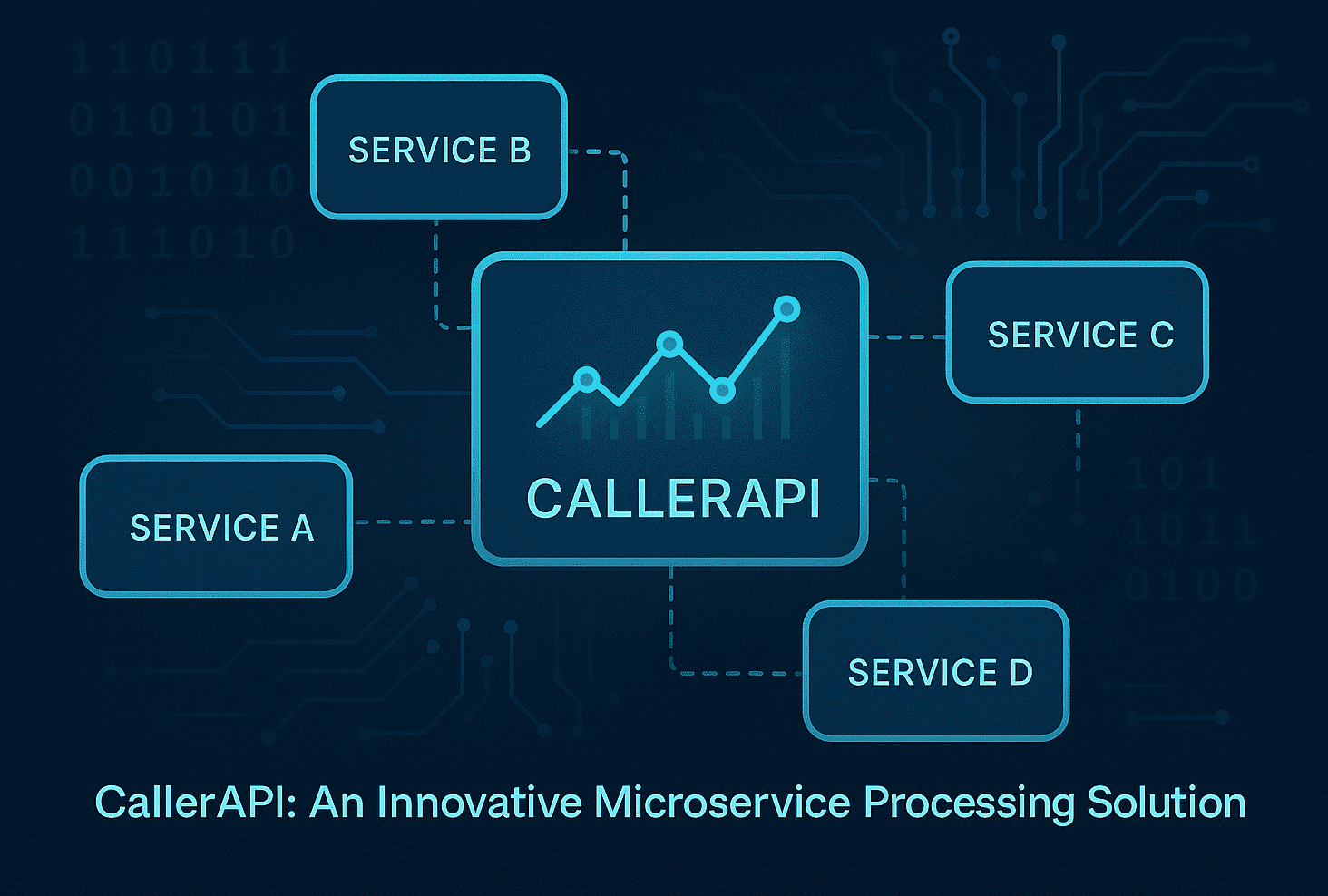

AI agents as collaborative digital coworkers represent the most significant shift. Rather than serving as simple automation tools, these agents let small teams launch global campaigns and manage complex operations. They handle multi-step workflows, coordinate with other agents, and make contextual decisions that previously required human intervention.

For startups, this means a three-person team may be able to run marketing, customer support, and operations at a scale that once required dozens of employees. The key is designing workflows where agents complement human creativity and strategic thinking.

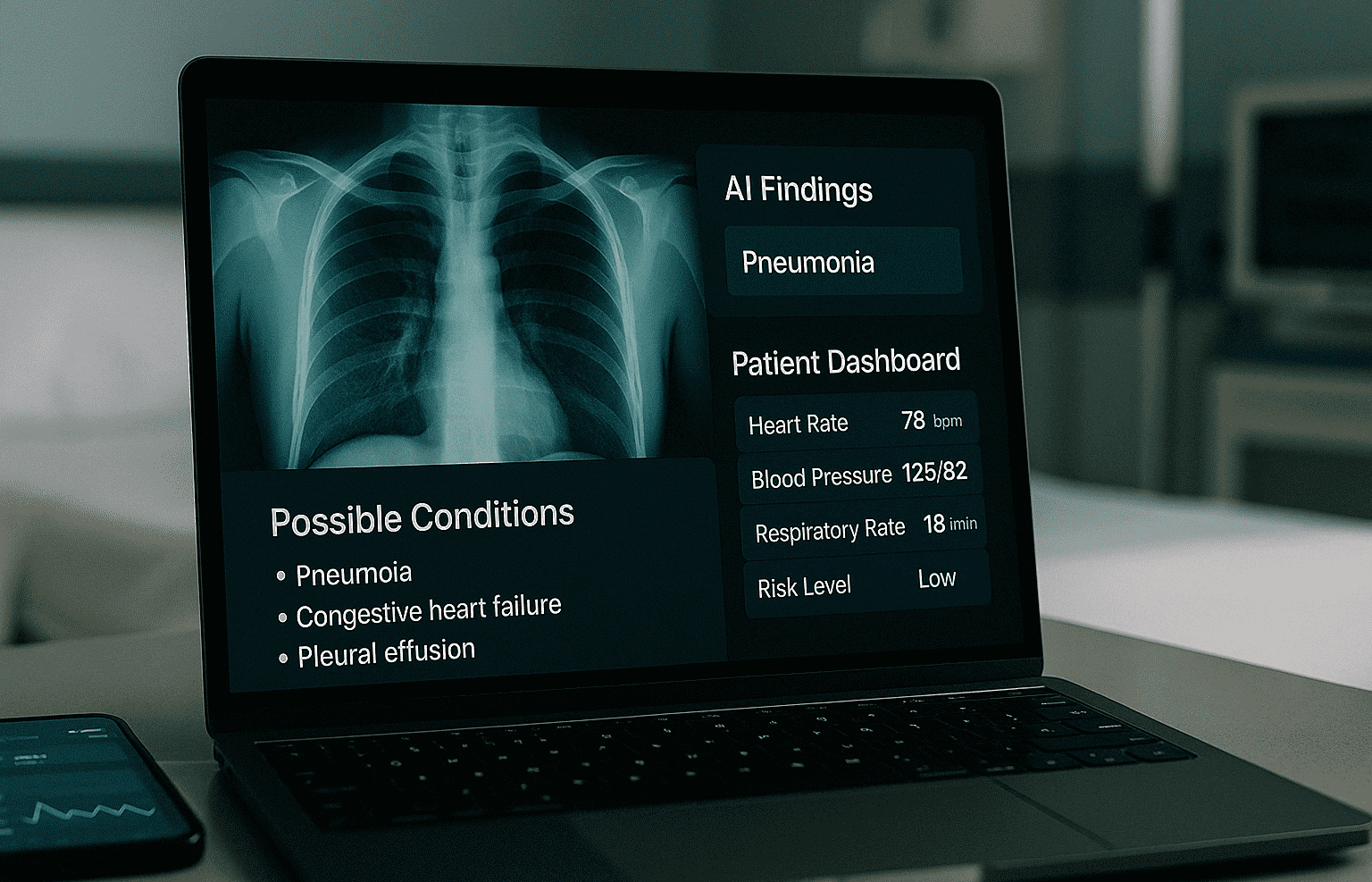

Generative AI in healthcare continues expanding beyond diagnostics into symptom triage and treatment planning, transforming patient engagement and clinical workflows. Companies building AI assistants for healthcare are creating systems that provide personalized health guidance while maintaining strict privacy and compliance standards.

This trend matters even for non-healthcare businesses because it demonstrates how AI can handle sensitive, high-stakes decisions when properly architected. The lessons from AI in healthcare trends apply to any industry requiring trust and accountability.

Security-first AI architecture has become mandatory rather than optional. Systems now need built-in identity and access controls for AI agents, audit trails, and mechanisms to detect and respond to agent misbehavior. As AI agents gain autonomy, they become potential attack vectors and compliance risks.
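To make identity controls and audit trails for agents more concrete, here is a minimal sketch of a deny-by-default permission check that records every decision. All names (`AgentIdentity`, `AuditLog`, `authorize`, and the example actions) are assumptions made for illustration; a production system would typically back this with an identity provider and tamper-resistant log storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical agent identity with an explicit allow-list of actions."""
    agent_id: str
    allowed_actions: frozenset[str]


@dataclass
class AuditLog:
    """In-memory audit trail; illustrative only."""
    entries: list[dict] = field(default_factory=list)

    def record(self, agent_id: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "allowed": allowed,
        })


def authorize(agent: AgentIdentity, action: str, log: AuditLog) -> bool:
    """Deny by default and log every decision, whether allowed or refused."""
    allowed = action in agent.allowed_actions
    log.record(agent.agent_id, action, allowed)
    return allowed
```

Logging refused actions as well as permitted ones matters here: repeated denials for the same agent are one simple signal of misbehavior worth alerting on.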

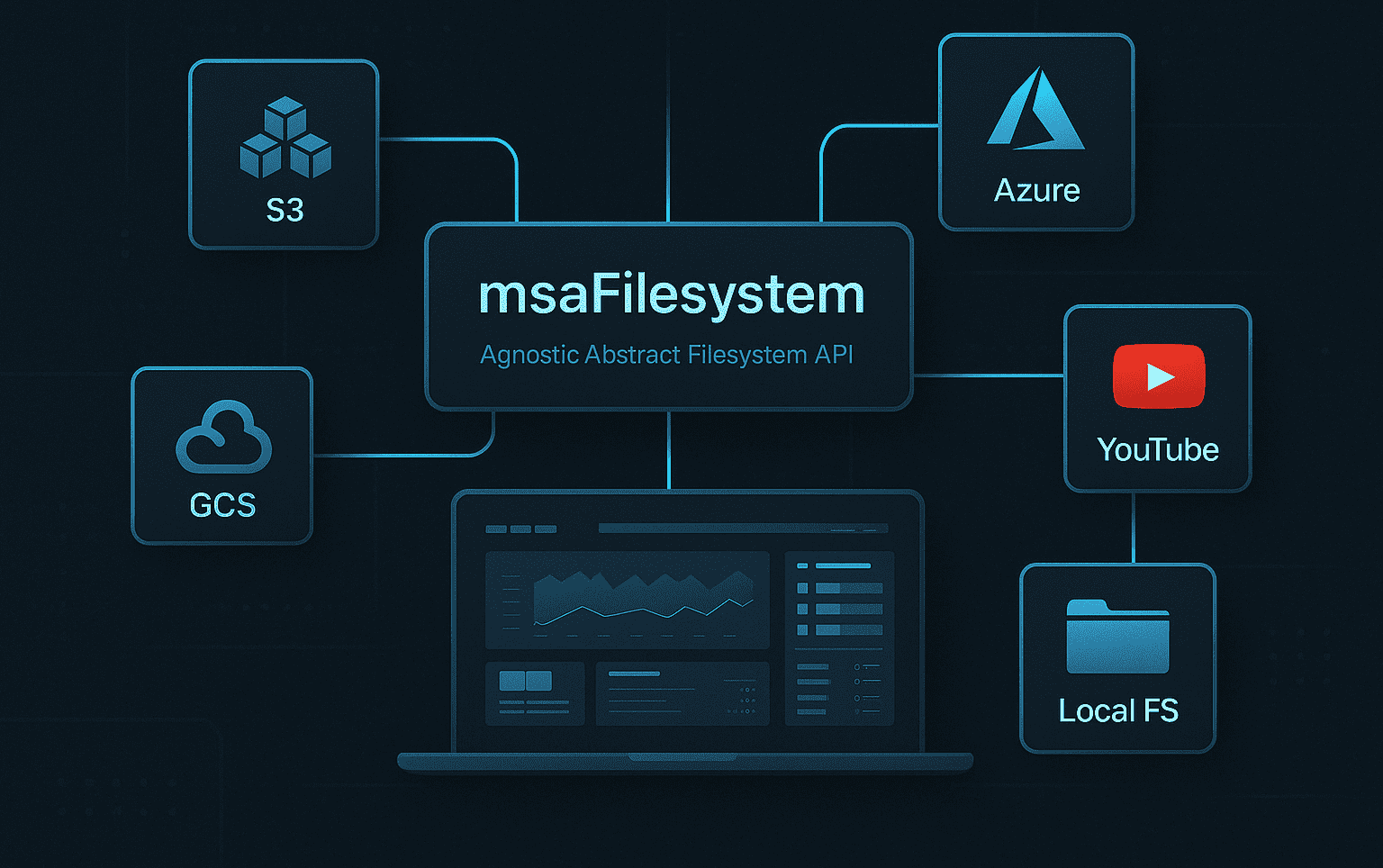

Request routing innovations can reduce operational costs dramatically. By intelligently routing each request to the most appropriate model or service based on complexity, cost, and performance requirements, organizations can cut expenses by over 60% while maintaining user experience quality. This approach recognizes that not every query needs the most powerful model.
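As a rough illustration of complexity-based routing, the sketch below sends each query to the cheapest tier that can plausibly handle it. The tier names, cost figures, keyword list, and scoring heuristic are all invented for this example; a real router would more likely use a trained classifier and measured prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_request: float  # illustrative units, not real pricing
    max_complexity: int      # highest complexity score this tier should handle


# Hypothetical tiers, ordered cheapest first.
TIERS = [
    ModelTier("small", cost_per_request=1.0, max_complexity=3),
    ModelTier("medium", cost_per_request=5.0, max_complexity=7),
    ModelTier("large", cost_per_request=20.0, max_complexity=10),
]

# Toy signal words; matched as substrings of the lowercased query.
REASONING_HINTS = ("why", "compare", "analyze", "plan")


def estimate_complexity(query: str) -> int:
    """Toy heuristic: longer queries and reasoning hints score higher (1 to 10)."""
    score = 1 + len(query.split()) // 20
    if any(hint in query.lower() for hint in REASONING_HINTS):
        score += 4
    return min(score, 10)


def route(query: str) -> ModelTier:
    """Pick the cheapest tier whose ceiling covers the estimated complexity."""
    complexity = estimate_complexity(query)
    for tier in TIERS:
        if complexity <= tier.max_complexity:
            return tier
    return TIERS[-1]
```

With these toy settings, a short factual question lands on the `small` tier, while a request to compare and analyze documents is routed to `medium`. Actual savings depend on your traffic mix, so measure them against your own workload.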

Adaptive AI architectures enable organizations to respond quickly to new requirements. Rather than building rigid systems around specific models or tasks, successful implementations create flexible frameworks that accommodate new capabilities, integrate emerging models, and scale globally without architectural overhauls.

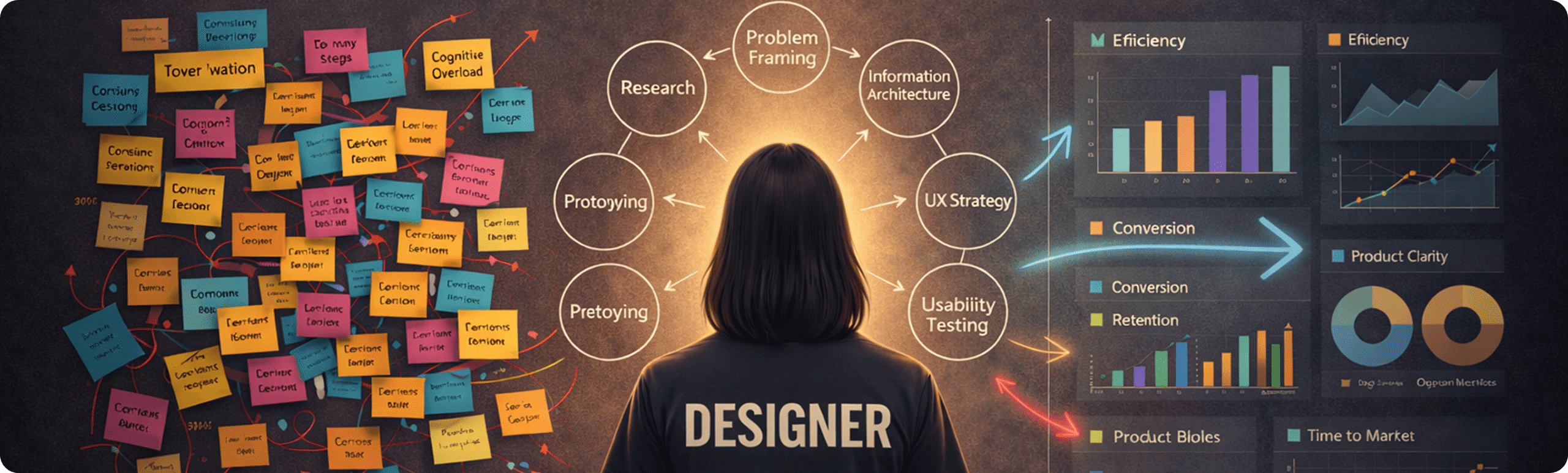

Evaluating AI models beyond benchmarks: the shift in 2026 evaluation practices

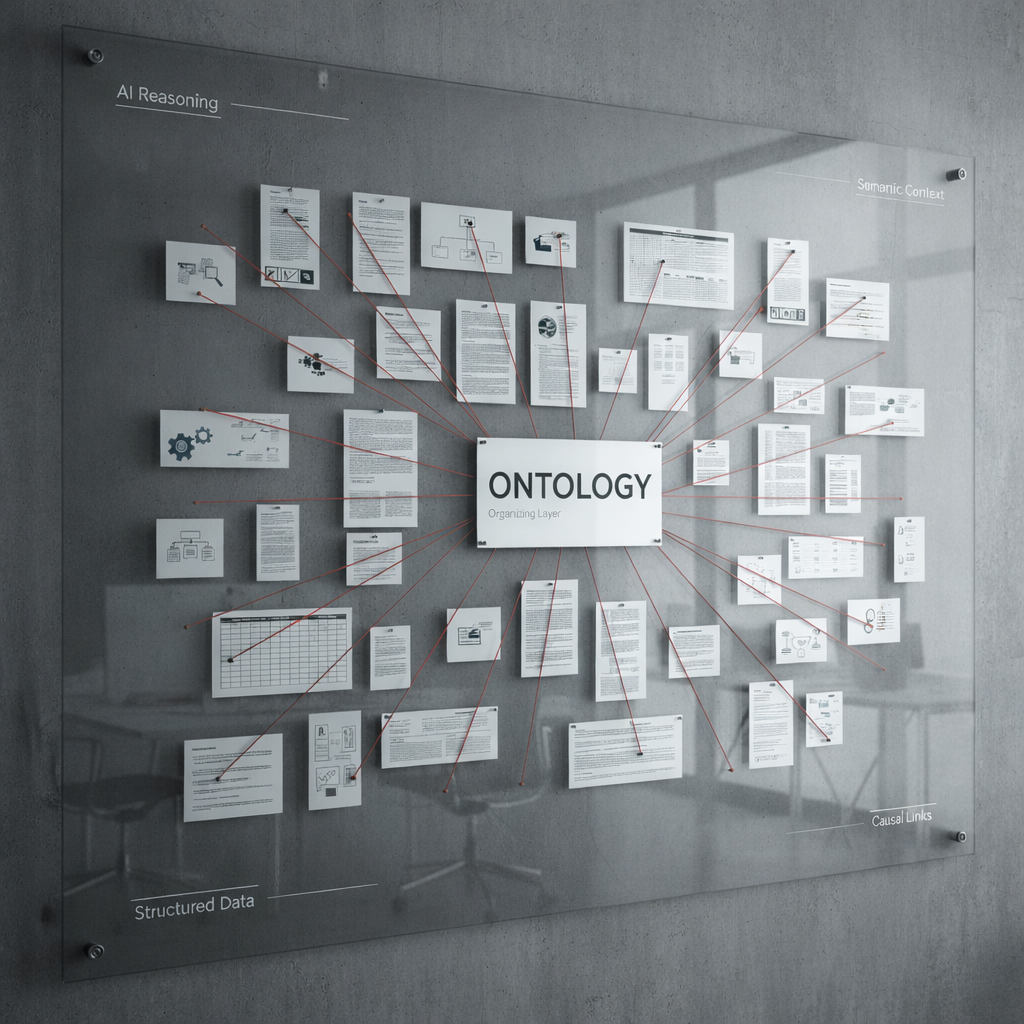

Traditional AI benchmarks no longer provide sufficient guidance for real-world deployment decisions. Leaderboard scores measure performance on curated test sets but miss critical aspects of operational reliability, cost efficiency, and failure modes.

The Car Wash Test illustrates this problem. It reveals how leaderboards overvalue polished averages and undervalue grounded reasoning. Models that score high on benchmarks often struggle with practical questions requiring common sense and contextual understanding.

Modern evaluation practice is shifting to portfolio evaluation including capability, reliability, cost-latency, and safety tests. This multifaceted approach provides a realistic picture of how AI systems perform under operational conditions.

A comprehensive evaluation portfolio includes:

- Capability tests: Can the system handle the full range of tasks your business requires, including edge cases?

- Reliability assessments: How consistently does it perform across different inputs, user types, and usage patterns?

- Cost-latency analysis: What are the trade-offs between speed, quality, and operational costs for typical workloads?

- Safety evaluations: What are the failure modes, and how does the system behave when it encounters inputs outside its training distribution?
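The four dimensions above can be kept separate in a results summary instead of being blended into one score. The following is a minimal sketch under that assumption; `ScenarioResult`, its fields, and `summarize` are hypothetical names, and the example figures in the usage note are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ScenarioResult:
    scenario: str
    passed: bool       # capability: did the output meet the acceptance check?
    latency_s: float   # wall-clock seconds for the request
    cost_usd: float    # illustrative per-request cost
    safe: bool         # safety: did the output avoid a policy violation?


def summarize(results: list[ScenarioResult]) -> dict[str, float]:
    """Report each dimension separately rather than as one blended score."""
    return {
        "pass_rate": sum(r.passed for r in results) / len(results),
        "worst_latency_s": max(r.latency_s for r in results),
        "mean_cost_usd": mean(r.cost_usd for r in results),
        "unsafe_count": sum(not r.safe for r in results),
    }
```

Feeding it two results, one passing in 0.8 seconds and one failing in 2.5 seconds, yields a pass rate of 0.5 and a worst-case latency of 2.5 seconds. Reporting the worst case alongside the average keeps failures from disappearing into a mean.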

This evaluation approach helps identify which AI solutions match your specific operational context. A model excelling at creative writing might underperform at structured data extraction, even if both score similarly on general benchmarks.

| Evaluation Type | Traditional Benchmark | Portfolio Approach |
|---|---|---|
| Focus | Average performance on test sets | Real-world scenario resilience |
| Metrics | Single accuracy score | Capability, cost, latency, safety |
| Use case fit | Generic comparisons | Context-specific validation |
| Failure visibility | Hidden in averages | Explicitly tested and documented |

Pro Tip: Test AI systems using scenarios directly sampled from your actual operational environment rather than relying on vendor-provided benchmarks. This reveals performance characteristics that matter for your specific use case.

This shift toward scenario-based evaluation connects directly to AI trends in software bug detection, where contextual testing outperforms generic quality metrics.

Mitigating AI risks and ensuring trustworthy AI implementation in 2026

AI systems can amplify mistakes at industrial scale, transforming small errors into major operational, legal, and reputational crises. In 2026, the risk is not whether to use AI, but how to prevent AI from amplifying operational and legal exposures.

Adverse AI outcomes cluster into eight categories including decision harm, compliance breach, and model drift. Understanding these risk categories helps you build appropriate safeguards before deployment.

Effective risk management requires a structured approach:

- Governance frameworks: Establish clear ownership, accountability structures, and decision-making protocols for AI systems.

- Data quality standards: Implement validation pipelines ensuring training and operational data meet quality, bias, and representativeness requirements.

- Secure deployments: Use containerization, access controls, and monitoring to prevent unauthorized access or manipulation.

- Continuous monitoring: Track model performance, data drift, and operational metrics to detect degradation before it impacts users.

- Incident response plans: Prepare procedures for quickly identifying, isolating, and remediating AI system failures.
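For the continuous monitoring step, one common way to quantify data drift is the Population Stability Index (PSI), which compares how a feature is distributed in a baseline sample versus a recent one. PSI is a choice made for this example rather than something the framework above prescribes, and the binning scheme and floor value below are simplifying assumptions.

```python
import math


def population_stability_index(
    baseline: list[float], current: list[float], bins: int = 10
) -> float:
    """PSI between two samples, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

Identical samples give a PSI of zero, and the value grows as the recent data moves away from the baseline. The alert threshold is something to calibrate against your own history instead of taking from a generic rule.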

Trust forms the foundation for successful AI adoption. Customers, regulators, and employees need confidence that AI systems behave predictably and align with organizational values.

| Failure Mode | Risk Impact | Mitigation Strategy |
|---|---|---|
| Model drift | Degraded accuracy over time | Continuous monitoring and retraining pipelines |
| Bias amplification | Discriminatory outcomes | Diverse training data and fairness audits |
| Security breach | Data exposure or manipulation | Encryption, access controls, audit logging |
| Compliance violation | Legal penalties and reputation damage | Regulatory alignment reviews and documentation |
| Hallucinations | Incorrect information delivered confidently | Validation layers and human review for high-stakes decisions |
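The hallucination mitigation in the last row, a validation layer plus human review for high-stakes decisions, can be sketched as a simple gate in front of delivery. Everything here is hypothetical: the `Draft` fields, the idea of a separate validator producing a confidence score, and the 0.8 default threshold are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Draft:
    text: str
    confidence: float  # hypothetical score in [0, 1] from a separate validator
    high_stakes: bool  # e.g. legal, medical, or financial content


def needs_human_review(draft: Draft, min_confidence: float = 0.8) -> bool:
    """Escalate anything high-stakes, plus anything the validator doubts."""
    return draft.high_stakes or draft.confidence < min_confidence


def deliver(draft: Draft) -> str:
    """Return the routing decision; a real system would enqueue or send here."""
    return "human_review" if needs_human_review(draft) else "auto_send"
```

Note that high-stakes drafts are escalated regardless of the confidence score, since a confident answer can still be wrong.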

AI without disciplined risk management is a liability multiplier. Tech leaders who treat AI as a strategic technology requiring governance and operational discipline turn potential vulnerabilities into competitive advantages.

Building trustworthy AI systems means integrating quality assurance throughout development, not adding it afterward. Approaches from AI operational risk management show how proactive testing and validation prevent costly failures.

Organizations that master AI risk mitigation in 2026 differentiate themselves through reliability and customer trust, capturing market share from competitors whose AI systems create friction or failures.

Explore Meduzzen’s AI software development services

Navigating AI trends and implementing secure, scalable solutions requires expertise in both cutting-edge technology and practical system design. Meduzzen’s artificial intelligence services help startups and growing businesses build AI systems that deliver measurable results while managing risk effectively.

Our team specializes in architecting AI solutions that prioritize operational reliability and business outcomes over model hype. Whether you need custom cloud software development services for scalable AI infrastructure or end-to-end product development integrating AI capabilities, we provide pre-vetted engineers who understand modern AI architecture patterns.

With over 150 engineers experienced in Python, AI, DevOps, and Cloud technologies, we deliver AI-powered solutions across FinTech, Healthcare, Logistics, and other industries. Our web services ensure seamless integration of AI capabilities into your existing platforms, maintaining performance and user experience while adding intelligence.

Frequently asked questions

What architectural elements most influence AI success in 2026?

Modular design, request routing efficiency, and built-in security frameworks determine AI success more than model selection. Systems with flexible architectures adapt to new models and requirements without complete rebuilds, reducing long-term costs and technical debt.

How do AI agents function as digital coworkers?

AI agents handle multi-step workflows, coordinate with other agents and humans, and make contextual decisions within defined parameters. They augment small teams by automating repetitive tasks while escalating complex decisions to human judgment, enabling startups to operate at enterprise scale.

Why are traditional AI benchmarks insufficient?

Benchmarks measure average performance on curated tests but hide failure modes and context-specific weaknesses. Portfolio evaluation across capability, cost, latency, and safety provides realistic insight into how systems perform under your actual operational conditions.

What AI risks should tech leaders prioritize?

Operational risks from model drift and hallucinations, compliance risks from regulatory violations, security risks from unauthorized access, and reputational risks from biased outcomes require immediate attention. Governance frameworks and continuous monitoring prevent these risks from becoming business crises.

How does evaluation shift impact AI vendor selection?

Vendors must demonstrate real-world performance under conditions matching your environment rather than citing benchmark scores. Request scenario-based testing, cost-latency analysis, and documented failure modes before committing to AI platforms or partnerships.