
Modern software engineering practices most teams get wrong

Business & Strategy

Apr 18, 2026

10 min read

Learn how modern software engineering practices like CI/CD, DORA metrics, and trunk-based development help startups build faster, more scalable engineering teams.

TL;DR:

- Modern software engineering practices focus on continuous, collaborative delivery using CI/CD, trunk-based development, and AI support.

- Tracking DORA metrics and team health signals helps startups measure and improve deployment speed, stability, and developer satisfaction.

- Effective team structures evolve with growth, emphasizing senior talent, clear ownership, automation, and disciplined habits over tools.

Most startups pour serious money into the latest tools, frameworks, and cloud services, expecting velocity to follow automatically. It rarely does. The teams that consistently ship faster, break less, and scale without chaos aren’t just better equipped. They practice differently. Modern software engineering practices now emphasize AI-augmented delivery, trunk-based development, CI/CD pipelines, and DevSecOps as the baseline for any startup serious about sustainable growth. This guide walks through what those practices actually look like in the real world, why metrics like DORA matter more than gut feel, and how to build a team structure that holds together as you scale.

Key Takeaways

| Point | Details |
|---|---|
| DORA metrics drive performance | Tracking deployment frequency, lead time, and failure rates gives startups a proven edge in team velocity and product quality. |
| Team structure must match scale | Engineering orgs should evolve from flat teams to pods and platform squads as they grow to avoid bottlenecks and coordination issues. |
| Automation is non-negotiable | Trunk-based development, CI/CD pipelines, and automation are essential to scale efficiently without losing reliability. |
| AI amplifies strong practices | AI and platform engineering only drive outsized gains when layered on solid team habits and workflows. |
| Continuous improvement wins | Regularly experimenting and measuring both product and team health outperforms chasing benchmarks or copying big company playbooks. |

Understanding modern software engineering practices

The word “modern” gets thrown around loosely in engineering circles. But when we talk about modern software engineering practices, we mean something specific: a shift from slow, siloed, release-heavy workflows toward continuous, collaborative, and feedback-driven delivery.

Traditional development models (think waterfall, or even early Agile implementations) treated software delivery as a series of handoffs. Design handed off to development. Development handed off to QA. QA handed off to ops. Each boundary introduced delay, miscommunication, and blame. The result was software that arrived late, broke in production, and frustrated everyone involved.

Modern practices dissolve those walls. Development methodologies have evolved to prioritize cross-functional ownership, where the team that builds a feature also owns its deployment and reliability. That shift alone changes how engineers think about quality.

Here’s what defines the modern engineering baseline today:

- Trunk-based development: Engineers commit to a shared main branch frequently, reducing merge conflicts and integration debt

- CI/CD pipelines: Automated build, test, and deploy cycles that catch problems early and ship faster

- DevSecOps: Security baked into every stage of delivery, not bolted on at the end

- AI-augmented SDLC: AI tools supporting code review, test generation, and anomaly detection across the development lifecycle

- Platform engineering: Internal developer platforms that reduce cognitive load and standardize how teams build and deploy

The contrast with legacy approaches is stark. A traditional team might deploy once a month after a painful release cycle. A modern team using these practices deploys multiple times per day with confidence.

“The goal isn’t to adopt every new tool. It’s to build habits and systems that make delivering quality software the path of least resistance.”

For startups, this matters enormously. You don’t have the luxury of a six-month release cycle. Your competitive edge depends on learning fast, iterating quickly, and keeping your systems stable enough to support growth. If you want a concrete starting point, our engineering checklist for scaling breaks down exactly which practices to prioritize at each stage of growth.

The empirical case for modern practices is strong. Teams that adopt trunk-based development and CI/CD consistently report shorter lead times, fewer production incidents, and higher developer satisfaction. These aren’t soft benefits. They translate directly into faster product iteration and lower operational costs.

What’s worth noting is that modern practices aren’t about perfection from day one. They’re about building the right habits early, so that as your team grows from five engineers to fifty, the foundation holds.

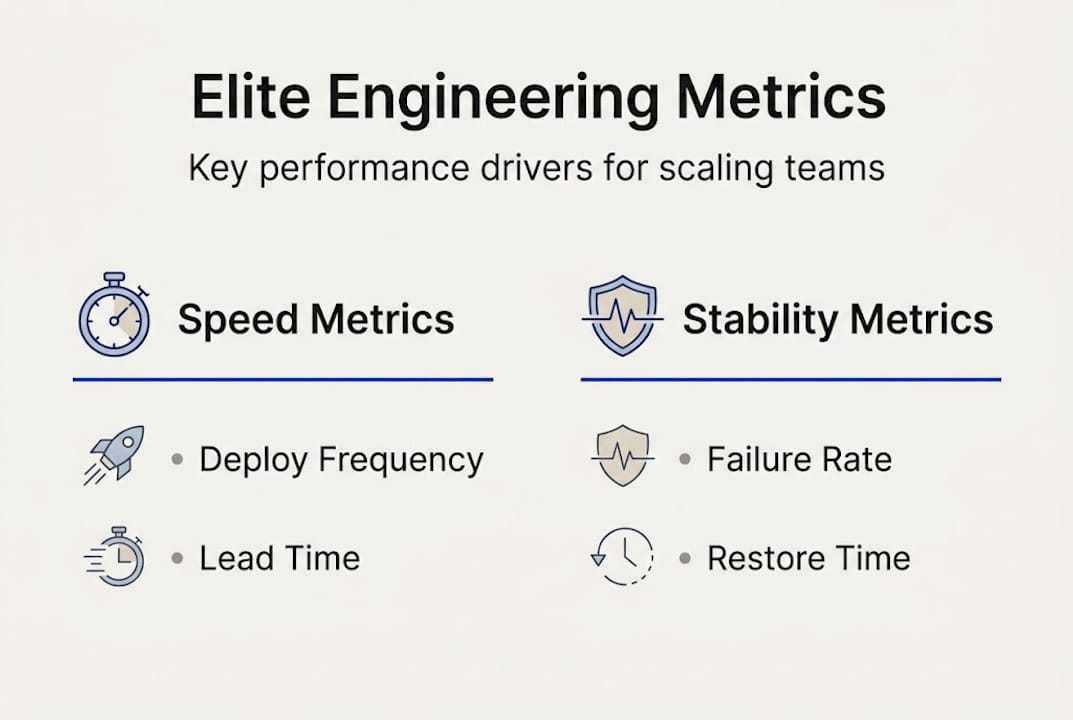

Key metrics and frameworks for elite engineering teams

You can’t improve what you don’t measure. That’s a cliché, but in engineering it’s a survival principle. The DORA (DevOps Research and Assessment) metrics have become the industry standard for measuring software delivery performance, and for good reason: they’re simple, actionable, and directly tied to business outcomes.

According to the State of DevOps 2024 report, elite teams deploy multiple times per day, with lead time for changes under one hour, a change failure rate between 0 and 15%, and time to restore service under one hour. Those numbers aren’t aspirational. They’re achievable with the right practices in place.

Here’s how the four core DORA metrics break down in practice:

| Metric | Elite performance | Low performance |
|---|---|---|
| Deployment frequency | Multiple times/day | Less than once/month |
| Lead time for changes | Less than 1 hour | 1 to 6 months |
| Change failure rate | 0 to 15% | 46 to 60% |
| Time to restore service | Less than 1 hour | 1 week or more |
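To make the table concrete, here’s a minimal Python sketch that turns a list of deployment records into the four metrics and checks them against the elite column. The `Deployment` record, its field names, and the choice of medians are our assumptions for illustration, not part of the DORA definitions; “multiple times/day” is approximated as more than one deploy per day on average.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape: adapt field names to what your pipeline logs.
    commit_time: datetime                     # when the change was committed
    deploy_time: datetime                     # when it reached production
    failed: bool = False                      # did this deploy cause a failure?
    restored_time: Optional[datetime] = None  # when service recovered, if it failed


def dora_metrics(deploys: list[Deployment], window_days: float) -> dict:
    """Summarize the four DORA metrics over a reporting window."""
    if not deploys:
        raise ValueError("no deployments in the window")
    lead_times = [d.deploy_time - d.commit_time for d in deploys]
    failures = [d for d in deploys if d.failed]
    restores = [d.restored_time - d.deploy_time
                for d in failures if d.restored_time is not None]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "median_time_to_restore": median(restores) if restores else None,
    }


def is_elite(m: dict) -> bool:
    """Compare against the elite column of the table (assumed thresholds)."""
    restore = m["median_time_to_restore"]
    return (m["deploys_per_day"] > 1
            and m["median_lead_time"] < timedelta(hours=1)
            and m["change_failure_rate"] <= 0.15
            and (restore is None or restore < timedelta(hours=1)))


# Example: three deploys in one day, one of which caused an incident.
t0 = datetime(2026, 4, 1, 9, 0)
deploys = [
    Deployment(t0, t0 + timedelta(minutes=30)),
    Deployment(t0 + timedelta(hours=2), t0 + timedelta(hours=2, minutes=40),
               failed=True, restored_time=t0 + timedelta(hours=3)),
    Deployment(t0 + timedelta(hours=5), t0 + timedelta(hours=5, minutes=20)),
]
m = dora_metrics(deploys, window_days=1)
```

With one failure in three deploys, the example misses the elite band on change failure rate even though its lead times are well under an hour.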

The gap between elite and low-performing teams is not incremental. It’s generational. A low-performing team deploying once a month simply cannot compete with one shipping dozens of times daily.

But DORA metrics alone don’t tell the whole story. The same DevOps research emphasizes that teams should also track developer experience (DX) and team health signals like burnout rates, onboarding time, and psychological safety. A team hitting elite DORA numbers while burning out its engineers is building on sand.

Key health metrics to track alongside DORA:

- Developer satisfaction scores (quarterly pulse surveys work well)

- Onboarding time to first meaningful contribution (target: under two weeks)

- Meeting load vs. deep work time ratio

- Incident response fatigue (how often engineers are paged outside business hours)
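Of the health signals above, after-hours paging is one of the easiest to compute from data you already have. Here’s a rough sketch, assuming you can export page timestamps from your alerting tool and that a 9:00 to 18:00, Monday-to-Friday window matches your team’s working hours (both are assumptions to adjust):

```python
from datetime import datetime


def after_hours_share(pages: list[datetime],
                      start_hour: int = 9, end_hour: int = 18) -> float:
    """Fraction of pages that fell outside business hours.

    Assumes a Monday-to-Friday week and timestamps already converted to the
    paged engineer's local time. Business hours are start_hour <= hour < end_hour.
    """
    if not pages:
        return 0.0

    def outside(ts: datetime) -> bool:
        weekend = ts.weekday() >= 5  # Saturday is 5, Sunday is 6
        return weekend or not (start_hour <= ts.hour < end_hour)

    return sum(outside(p) for p in pages) / len(pages)


# Example: a Wednesday-morning page, one late that night,
# one on Saturday, and one just before 9:00 on Thursday.
pages = [
    datetime(2026, 4, 15, 10, 0),
    datetime(2026, 4, 15, 23, 0),
    datetime(2026, 4, 18, 11, 0),
    datetime(2026, 4, 16, 8, 59),
]
share = after_hours_share(pages)
```

Tracked monthly per on-call rotation, a rising share is an early warning of the burnout the DORA research cautions about.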

Our DevOps service strategies are built around exactly this balance: delivery speed without burning out the people doing the work.

Pro Tip: Don’t benchmark obsessively against industry averages. The State of DevOps 2024 recommends running small, time-boxed experiments to improve your own baseline. A 10% improvement in your lead time matters more than hitting someone else’s elite threshold.

The practical move for most startups is to instrument your pipeline first. You can’t track deployment frequency if your deployments aren’t logged. Start with the data you can collect today, then build toward the metrics that matter most for your current growth stage.
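If your pipeline doesn’t record deployments yet, even an append-only log is enough to start. This is a minimal sketch with a hypothetical JSON Lines schema (`service`, `commit_sha`, `commit_time`, `deploy_time`, `succeeded`); in practice you’d call something like `log_deployment` as the last step of your deploy job and ship the file to wherever you keep metrics.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_deployment(log_path: Path, service: str, commit_sha: str,
                   commit_time: datetime, succeeded: bool) -> dict:
    """Append one deployment event as a JSON line (hypothetical schema)."""
    event = {
        "service": service,
        "commit_sha": commit_sha,
        "commit_time": commit_time.isoformat(),
        "deploy_time": datetime.now(timezone.utc).isoformat(),
        "succeeded": succeeded,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(event) + "\n")
    return event


def read_deployments(log_path: Path) -> list[dict]:
    """Load every logged deployment event; an absent log means no deploys yet."""
    if not log_path.exists():
        return []
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Commit time plus deploy time gives you lead time, and the `succeeded` flag is a first approximation of change failure rate, so this one log already feeds three of the four DORA metrics.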

Team structures, collaboration, and scaling your engineering org

Metrics give you a dashboard. But sustainable engineering velocity is built by people, and how you organize those people changes everything as you grow.

Research on scaling engineering teams shows that team structures need to evolve through distinct stages: flat teams work well from 5 to 10 engineers, pods with dedicated ownership emerge between 10 and 25, and platform teams with formal career ladders become necessary at 25 and beyond. Trying to run a 40-person engineering org with the same flat structure you used at 8 people is like trying to navigate a highway with a bicycle map.

Here’s a comparison of how team structure should evolve:

| Org size (engineers) | Structure | Strengths | Risks |
|---|---|---|---|
| 5 to 10 | Flat, generalist | Fast decisions, low overhead | Knowledge silos, no specialization |
| 10 to 25 | Feature pods, EMs | Clear ownership, parallel work | Coordination overhead increases |
| 25 and up | Platform and domain teams | Scalability, career growth | Bureaucracy risk, slower alignment |

One of the most common and costly mistakes early-stage startups make is delaying senior engineering hires. The instinct is to hire junior engineers to stretch the budget. But senior engineers don’t just write better code. They establish the patterns, review standards, and architectural decisions that every engineer who joins after them will inherit. Hire senior talent early, even if it means hiring fewer people overall.

As teams grow, quality gates become critical infrastructure:

- Code review standards: Define what a good review looks like, not just that reviews happen

- Automated testing requirements: Set minimum coverage thresholds that every PR must meet before it merges

- Architecture Decision Records (ADRs): Document why decisions were made, not just what was decided

- Runbooks: Operational documentation that lets any engineer handle incidents without heroics

- CI/CD enforcement: No manual deployments, ever
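Coverage thresholds are easy to enforce mechanically. Here’s a small sketch of a merge gate, assuming your test run produces a Cobertura-style XML report (coverage.py’s `coverage xml` does); the function names and the 0.0 to 1.0 threshold convention are our own.

```python
import xml.etree.ElementTree as ET


def coverage_gate(report_xml: str, minimum: float) -> bool:
    """Return True when overall line coverage meets `minimum` (0.0 to 1.0).

    Assumes a Cobertura-style report whose root <coverage> element
    carries a `line-rate` attribute.
    """
    root = ET.fromstring(report_xml)
    if root.tag != "coverage" or "line-rate" not in root.attrib:
        raise ValueError("not a Cobertura-style coverage report")
    return float(root.attrib["line-rate"]) >= minimum


def main(report_path: str, minimum: float) -> int:
    """CI entry point: exit code 0 lets the PR merge, 1 blocks it."""
    with open(report_path, encoding="utf-8") as f:
        return 0 if coverage_gate(f.read(), minimum) else 1
```

If you’re on coverage.py, `coverage report --fail-under=80` gives you the same gate without custom code; a script like this mainly helps when you want to combine coverage with other merge checks.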

Pro Tip: When you hit 15 engineers, assign explicit ownership of each service or domain. Ambiguous ownership is the single biggest source of production incidents in scaling teams. Our work building scalable SaaS team structures confirms this pattern across industries.
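One lightweight way to make ownership explicit is a map from directories to teams that CI can check. The sketch below assumes a hypothetical `OWNERS` dictionary and uses longest-prefix matching; it is deliberately simpler than GitHub’s CODEOWNERS format, which has its own pattern rules.

```python
from typing import Optional


def owner_for(path: str, owners: dict[str, str]) -> Optional[str]:
    """Return the team that owns `path`, preferring the most specific directory."""
    best: Optional[tuple[str, str]] = None
    for prefix, team in owners.items():
        p = prefix.rstrip("/")
        if path == p or path.startswith(p + "/"):
            if best is None or len(p) > len(best[0]):
                best = (p, team)
    return best[1] if best else None


def unowned(paths: list[str], owners: dict[str, str]) -> list[str]:
    """List paths no team owns: the ambiguity the tip above warns about."""
    return [p for p in paths if owner_for(p, owners) is None]


# Hypothetical ownership map for a ~15-engineer org.
OWNERS = {
    "services": "platform",
    "services/billing": "payments",
    "services/search/": "discovery",
}
```

Running `unowned` over every path in the repo and failing the build when it returns anything keeps new services from landing without an owner.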

The DevOps and team performance research is clear: high-performing teams invest in psychological safety and clear ownership as much as they invest in tooling. Structure without trust is just bureaucracy.

From code to production: Best practices for CI/CD, trunk-based development, and automation

Let’s get specific about the day-to-day practices that separate teams who ship with confidence from those who dread release day.

The trunk-based versus GitFlow debate has largely been settled for startups. Scaling engineering guidance is clear: for teams of 5 to 50 engineers, short-lived feature branches with pull requests and automated preview environments outperform GitFlow and its added complexity. GitFlow was designed for teams managing multiple long-lived release versions simultaneously. Most startups don’t need that. What they need is fast feedback and clean integration.

Trunk-based development, where engineers merge small changes to the main branch frequently, keeps integration debt low and makes CI/CD pipelines dramatically more effective. The key discipline is keeping branches short-lived, ideally under 24 hours.
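Keeping branches under 24 hours is easier when something flags the ones that aren’t. Here’s a minimal sketch, assuming you already have each open branch’s oldest unmerged commit time (one way to get it is the first line of `git log main..<branch> --reverse --format=%cI`); the function name and the 24-hour default are illustrative.

```python
from datetime import datetime, timedelta


def stale_branches(first_commit: dict[str, datetime], now: datetime,
                   max_age: timedelta = timedelta(hours=24)) -> list[str]:
    """Branches whose oldest unmerged commit is older than `max_age`, oldest first."""
    stale = [(ts, name) for name, ts in first_commit.items() if now - ts > max_age]
    return [name for ts, name in sorted(stale)]
```

Posting this list to the team channel each morning is usually enough of a nudge; the point is visibility, not enforcement.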

The 2025 DORA Report highlights AI-augmented pipelines as a growing differentiator, with teams using AI for automated code review, test generation, and deployment risk scoring seeing measurable cycle time improvements.

“Automation isn’t about replacing engineers. It’s about removing the friction that stops engineers from doing their best work.”

Your non-negotiable automation checklist:

- Automated unit and integration tests that run on every commit

- Static analysis and linting enforced before code review begins

- Preview environments spun up automatically for every pull request

- Automated security scanning (SAST/DAST) integrated into the pipeline

- One-click rollback capability for every production deployment

- Deployment frequency tracking built into your pipeline tooling
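The checklist above boils down to an ordered set of gates where cheap checks run first and any failure stops the line. Here’s a minimal fail-fast runner in Python to show the shape; real CI systems such as GitHub Actions or GitLab CI express this in their own config, and the tools named in `EXAMPLE_STAGES` are illustrative, not a recommendation.

```python
import subprocess
from dataclasses import dataclass


@dataclass
class StageResult:
    name: str
    returncode: int


def run_pipeline(stages: list[tuple[str, list[str]]]) -> list[StageResult]:
    """Run each stage's command in order; stop at the first failure (fail fast)."""
    results: list[StageResult] = []
    for name, argv in stages:
        proc = subprocess.run(argv, check=False)
        results.append(StageResult(name, proc.returncode))
        if proc.returncode != 0:
            break  # later, slower stages never run on a broken commit
    return results


# Illustrative stage list: swap in your own linters, test runners and scanners.
EXAMPLE_STAGES = [
    ("lint", ["ruff", "check", "."]),
    ("unit + integration tests", ["pytest", "-q"]),
    ("security scan (SAST)", ["bandit", "-r", "src"]),
]
```

Ordering matters here: putting linting before the test suite means reviewers never see a PR that fails on formatting.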

Our Git best practices resource covers branching strategies in detail, while our guide on AI trends in CI/CD explores how AI-assisted bug detection is reshaping pipeline design in 2026.

Pro Tip: If your CI pipeline takes longer than 10 minutes, engineers will start skipping it mentally, even if they can’t skip it technically. Invest in parallelizing your test suite early. A fast pipeline is a pipeline people trust.
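One common way to parallelize is to split the suite across CI workers by historical runtime rather than by file count. The sketch below uses a greedy longest-processing-time-first heuristic; it assumes you have per-test durations from previous runs, and `shard_tests` is a hypothetical name. Ready-made tools such as pytest-xdist (`-n`) cover the single-machine case.

```python
import heapq


def shard_tests(durations: dict[str, float], workers: int) -> list[list[str]]:
    """Split tests across workers, balancing total runtime.

    Greedy longest-processing-time-first: sort tests by duration, longest
    first, and always hand the next test to the least-loaded worker.
    """
    if workers < 1:
        raise ValueError("workers must be >= 1")
    shards: list[list[str]] = [[] for _ in range(workers)]
    heap = [(0.0, i) for i in range(workers)]  # (total seconds, worker index)
    for test, secs in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        load, i = heapq.heappop(heap)
        shards[i].append(test)
        heapq.heappush(heap, (load + secs, i))
    return shards
```

Worker `i` then runs only `shards[i]`, so pipeline wall-clock time is roughly the load of the busiest shard rather than the sum of all tests.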

Avoid big-bang releases at all costs. Shipping large batches of changes at once amplifies risk and makes root-cause analysis nearly impossible when something breaks. Small, frequent deployments with feature flags give you control without sacrificing velocity.
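Percentage rollouts are what make feature flags a safety net: you can expose a change to a small slice of users and switch it off without a redeploy. Here’s a minimal sketch of the bucketing logic, assuming a hypothetical in-house `in_rollout` check (a dedicated feature-flag service would normally handle this for you).

```python
import hashlib


def in_rollout(flag: str, user_id: str, percent: float) -> bool:
    """Deterministically decide whether `user_id` sees `flag`.

    Hashing flag and user together gives each user a stable bucket in
    [0, 100), so raising `percent` only ever adds users to the cohort.
    """
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000 / 100  # 0.00 to 99.99
    return bucket < percent
```

Because each user’s bucket depends only on the flag name and user ID, moving a flag from 10% to 50% keeps the first cohort enabled, and setting it back to 0 acts as the rollback.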

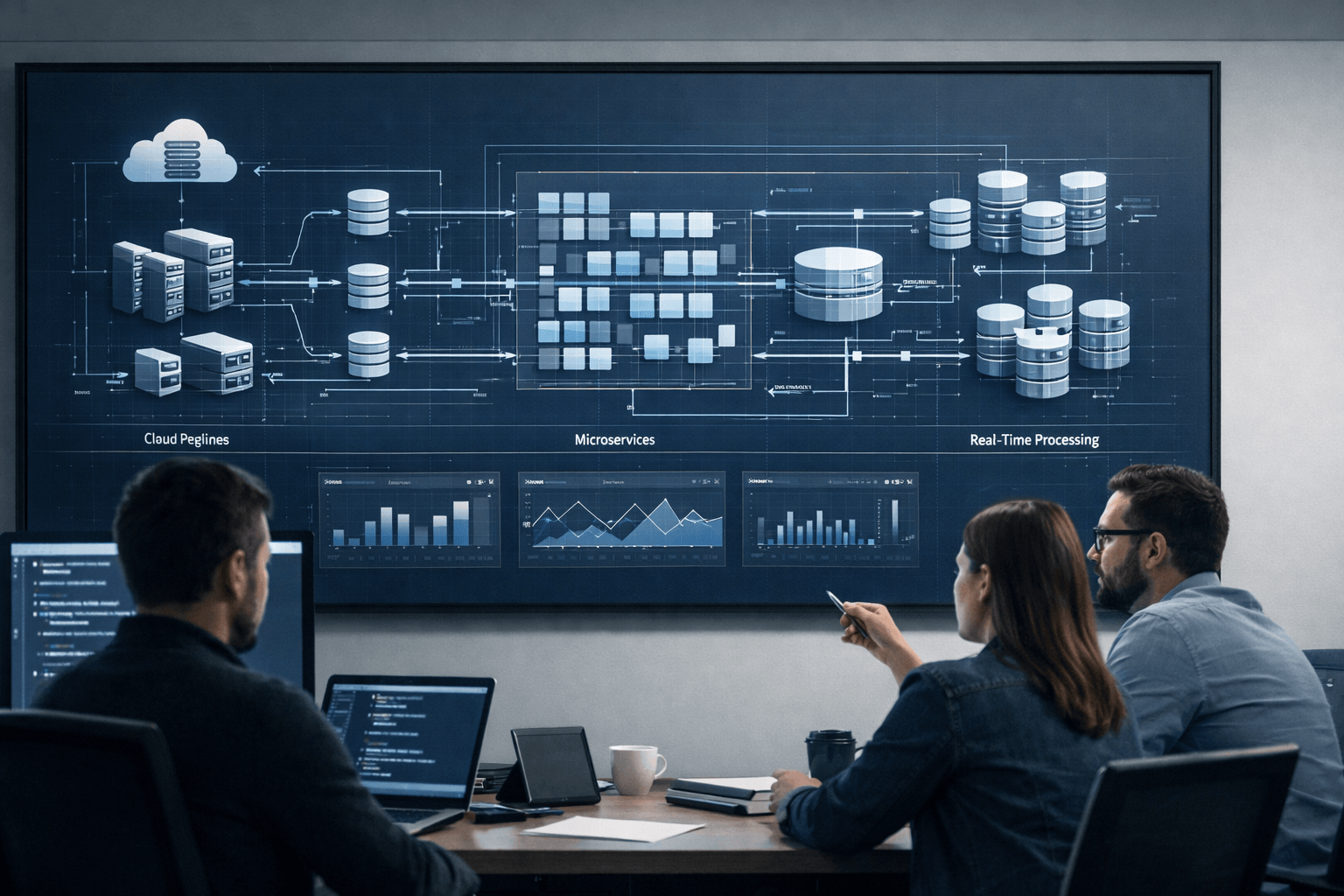

Achieving balance: Speed, stability, and the role of AI-platform integration

Every CTO eventually faces the same tension: the business wants faster shipping, the team wants stability, and everyone is watching AI tools promise to solve both at once. The reality is more nuanced.

The 2025 DORA Report found that 75 to 80% of engineering teams report productivity gains from AI adoption, but with a critical caveat: AI amplifies what’s already there. Strong teams with clean processes get 30 to 40% faster cycle times. Teams with poor fundamentals see little improvement.

AI doesn’t fix broken processes. It accelerates them, for better or worse.

The same pattern holds across software development methodologies: teams that adopt new frameworks without addressing underlying workflow mismatches rarely see the promised gains. The methodology becomes another layer of complexity rather than a source of clarity.

What actually works:

- Prioritize workflow clarity before adding AI tools. If your engineers can’t describe your deployment process in two sentences, AI won’t help.

- Use AI for high-friction, low-creativity tasks first: test generation, documentation drafts, code review summaries

- Monitor DORA metrics before and after AI adoption to measure real impact, not perceived impact

- Protect deep work time even as AI tools multiply. Constant context-switching erases productivity gains

- Run small experiments rather than org-wide tool rollouts. Find what works for your specific team before scaling it
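Measuring “real impact, not perceived impact” can start with comparing a DORA metric on either side of the date a tool was rolled out. Here’s a rough sketch, assuming you have (merge date, lead time in hours) samples; `lead_time_change` is a hypothetical helper. Keep in mind that a before/after comparison can’t separate the AI tool from anything else that changed in the same period, which is another reason to prefer small, time-boxed experiments.

```python
from datetime import date
from statistics import median


def lead_time_change(samples: list[tuple[date, float]], adoption: date) -> float:
    """Percent change in median lead time (hours) from before to after `adoption`.

    Negative values mean changes reach production faster after the cutoff.
    """
    before = [hours for day, hours in samples if day < adoption]
    after = [hours for day, hours in samples if day >= adoption]
    if not before or not after:
        raise ValueError("need samples on both sides of the adoption date")
    b, a = median(before), median(after)
    if b == 0:
        raise ValueError("baseline median lead time is zero")
    return (a - b) / b * 100
```

Medians keep one unusually slow change from dominating the comparison, which matters when a small team only merges a handful of changes per week.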

Platform engineering plays a complementary role here. When your internal developer platform handles infrastructure provisioning, environment management, and deployment pipelines, engineers stop losing hours to operational friction. That’s where AI and platform engineering together create a genuine productivity edge.

Our resources on AI-powered software practices and building scalable AI SaaS go deeper on how to integrate AI capabilities without destabilizing your delivery pipeline.

The honest truth is that speed and stability aren’t opposites. They’re both outcomes of the same thing: disciplined, well-structured engineering habits practiced consistently over time.

A modern CTO’s perspective: What most guides get wrong about engineering transformation

Most engineering transformation guides hand you a list of tools and call it a roadmap. Adopt Kubernetes. Implement GitOps. Roll out AI code assistants. The list grows, the complexity compounds, and somehow the team is slower than before.

Here’s what we’ve seen across a decade of working with scaling startups: the bottleneck is almost never the toolstack. It’s the habits, the communication patterns, and the unspoken assumptions about how work gets done.

The 2024 DORA research is direct about this: prioritizing capabilities like trunk-based development, fast feedback loops, user-centricity, and platform engineering matters far more than which specific tools you choose. Capabilities are durable. Tools are replaceable.

Three mistakes we see founders make repeatedly:

First, copying big-company processes without the context that makes those processes work. Google’s engineering culture didn’t emerge from a playbook. It emerged from years of specific constraints, failures, and deliberate iteration. Importing their review process into a 12-person startup creates ceremony without value.

Second, treating engineering transformation as a one-time project rather than a continuous practice. You don’t implement modern practices. You build them, refine them, and protect them as the team grows.

Third, underestimating how much senior engineering judgment shapes culture. One strong senior engineer who models good habits is worth more than any framework adoption. Our AI-innovation with Python guide touches on this when discussing how technical leadership shapes long-term architecture decisions.

The real transformation happens quietly, in code reviews, in how incidents are handled, and in whether your team feels safe raising concerns. Build that culture first. The tools will follow.

Accelerate your team with modern engineering expertise

Reading about modern engineering practices is one thing. Implementing them while shipping product, managing a growing team, and keeping investors happy is another challenge entirely. That gap between knowing and doing is where most startups lose momentum.

At Meduzzen, we’ve spent over 10 years helping startups and scaling companies close exactly that gap. Our engineers bring custom DevOps expertise that goes beyond tooling setup: we help you build the pipelines, team habits, and quality gates that hold up under real growth pressure. Whether you need modern web development partners to accelerate your product roadmap or staff augmentation to add pre-vetted senior engineers who integrate fast, we’re built for the kind of long-term partnership that actually moves the needle. If Python is your stack, see how we hire and vet Python developers. Let’s talk about where your engineering velocity stands today.

Frequently asked questions

Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Time to Restore Service are the four key DORA metrics that define elite software delivery performance and give startups a clear baseline for improvement.

Most engineering teams (75 to 80%) report productivity gains from AI adoption, and teams with strong foundational practices see 30 to 40% faster cycle times, but AI adds little value for teams that haven’t yet established clean workflows and disciplined delivery habits.

Shift from flat teams at 5 to 10 engineers to pods with engineering managers at 10 to 25, then introduce platform teams and formal career ladders once you reach 25 engineers or more.

Trunk-based development enables faster cycle times and cleaner CI/CD integration, while GitFlow’s branching complexity slows team velocity and complicates releases for teams that don’t manage multiple long-lived versions simultaneously.