
How We Classified Thousands of AI Voice Agent Calls at 97% Accuracy

AI & Automation

Apr 21, 2026


Most voice agent guides skip the hardest part: deciding whether a human, voicemail, or IVR menu answered the phone. We built classifiers that make that decision in 0.33 milliseconds at 97% accuracy, navigate IVR menus with fine-tuned BERT, and summarize calls at 95% accuracy on test data.

Key takeaways

- Our answering machine detection model (logistic regression on TF-IDF) achieves 97% accuracy with 0.33ms inference time. We chose it over deep learning because the speed and accuracy were already sufficient for production.

- Scripted phrase classification (SVM, 5 categories) reaches 99% accuracy and fires pre-recorded audio instantly when confidence exceeds 0.46, cutting response latency from 2 seconds to under 500ms.

- We abandoned LLM-based IVR navigation after two weeks. The model could not consistently pick the right menu option when multiple valid choices existed. We moved to semantic similarity + SVM, then to fine-tuned BERT, which returns the digit to press directly from raw menu text.

- Splitting one summarization prompt into three single-task prompts improved real-call accuracy from 40-50% to 60-65%. Switching from Groq-hosted GPT-OSS 120B to GPT-5.2 raised accuracy on our test set from 79% to 95%.

- The tag-based extraction approach (LLM extracts 25+ structured tags, backend applies 20-30 rules) gives far more control than asking an LLM to classify directly.

The problem nobody writes about

You have a voice agent. It dials a phone number. Something answers.

That something could be a human receptionist. It could be a recorded voicemail greeting. It could be an automated menu asking you to press 1 for billing. It could be hold music. It could be a fax tone.

Your agent has to classify what it just heard, decide what to do, and execute that decision in milliseconds. If it speaks to a voicemail, it wastes expensive LLM tokens on a recording. If it tries to press buttons during a human conversation, the call fails. If it waits too long to decide, the human hangs up.

This is the classification layer that sits between the raw audio stream and the conversational AI. It determines whether the LLM should even activate. In an AI outbound calling system built to scale to 200,000 calls per month, getting this layer wrong does not cause one bad call. It causes thousands. Whether you are building an AI cold calling agent or an automated verification system, the classification problem is the same.

Answering machine detection and AI phone screening: why logistic regression was enough

The first gate in every outbound call is answering machine detection. Is this a human or a machine?

Commercial platforms like Twilio offer built-in answering machine detection and AI phone screening, but detection takes several seconds while the algorithm analyzes the greeting, and an agent that waits for the verdict sits in dead air. In an AI voice agent where the first response must arrive in under a second, that silence kills the call before it starts.

We built our own classifier. The architecture is deliberately simple.

The model

Algorithm: Logistic regression

Features: TF-IDF vectorization on the STT transcript (the first 2-3 seconds of audio, transcribed by Deepgram)

Labeled data: 1,462 samples in total. Approximately 60% come from real call recordings and 40% are synthetic (generated by GPT using real examples as templates). The synthetic data was text only, not generated audio.

Dataset composition: the production model uses 783 of these samples (509 non-human, 274 human); the 157-sample test set below is roughly 20% of them. The remaining samples are expanded validation data that bring the total to 1,462.
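A minimal, dependency-free sketch of the same idea follows: TF-IDF features over the early transcript feed a binary logistic regression trained by gradient descent. The class name, toy transcripts, learning rate, and epoch count are illustrative assumptions rather than our production code; with scikit-learn, TfidfVectorizer and LogisticRegression in a Pipeline would be the usual shortcut.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase word tokens; a simple stand-in for a real tokenizer."""
    return re.findall(r"[a-z']+", text.lower())


class TfidfLogReg:
    """Hypothetical minimal TF-IDF + binary logistic regression (1 = HUMAN, 0 = NON_HUMAN)."""

    def __init__(self, lr: float = 0.5, epochs: int = 300):
        self.lr = lr
        self.epochs = epochs
        self.idf = {}
        self.weights = {}
        self.bias = 0.0

    def _vectorize(self, text: str) -> dict:
        # Raw term counts scaled by IDF, then L2-normalised; unseen words are dropped.
        counts = Counter(t for t in tokenize(text) if t in self.idf)
        vec = {t: c * self.idf[t] for t, c in counts.items()}
        norm = math.sqrt(sum(v * v for v in vec.values())) or 1.0
        return {t: v / norm for t, v in vec.items()}

    def _prob(self, vec: dict) -> float:
        z = self.bias + sum(self.weights.get(t, 0.0) * v for t, v in vec.items())
        return 1.0 / (1.0 + math.exp(-z))

    def fit(self, texts: list, labels: list) -> "TfidfLogReg":
        n = len(texts)
        df = Counter(t for text in texts for t in set(tokenize(text)))
        # Smoothed IDF: ln((1 + n) / (1 + df)) + 1
        self.idf = {t: math.log((1 + n) / (1 + d)) + 1 for t, d in df.items()}
        vectors = [self._vectorize(text) for text in texts]
        self.weights = {t: 0.0 for t in self.idf}
        for _ in range(self.epochs):
            grad_w = Counter()
            grad_b = 0.0
            for vec, y in zip(vectors, labels):
                err = self._prob(vec) - y  # gradient of log loss w.r.t. the logit
                for t, v in vec.items():
                    grad_w[t] += err * v
                grad_b += err
            for t, g in grad_w.items():
                self.weights[t] -= self.lr * g / n
            self.bias -= self.lr * grad_b / n
        return self

    def predict_proba(self, text: str) -> float:
        """Probability that the transcript came from a live human."""
        return self._prob(self._vectorize(text))


# Toy, hypothetical transcripts of the first seconds of a call.
train_texts = [
    "hello this is sarah speaking",
    "hi good morning how can i help you",
    "hello who is this",
    "yeah hi this is mike",
    "you have reached the voicemail of",
    "please leave a message after the tone",
    "the person you are trying to reach is not available",
    "thank you for calling press one for billing",
]
train_labels = [1, 1, 1, 1, 0, 0, 0, 0]

model = TfidfLogReg().fit(train_texts, train_labels)
print(f"{model.predict_proba('hi this is john'):.2f}")
```

At inference time the work is a sparse dot product over a handful of tokens, which is why a model like this runs in well under a millisecond.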

The results

| Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| HUMAN | 0.96 | 0.95 | 0.95 | 55 |
| NON_HUMAN | 0.97 | 0.98 | 0.98 | 102 |
| Accuracy | | | 0.97 | 157 |

97% accuracy. 0.33 milliseconds inference time.

We did not test CNN on spectrograms or BERT on transcripts for this task. We started with the simplest possible model and it worked. When your classifier needs to run in under a millisecond on every single call, and logistic regression already gives you 97% accuracy, adding complexity is not engineering. It is vanity.

Where it fails

The most common misclassification: operator greetings that do not include a personal name.

Phrases like “Thank you for calling our support line, take care” or “Thank you for calling your care coordinators, what is your first and last name?” sit at the boundary between human and machine patterns. The TF-IDF features for these phrases overlap significantly with automated greetings.

Out of 157 test samples, 5 were misclassified: 3 humans tagged as machines, 2 machines tagged as humans. For a system making thousands of calls, 3% error translates to real failures. But at 0.33ms per classification, the cost of being wrong on 3% of calls is far lower than the cost of adding 500ms of processing time to 100% of calls with a heavier model.
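The per-class numbers in the report follow directly from those five errors. A quick arithmetic check, using only the counts stated above:

```python
# Counts from the text: 55 HUMAN and 102 NON_HUMAN test samples,
# 3 humans tagged as machines, 2 machines tagged as humans.
human_total, machine_total = 55, 102
human_as_machine, machine_as_human = 3, 2

human_tp = human_total - human_as_machine        # 52 humans classified correctly
machine_tp = machine_total - machine_as_human    # 100 machines classified correctly


def prf(tp: int, fp: int, fn: int) -> tuple:
    """Precision, recall and F1 for one class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


human = prf(human_tp, fp=machine_as_human, fn=human_as_machine)
machine = prf(machine_tp, fp=human_as_machine, fn=machine_as_human)
accuracy = (human_tp + machine_tp) / (human_total + machine_total)

for name, scores in [("HUMAN", human), ("NON_HUMAN", machine)]:
    print(name, " ".join(f"{s:.2f}" for s in scores))
print("accuracy", f"{accuracy:.2f}")
# HUMAN 0.96 0.95 0.95
# NON_HUMAN 0.97 0.98 0.98
# accuracy 0.97
```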

Scripted phrase classification: responding in 500ms instead of 2 seconds

When the system determines it is speaking to a human, the next problem is speed. An LLM-generated response takes 1.5 to 2.5 seconds. A human will hang up in that time.

The solution: classify common opening phrases and respond with pre-recorded audio instantly.

Five categories, 420 training samples

| Category | Samples | Example |
|---|---|---|
| Greeting | 152 | “Hello,” “Hi, this is Sarah” |
| Thank you | 61 | “Thank you,” “Thanks, I’ll connect you” |
| Okay / confirmation | 87 | “Okay,” “Sure,” “Alright” |
| Have a good day | 55 | “Have a great day,” “Goodbye” |
| Can you repeat | 65 | “Sorry, what was that?” |

Algorithm: Support Vector Machine (SVM)

Accuracy: 99% on test set (84 samples, 20% holdout)

| Category | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| Greeting | 1.00 | 1.00 | 1.00 | 31 |
| Thank you | 1.00 | 1.00 | 1.00 | 12 |
| Okay/confirmation | 1.00 | 0.94 | 0.97 | 17 |
| Have a good day | 0.92 | 1.00 | 0.96 | 11 |
| Can you repeat | 1.00 | 1.00 | 1.00 | 13 |
| Accuracy | | | 0.99 | 84 |

Only one misclassification in the entire test set. In production, “thank you” and “okay” were merged into a single backend category without retraining, since both trigger similar scripted responses.

The confidence threshold

If the SVM’s raw confidence score falls below 0.46, the system does not fire a scripted phrase. It routes to the LLM for a generated response instead. This raw score is not a probability and is not bounded between 0 and 1, so the threshold was tuned empirically: lower values caused too many incorrect scripted responses, higher values sent too many simple phrases to the expensive LLM path.

One additional rule: the greeting category can only trigger once per call. If the system already greeted the person, it will not greet them again, regardless of confidence score. This prevents the agent from introducing itself three times in a single conversation.
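The routing rules above fit in a few lines. This is a hypothetical sketch: the label names, the backend category names, and the choice to send a repeated greeting to the LLM are assumptions; only the 0.46 threshold, the greeting-once rule, and the merged “thank you”/“okay” category come from our system.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.46  # raw SVM score threshold, tuned empirically

# Hypothetical label -> backend category map; "thank you" and "okay" share one entry.
BACKEND_CATEGORY = {
    "greeting": "greeting",
    "thank_you": "acknowledgement",
    "okay_confirmation": "acknowledgement",
    "have_a_good_day": "farewell",
    "can_you_repeat": "repeat",
}


@dataclass
class CallState:
    greeted: bool = False  # the greeting may fire at most once per call


def route_phrase(label: str, score: float, state: CallState) -> str:
    """Return a scripted backend category, or "llm" to generate a response instead."""
    if score < CONFIDENCE_THRESHOLD:
        return "llm"
    if label == "greeting":
        if state.greeted:
            return "llm"  # assumption: a repeat greeting falls through to the LLM
        state.greeted = True
    return BACKEND_CATEGORY[label]


state = CallState()
print(route_phrase("greeting", 1.3, state))           # greeting
print(route_phrase("greeting", 1.3, state))           # llm (already greeted)
print(route_phrase("okay_confirmation", 0.9, state))  # acknowledgement
print(route_phrase("thank_you", 0.2, state))          # llm (below threshold)
```

Keeping the per-call state outside the classifier means the SVM stays stateless and the business rule can change without retraining.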

In most human interactions, the first exchange uses a scripted phrase. The agent responds in under 500 milliseconds. The human hears an immediate reply and stays on the line. By the time the conversation moves to substantive topics, the LLM has already loaded the RAG context and is ready to generate.

IVR navigation: why we abandoned the LLM approach in two weeks

When an outbound voice agent calls a US business number, it will hit an automated phone menu more often than it reaches a human. Navigating these menus (listen to options, determine the right department, press the correct digit) is one of the hardest classification problems in voice AI.

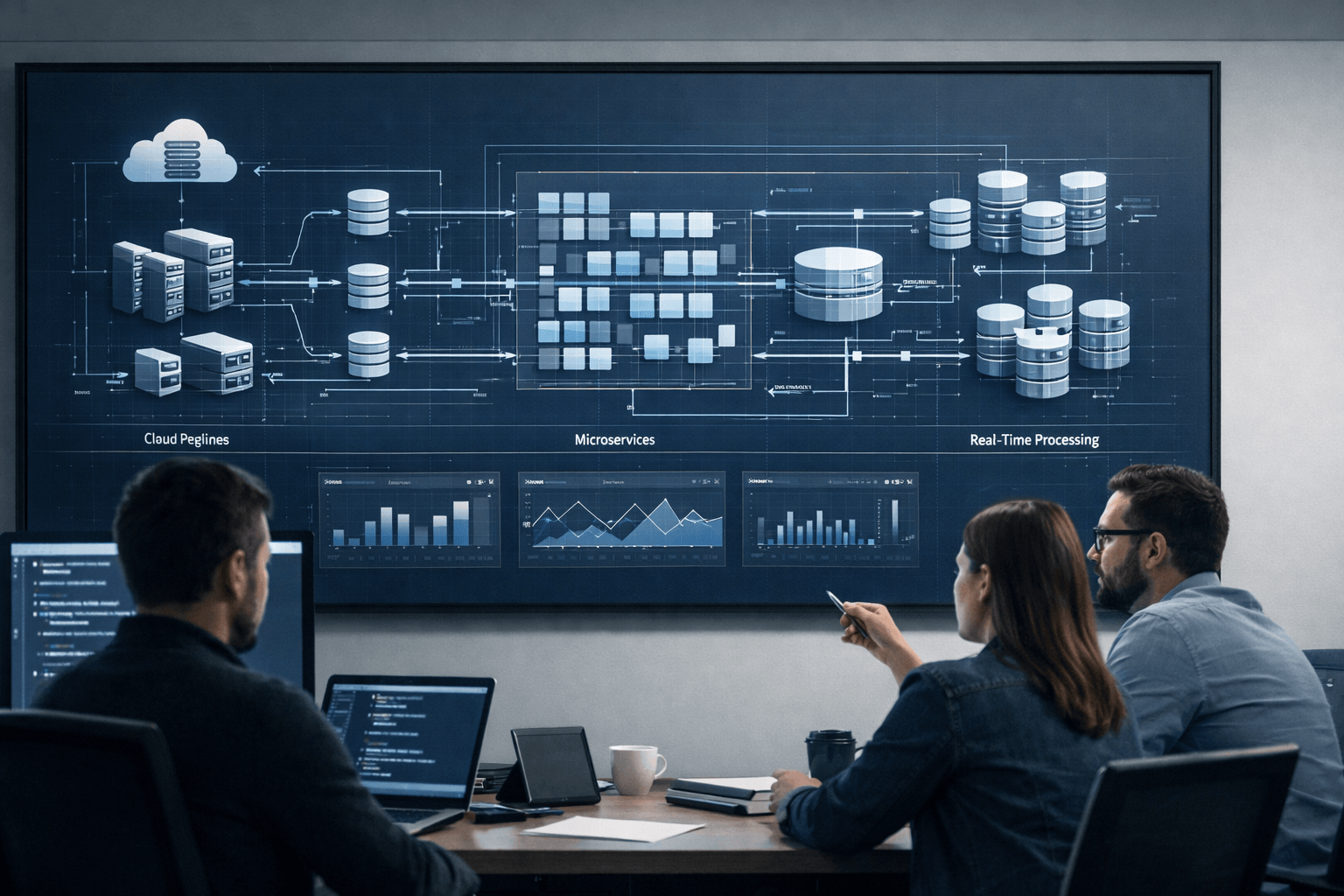

How the full pipeline works

Before diving into the IVR classification approaches, here is the complete decision flow:

Step 1: Receive the transcript from Deepgram.

Step 2: Classify Human or NonHuman using logistic regression (0.33ms).

Step 3: If NonHuman, send the transcript to the LLM for categorization into one of four types:

- hold_message: the system detected hold music or a recorded “please hold” message

- ivr: an interactive voice response menu with selectable options (“press 1 for…”)

- voice_prompt: a pre-recorded informational message that does not require input (e.g., office hours announcement, directory instructions)

- auto_end_message: an automated message that terminates the call (e.g., “this number is no longer in service,” “goodbye”)

Step 4: If IVR, classify the menu and determine which digit to press. This is where three different approaches were tested.

Step 5: Press the digit.

Step 6: If connected to an operator, return to Step 1. The system now classifies the new audio as Human and switches to conversational mode.

The challenge lives in Step 4.
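The decision flow can be sketched as a single dispatch function. The classifiers are passed in as plain callables because the real models sit behind them; the action names, and the choice to keep listening on hold_message and voice_prompt, are illustrative assumptions.

```python
from enum import Enum
from typing import Callable, Optional, Tuple


class NonHumanType(str, Enum):
    HOLD_MESSAGE = "hold_message"
    IVR = "ivr"
    VOICE_PROMPT = "voice_prompt"
    AUTO_END_MESSAGE = "auto_end_message"


def handle_transcript(
    transcript: str,
    is_human: Callable[[str], bool],                       # Step 2: logistic regression
    categorize_non_human: Callable[[str], NonHumanType],  # Step 3: LLM categorization
    choose_digit: Callable[[str], str],                   # Step 4: IVR classifier
) -> Tuple[str, Optional[str]]:
    """Decide what to do with one transcript chunk; returns (action, payload)."""
    if is_human(transcript):
        return ("converse", None)
    kind = categorize_non_human(transcript)
    if kind is NonHumanType.IVR:
        return ("press", choose_digit(transcript))  # Step 5: the caller sends DTMF
    if kind is NonHumanType.AUTO_END_MESSAGE:
        return ("hang_up", None)
    # hold_message / voice_prompt: keep listening; new audio re-enters at Step 1
    return ("listen", None)


# Usage with stub classifiers (stand-ins for the real models):
action = handle_transcript(
    "for the company directory press 9",
    is_human=lambda t: False,
    categorize_non_human=lambda t: NonHumanType.IVR,
    choose_digit=lambda t: "9",
)
print(action)  # ('press', '9')
```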

Attempt 1: LLM-based navigation (2 weeks, abandoned)

We prompted the LLM with the transcribed menu options and a priority hierarchy: company directory first, then operator, then customer service, then department, then generic option.

On simple menus with one obvious choice, it worked. When the menu offered multiple plausible options (operator AND company directory AND customer service), the LLM became inconsistent. Given the same prompt with the same menu, it would select operator in one call and customer service in the next, even though the prompt explicitly specified the priority order.

This is not a prompting failure. It stems from how LLM output is decoded: with token sampling, identical inputs can produce different outputs, and even at temperature 0 hosted APIs generally do not guarantee identical results across calls. Acceptable for conversation. Catastrophic for classification, where consistency matters more than creativity.

We abandoned this approach after two weeks.

Attempt 2: Semantic search + SVM (2 weeks build, 1+ month tuning)

The second approach separated the problem into two parallel tracks.

Track A (SVM classification): The SVM takes the full IVR transcript and classifies which category of option we need to select: Directory, Operator, Customer Service, Department, or Generic Option. Five categories, trained on 1,228 labeled samples (872 Directory, 162 Operator, 90 Customer Service, 55 Department, 49 Generic). Approximately 30% real data, 70% synthetic.

Track B (option extraction + semantic similarity): Simultaneously, the system algorithmically splits the menu into separate options. “For operator press 1, for customer service press 2” becomes two tuples: (‘1’, ‘for operator’) and (‘2’, ‘for customer service’). Each option’s text portion is matched against keyword dictionaries using semantic similarity.
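Track B’s splitting step is the part that later proved brittle. The regex below is a hypothetical illustration of that kind of parser, not our production patterns: it handles both “for X press N” and “press N for X” orderings.

```python
import re

# Hypothetical pattern covering "for <option> press <key>" and "press <key> for <option>".
OPTION_PATTERN = re.compile(
    r"for\s+(?P<desc1>[^,.;]+?)\s*,?\s*press\s+(?P<key1>[0-9*#])"
    r"|press\s+(?P<key2>[0-9*#])\s+for\s+(?P<desc2>[^,.;]+)",
    re.IGNORECASE,
)


def split_menu(transcript: str) -> list:
    """Split a transcribed IVR menu into (digit, option text) tuples."""
    options = []
    for match in OPTION_PATTERN.finditer(transcript):
        key = match.group("key1") or match.group("key2")
        desc = match.group("desc1") or match.group("desc2")
        options.append((key, "for " + desc.strip().lower()))
    return options


print(split_menu("For operator press 1, for customer service press 2"))
# [('1', 'for operator'), ('2', 'for customer service')]
print(split_menu("For operator. Press 0."))
# []  (the stray period after "operator" breaks the parse)
```

The second call shows the failure mode in miniature: the menu clearly offers the operator on 0, but one transcription artifact leaves the parser with nothing to match.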

Consensus: The SVM says we need “Operator” (Track A). The semantic similarity matching found (‘1’, Operator) and (‘2’, Customer Service) (Track B). The system picks the digit from Track B that matches the category from Track A. Press 1.

Fallback: If the SVM and semantic similarity disagree, the system falls back to a fixed priority hierarchy: Directory, then Operator, then Customer Service, then Department, then Generic Option.
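The consensus and fallback logic reduce to a dictionary lookup plus a priority scan. In this sketch we assume “disagree” means the SVM’s category has no matching option in Track B; the category keys are hypothetical names.

```python
from typing import Dict, Optional

# Fallback order from the text: Directory, Operator, Customer Service, Department, Generic.
PRIORITY = ["directory", "operator", "customer_service", "department", "generic_option"]


def pick_digit(svm_category: str, matched_options: Dict[str, str]) -> Optional[str]:
    """Combine Track A (SVM category) with Track B (category -> digit from similarity)."""
    if svm_category in matched_options:
        return matched_options[svm_category]  # both tracks agree
    for category in PRIORITY:  # disagreement: walk the fixed hierarchy
        if category in matched_options:
            return matched_options[category]
    return None  # Track B extracted nothing usable


track_b = {"operator": "1", "customer_service": "2"}
print(pick_digit("operator", track_b))    # 1
print(pick_digit("department", track_b))  # 1 (fallback: operator outranks customer service)
```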

SVM accuracy exceeded 80% on category classification. The approach worked. But a critical problem remained.

The splitting problem that forced us to switch

IVR menus arrive as raw transcribed text. Extra periods. Missing commas. Unusual formatting. Transcription artifacts from background noise.

Before the chosen category can be mapped to a digit, the system must split the text into separate menu items and extract the digit associated with each one. This parsing step was brittle. The system would correctly identify “operator” as the right category but fail to extract the digit “0” because the transcript was punctuated differently than expected.

We spent over a month tuning splitting patterns and edge cases. The accuracy ceiling was always limited by transcript formatting, not by classification quality. We could detect the right category 80%+ of the time and still fail the call because we lost the digit during parsing.

Attempt 3: Fine-tuned BERT (2 weeks, current production model)

BERT solved the splitting problem by eliminating it entirely.

Instead of splitting the menu into options and classifying each one, we fine-tuned BERT to take the entire raw menu transcript as input and return the digit to press as output. No parsing. No splitting. No regex patterns for extraction. No tuple construction.

The old pipeline for Step 4:

Transcript → Split into options → Extract tuples → SVM classifies category → Semantic search matches options → Find digit for matching category → Press digit

The new pipeline:

Transcript → BERT → Digit → Press digit

The five steps between the transcript and the key press collapsed into one.
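One way to make a classifier “return the digit” is to treat every key on the phone as a class label. The sketch below assumes that framing (twelve labels: 0-9, * and #); it is an assumption, not a description of our exact label set, and the helper names are hypothetical. With Hugging Face Transformers, the same maps could be passed as num_labels, id2label and label2id when loading a sequence-classification model.

```python
from typing import List

# Assumed label space: every key a caller can press on the keypad.
KEYS = [str(d) for d in range(10)] + ["*", "#"]
LABEL2ID = {key: i for i, key in enumerate(KEYS)}
ID2LABEL = {i: key for key, i in LABEL2ID.items()}


def make_example(raw_transcript: str, key_pressed: str) -> dict:
    """One fine-tuning example: the untouched menu text and the key that was correct."""
    return {"text": raw_transcript, "label": LABEL2ID[key_pressed]}


def decode(logits: List[float]) -> str:
    """Map one row of classifier logits back to the key to press (argmax)."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return ID2LABEL[best]


example = make_example("For operator. Press 0. For billing, press 2.", "0")
print(example["label"])                        # 0
print(decode([0.0] * 9 + [3.1, 0.2, 0.1]))     # 9
```

The menu text goes in exactly as transcribed, stray periods included, so there is no splitting step left to break.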

Training data: 1,421 labeled IVR menu samples

| Category | Samples |
|---|---|
| Directory | 872 |
| Operator | 162 |
| Customer support | 90 |
| Department | 55 |
| Generic option | 49 |

Approximately 30% is real call data. The rest is synthetic, generated from real examples.

Initial accuracy: approximately 70%. After iterative data collection and retraining with new production samples, accuracy continues to improve as we add edge cases from real calls.

The accuracy number is lower than SVM’s 80%+. We made the switch anyway. The reason is simple: SVM returned a category. BERT returns the digit. A model that is 80% accurate at naming the category but loses the digit during transcript parsing is less useful than a model that returns the digit directly at 70%+ accuracy. In production, the digit is the only thing that matters.

BERT also enables language portability. The SVM dictionary and keyword patterns were English-only. A multilingual BERT model fine-tuned on English IVR data can transfer to other languages with minimal retraining, because the encoder learns structural patterns (numbers adjacent to descriptions) rather than specific vocabulary.

The company directory problem: 11 patterns for name spelling

Some IVR systems require spelling a person’s name using the phone keypad instead of pressing a single digit. The prompt might say “please enter the first three letters of the person’s last name.”

We chose pattern matching over a classifier for this task. A classifier could theoretically learn to extract the required input format, but it would need significantly more training data, annotation effort, and development time than a rule-based approach. With 11 patterns, we covered the problem in days rather than months.

We wrote 11 patterns to cover the variations: first name only, first name and last name, first N letters of first name, first name with last name and pound key, and various other combinations. Each pattern detects keywords (“dial,” “first name,” “letters,” “last name”) and extracts the number of characters required. The system then converts each letter to its corresponding phone keypad digit (A/B/C = 2, D/E/F = 3, and so on).
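The keypad conversion, and one of the eleven patterns, can be sketched as follows. The regex is a hypothetical example shaped after the “first three letters of the person’s last name” prompt; the keypad mapping is the standard telephone layout (ITU-T E.161), with Q on 7 and Z on 9.

```python
import re
from typing import Optional

# Standard telephone keypad letters (ITU-T E.161): Q on 7, Z on 9.
KEYPAD = {}
for digit, letters in {"2": "abc", "3": "def", "4": "ghi", "5": "jkl",
                       "6": "mno", "7": "pqrs", "8": "tuv", "9": "wxyz"}.items():
    for letter in letters:
        KEYPAD[letter] = digit

WORD_NUMBERS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5}

# One hypothetical pattern: "first <N> letters of (the person's) last name".
FIRST_N_OF_LAST = re.compile(
    r"first\s+(?P<n>\d+|one|two|three|four|five)\s+letters\s+of\s+"
    r"(?:the\s+)?(?:\w+['\u2019]s\s+)?last\s+name",
    re.IGNORECASE,
)


def to_keypad(text: str) -> str:
    """Convert letters to keypad digits, skipping spaces and punctuation."""
    return "".join(KEYPAD[c] for c in text.lower() if c in KEYPAD)


def keys_for_prompt(prompt: str, last_name: str) -> Optional[str]:
    """Return the DTMF digits to send, or None if this pattern does not apply."""
    match = FIRST_N_OF_LAST.search(prompt)
    if match is None:
        return None
    raw = match.group("n").lower()
    n = int(raw) if raw.isdigit() else WORD_NUMBERS[raw]
    return to_keypad(last_name[:n])


prompt = "Please enter the first three letters of the person's last name."
print(keys_for_prompt(prompt, "Smith"))  # 764
```

A prompt that matches none of the patterns returns None, which is where the 1 to 2 failures per 100 calls come from.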

On 100 real production calls, this system fails on 1 to 2 calls. The failures occur when the IVR prompt uses phrasing that matches none of the 11 patterns. Each new failure adds a pattern after manual review.

The limitation: this approach is English-only. The keypad-to-letter mapping and prompt patterns do not transfer to other languages.

AI call summarization: from 40% accuracy to 95%

After a call ends, the system must extract structured data from the conversation transcript. Was the contact verified? Was the company name confirmed? What type of connection was established? These statuses feed directly into the client’s CRM.

This is where we made the most mistakes and learned the most about prompting architecture.

Mistake 1: One prompt for three tasks

Our initial approach used a single LLM prompt to determine all three statuses (connection type, contact verified, company verified) in one call.

Accuracy on real production calls: 40-50%.

The prompt was too long. The model’s attention was split across three classification tasks simultaneously. It would get one status right and hallucinate another.

Fix: Three separate prompts

We split the monolithic prompt into three independent calls, each focused on a single classification task.

Accuracy on real calls: 60-65%.

Roughly a 15-to-20-percentage-point improvement from a purely architectural change. No new training data. No model change. Just prompt isolation.
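Structurally, the fix looks like this: one independent LLM call per status. The prompt texts and the llm callable are hypothetical placeholders; any client that takes instructions plus a transcript and returns text would slot in.

```python
from typing import Callable, Dict

# Hypothetical single-task prompts; one status per LLM call.
STATUS_PROMPTS = {
    "connection_type": "From the transcript, classify the type of connection established. Reply with one label.",
    "contact_verified": "From the transcript, did anyone confirm the contact works at this company? Reply yes or no.",
    "company_verified": "From the transcript, was the company name confirmed? Reply yes or no.",
}


def summarize_call(transcript: str, llm: Callable[[str, str], str]) -> Dict[str, str]:
    """Run one independent, focused LLM call per status instead of one combined prompt."""
    return {
        status: llm(instructions, transcript).strip()
        for status, instructions in STATUS_PROMPTS.items()
    }


# Usage with a stub in place of a real model client:
def fake_llm(instructions: str, transcript: str) -> str:
    return " yes\n"


print(summarize_call("Operator: Yes, she works here.", fake_llm))
```

Because the calls are independent, they can also run concurrently, so the split costs more tokens but not necessarily more wall-clock time.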

Mistake 2: Wrong model for the task

Accuracy on call summarization is not a nice-to-have metric. It determines whether the system produces reliable results. If the classification is wrong, every call status in the CRM is suspect. Someone has to manually verify every call. That is exactly the manual work the system was built to eliminate.

We were running the open-weight GPT-OSS 120B model, served by Groq, for all three status prompts.

Accuracy on our 151-call test set: 79%.

We switched to OpenAI’s GPT-5.2 with identical prompts.

Accuracy on the same test set: 95%.

A 16-percentage-point improvement from swapping one API endpoint. The model’s reasoning capability, not the prompt, was the bottleneck.

On real production calls with more diverse scenarios, accuracy currently sits at 73-77% (measured across the two most recent evaluation batches). The gap between test set performance and production performance reflects the far greater variability of real conversations compared with curated test samples.

The current architecture: tags, not classifications

Asking an LLM to directly output a final status code is fragile. The model must reason about the entire call, apply business rules, and produce a clean categorical output in a single inference step. When edge cases appear, the only fix is prompt engineering, which has diminishing returns.

We redesigned the system to separate extraction from decision-making.

Step 1: LLM extracts structured tags. The model answers specific factual questions about the transcript. Not “what is the call status?” but “did we speak to a human operator? yes/no” and “did the operator confirm the contact works there? yes/no.”

The full tag schema has 25+ fields, including:

Boolean tags: spoke_to_human_operator, operator_mentioned_contact_employed, operator_confirmed_lead_no_longer_with_company, operator_mentioned_transfer_to_contact, lead_found_in_directory, poor_operator_interaction, operator_searched_global_directory, caller_asked_about_global_directory, is_contact_now_unavailable, is_non_working_number, office_is_closed, reached_direct_line_or_mobile, caller_spelled_contact_name

Categorical tags: ivr_result (15 possible states covering directory usage, operator routing, failed key presses), ivr_directory_result (5 states from “no directory” to “successfully found contact”), last_voicemail_type (7 types from lead-specific to wrong person), transfer_restriction_reason (4 types from “none” to “directed to website”)

Step 2: Backend rules convert tags to statuses. 20 to 30 deterministic rules map tag combinations to final CRM statuses. These rules are explicit, testable, and editable without touching the LLM prompt.
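A hypothetical sketch of Step 2 follows, using a few of the boolean tags named above. The status names and the four rules are placeholders invented for illustration; the real system has 20 to 30 rules and its own CRM statuses. Rules are evaluated in order, so precedence is explicit and each rule can be unit-tested.

```python
import json
from dataclasses import dataclass, fields


@dataclass
class CallTags:
    # A few of the boolean tags named above; the real schema has 25+ fields.
    spoke_to_human_operator: bool = False
    operator_mentioned_contact_employed: bool = False
    operator_confirmed_lead_no_longer_with_company: bool = False
    is_non_working_number: bool = False
    office_is_closed: bool = False


def tags_from_json(raw: str) -> CallTags:
    """Parse the LLM's JSON tag output, ignoring keys this sketch does not model."""
    known = {f.name for f in fields(CallTags)}
    return CallTags(**{k: v for k, v in json.loads(raw).items() if k in known})


# Ordered rules: the first matching predicate decides. Status names are placeholders.
RULES = [
    (lambda t: t.is_non_working_number, "BAD_NUMBER"),
    (lambda t: t.operator_confirmed_lead_no_longer_with_company, "CONTACT_LEFT"),
    (lambda t: t.spoke_to_human_operator and t.operator_mentioned_contact_employed,
     "CONTACT_VERIFIED"),
    (lambda t: t.office_is_closed, "RETRY_LATER"),
]


def status_for(tags: CallTags, default: str = "NEEDS_REVIEW") -> str:
    for predicate, status in RULES:
        if predicate(tags):
            return status
    return default


tags = tags_from_json('{"spoke_to_human_operator": true, '
                      '"operator_mentioned_contact_employed": true, "ivr_result": "operator"}')
print(status_for(tags))  # CONTACT_VERIFIED
```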

This architecture provides two advantages. When accuracy drops on a specific status, we can inspect the tags to determine whether the problem is extraction (LLM failed to detect an event) or decision logic (rules mapped tags incorrectly). And adding a new business rule does not require retraining or reprompting. It requires adding one conditional statement to the backend.

What I would do differently on day one

Two things.

Build the evaluation dataset before writing any prompts. When we started working on call summarization, we did not have a labeled dataset. We wrote prompts, tested them on a handful of calls, and iterated blindly. When something improved on one call type, it regressed on another. We could not see the full picture.

Once we built a labeled test set (375 PV status cases, 410 recall cases, approximately 172 full call flows), progress became measurable. We could run every prompt change against the full set and know instantly whether accuracy improved or regressed. That dataset should have existed on day one.
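With a labeled set in place, the comparison loop is short. A minimal sketch, assuming each pipeline version is a callable that maps a transcript to a status; the function names and toy data are hypothetical. Listing regressions, not just the headline accuracy, is what exposes the “improved on one call type, regressed on another” pattern.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Pipeline = Callable[[str], str]           # transcript -> predicted status
LabeledSet = Sequence[Tuple[str, str]]    # (transcript, expected status)


def accuracy(predict: Pipeline, dataset: LabeledSet) -> float:
    correct = sum(predict(transcript) == expected for transcript, expected in dataset)
    return correct / len(dataset)


def compare(old: Pipeline, new: Pipeline, dataset: LabeledSet) -> Dict[str, object]:
    """Score two versions on the same labeled set and list calls that regressed."""
    regressions: List[str] = [
        transcript for transcript, expected in dataset
        if old(transcript) == expected and new(transcript) != expected
    ]
    return {"old": accuracy(old, dataset), "new": accuracy(new, dataset),
            "regressions": regressions}


# Toy usage: transcripts are stand-in IDs, statuses are placeholders.
dataset = [("call-a", "VERIFIED"), ("call-b", "LEFT"), ("call-c", "VERIFIED"), ("call-d", "LEFT")]
NEW_PREDICTIONS = {"call-a": "VERIFIED", "call-b": "LEFT", "call-c": "LEFT", "call-d": "LEFT"}


def old_version(transcript: str) -> str:
    return "VERIFIED"


def new_version(transcript: str) -> str:
    return NEW_PREDICTIONS[transcript]


print(compare(old_version, new_version, dataset))
# {'old': 0.5, 'new': 0.75, 'regressions': ['call-c']}
```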

Test on real calls from the start. Our early development used clean, synthetic recordings with long pauses between phrases. Everything worked perfectly. When real calls arrived, everything broke: audio quality issues, transcription errors, voice activity detection (VAD) misfires, latency spikes under load. The gap between synthetic tests and production reality was enormous. Every model, every prompt, every classification threshold in this article was tuned against real call audio. That tuning process should start as early as possible.

Frequently asked questions

Answering machine detection (sometimes called AI phone screening) classifies whether an outbound call was answered by a live human, a voicemail system, or an automated menu. Each scenario requires a completely different response: conversation with a human, hangup on a voicemail, or DTMF button presses for an IVR menu. Misclassification wastes LLM compute on recordings or attempts to converse with automated systems. Commercial solutions exist but introduce multi-second detection delays that are unacceptable for voice agents needing sub-second response times.

Published research (Huggins et al., 2021, MIT Media Lab) demonstrates that fine-tuned BERT achieves 94% intent classification accuracy with as few as 25 training examples per category, rising to 98% with full datasets. Our experience aligns: 1,421 IVR menu samples across 5 categories produced a functional production model. BERT’s advantage is its pre-trained language understanding, which requires only task-specific fine-tuning rather than learning language representations from scratch.

The most reliable setup we found is neither pure LLM classification nor a standalone classifier. Our best results come from using the LLM as a feature extractor (answering specific yes/no and categorical questions about the transcript) and then applying deterministic backend rules to produce final statuses. Direct LLM classification was 40-50% accurate. The tag extraction plus rules approach reaches 73-77% on real production calls, with test set accuracy at 95%. The separation makes debugging straightforward: inspect tags to determine whether the LLM missed an event or the rules mapped it incorrectly.

For IVR company directories that require spelling a name on the keypad, we use 11 regex patterns that detect prompt keywords (“dial,” “first name,” “letters,” “last name”) and extract the required input format. The system converts letters to phone keypad digits (A/B/C = 2, D/E/F = 3). On 100 real production calls, this fails on 1-2 calls when the IVR prompt uses phrasing outside our pattern set. Each failure adds a new pattern. This approach works only for English.

For sub-millisecond text classification in a live voice pipeline, logistic regression and SVM are the practical choices. Our logistic regression classifier runs in 0.33ms. Our SVM runs in comparable time. Small BERT models achieve single-digit millisecond inference but are 10-30x slower. Full LLM inference calls require 500ms or more. In a pipeline where total latency must stay under 2 seconds, every millisecond spent on classification is a millisecond stolen from response generation.

Commercial answering machine detection software from providers like Twilio and Vonage uses synchronous analysis that blocks the audio pipeline for up to 4 seconds. Twilio claims 94% accuracy for enhanced AMD in US/Canada. Our custom logistic regression classifier achieves 97% accuracy with 0.33ms inference, so the classification step itself adds no meaningful delay to the call flow. The trade-off: commercial solutions require zero development effort. A custom classifier requires labeled training data (we used 1,462 samples) and ongoing maintenance, but eliminates the dead-air problem that causes callers to hang up before the agent speaks.

Expect a significant gap between test set accuracy and production accuracy. Our answering machine detection model hits 97% on test data. Our call summarization model hits 95% on test data but drops to 73-77% on real production calls. The gap comes from audio quality variation, unexpected caller behavior, edge cases not represented in training data, and transcription errors from noisy lines. Plan for this gap. Build your evaluation dataset from real calls, not synthetic recordings.

Build AI systems that classify, not just converse

The classification layer documented in this article was built by AI and Python engineers who understand that production voice AI is not about generating fluent responses. It is about making correct decisions in milliseconds under noisy, unpredictable conditions.

The backend architecture and infrastructure behind this system is documented separately. The project management perspective, including timeline, budget, and the accuracy journey from 50% to 80%, is covered in our AI cold calling case study.

If your team is building voice AI that needs to classify, navigate, and extract, not just talk, reach out to our engineering team.