Debt collection automation: why we replaced LiveKit and cut false endpointing from 15% to 2%

AI & Automation

May 11, 2026

8 min read

LiveKit is the default framework for building AI voice agents. We started with it for debt collection automation. Two weeks later, we replaced it with a custom three-thread pipeline. False endpointing dropped from 15% to 2%. Here is the full architecture.

Key takeaways

- LiveKit could not preserve LangGraph session state across dialogue phase transitions. The integration library returned session IDs but not the session itself, breaking graph resumption after every interruption.

- Our custom pipeline uses three async threads (listener, generator, controller) to eliminate data loss during interruptions. Before this architecture, users spoke over the bot in roughly 20% of exchanges, and that speech was lost every time.

- TurnSense (a 135M-parameter end-of-utterance model) reduced false endpointing from 10-15% to roughly 2%, adding only 70-90ms of latency. Without it, the bot cut users off mid-sentence as often as every sixth or seventh exchange.

- Phase routing via LLM tool calls eliminated double latency. Our initial approach used a separate LLM call for routing decisions, doubling the time to first response. Switching to simultaneous streaming + tool call routing cut one full LLM round-trip from every turn.

- Debt collection automation requires policy-driven turn-taking. During FDCPA-mandated disclosures, the system must disable interruption handling entirely. Standard voice frameworks do not support this.

The project: an AI agent that negotiates payment

The client needed a voice bot that calls customers about overdue payments. Not a reminder bot that reads a script and hangs up. A system that verifies identity, states the balance, and if the person pushes back, negotiates a payment plan on the spot according to specific business rules.

The call flow has five phases:

- Greeting (scripted, pre-recorded)

- Identity verification (driver’s license or other document)

- Payment notification (inform the customer of the amount owed)

- Negotiation (if the customer refuses: offer partial payment plans according to specific business rules)

- Resolution (process payment or schedule a callback with agreed terms)

The bot has to prioritize getting full payment. If the customer pushes back, it follows a rule-based escalation: installments first, then adjusted timelines, then scheduling a callback. Every phase has its own prompt, its own constraints, and its own compliance requirements.

The hard constraint: response latency under 1.5 seconds. At 2 seconds of silence, people assume the line is dead. At 3 seconds, they hang up. We learned that the hard way.

Why LiveKit failed in two weeks

LiveKit works well for simple voice agents. One system prompt, one conversational loop, no complex state. For that, it is genuinely good.

Our project was not that. We needed multi-phase dialogue with state preservation across interruptions. That is where things fell apart.

The LangGraph session problem

We used LangGraph to manage dialogue phases. LangGraph models conversations as state machines: each phase is a node, transitions between phases are conditional edges, and the entire conversation state (history, parsed entities, current phase) persists across the graph.

LangGraph supports a mechanism called Human-In-The-Loop, where the graph freezes its state, waits for external input, then resumes. In a voice bot, this is how turn-taking works: the graph freezes when the bot starts speaking, preserves its state, and resumes when the user responds.

The problem was in the LiveKit-LangGraph integration library. When the graph froze, the library saved the session ID but not the session state itself. On resumption, the system could not restore where it was. Every interruption corrupted the dialogue flow.

We modified the library to return the full session parameters on interruption. That fixed the immediate crash. But it exposed a deeper problem.
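The difference between saving a session ID and saving the session can be sketched in plain Python. This is not LangGraph's or LiveKit's API: `DialogueState`, `SessionStore`, `freeze`, and `resume` are hypothetical names, and the state fields are assumptions based on the description above.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class DialogueState:
    """Hypothetical conversation state; field names are illustrative."""
    phase: str = "greeting"
    history: list = field(default_factory=list)
    entities: dict = field(default_factory=dict)


class SessionStore:
    """Keeps a full snapshot per session ID, not just the ID."""

    def __init__(self):
        self._snapshots = {}

    def freeze(self, session_id: str, state: DialogueState) -> str:
        # Deep-copy so later changes to the live state cannot alter the snapshot.
        self._snapshots[session_id] = copy.deepcopy(state)
        return session_id

    def resume(self, session_id: str) -> DialogueState:
        # An ID alone gives the caller nothing to resume from;
        # the snapshot restores phase, history, and parsed entities.
        return copy.deepcopy(self._snapshots[session_id])
```

With a store like this, an interruption that arrives between `freeze` and `resume` cannot leave the graph without a known phase.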

Interruptions destroyed data

This was the real problem. LiveKit processes audio sequentially: when the bot is generating a response, it stops listening.

Think about what that means on a real call. The bot starts explaining the balance. The customer says “Wait, no, that’s not right.” The bot does not hear it. It finishes its sentence, then generates a follow-up based on silence. The customer has to repeat themselves. The whole conversation feels off.

We measured this: roughly 20% of the time, users spoke while the bot was responding. Every single one of those interactions lost the user’s speech. The transcripts got dirtier with each lost phrase, the LLM got confused, and the model started repeating itself or asking questions that had already been answered.

We tried to fix it inside LiveKit. The framework does not expose the audio capture loop during generation. To change that behavior, we would have had to rewrite the core pipeline. At that point, we were not using LiveKit anymore. We were maintaining a fork of it.

Two weeks in, we stopped trying to fix it and started building our own.

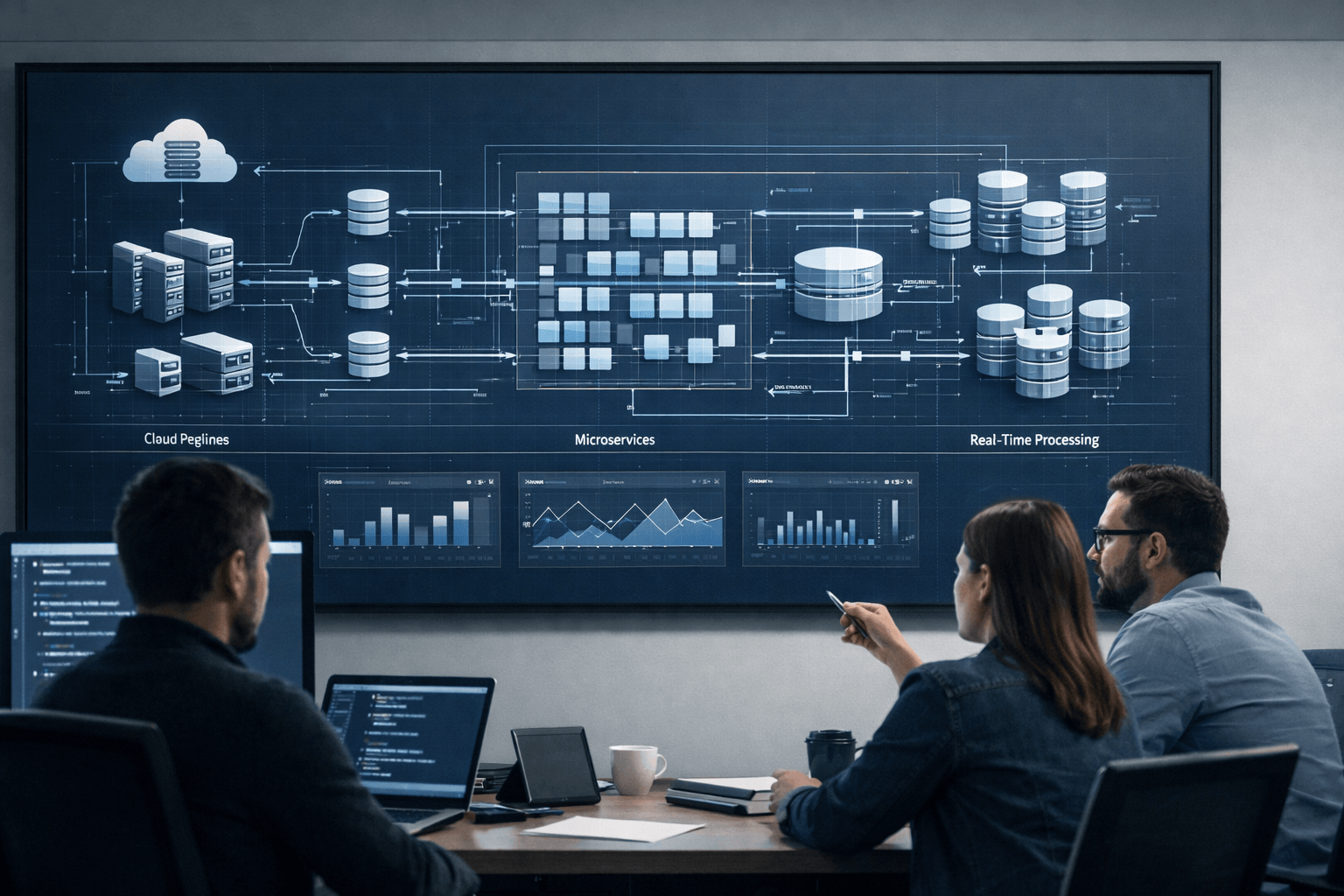

The three-thread architecture

The core problem was simple once we saw it: LiveKit alternates between listening and speaking. Real conversations do not work that way. People talk over each other constantly. You have to listen and generate at the same time.

We split everything into three concurrent async threads using Python’s asyncio:

Thread 1: Listener

This thread does one thing: it reads from the audio stream and never stops. The bot could be mid-sentence, mid-generation, completely idle. Does not matter. The listener is always recording what the user says.

It runs TenVAD on the incoming audio to find speech segments. When it detects voice, audio gets buffered. When 300ms of silence passes, the buffer goes to Whisper V3 on Fireworks AI for transcription.
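A minimal sketch of the silence-based segmentation described above. It does not call TenVAD; it assumes some VAD has already produced one speech/non-speech flag per frame. The 20ms frame size and the `segment_frames` name are assumptions; the 300ms threshold is from the text.

```python
FRAME_MS = 20      # assumed frame duration
SILENCE_MS = 300   # silence threshold from the text


def segment_frames(flags, frame_ms=FRAME_MS, silence_ms=SILENCE_MS):
    """Group per-frame speech flags into (start, end) frame-index segments.

    A segment closes once `silence_ms` of consecutive non-speech follows
    speech. `end` is exclusive and points just past the last speech frame.
    """
    needed = silence_ms // frame_ms
    segments, start, last_speech, silent = [], None, None, 0
    for i, is_speech in enumerate(flags):
        if is_speech:
            if start is None:
                start = i          # first speech frame opens a segment
            last_speech = i
            silent = 0             # any speech resets the silence counter
        elif start is not None:
            silent += 1
            if silent >= needed:   # enough silence: finalize the buffer
                segments.append((start, last_speech + 1))
                start, silent = None, 0
    if start is not None:          # stream ended mid-utterance
        segments.append((start, last_speech + 1))
    return segments
```

A 100ms gap inside an utterance stays within one segment, while a 300ms gap closes it and would trigger transcription.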

Thread 2: Generator

Receives finalized transcripts from the listener and runs the full response pipeline:

- Pass transcript to the LangGraph state machine

- LangGraph determines the current phase and generates a response via GPT-4

- Stream the response text directly to ElevenLabs TTS

- Return synthesized audio for playback

The generator only activates when the listener produces a complete transcript. It never touches the audio stream directly.

Thread 3: Controller

Manages the other two threads and handles all interruption logic. When the listener detects new speech while the generator is active, the controller:

- Signals the generator to abort the current TTS stream

- Waits for the listener to finalize the new transcript (which includes both the pre-interruption buffer and the new speech)

- Sends the combined transcript to the generator for a new response

The controller also manages TenVAD state transitions and coordinates with TurnSense for end-of-utterance detection.
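The interaction between the three threads can be sketched with asyncio tasks and a queue. This is a simplified illustration, not the production pipeline: `listener`, `generate`, `controller`, and `run_demo` are hypothetical names, the listener replays scripted transcripts instead of reading audio, and `generate` stands in for the LLM and TTS stream.

```python
import asyncio


async def listener(utterances, transcripts):
    """Always-on reader: pushes each finalized transcript, then None at hang-up."""
    for delay, text in utterances:
        await asyncio.sleep(delay)
        await transcripts.put(text)
    await transcripts.put(None)


async def generate(text):
    """Stand-in for the LLM + TTS stream: one word per 20 ms."""
    words = f"reply to: {text}".split()
    for _ in words:
        await asyncio.sleep(0.02)
    return " ".join(words)


async def controller(utterances):
    """Cancel in-flight generation on new speech, then regenerate from combined text."""
    transcripts = asyncio.Queue()
    listen_task = asyncio.create_task(listener(utterances, transcripts))
    replies, aborted, pending, gen_task = [], 0, [], None

    while (text := await transcripts.get()) is not None:
        if gen_task is not None:
            if gen_task.done():
                replies.append(gen_task.result())
                pending = []           # previous turn was fully answered
            else:
                gen_task.cancel()      # barge-in: abort the current reply
                aborted += 1
        pending.append(text)           # keep pre-interruption text plus new speech
        gen_task = asyncio.create_task(generate(" ".join(pending)))

    if gen_task is not None:
        replies.append(await gen_task)
    await listen_task
    return replies, aborted


def run_demo():
    calls = [(0.0, "what is my balance"), (0.03, "wait that is wrong"), (0.6, "okay")]
    return asyncio.run(controller(calls))
```

In the demo, the second utterance arrives while the first reply is still being produced, so the controller cancels it and regenerates from both utterances together.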

What this actually fixed

Before: users spoke during bot speech in roughly 20% of exchanges, and every one of those utterances was lost. The transcripts were a mess and the model kept getting confused.

After: data loss dropped to near zero. The listener never stops, so nothing gets missed. If the user interrupts, the controller catches it, kills the current generation, and feeds the full context (old buffer plus the new speech) back into the generator.

The trade-off is complexity. Three threads sharing state through asyncio queues. Race conditions we had to find and fix one by one. It took about two weeks to build and stabilize. Worth it.

The 0.5-second pause problem

Even with the three-thread architecture running cleanly, one problem kept coming back.

People pause when they talk. They think for half a second, then keep going. Completely normal. But the VAD sees the silence pass its 300ms threshold and decides the person is done talking. It triggers transcription, the generator spins up a response, and then the person keeps talking. The bot just interrupted them.

This happened on roughly 10-15% of interactions. At the high end, that is about every sixth or seventh exchange where the bot jumped in while the user was still mid-thought. The transcripts looked terrible because each interruption got marked as `<interrupted>` in the context, and after a few of those, the LLM started getting confused about what the user actually said.

Why raising the VAD threshold does not work

The obvious fix: bump the silence threshold from 300ms to 800ms. Wait longer before deciding the user stopped.

The problem is that delay hits every single turn. Every response comes 500ms later. When your total budget is 800-1500ms, you cannot add half a second to 100% of turns to fix a problem on 15% of turns. The math does not work.

TurnSense: a semantic check before the bot speaks

We added TurnSense, a 135-million-parameter transformer built on SmolLM2. It looks at the text coming out of STT (not the audio itself) and predicts whether that text looks like a finished thought or a mid-sentence pause.

We tested a few end-of-utterance models on a small manually labeled dataset. TurnSense had the best results. Published benchmarks show 97.5% accuracy on the TURNS-2K dataset (2,000 conversational samples with backchannels and disfluencies).

It added 70-90ms per check. In exchange, false endpointing dropped from 10-15% to roughly 2%. The remaining failures happened in genuinely ambiguous spots where even a person listening would not be sure if the speaker was done.

How it works now: TenVAD detects 300ms of silence. Before the controller triggers generation, it passes the current transcript to TurnSense. If TurnSense says “not finished,” the system keeps listening. If it says “done,” generation starts.
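The two-stage check can be sketched as a small gate function. The real TurnSense model is not called here: `toy_is_complete` is a crude word-list stand-in, labeled as such, and `should_respond` is a hypothetical name. Only the 300ms threshold and the order of the checks come from the text.

```python
from typing import Callable


def toy_is_complete(transcript: str) -> bool:
    """Crude stand-in for an end-of-utterance model. NOT TurnSense."""
    trailing = {"and", "but", "so", "because", "um", "uh", "the", "to"}
    words = transcript.lower().rstrip(".?! ").split()
    # Treat a transcript ending on a connective or filler as unfinished.
    return bool(words) and words[-1] not in trailing


def should_respond(
    silence_ms: int,
    transcript: str,
    is_complete: Callable[[str], bool],
    vad_threshold_ms: int = 300,
) -> bool:
    """Start generation only if the VAD and the semantic check both agree."""
    if silence_ms < vad_threshold_ms:
        return False                    # user is still speaking: no check runs
    return is_complete(transcript)      # silence detected: ask the model
```

Because the semantic check sits behind the silence test, it costs nothing while the user is mid-speech.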

This is cheaper than raising the VAD threshold. TurnSense only runs when silence is detected, and each check costs 70-90ms. While the user is speaking continuously, TurnSense is idle. A threshold raised to 800ms would add 500ms to every turn regardless.
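The trade-off is easier to see as a share of the latency budget. The sketch below only uses figures stated in this article; the `share_of_budget` helper is a hypothetical name.

```python
BUDGET_MS = (800, 1500)            # latency target from the text
RAISED_THRESHOLD_MS = 800 - 300    # extra wait if the VAD threshold goes to 800 ms
SEMANTIC_CHECK_MS = (70, 90)       # cost of one TurnSense check


def share_of_budget(cost_ms: float, budget_ms: float) -> float:
    """Return cost as a percentage of the budget, rounded to one decimal."""
    return round(100 * cost_ms / budget_ms, 1)


# Raised threshold: paid on every turn.
threshold_best = share_of_budget(RAISED_THRESHOLD_MS, BUDGET_MS[1])
threshold_worst = share_of_budget(RAISED_THRESHOLD_MS, BUDGET_MS[0])

# Semantic check: paid once per detected silence.
check_best = share_of_budget(SEMANTIC_CHECK_MS[0], BUDGET_MS[1])
```

The raised threshold consumes between a third and more than half of the budget, while a single semantic check stays in the single digits against the 1500ms ceiling.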

LangGraph for phase-based dialogue

Building a LangGraph voice agent is a different problem than building a text chatbot. Most voice bots use a single system prompt. The LLM handles the whole conversation in one context window. That falls apart when your conversation has distinct phases, each with different rules, different compliance requirements, and strict transition logic that the model cannot be trusted to infer on its own.

Five phases, one state machine

Each dialogue phase is a LangGraph node with its own prompt and constraints:

Greeting: Scripted. Pre-recorded audio. No LLM involvement. This is the only phase that uses a pre-recorded phrase. Average response time: near zero.

Identity verification: The bot asks for a driver’s license number or other identifying document. The LLM must extract and validate specific data formats. Strict rules prevent the bot from proceeding until verification succeeds.

Payment notification: The bot informs the customer of the outstanding amount. In the US market, this phase must include the FDCPA mini-Miranda disclosure. During this disclosure, the system disables interruption handling entirely. The bot must complete the legally mandated statement without the user’s speech cutting it short.

Negotiation: The most complex phase. If the customer refuses to pay, the bot follows a priority-based negotiation strategy: full payment first, then partial payment plans, then scheduled callbacks. The LLM must reason about payment terms while staying within the client’s business rules.

Resolution: Either processes the payment or schedules a callback. The bot confirms the agreed terms and ends the call.

How routing works (and the mistake that doubled our latency)

First approach: after each user turn, send the transcript to one LLM call to decide whether to stay in the current phase or move to the next. Then send a second LLM call to actually generate the response.

Two sequential LLM calls per turn. At 400-800ms per call, that is 800-1600ms of LLM time alone, so the bot often sat in silence for over a second before it even started speaking. Users noticed immediately.

We fixed this with tool call routing. The LLM now generates a streaming response AND makes the phase transition decision via tool calls in a single pass. One call instead of two: the model starts streaming speech immediately, and the routing decision arrives in the same response instead of in a separate round-trip before it. Latency dropped back within the 800-1500ms target.
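A minimal sketch of consuming one streamed response that carries both speech text and a routing decision. The event shape here is hypothetical and much simpler than what a real provider SDK emits; `fake_llm_stream`, `run_turn`, and the `advance_phase` tool name are assumptions for illustration.

```python
import asyncio


async def fake_llm_stream():
    """Hypothetical event stream; real provider events are shaped differently."""
    events = [
        {"type": "text", "delta": "Thank you, "},
        {"type": "text", "delta": "your identity is confirmed."},
        {"type": "tool_call", "name": "advance_phase",
         "args": {"to": "payment_notification"}},
    ]
    for event in events:
        await asyncio.sleep(0)   # yield control, as a network stream would
        yield event


async def run_turn(stream, speak, current_phase):
    """Forward text to TTS as it arrives; pick up any routing tool call."""
    next_phase = current_phase
    async for event in stream:
        if event["type"] == "text":
            speak(event["delta"])            # speech starts before routing is known
        elif event["type"] == "tool_call" and event["name"] == "advance_phase":
            next_phase = event["args"]["to"]
    return next_phase


def demo():
    spoken = []
    phase = asyncio.run(
        run_turn(fake_llm_stream(), spoken.append, "identity_verification")
    )
    return "".join(spoken), phase
```

If the stream contains no tool call, `run_turn` returns the current phase unchanged, which matches the "stay in the current phase" case.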

Handling off-script users

When a user says something completely unrelated to the current phase (asks about the weather, makes a joke, tries to change the subject), the LangGraph router keeps them in the current phase. The LLM responds with something like “I understand, but I need to discuss this specific matter with you” and redirects the conversation.

The router does not allow backward transitions. Once the identity verification phase is complete, the user cannot be routed back to it. The conversation only moves forward through the phases.
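The forward-only rule can be sketched as a transition table. The table itself is an assumption: it allows a jump from payment notification straight to resolution (a customer who agrees to pay at once), which the article implies but does not spell out. `Phase`, `ALLOWED`, and `route` are hypothetical names.

```python
from enum import Enum


class Phase(Enum):
    GREETING = "greeting"
    IDENTITY_VERIFICATION = "identity_verification"
    PAYMENT_NOTIFICATION = "payment_notification"
    NEGOTIATION = "negotiation"
    RESOLUTION = "resolution"


# Assumed transition table: forward moves only, no way back.
ALLOWED = {
    Phase.GREETING: {Phase.IDENTITY_VERIFICATION},
    Phase.IDENTITY_VERIFICATION: {Phase.PAYMENT_NOTIFICATION},
    Phase.PAYMENT_NOTIFICATION: {Phase.NEGOTIATION, Phase.RESOLUTION},
    Phase.NEGOTIATION: {Phase.RESOLUTION},
    Phase.RESOLUTION: set(),
}


def route(current: Phase, requested: Phase) -> Phase:
    """Apply a requested transition if allowed; otherwise stay in place."""
    if requested in ALLOWED[current]:
        return requested
    return current   # off-script or backward request: keep the current phase
```

Validating the model's requested transition in code means a confused LLM can at worst stall in a phase, never rewind the call.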

What the pipeline costs to run

Nobody publishes real cost numbers for voice AI in debt collection. Here is what our stack actually costs based on current provider pricing:

| Component | Provider | Cost |
|---|---|---|
| STT | Whisper V3 on Fireworks AI | ~$0.0015/min audio |
| LLM | GPT-4 (streaming) | ~$0.03/1K input, $0.06/1K output tokens |
| TTS | ElevenLabs | ~$0.10-0.30/min (varies by plan and model) |
| VAD + TurnSense | Local inference | Infrastructure cost only |
| Infrastructure | Python asyncio server | Variable |

The LLM is the largest variable cost. Each conversational turn involves one streaming LLM call (response generation + tool call routing combined). Post-call, additional LLM calls may handle summarization and status extraction. At an average call duration of 3-5 minutes with 8-12 conversational turns, LLM costs per call range from $0.10 to $0.40 depending on transcript length and negotiation complexity.
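A back-of-the-envelope estimate shows how the per-call range can arise from the listed token rates. The token counts per turn below are assumptions chosen for illustration, not measurements from the project; `llm_cost_per_call` is a hypothetical helper.

```python
INPUT_PER_1K = 0.03    # USD per 1K input tokens, from the table
OUTPUT_PER_1K = 0.06   # USD per 1K output tokens, from the table


def llm_cost_per_call(turns: int, avg_input_tokens: float, avg_output_tokens: float) -> float:
    """Estimate LLM cost for one call, given average tokens per turn."""
    per_turn = (
        avg_input_tokens / 1000 * INPUT_PER_1K
        + avg_output_tokens / 1000 * OUTPUT_PER_1K
    )
    return round(turns * per_turn, 4)


# Assumed token counts: a short call with a small context,
# and a long negotiation with a larger accumulated transcript.
short_call = llm_cost_per_call(turns=8, avg_input_tokens=350, avg_output_tokens=40)
long_call = llm_cost_per_call(turns=12, avg_input_tokens=1000, avg_output_tokens=60)
```

Input tokens dominate because the prompt and transcript are resent on every turn, which is why longer negotiations cost disproportionately more.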

The critical cost advantage over human agents: one server handles multiple concurrent calls. A human agent handles one. At 10,000+ calls per month, the per-call cost of automated debt collection drops below the human equivalent, even accounting for the AI stack.

Compliance is architecture, not configuration

Deploying an AI voice agent for US debt collection means operating under three overlapping regulatory frameworks: FDCPA, TCPA, and Regulation F.

The FCC ruled in February 2024 that AI-generated voice calls fall under the TCPA’s restrictions on artificial or prerecorded voice messages. This means every call requires prior express written consent. The system cannot cold-call.

Regulation F enforces the 7-in-7 rule: no more than seven calls within seven consecutive days about a specific debt. It also restricts calling hours to 8 AM through 9 PM in the consumer’s local timezone.
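A pre-dial check for the two limits as they are described above can be sketched like this. It is a simplified reading for illustration only: `may_call` is a hypothetical name, the caller is assumed to supply timestamps already localized to the consumer's timezone, and a real system would need the full rule text.

```python
from datetime import datetime, time, timedelta

MAX_CALLS = 7                  # call limit per debt, from the text
WINDOW = timedelta(days=7)     # rolling seven-day window
EARLIEST, LATEST = time(8, 0), time(21, 0)   # 8 AM through 9 PM local


def may_call(now_local: datetime, previous_calls: list) -> bool:
    """Return True if one more call about this debt is allowed right now.

    `now_local` and every entry in `previous_calls` must be timezone-aware,
    with `now_local` expressed in the consumer's local timezone.
    """
    in_hours = EARLIEST <= now_local.time() <= LATEST
    # Count only calls that fall inside the rolling window.
    recent = [c for c in previous_calls if timedelta(0) <= now_local - c < WINDOW]
    return in_hours and len(recent) < MAX_CALLS
```

Running this check before the dialer places a call keeps the limit out of the conversation logic entirely.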

The FDCPA mandates the mini-Miranda disclosure: the bot must state that “this is an attempt to collect a debt” and that “any information obtained will be used for that purpose.” This disclosure must be delivered in full. If the user interrupts mid-disclosure and the bot stops speaking to acknowledge the interruption, the disclosure is incomplete. That is a federal violation.

Standard voice frameworks cannot handle this. You need the ability to selectively disable interruption handling during legally mandated speech, then re-enable it for normal conversation. That means low-level control over the VAD and TTS abort mechanisms, which managed platforms do not expose.

Our implementation: during compliance-critical audio segments, the controller thread raises the speech detection threshold to maximum, effectively requiring near-shouting levels of audio to trigger an interruption. The mini-Miranda plays in full. Once the disclosure is complete, normal thresholds resume. This level of pipeline control is only possible because we own every layer of the audio processing stack. Managed platforms abstract these controls away.
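A minimal sketch of threshold switching around protected segments. The numeric thresholds, the speech-probability scale, and the names `Segment` and `InterruptPolicy` are assumptions for illustration; only the idea of raising the threshold during mandated speech and restoring it afterwards comes from the text.

```python
from dataclasses import dataclass

NORMAL_THRESHOLD = 0.5    # assumed VAD speech-probability threshold
LOCKED_THRESHOLD = 0.99   # assumed "maximum" used during mandated speech


@dataclass
class Segment:
    text: str
    interruptible: bool = True


class InterruptPolicy:
    """Tracks which threshold applies to the segment currently playing."""

    def __init__(self):
        self.threshold = NORMAL_THRESHOLD

    def begin(self, segment: Segment) -> None:
        # Protected segments raise the bar for barge-in.
        self.threshold = NORMAL_THRESHOLD if segment.interruptible else LOCKED_THRESHOLD

    def end(self) -> None:
        self.threshold = NORMAL_THRESHOLD   # normal turn-taking resumes

    def should_abort(self, speech_prob: float) -> bool:
        """True if detected user speech is strong enough to stop playback."""
        return speech_prob >= self.threshold
```

Marking the disclosure as a non-interruptible segment keeps the compliance rule in data, so the controller does not need phase-specific branches.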

What I would do differently on day one

Skip LiveKit entirely.

We spent two weeks with it, another week patching the LangGraph integration, and still ended up building everything from scratch. Three weeks of work that produced nothing we kept. The custom three-thread pipeline took two weeks to build. If we had started there, we would have shipped three weeks earlier.

LiveKit is the right tool for simple voice agents. A single prompt, a single loop, no state management. For teams evaluating a LiveKit voice agent setup, it genuinely works well for that. But the moment you need multi-phase dialogue, LangGraph integration, or policy-driven turn-taking, you need a LiveKit alternative. For us, that alternative was writing our own orchestration layer in Python.

Frequently asked questions

What is LiveKit and why do developers use it?

LiveKit is an open-source WebRTC media server and real-time agent framework. It handles audio routing, connection management, and basic turn detection for voice AI applications. Developers use it because it dramatically reduces the time to build a working voice agent prototype. OpenAI, Skydio, and Assort Health use LiveKit infrastructure in their products. The limitation appears when production requirements demand deep control over interruption handling, state management, or regulatory compliance that the framework does not expose.

How does AI debt collection automation work?

An AI voice agent calls the customer, verifies their identity, delivers legally required disclosures, informs them of the outstanding balance, and either processes payment or negotiates a payment plan. The conversation is managed by a state machine (we use LangGraph) that enforces phase transitions and business rules. The system must comply with FDCPA, TCPA, and Regulation F requirements, including the mini-Miranda disclosure, calling hour restrictions, and the 7-in-7 contact limit.

What response latency does a voice agent need?

Our target was 800-1500ms from end of user speech to start of bot speech. Industry benchmarks confirm that latency above 2 seconds causes callers to assume the line is dead. Pre-recorded phrases (we use one for the greeting) achieve near-zero latency. LLM-generated responses through the full pipeline (STT + LLM + TTS) average 800-1500ms depending on response complexity and model load.

What is TurnSense and how does it reduce false endpointing?

TurnSense is a 135-million-parameter transformer model (built on SmolLM2 architecture) that predicts whether a transcribed utterance is a complete thought or a mid-sentence pause. Published benchmarks show 97.5% accuracy. In our pipeline, it reduced false endpointing from 10-15% to approximately 2%, adding only 70-90ms of processing time. It runs only when the VAD detects silence, so it adds nothing while the user is speaking continuously.

What regulations apply to AI debt collection calls in the US?

Three overlapping frameworks: the Fair Debt Collection Practices Act (FDCPA) prohibits harassment and mandates the mini-Miranda disclosure. The Telephone Consumer Protection Act (TCPA) requires prior express written consent for AI-generated calls (per FCC’s February 2024 ruling that AI voices qualify as artificial or prerecorded). Regulation F enforces the 7-in-7 calling limit and restricts contact hours to 8 AM through 9 PM in the consumer’s local timezone.

Build voice agents that handle real conversations

This pipeline was not built for demos. It was built for regulated, multi-phase conversations where a missed disclosure is a federal violation and a missed utterance is a failed negotiation.

If your team is building voice AI for debt collection, payment reminders, or any application where compliance and conversation quality cannot be traded for development speed, talk to our engineering team.

The backend infrastructure and call classification architecture behind our other voice agent projects are documented separately.

We build AI systems with Python engineers who understand real-time audio, regulatory constraints, and the failure modes that only surface when you dial real phone numbers.