In this article

Step-by-step guide to AI-powered solution development

Business & Strategy

Apr 5, 2026

10 min read

A practical step-by-step guide to AI-powered solution development in 2026: lifecycle stages, team setup, Python best practices, and how to scale without breaking your system.

The pressure on startup founders and CTOs to ship production-ready AI systems has never been more intense. One wrong turn in architecture, one gap in your team’s expertise, and you’re looking at months of rework and a product that can’t scale past its first thousand users. The real bottleneck in 2026 isn’t access to AI technology. It’s the engineering talent capable of turning that technology into something that actually works under load, in production, with real users. This guide walks you through every critical stage of AI-powered solution development, from problem validation to continuous improvement, with the practical clarity that ambitious teams need to move fast without breaking everything.

Key Takeaways

| Point | Details |

|---|---|

| Structured lifecycle | Following a clear development framework reduces risk and accelerates delivery. |

| Scalable Python practices | Clean architecture and solid coding habits are essential for long-term maintainability. |

| Edge case vigilance | Testing for data and model edge cases ensures reliability after launch. |

| Fast time-to-market | AI-first teams with the right skillset can build production-ready solutions in weeks, not months. |

Understanding the AI solution development lifecycle

Every successful AI product starts with structure. Not bureaucracy. Structure. The kind that keeps teams from building elegant solutions to the wrong problems, or shipping models that collapse the moment real-world data looks different from the training set.

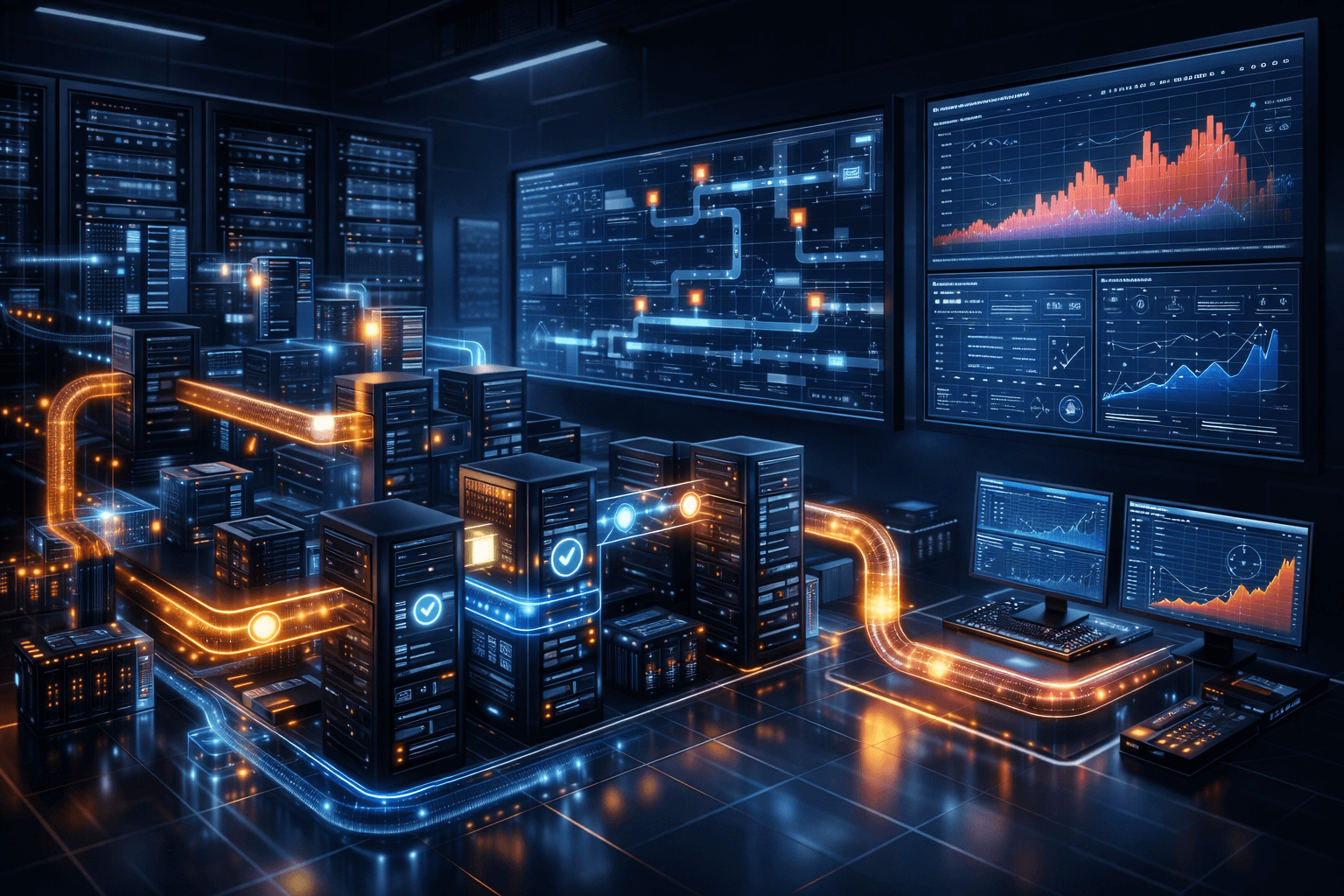

AI-powered solution development follows structured frameworks with stages including problem validation, data strategy, MVP prototyping, model development, deployment, monitoring, and scaling. Understanding what each stage demands, and what it produces, is the foundation of everything else.

Here’s how those stages break down in practice:

| Phase | Purpose | Key deliverable |

|---|---|---|

| Problem validation | Confirm the AI approach solves a real need | Problem statement, success metrics |

| Data strategy | Assess, collect, and structure training data | Data pipeline, labeling plan |

| MVP prototyping | Build the smallest working version | Functional prototype, early feedback |

| Model development | Train, evaluate, and refine models | Trained model, evaluation report |

| Deployment | Ship to production with CI/CD | Live system, monitoring setup |

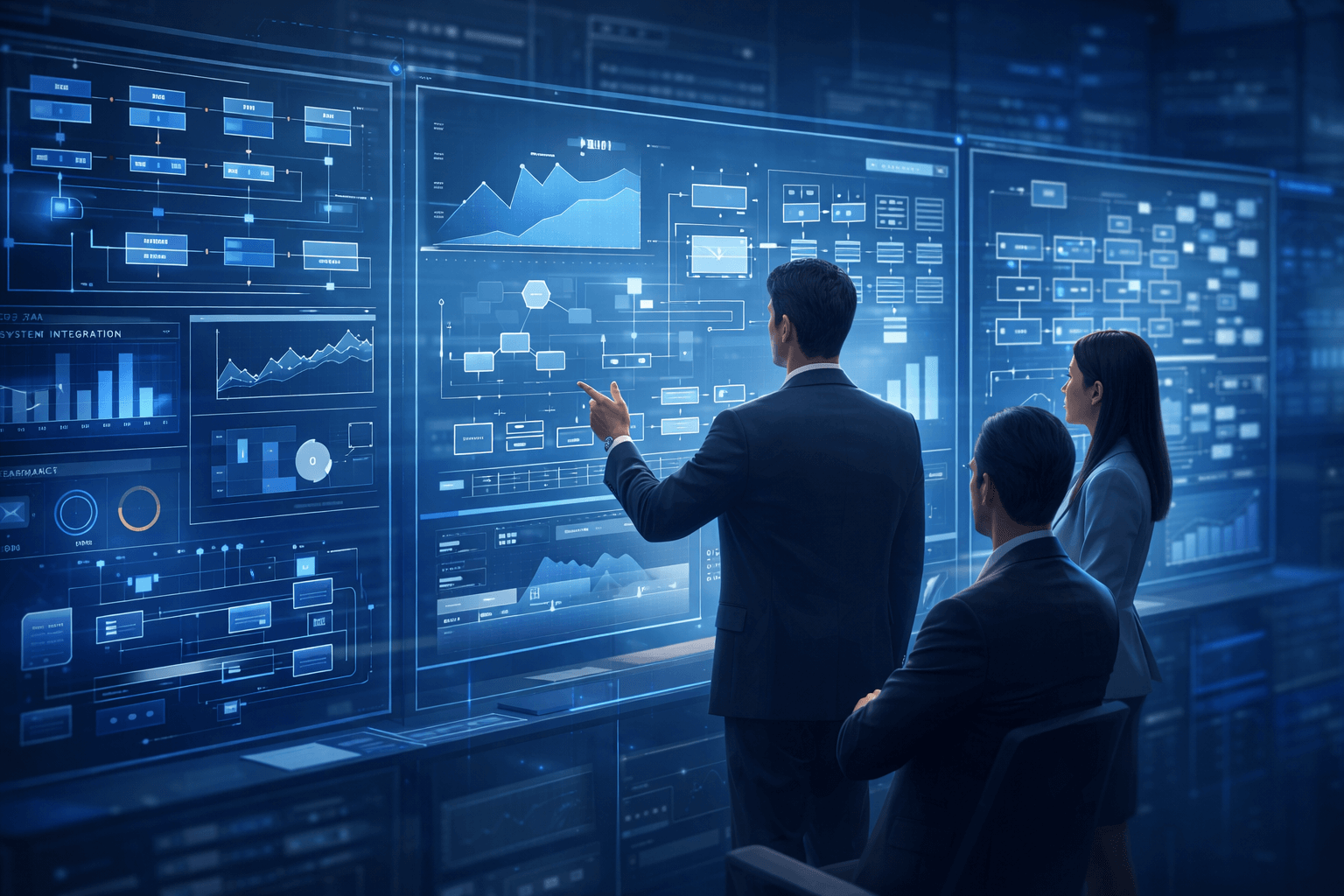

| Monitoring | Track performance and drift in real time | Dashboards, alerting rules |

| Scaling | Handle growth without degradation | Distributed architecture, load tests |

Skipping the validation or MVP stages is one of the most expensive mistakes a team can make. It leads to over-engineering: sophisticated RAG pipelines built for a use case that users don’t actually need, or computer vision models trained on datasets that don’t reflect production conditions. Explore the phases of AI solution development in depth to understand how each phase connects to the next.

Common challenges teams face at each stage include:

- Problem validation: Stakeholders who conflate AI hype with genuine business need

- Data strategy: Incomplete labeling, privacy constraints, and inconsistent data sources

- MVP prototyping: Scope creep and pressure to add features before the core works

- Model development: Overfitting, evaluation gaps, and poor baseline comparisons

- Deployment: Environment mismatches and missing rollback strategies

- Monitoring: Alert fatigue and insufficient logging of model decisions

- Scaling: Architectural debt that makes horizontal growth painful

“The teams that ship great AI products aren’t the ones with the most advanced models. They’re the ones who respected the process enough to validate before they built.”

Understanding the AI-powered software components that underpin each stage gives your team a shared vocabulary and a clearer map of the work ahead.

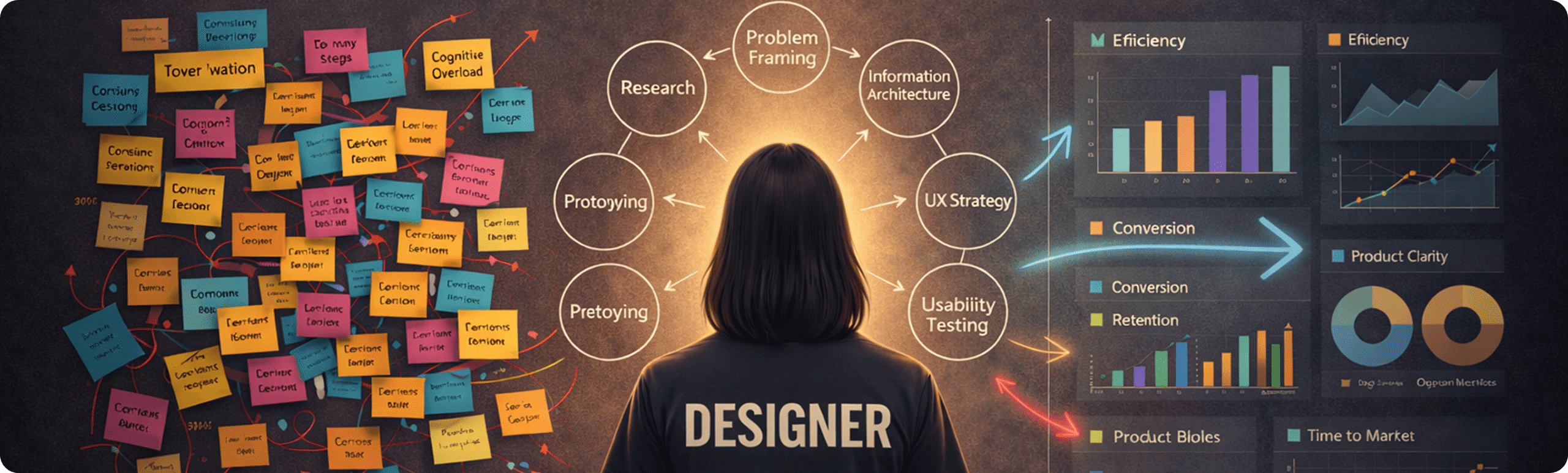

Setting your foundation: Preparation, team, and tools

With lifecycle stages in mind, effective execution depends on smart preparation. Before writing a single line of code, you need the right people, the right data, and the right tools aligned to your project’s actual requirements.

The essential roles for an AI-powered product team include a machine learning engineer, a senior Python engineer, a data engineer, a product owner, and a DevOps or MLOps specialist. Each role covers a distinct layer of the system. Gaps in any of them tend to surface at the worst possible moment, usually during deployment or the first scaling event.

Here’s a practical comparison of building in-house versus using staff augmentation:

| Capability | In-house team | Staff augmentation |

|---|---|---|

| Ramp-up time | 3-6 months | 1-2 weeks |

| Senior AI expertise | Hard to hire | Pre-vetted, available |

| Cost flexibility | Fixed overhead | Scales with project |

| Domain knowledge | Builds over time | Brought in immediately |

| Long-term retention | High risk | Managed externally |

AI-first product teams can deliver in 6 to 10 weeks compared to 4 to 8 months for traditional approaches, largely because pragmatic design choices and senior talent reduce decision latency at every stage.

Before development begins, run through this data readiness checklist:

- Is your training data labeled, or do you have a labeling plan?

- Have you identified personally identifiable information and mapped your privacy obligations?

- Do you have a synthetic data strategy for edge cases your real data doesn’t cover?

- Is your data pipeline reproducible and version-controlled?

- Have you defined ground truth for model evaluation?

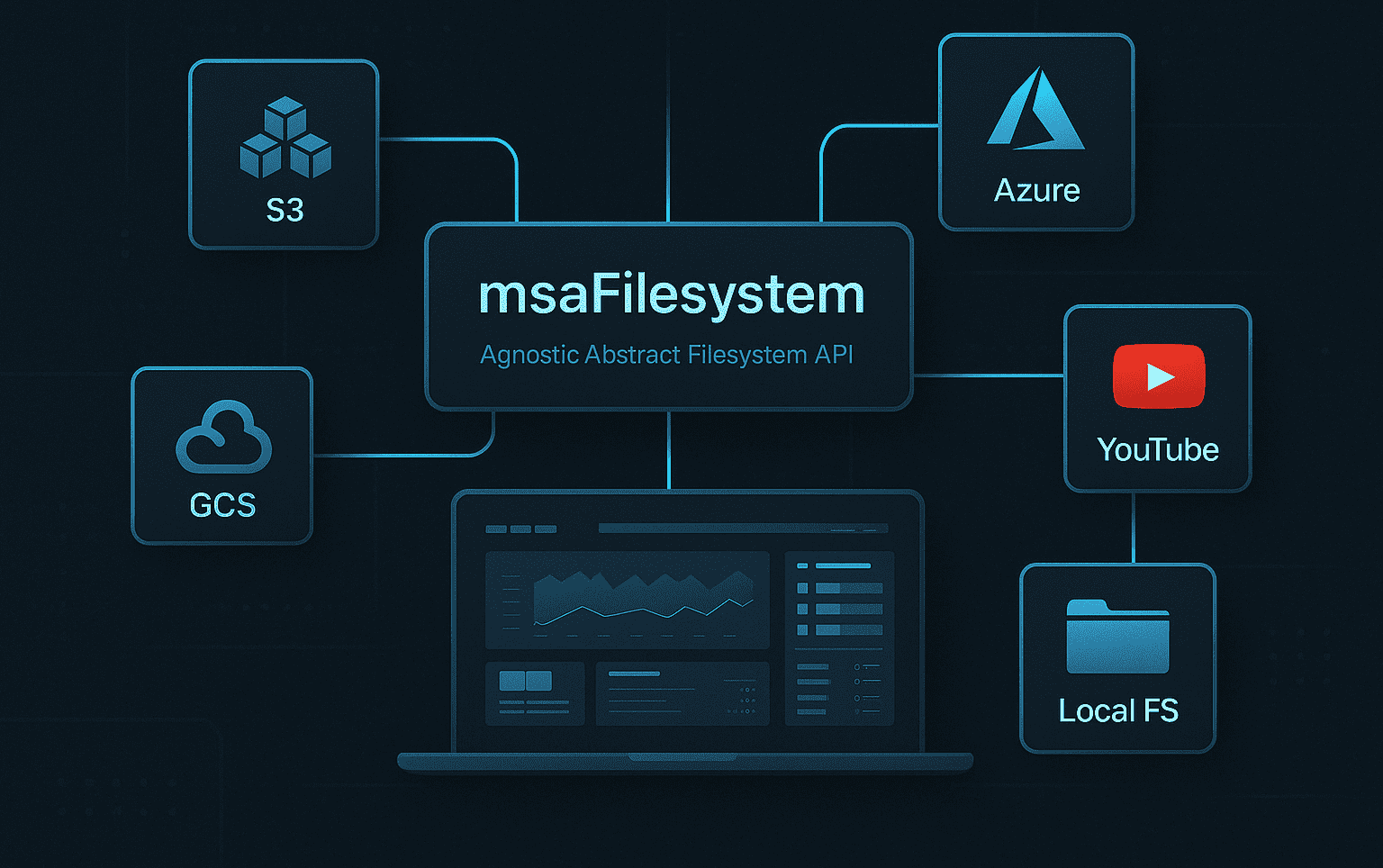

On the tooling side, Python for scalable AI remains the backbone of production AI systems in 2026. Pydantic handles config validation, Tenacity manages retry logic, Ray enables distributed computation, and a well-configured CI/CD pipeline keeps deployments predictable. Cloud platforms like AWS, GCP, or Azure provide the infrastructure layer.

Pro Tip: Lean teams move significantly faster when they hire Python engineers who already understand production AI patterns. A senior engineer who has shipped RAG pipelines or LLM integrations before doesn’t need to learn on your timeline.

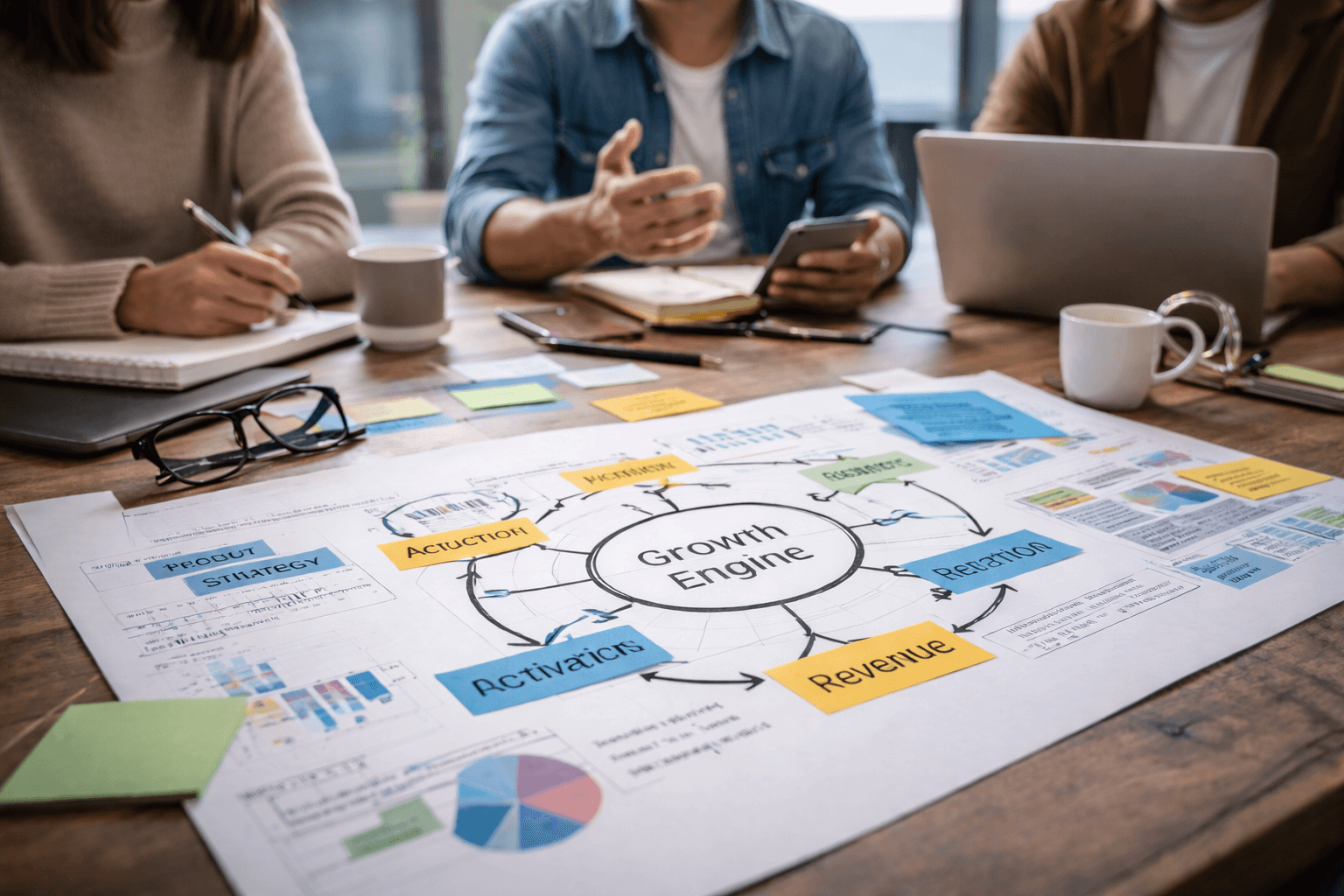

Building and deploying scalable AI-powered solutions

With your foundation set, let’s walk through building, scaling, and deploying an AI-powered solution designed for real growth.

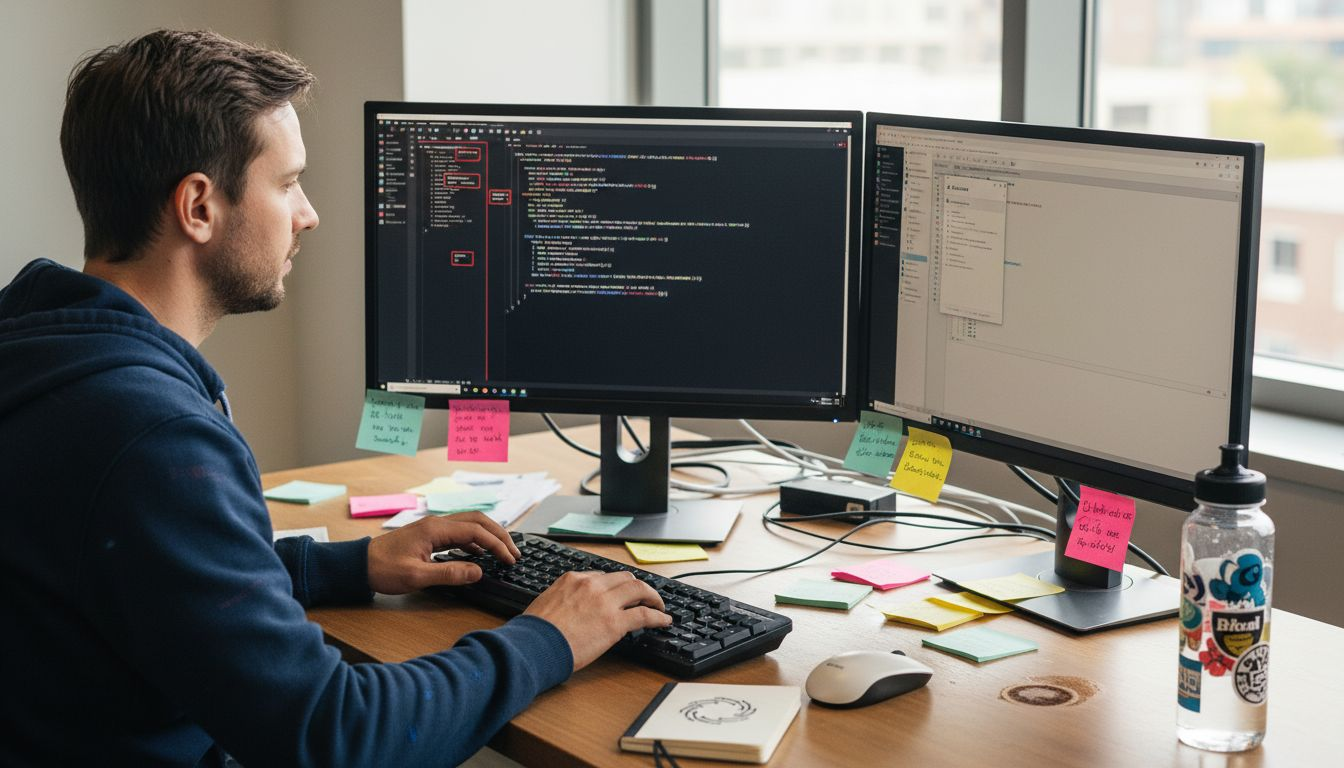

Python best practices for scalable AI emphasize Clean Architecture, separation of concerns, factory patterns, Pydantic for config, retries, and comprehensive logging. These aren’t theoretical preferences. They’re the difference between a codebase you can extend in six months and one you have to rewrite.

Here are the core execution steps:

- Build your MVP. Implement the narrowest version of your AI feature that delivers measurable value. Use it to validate assumptions before scaling anything.

- Select your architecture. Clean Architecture separates your domain logic, application layer, and infrastructure. This makes it possible to swap models, databases, or APIs without cascading rewrites.

- Train and evaluate your models. Whether you’re fine-tuning an LLM, building a computer vision pipeline, or developing predictive analytics models, define your evaluation metrics before training begins.

- Wrap your models in APIs. FastAPI is the standard for Python AI services in 2026. Keep your API contracts clean and version them from day one.

- Deploy with CI/CD. Automate testing, staging, and deployment. Manual deployments are a liability in production AI systems.

- Implement logging and monitoring. Log model inputs, outputs, and confidence scores. You cannot debug what you cannot see.

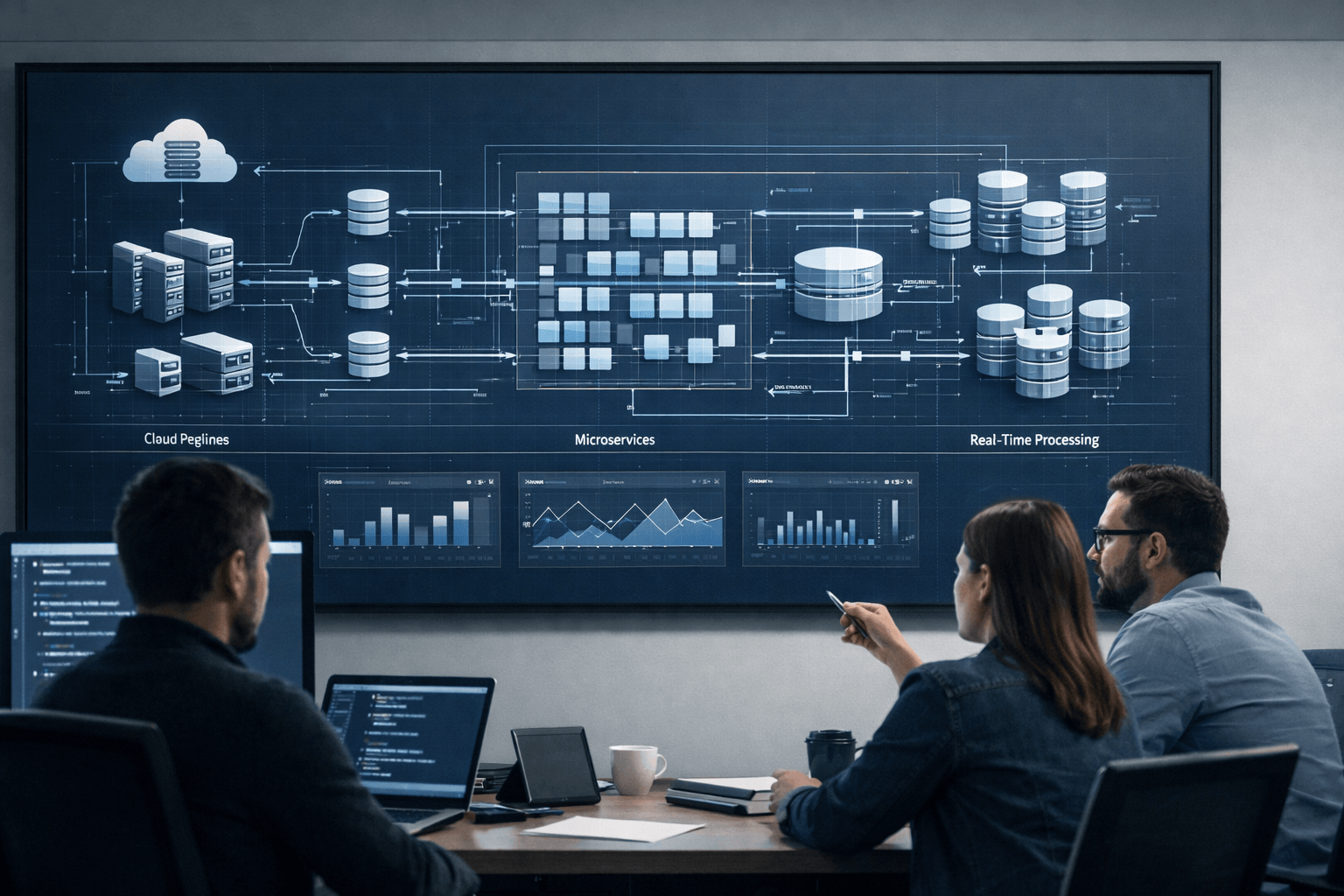

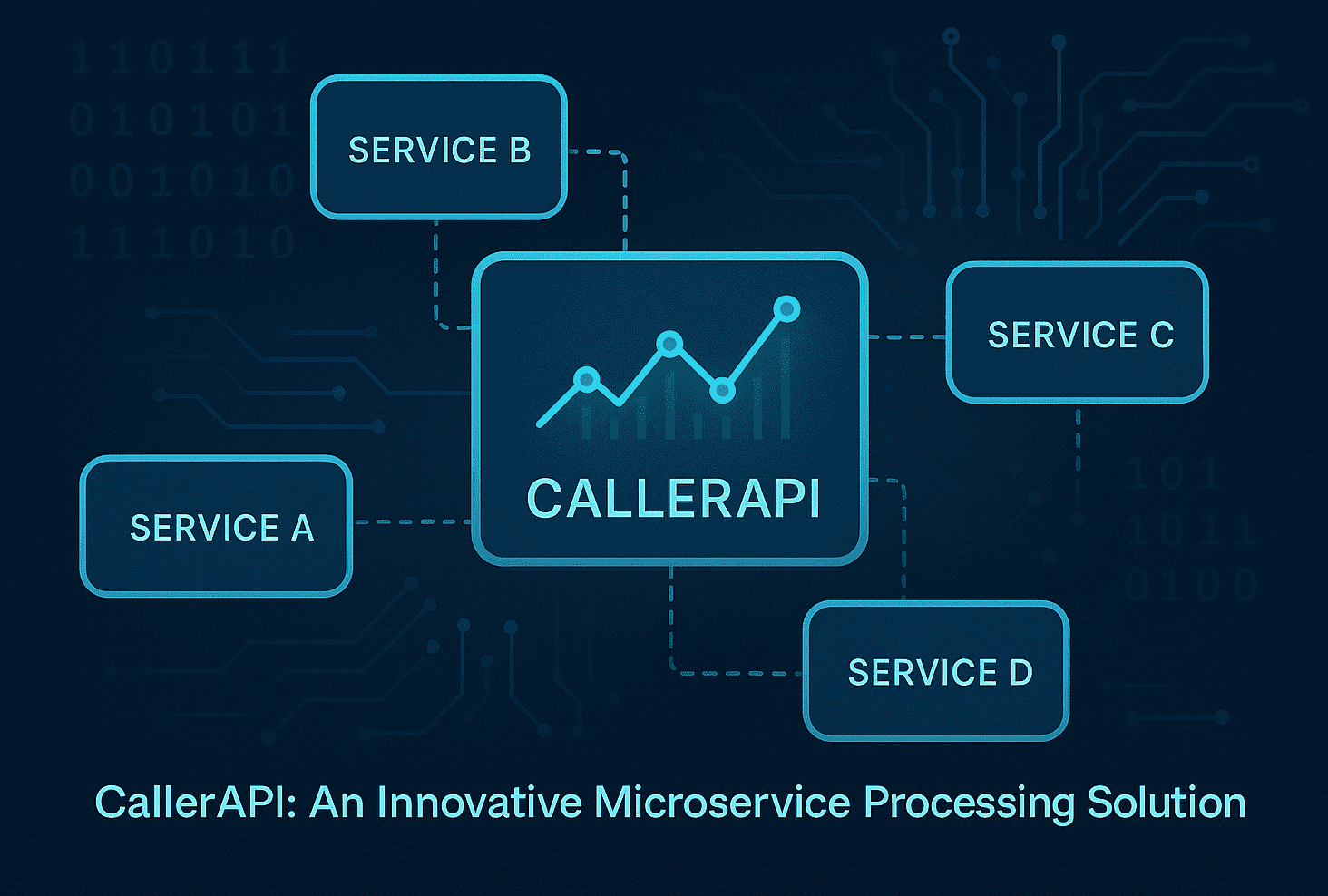

Scalability via Ray for distributed computing, microservices, async processing, caching, and horizontal scaling gives Python AI systems the headroom to grow without architectural rewrites. Pair this with microservices in AI solutions to isolate components and deploy them independently.

Pro Tip: Avoid over-engineering. The teams that optimize for maintainability and pragmatism consistently outperform those chasing architectural elegance. If a simpler pattern solves the problem, use it. Explore scalable Python patterns that production teams actually rely on.

AI-first teams reduce time to market by 2 to 4 times compared to traditional development models, and the primary driver is senior engineering talent making faster, better-informed decisions at every step.

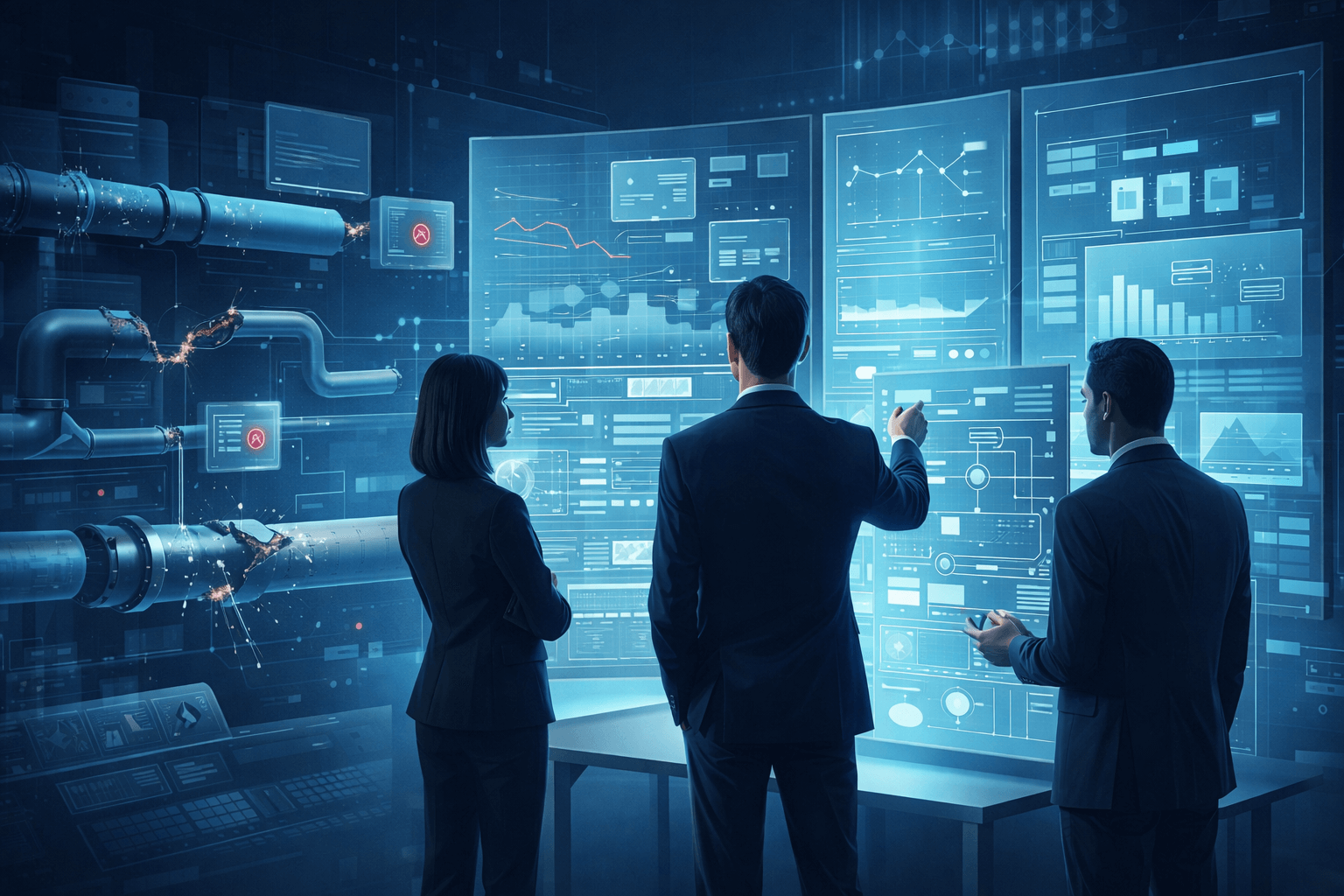

Testing, edge cases, and continuous improvement

Even with smart architectures and strong teams, AI solutions can fail in surprising ways. The failure modes are different from traditional software. They’re often subtle, statistical, and difficult to reproduce.

Edge cases in AI systems include data distribution shifts, prompt injection attacks, hallucinations, latency spikes, and model failures. Each requires a distinct mitigation strategy. Red-teaming surfaces adversarial inputs your team wouldn’t think to test. Synthetic data fills gaps in your real-world dataset. Fallback chains ensure that when your primary model fails, the system degrades gracefully rather than crashing.

Post-launch, monitor these signals continuously:

- Latency: Response time degradation often signals infrastructure issues or model bloat

- Accuracy drift: Model performance erodes when production data diverges from training data

- User behavior: Unexpected usage patterns reveal gaps between what you built and what users need

- Cost per inference: Unchecked, this scales faster than revenue in poorly optimized systems

- Error rates: Distinguish between model errors and infrastructure errors for faster diagnosis

“Log the AI’s thought process, not just its outputs. When something goes wrong in production, the decision trail is the only thing that tells you why.”

Continuous improvement isn’t optional. Use benchmarks like SWE-bench to track model capability over time, and build feedback loops that surface real user outcomes back into your training and evaluation process. Review common AI mistakes that teams make post-launch to avoid the patterns that quietly erode product quality.

The teams that treat testing and monitoring as ongoing disciplines, not launch checklists, are the ones whose AI products improve with age rather than accumulate technical debt.

From the trenches: Our hard-won lessons on AI-powered development

Here’s something most guides won’t tell you: the biggest risk in AI product development isn’t choosing the wrong model. It’s choosing the wrong team structure and the wrong delivery rhythm.

We’ve seen traditional agencies slow launches not because they lacked technical knowledge, but because rigid processes and communication overhead turned two-week decisions into two-month ones. Speed in AI development comes from trust, autonomy, and tight feedback loops, not from elaborate planning frameworks.

The contrarian truth is that minimal, scalable architecture almost always outperforms elaborate theoretical designs. Teams that learn from counterintuitive AI lessons tend to ship faster and iterate more effectively. Don’t chase the latest framework if it doesn’t solve your core problem. A well-implemented RAG pipeline beats a poorly implemented agentic system every time.

What actually works? Effective logging, short delivery cycles, and engineers who understand what separates a senior Python developer from someone who has simply called a few APIs. The bottleneck is always talent. Everything else is solvable.

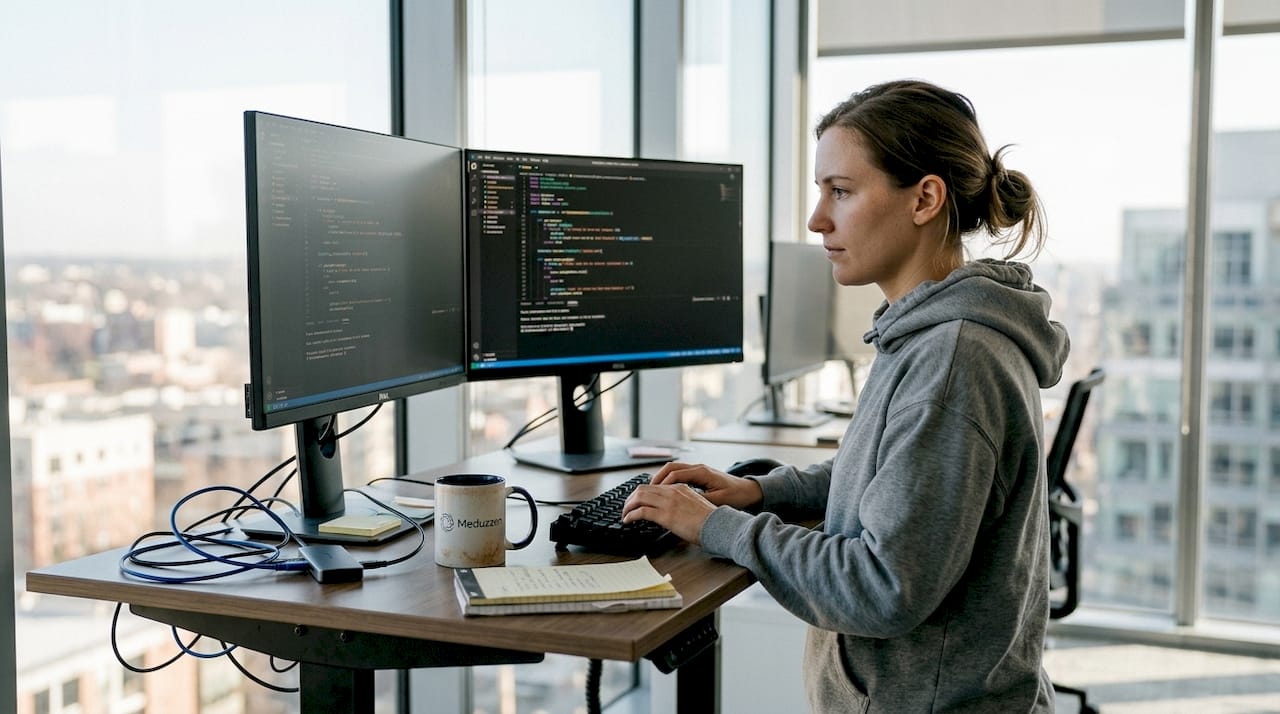

Accelerate your AI-powered journey with Meduzzen

Ready to put this guide into practice? Here’s how our team can help you launch and grow faster.

At Meduzzen, we’ve spent over 10 years helping startups and CTOs build production-grade AI systems without the overhead of traditional agencies. Whether you need to hire a Python AI developer for a specific sprint, scale with a dedicated Python development team, or access our full range of AI development services, we bring pre-vetted senior engineers who integrate into your team from day one.

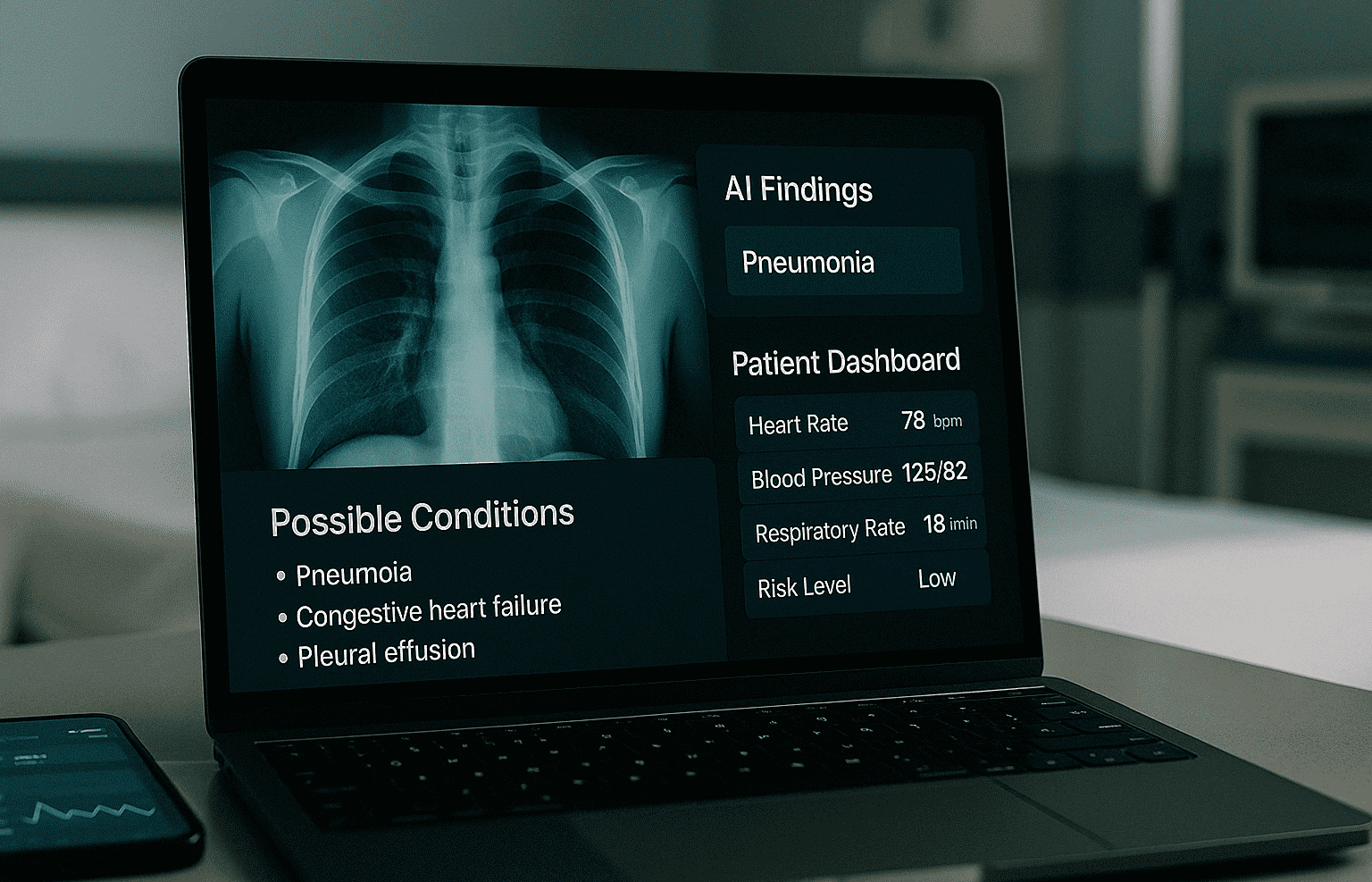

Our Python engineering experts have shipped RAG pipelines, LLM integrations, computer vision systems, and predictive analytics platforms across FinTech, Healthcare, and Logistics. If you’re looking to hire Python developers who understand production AI, not just API wrappers, we’re ready to move as fast as you need. Explore our staff augmentation model and see how quickly your team can scale.

Frequently asked questions

What are the most critical stages of AI-powered solution development?

The most critical stages are problem validation, data strategy, MVP prototyping, model development, deployment, monitoring, and scaling your AI system. Skipping validation or prototyping almost always leads to costly rework.

Which Python best practices matter most for scalable AI?

Use Clean Architecture patterns, separation of concerns, factory patterns, strong config management with Pydantic, retry logic with Tenacity, and detailed logging for scalable Python AI development.

How do AI teams handle edge cases and failures?

Teams mitigate edge cases in production using red-teaming, synthetic data generation, fallback chains, and continuous monitoring for distribution shifts and latency spikes.

What tools are essential for AI-powered solution development?

Essential tools include Python, Ray for distributed AI computing, Pydantic, Tenacity, CI/CD platforms, and cloud services for scaling and deployment.

How fast can AI-first teams deploy working solutions?

AI-first teams deliver solutions in 6 to 10 weeks compared to 4 to 8 months for traditional agencies, primarily because senior engineers make faster architectural decisions with fewer hand-off delays.

Recommended

- How to build AI solutions for scalable SaaS in 2026

- Hire Python developers: cost, skills, and hiring models 2026

- Master AI development process: 85% projects fail in 2026

- AI-powered software: key components and startup insights

- AI trends in software development 2026: 50% bug detection

- What is software development? Guide, methodologies, careers